Understand_Tables.ipynb:

Extract Structured Information from Tables in PDF Documents using IBM Watson Discovery and Text Extensions for Pandas

Introduction¶

Many organizations have valuable information hidden in tables inside human-readable documents like PDF files and web pages. Table identification and extraction technology can turn this human-readable information into a format that data science tools can import and use. Text Extensions for Pandas and Watson Discovery make this process much easier.

In this notebook, we'll follow the journey of Allison, an analyst at an investment bank. Allison's employer has assigned her to cover several different companies, one of which is IBM. As part of her analysis, Allison wants to track IBM's revenue over time, broken down by geographical region. That detailed revenue information is all there in IBM's filings with the U.S. Securities and Exchange Commission (SEC). For example, here's IBM's 2019 annual report:

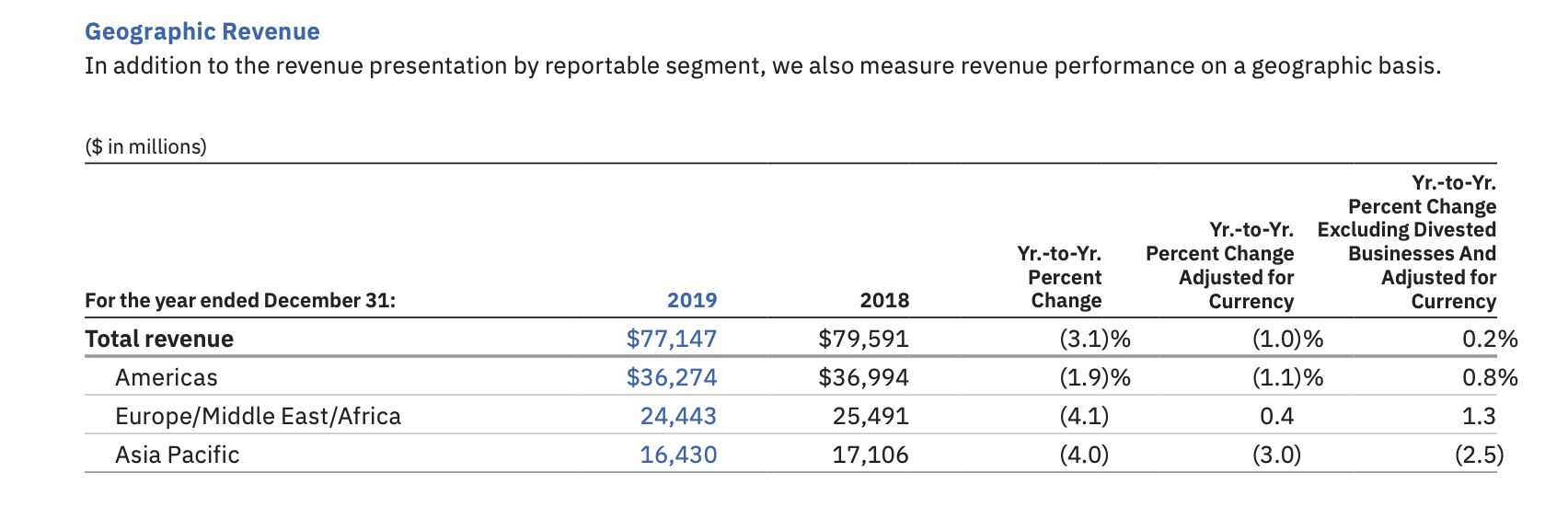

Did you see the table of revenue by geography? It's here, on page 39:

Here's what that table looks like close up:

But this particular table only gives two years' revenue figures. Allison needs to have enough data to draw a meaningful chart of revenue over time. 10 years of annual revenue figures would be a good starting point.

Allison has a collection of IBM annual reports going back to 2009. In total, these documents contain about 1500 pages of financial information. Hidden inside those 1500 pages are the detailed revenue figures that Allison wants. She needs to find those figures, extract them from the documents, and import them into her data science tools.

Fortunately, Allison has Watson Discovery, IBM's suite of tools for managing and extracting value from collections of human-readable documents.

The cells that follow will show how Allison uses Text Extensions for Pandas and Watson Discovery to import the detailed revenue information from her PDF documents into a Pandas DataFrame...

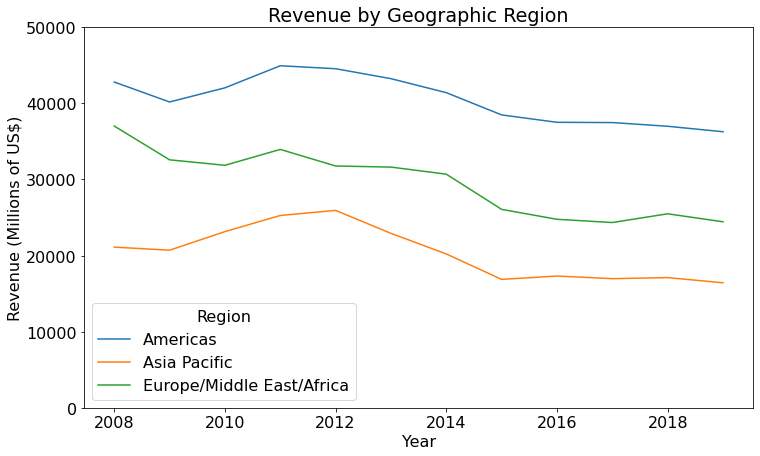

...that she then uses to generate a chart of revenue over time:

But first, let's set your environment up so that you can run Allison's code yourself.

(If you're just reading through the precomputed outputs of this notebook, you can skip ahead to the section labeled "Extract Tables with Watson Discovery").

Environment Setup¶

This notebook requires a Python 3.7 or later environment with the following packages:

- The dependencies listed in the "requirements.txt" file for Text Extensions for Pandas

matplotlibtext_extensions_for_pandas

You can satisfy the dependency on text_extensions_for_pandas in either of two ways:

- Run

pip install text_extensions_for_pandasbefore running this notebook. This command adds the library to your Python environment. - Run this notebook out of your local copy of the Text Extensions for Pandas project's source tree. In this case, the notebook will use the version of Text Extensions for Pandas in your local source tree if the package is not installed in your Python environment.

# Core Python libraries

import json

import os

import sys

from typing import *

import pandas as pd

from matplotlib import pyplot as plt

# And of course we need the text_extensions_for_pandas library itself.

try:

import text_extensions_for_pandas as tp

except ModuleNotFoundError as e:

# If we're running from within the project source tree and the parent Python

# environment doesn't have the text_extensions_for_pandas package, use the

# version in the local source tree.

if not os.getcwd().endswith("notebooks"):

raise e

if ".." not in sys.path:

sys.path.insert(0, "..")

import text_extensions_for_pandas as tp

Extract Tables with Watson Discovery¶

Allison connects to the Watson Discovery component of her firm's IBM Cloud Pak for Data installation on their OpenShift cluster.

She creates a new Watson Discovery project and uploads her stack of IBM annual reports to her project. Then she uses the Watson Discovery's Table Understanding enrichment to identify tables in the PDF documents and to extract detailed information about the cells and headers that make up each table.

To keep this notebook short, we've captured the output of Table Understanding on Allison's documents and checked it into Github here. We will use these JSON files as input for the rest of this scenario. If you'd like to learn more about importing and managing document collections in Watson Discovery, take a look at the Getting Started Guide for Watson Discovery.

Allison reads the JSON output from Watson Discovery's table enrichment into a Python variable, then prints out what the 2019 "Geographic Revenue" table looks like in this raw output.

# Location of the output from Watson Discovery's Table Understanding enrichment

# (relative to this notebook file)

FILES_DIR = "../resources/tables/financial_table_demo/IBM_10-K"

with open(f"{FILES_DIR}/2019.json", "r") as f:

ibm_2019_json = json.load(f)

# Find the table in the "Geographic Revenue" section.

table_index = [i for i in range(len(ibm_2019_json["tables"]))

if ibm_2019_json["tables"][i]["section_title"]["text"] == "Geographic Revenue"][0]

print(json.dumps(ibm_2019_json["tables"][table_index], indent=2))

{

"location": {

"begin": 664612,

"end": 673296

},

"text": "($ in millions)\n For the year ended December 31: 2019 2018\nYr.-to-Yr. Percent Change\nYr.-to-Yr. Percent Change Adjusted for Currency\nYr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency\nTotal revenue $77,147 $79,591 (3.1 )% (1.0)% 0.2%\nAmericas $36,274 $36,994 (1.9)% (1.1)% 0.8%\nEurope/Middle East/Africa 24,443 25,491 (4.1) 0.4 1.3\nAsia Pacific 16,430 17,106 (4.0) (3.0) (2.5)\n",

"section_title": {

"location": {

"begin": 663834,

"end": 663852

},

"text": "Geographic Revenue"

},

"title": {},

"table_headers": [

{

"cell_id": "tableHeader-664612-664628",

"location": {

"begin": 664612,

"end": 664628

},

"text": "($ in millions)",

"row_index_begin": 0,

"row_index_end": 0,

"column_index_begin": 0,

"column_index_end": 0

}

],

"row_headers": [

{

"cell_id": "rowHeader-667212-667226",

"location": {

"begin": 667212,

"end": 667226

},

"text": "Total revenue",

"text_normalized": "Total revenue",

"row_index_begin": 2,

"row_index_end": 2,

"column_index_begin": 0,

"column_index_end": 0

},

{

"cell_id": "rowHeader-668801-668810",

"location": {

"begin": 668801,

"end": 668810

},

"text": "Americas",

"text_normalized": "Americas",

"row_index_begin": 3,

"row_index_end": 3,

"column_index_begin": 0,

"column_index_end": 0

},

{

"cell_id": "rowHeader-670386-670412",

"location": {

"begin": 670386,

"end": 670412

},

"text": "Europe/Middle East/Africa",

"text_normalized": "Europe/Middle East/Africa",

"row_index_begin": 4,

"row_index_end": 4,

"column_index_begin": 0,

"column_index_end": 0

},

{

"cell_id": "rowHeader-671979-671992",

"location": {

"begin": 671979,

"end": 671992

},

"text": "Asia Pacific",

"text_normalized": "Asia Pacific",

"row_index_begin": 5,

"row_index_end": 5,

"column_index_begin": 0,

"column_index_end": 0

}

],

"column_headers": [

{

"cell_id": "colHeader-664705-664706",

"location": {

"begin": 664705,

"end": 664706

},

"text": "",

"text_normalized": "",

"row_index_begin": 0,

"row_index_end": 0,

"column_index_begin": 1,

"column_index_end": 1

},

{

"cell_id": "colHeader-664770-664771",

"location": {

"begin": 664770,

"end": 664771

},

"text": "",

"text_normalized": "",

"row_index_begin": 0,

"row_index_end": 0,

"column_index_begin": 2,

"column_index_end": 2

},

{

"cell_id": "colHeader-664835-664836",

"location": {

"begin": 664835,

"end": 664836

},

"text": "",

"text_normalized": "",

"row_index_begin": 0,

"row_index_end": 0,

"column_index_begin": 3,

"column_index_end": 3

},

{

"cell_id": "colHeader-664900-664901",

"location": {

"begin": 664900,

"end": 664901

},

"text": "",

"text_normalized": "",

"row_index_begin": 0,

"row_index_end": 0,

"column_index_begin": 4,

"column_index_end": 4

},

{

"cell_id": "colHeader-664965-664966",

"location": {

"begin": 664965,

"end": 664966

},

"text": "",

"text_normalized": "",

"row_index_begin": 0,

"row_index_end": 0,

"column_index_begin": 5,

"column_index_end": 5

},

{

"cell_id": "colHeader-665217-665249",

"location": {

"begin": 665217,

"end": 665249

},

"text": "For the year ended December 31:",

"text_normalized": "For the year ended December 31:",

"row_index_begin": 1,

"row_index_end": 1,

"column_index_begin": 0,

"column_index_end": 0

},

{

"cell_id": "colHeader-665513-665518",

"location": {

"begin": 665513,

"end": 665518

},

"text": "2019",

"text_normalized": "2019",

"row_index_begin": 1,

"row_index_end": 1,

"column_index_begin": 1,

"column_index_end": 1

},

{

"cell_id": "colHeader-665788-665793",

"location": {

"begin": 665788,

"end": 665793

},

"text": "2018",

"text_normalized": "2018",

"row_index_begin": 1,

"row_index_end": 1,

"column_index_begin": 2,

"column_index_end": 2

},

{

"cell_id": "colHeader-666061-666087",

"location": {

"begin": 666061,

"end": 666087

},

"text": "Yr.-to-Yr. Percent Change",

"text_normalized": "Yr.-to-Yr. Percent Change",

"row_index_begin": 1,

"row_index_end": 1,

"column_index_begin": 3,

"column_index_end": 3

},

{

"cell_id": "colHeader-666356-666404",

"location": {

"begin": 666356,

"end": 666404

},

"text": "Yr.-to-Yr. Percent Change Adjusted for Currency",

"text_normalized": "Yr.-to-Yr. Percent Change Adjusted for Currency",

"row_index_begin": 1,

"row_index_end": 1,

"column_index_begin": 4,

"column_index_end": 4

},

{

"cell_id": "colHeader-666675-666948",

"location": {

"begin": 666675,

"end": 666948

},

"text": "Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency",

"text_normalized": "Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency",

"row_index_begin": 1,

"row_index_end": 1,

"column_index_begin": 5,

"column_index_end": 5

}

],

"body_cells": [

{

"cell_id": "bodyCell-667480-667488",

"location": {

"begin": 667480,

"end": 667488

},

"text": "$77,147",

"row_index_begin": 2,

"row_index_end": 2,

"column_index_begin": 1,

"column_index_end": 1,

"row_header_ids": [

"rowHeader-667212-667226"

],

"row_header_texts": [

"Total revenue"

],

"row_header_texts_normalized": [

"Total revenue"

],

"column_header_ids": [

"colHeader-664705-664706",

"colHeader-665513-665518"

],

"column_header_texts": [

"",

"2019"

],

"column_header_texts_normalized": [

"",

"2019"

],

"attributes": [

{

"type": "Currency",

"text": "$77,147",

"location": {

"begin": 667480,

"end": 667487

}

}

]

},

{

"cell_id": "bodyCell-667744-667752",

"location": {

"begin": 667744,

"end": 667752

},

"text": "$79,591",

"row_index_begin": 2,

"row_index_end": 2,

"column_index_begin": 2,

"column_index_end": 2,

"row_header_ids": [

"rowHeader-667212-667226"

],

"row_header_texts": [

"Total revenue"

],

"row_header_texts_normalized": [

"Total revenue"

],

"column_header_ids": [

"colHeader-664770-664771",

"colHeader-665788-665793"

],

"column_header_texts": [

"",

"2018"

],

"column_header_texts_normalized": [

"",

"2018"

],

"attributes": [

{

"type": "Currency",

"text": "$79,591",

"location": {

"begin": 667744,

"end": 667751

}

}

]

},

{

"cell_id": "bodyCell-668006-668014",

"location": {

"begin": 668006,

"end": 668014

},

"text": "(3.1 )%",

"row_index_begin": 2,

"row_index_end": 2,

"column_index_begin": 3,

"column_index_end": 3,

"row_header_ids": [

"rowHeader-667212-667226"

],

"row_header_texts": [

"Total revenue"

],

"row_header_texts_normalized": [

"Total revenue"

],

"column_header_ids": [

"colHeader-664835-664836",

"colHeader-666061-666087"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change"

],

"attributes": [

{

"type": "Number",

"text": "3.1",

"location": {

"begin": 668007,

"end": 668010

}

}

]

},

{

"cell_id": "bodyCell-668266-668273",

"location": {

"begin": 668266,

"end": 668273

},

"text": "(1.0)%",

"row_index_begin": 2,

"row_index_end": 2,

"column_index_begin": 4,

"column_index_end": 4,

"row_header_ids": [

"rowHeader-667212-667226"

],

"row_header_texts": [

"Total revenue"

],

"row_header_texts_normalized": [

"Total revenue"

],

"column_header_ids": [

"colHeader-664900-664901",

"colHeader-666356-666404"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change Adjusted for Currency"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change Adjusted for Currency"

],

"attributes": [

{

"type": "Number",

"text": "1.0",

"location": {

"begin": 668267,

"end": 668270

}

}

]

},

{

"cell_id": "bodyCell-668530-668535",

"location": {

"begin": 668530,

"end": 668535

},

"text": "0.2%",

"row_index_begin": 2,

"row_index_end": 2,

"column_index_begin": 5,

"column_index_end": 5,

"row_header_ids": [

"rowHeader-667212-667226"

],

"row_header_texts": [

"Total revenue"

],

"row_header_texts_normalized": [

"Total revenue"

],

"column_header_ids": [

"colHeader-664965-664966",

"colHeader-666675-666948"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency"

],

"attributes": [

{

"type": "Percentage",

"text": "0.2%",

"location": {

"begin": 668530,

"end": 668534

}

}

]

},

{

"cell_id": "bodyCell-669065-669073",

"location": {

"begin": 669065,

"end": 669073

},

"text": "$36,274",

"row_index_begin": 3,

"row_index_end": 3,

"column_index_begin": 1,

"column_index_end": 1,

"row_header_ids": [

"rowHeader-668801-668810"

],

"row_header_texts": [

"Americas"

],

"row_header_texts_normalized": [

"Americas"

],

"column_header_ids": [

"colHeader-664705-664706",

"colHeader-665513-665518"

],

"column_header_texts": [

"",

"2019"

],

"column_header_texts_normalized": [

"",

"2019"

],

"attributes": [

{

"type": "Currency",

"text": "$36,274",

"location": {

"begin": 669065,

"end": 669072

}

}

]

},

{

"cell_id": "bodyCell-669330-669338",

"location": {

"begin": 669330,

"end": 669338

},

"text": "$36,994",

"row_index_begin": 3,

"row_index_end": 3,

"column_index_begin": 2,

"column_index_end": 2,

"row_header_ids": [

"rowHeader-668801-668810"

],

"row_header_texts": [

"Americas"

],

"row_header_texts_normalized": [

"Americas"

],

"column_header_ids": [

"colHeader-664770-664771",

"colHeader-665788-665793"

],

"column_header_texts": [

"",

"2018"

],

"column_header_texts_normalized": [

"",

"2018"

],

"attributes": [

{

"type": "Currency",

"text": "$36,994",

"location": {

"begin": 669330,

"end": 669337

}

}

]

},

{

"cell_id": "bodyCell-669591-669598",

"location": {

"begin": 669591,

"end": 669598

},

"text": "(1.9)%",

"row_index_begin": 3,

"row_index_end": 3,

"column_index_begin": 3,

"column_index_end": 3,

"row_header_ids": [

"rowHeader-668801-668810"

],

"row_header_texts": [

"Americas"

],

"row_header_texts_normalized": [

"Americas"

],

"column_header_ids": [

"colHeader-664835-664836",

"colHeader-666061-666087"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change"

],

"attributes": [

{

"type": "Number",

"text": "1.9",

"location": {

"begin": 669592,

"end": 669595

}

}

]

},

{

"cell_id": "bodyCell-669853-669860",

"location": {

"begin": 669853,

"end": 669860

},

"text": "(1.1)%",

"row_index_begin": 3,

"row_index_end": 3,

"column_index_begin": 4,

"column_index_end": 4,

"row_header_ids": [

"rowHeader-668801-668810"

],

"row_header_texts": [

"Americas"

],

"row_header_texts_normalized": [

"Americas"

],

"column_header_ids": [

"colHeader-664900-664901",

"colHeader-666356-666404"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change Adjusted for Currency"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change Adjusted for Currency"

],

"attributes": [

{

"type": "Number",

"text": "1.1",

"location": {

"begin": 669854,

"end": 669857

}

}

]

},

{

"cell_id": "bodyCell-670116-670121",

"location": {

"begin": 670116,

"end": 670121

},

"text": "0.8%",

"row_index_begin": 3,

"row_index_end": 3,

"column_index_begin": 5,

"column_index_end": 5,

"row_header_ids": [

"rowHeader-668801-668810"

],

"row_header_texts": [

"Americas"

],

"row_header_texts_normalized": [

"Americas"

],

"column_header_ids": [

"colHeader-664965-664966",

"colHeader-666675-666948"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency"

],

"attributes": [

{

"type": "Percentage",

"text": "0.8%",

"location": {

"begin": 670116,

"end": 670120

}

}

]

},

{

"cell_id": "bodyCell-670663-670670",

"location": {

"begin": 670663,

"end": 670670

},

"text": "24,443",

"row_index_begin": 4,

"row_index_end": 4,

"column_index_begin": 1,

"column_index_end": 1,

"row_header_ids": [

"rowHeader-670386-670412"

],

"row_header_texts": [

"Europe/Middle East/Africa"

],

"row_header_texts_normalized": [

"Europe/Middle East/Africa"

],

"column_header_ids": [

"colHeader-664705-664706",

"colHeader-665513-665518"

],

"column_header_texts": [

"",

"2019"

],

"column_header_texts_normalized": [

"",

"2019"

],

"attributes": [

{

"type": "Number",

"text": "24,443",

"location": {

"begin": 670663,

"end": 670669

}

}

]

},

{

"cell_id": "bodyCell-670924-670931",

"location": {

"begin": 670924,

"end": 670931

},

"text": "25,491",

"row_index_begin": 4,

"row_index_end": 4,

"column_index_begin": 2,

"column_index_end": 2,

"row_header_ids": [

"rowHeader-670386-670412"

],

"row_header_texts": [

"Europe/Middle East/Africa"

],

"row_header_texts_normalized": [

"Europe/Middle East/Africa"

],

"column_header_ids": [

"colHeader-664770-664771",

"colHeader-665788-665793"

],

"column_header_texts": [

"",

"2018"

],

"column_header_texts_normalized": [

"",

"2018"

],

"attributes": [

{

"type": "Number",

"text": "25,491",

"location": {

"begin": 670924,

"end": 670930

}

}

]

},

{

"cell_id": "bodyCell-671187-671193",

"location": {

"begin": 671187,

"end": 671193

},

"text": "(4.1)",

"row_index_begin": 4,

"row_index_end": 4,

"column_index_begin": 3,

"column_index_end": 3,

"row_header_ids": [

"rowHeader-670386-670412"

],

"row_header_texts": [

"Europe/Middle East/Africa"

],

"row_header_texts_normalized": [

"Europe/Middle East/Africa"

],

"column_header_ids": [

"colHeader-664835-664836",

"colHeader-666061-666087"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change"

],

"attributes": [

{

"type": "Number",

"text": "4.1",

"location": {

"begin": 671188,

"end": 671191

}

}

]

},

{

"cell_id": "bodyCell-671449-671453",

"location": {

"begin": 671449,

"end": 671453

},

"text": "0.4",

"row_index_begin": 4,

"row_index_end": 4,

"column_index_begin": 4,

"column_index_end": 4,

"row_header_ids": [

"rowHeader-670386-670412"

],

"row_header_texts": [

"Europe/Middle East/Africa"

],

"row_header_texts_normalized": [

"Europe/Middle East/Africa"

],

"column_header_ids": [

"colHeader-664900-664901",

"colHeader-666356-666404"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change Adjusted for Currency"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change Adjusted for Currency"

],

"attributes": [

{

"type": "Number",

"text": "0.4",

"location": {

"begin": 671449,

"end": 671452

}

}

]

},

{

"cell_id": "bodyCell-671711-671715",

"location": {

"begin": 671711,

"end": 671715

},

"text": "1.3",

"row_index_begin": 4,

"row_index_end": 4,

"column_index_begin": 5,

"column_index_end": 5,

"row_header_ids": [

"rowHeader-670386-670412"

],

"row_header_texts": [

"Europe/Middle East/Africa"

],

"row_header_texts_normalized": [

"Europe/Middle East/Africa"

],

"column_header_ids": [

"colHeader-664965-664966",

"colHeader-666675-666948"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency"

],

"attributes": [

{

"type": "Number",

"text": "1.3",

"location": {

"begin": 671711,

"end": 671714

}

}

]

},

{

"cell_id": "bodyCell-672244-672251",

"location": {

"begin": 672244,

"end": 672251

},

"text": "16,430",

"row_index_begin": 5,

"row_index_end": 5,

"column_index_begin": 1,

"column_index_end": 1,

"row_header_ids": [

"rowHeader-671979-671992"

],

"row_header_texts": [

"Asia Pacific"

],

"row_header_texts_normalized": [

"Asia Pacific"

],

"column_header_ids": [

"colHeader-664705-664706",

"colHeader-665513-665518"

],

"column_header_texts": [

"",

"2019"

],

"column_header_texts_normalized": [

"",

"2019"

],

"attributes": [

{

"type": "Number",

"text": "16,430",

"location": {

"begin": 672244,

"end": 672250

}

}

]

},

{

"cell_id": "bodyCell-672505-672512",

"location": {

"begin": 672505,

"end": 672512

},

"text": "17,106",

"row_index_begin": 5,

"row_index_end": 5,

"column_index_begin": 2,

"column_index_end": 2,

"row_header_ids": [

"rowHeader-671979-671992"

],

"row_header_texts": [

"Asia Pacific"

],

"row_header_texts_normalized": [

"Asia Pacific"

],

"column_header_ids": [

"colHeader-664770-664771",

"colHeader-665788-665793"

],

"column_header_texts": [

"",

"2018"

],

"column_header_texts_normalized": [

"",

"2018"

],

"attributes": [

{

"type": "Number",

"text": "17,106",

"location": {

"begin": 672505,

"end": 672511

}

}

]

},

{

"cell_id": "bodyCell-672767-672773",

"location": {

"begin": 672767,

"end": 672773

},

"text": "(4.0)",

"row_index_begin": 5,

"row_index_end": 5,

"column_index_begin": 3,

"column_index_end": 3,

"row_header_ids": [

"rowHeader-671979-671992"

],

"row_header_texts": [

"Asia Pacific"

],

"row_header_texts_normalized": [

"Asia Pacific"

],

"column_header_ids": [

"colHeader-664835-664836",

"colHeader-666061-666087"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change"

],

"attributes": [

{

"type": "Number",

"text": "4.0",

"location": {

"begin": 672768,

"end": 672771

}

}

]

},

{

"cell_id": "bodyCell-673028-673034",

"location": {

"begin": 673028,

"end": 673034

},

"text": "(3.0)",

"row_index_begin": 5,

"row_index_end": 5,

"column_index_begin": 4,

"column_index_end": 4,

"row_header_ids": [

"rowHeader-671979-671992"

],

"row_header_texts": [

"Asia Pacific"

],

"row_header_texts_normalized": [

"Asia Pacific"

],

"column_header_ids": [

"colHeader-664900-664901",

"colHeader-666356-666404"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change Adjusted for Currency"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change Adjusted for Currency"

],

"attributes": [

{

"type": "Number",

"text": "3.0",

"location": {

"begin": 673029,

"end": 673032

}

}

]

},

{

"cell_id": "bodyCell-673290-673296",

"location": {

"begin": 673290,

"end": 673296

},

"text": "(2.5)",

"row_index_begin": 5,

"row_index_end": 5,

"column_index_begin": 5,

"column_index_end": 5,

"row_header_ids": [

"rowHeader-671979-671992"

],

"row_header_texts": [

"Asia Pacific"

],

"row_header_texts_normalized": [

"Asia Pacific"

],

"column_header_ids": [

"colHeader-664965-664966",

"colHeader-666675-666948"

],

"column_header_texts": [

"",

"Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency"

],

"column_header_texts_normalized": [

"",

"Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency"

],

"attributes": [

{

"type": "Number",

"text": "2.5",

"location": {

"begin": 673291,

"end": 673294

}

}

]

}

],

"contexts": [

{

"location": {

"begin": 664050,

"end": 664171

},

"text": "In addition to the revenue presentation by reportable segment, we also measure revenue performance on a geographic basis."

},

{

"location": {

"begin": 673587,

"end": 674274

},

"text": "Total revenue of $77,147 million in 2019 decreased 3.1 percent year to year as reported (1 percent adjusted for currency), but increased 0.2 percent excluding divested businesses and adjusted for currency."

},

{

"location": {

"begin": 674465,

"end": 674948

},

"text": "Americas revenue decreased 1.9 percent as reported (1 percent adjusted for currency), but grew 1 percent excluding divested businesses and adjusted for currency."

}

],

"key_value_pairs": []

}

That raw output contains everything Allison needs to extract the revenue figures from this document, but it's in a format that's cumbersome to deal with. So Allison uses Text Extensions for Pandas to convert this JSON into a collection of Pandas DataFrames. These DataFrames encode information about the row headers, column headers, and cells that make up the table.

table_data = tp.io.watson.tables.parse_response(ibm_2019_json,

select_table="Geographic Revenue")

table_data.keys()

dict_keys(['row_headers', 'col_headers', 'body_cells', 'given_loc'])

table_data["body_cells"].head(5)

| text | column_index_begin | column_index_end | row_index_begin | row_index_end | cell_id | column_header_ids | column_header_texts | row_header_ids | row_header_texts | attributes.text | attributes.type | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | $77,147 | 1 | 1 | 2 | 2 | bodyCell-667480-667488 | [colHeader-664705-664706, colHeader-665513-665... | [2019] | [rowHeader-667212-667226] | [Total revenue] | [$77,147] | [Currency] |

| 1 | $79,591 | 2 | 2 | 2 | 2 | bodyCell-667744-667752 | [colHeader-664770-664771, colHeader-665788-665... | [2018] | [rowHeader-667212-667226] | [Total revenue] | [$79,591] | [Currency] |

| 2 | (3.1 )% | 3 | 3 | 2 | 2 | bodyCell-668006-668014 | [colHeader-664835-664836, colHeader-666061-666... | [Yr.-to-Yr. Percent Change] | [rowHeader-667212-667226] | [Total revenue] | [3.1] | [Number] |

| 3 | (1.0)% | 4 | 4 | 2 | 2 | bodyCell-668266-668273 | [colHeader-664900-664901, colHeader-666356-666... | [Yr.-to-Yr. Percent Change Adjusted for Currency] | [rowHeader-667212-667226] | [Total revenue] | [1.0] | [Number] |

| 4 | 0.2% | 5 | 5 | 2 | 2 | bodyCell-668530-668535 | [colHeader-664965-664966, colHeader-666675-666... | [Yr.-to-Yr. Percent Change\n Excluding Diveste... | [rowHeader-667212-667226] | [Total revenue] | [0.2%] | [Percentage] |

Text Extensions for Pandas can convert these DataFrames into a single Pandas DataFrame that matches the layout of the original table in the document. Allison calls the make_table() function to perform that conversion and inspects the output.

revenue_2019_df = tp.io.watson.tables.make_table(table_data)

revenue_2019_df

| 2019 | 2018 | Yr.-to-Yr. Percent Change | Yr.-to-Yr. Percent Change Adjusted for Currency | Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency | |

|---|---|---|---|---|---|

| Total revenue | $77,147 | $79,591 | -3.1 | -1.0 | 0.2 |

| Americas | $36,274 | $36,994 | -1.9 | -1.1 | 0.8 |

| Europe/Middle East/Africa | 24,443 | 25,491 | -4.1 | 0.4 | 1.3 |

| Asia Pacific | 16,430 | 17,106 | -4.0 | -3.0 | -2.5 |

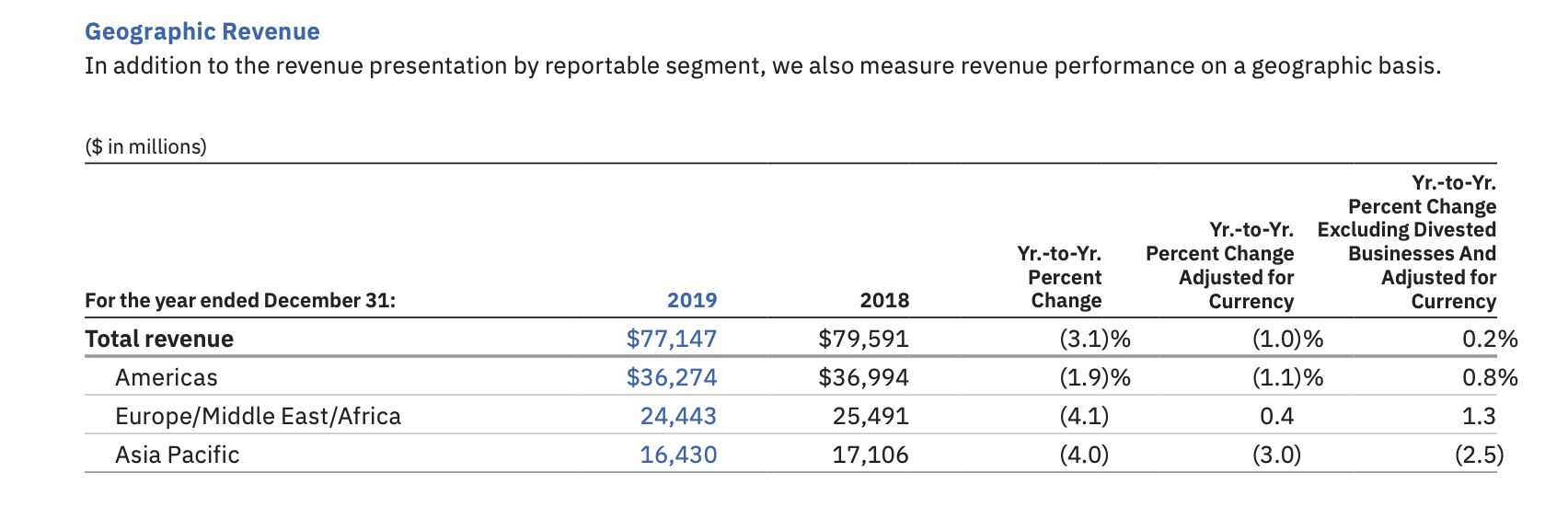

The reconstructed dataframe looks good! Here's what the original table in the PDF document looked like:

If Allison just wanted to create a DataFrame of 2018/2019 revenue figures, her task would be done. But Allison wants to reconstruct ten years of revenue by geographic region. To do that, she will need to combine information from multiple documents. For tables like this one that have multiple levels of header information, this kind of integration is easier to perform over the "exploded" version of the table, where each cell in the table is represented a single row containing all the corresponding header values.

Allison passes the same table data from the 2019 report through the Text Extensions for Pandas function make_exploded_df() to produce the exploded represention of the table:

exploded_df, row_header_names, col_header_names = (

tp.io.watson.tables.make_exploded_df(table_data, col_explode_by="concat"))

exploded_df

| text | row_header_texts_0 | column_header_texts | attributes.type | |

|---|---|---|---|---|

| 0 | $77,147 | Total revenue | 2019 | [Currency] |

| 1 | $79,591 | Total revenue | 2018 | [Currency] |

| 2 | (3.1 )% | Total revenue | Yr.-to-Yr. Percent Change | [Number] |

| 3 | (1.0)% | Total revenue | Yr.-to-Yr. Percent Change Adjusted for Currency | [Number] |

| 4 | 0.2% | Total revenue | Yr.-to-Yr. Percent Change\n Excluding Divested... | [Percentage] |

| 5 | $36,274 | Americas | 2019 | [Currency] |

| 6 | $36,994 | Americas | 2018 | [Currency] |

| 7 | (1.9)% | Americas | Yr.-to-Yr. Percent Change | [Number] |

| 8 | (1.1)% | Americas | Yr.-to-Yr. Percent Change Adjusted for Currency | [Number] |

| 9 | 0.8% | Americas | Yr.-to-Yr. Percent Change\n Excluding Divested... | [Percentage] |

| 10 | 24,443 | Europe/Middle East/Africa | 2019 | [Number] |

| 11 | 25,491 | Europe/Middle East/Africa | 2018 | [Number] |

| 12 | (4.1) | Europe/Middle East/Africa | Yr.-to-Yr. Percent Change | [Number] |

| 13 | 0.4 | Europe/Middle East/Africa | Yr.-to-Yr. Percent Change Adjusted for Currency | [Number] |

| 14 | 1.3 | Europe/Middle East/Africa | Yr.-to-Yr. Percent Change\n Excluding Divested... | [Number] |

| 15 | 16,430 | Asia Pacific | 2019 | [Number] |

| 16 | 17,106 | Asia Pacific | 2018 | [Number] |

| 17 | (4.0) | Asia Pacific | Yr.-to-Yr. Percent Change | [Number] |

| 18 | (3.0) | Asia Pacific | Yr.-to-Yr. Percent Change Adjusted for Currency | [Number] |

| 19 | (2.5) | Asia Pacific | Yr.-to-Yr. Percent Change\n Excluding Divested... | [Number] |

This exploded version of the table is the exact same data, just represented in a different way. If she wants, Allison can convert it back to the format from the original document by calling pandas.DataFrame.pivot():

exploded_df.pivot(index="row_header_texts_0", columns="column_header_texts", values="text")

| column_header_texts | 2018 | 2019 | Yr.-to-Yr. Percent Change | Yr.-to-Yr. Percent Change\n Excluding Divested Businesses And Adjusted for Currency | Yr.-to-Yr. Percent Change Adjusted for Currency |

|---|---|---|---|---|---|

| row_header_texts_0 | |||||

| Americas | $36,994 | $36,274 | (1.9)% | 0.8% | (1.1)% |

| Asia Pacific | 17,106 | 16,430 | (4.0) | (2.5) | (3.0) |

| Europe/Middle East/Africa | 25,491 | 24,443 | (4.1) | 1.3 | 0.4 |

| Total revenue | $79,591 | $77,147 | (3.1 )% | 0.2% | (1.0)% |

But because she is about to merge this DataFrame with similar data from other documents, Allison keeps the data in exploded format for now.

Allison's next task is to write some Pandas transformations that will clean and reformat the DataFrame for each source table prior to merging them all together. She uses the 2019 report's data as a test case for creating this code. The first step is to convert the cell values in the Watson Discovery output from text to numeric values. Text Extensions for Pandas includes a more robust version of pandas.to_numeric() that can handle common idioms for representing currencies and percentages. Allison uses this function, called convert_cols_to_numeric(), to convert all the cell values to numbers. She adds a new column "value" to her DataFrame to hold these numbers.

exploded_df["value"] = \

tp.io.watson.tables.convert_cols_to_numeric(exploded_df[["text"]])

exploded_df

| text | row_header_texts_0 | column_header_texts | attributes.type | value | |

|---|---|---|---|---|---|

| 0 | $77,147 | Total revenue | 2019 | [Currency] | 77147.0 |

| 1 | $79,591 | Total revenue | 2018 | [Currency] | 79591.0 |

| 2 | (3.1 )% | Total revenue | Yr.-to-Yr. Percent Change | [Number] | -3.1 |

| 3 | (1.0)% | Total revenue | Yr.-to-Yr. Percent Change Adjusted for Currency | [Number] | -1.0 |

| 4 | 0.2% | Total revenue | Yr.-to-Yr. Percent Change\n Excluding Divested... | [Percentage] | 0.2 |

| 5 | $36,274 | Americas | 2019 | [Currency] | 36274.0 |

| 6 | $36,994 | Americas | 2018 | [Currency] | 36994.0 |

| 7 | (1.9)% | Americas | Yr.-to-Yr. Percent Change | [Number] | -1.9 |

| 8 | (1.1)% | Americas | Yr.-to-Yr. Percent Change Adjusted for Currency | [Number] | -1.1 |

| 9 | 0.8% | Americas | Yr.-to-Yr. Percent Change\n Excluding Divested... | [Percentage] | 0.8 |

| 10 | 24,443 | Europe/Middle East/Africa | 2019 | [Number] | 24443.0 |

| 11 | 25,491 | Europe/Middle East/Africa | 2018 | [Number] | 25491.0 |

| 12 | (4.1) | Europe/Middle East/Africa | Yr.-to-Yr. Percent Change | [Number] | -4.1 |

| 13 | 0.4 | Europe/Middle East/Africa | Yr.-to-Yr. Percent Change Adjusted for Currency | [Number] | 0.4 |

| 14 | 1.3 | Europe/Middle East/Africa | Yr.-to-Yr. Percent Change\n Excluding Divested... | [Number] | 1.3 |

| 15 | 16,430 | Asia Pacific | 2019 | [Number] | 16430.0 |

| 16 | 17,106 | Asia Pacific | 2018 | [Number] | 17106.0 |

| 17 | (4.0) | Asia Pacific | Yr.-to-Yr. Percent Change | [Number] | -4.0 |

| 18 | (3.0) | Asia Pacific | Yr.-to-Yr. Percent Change Adjusted for Currency | [Number] | -3.0 |

| 19 | (2.5) | Asia Pacific | Yr.-to-Yr. Percent Change\n Excluding Divested... | [Number] | -2.5 |

Now all the cell values have been converted to floating-point numbers, but only some of these numbers represent revenue. Looking at the 2019 data, Allison can see that the revenue numbers have 4-digit years in their column headers. So she filters the DataFrame down to just those rows with 4-digit numbers in the "column_header_texts" column.

rows_to_retain = exploded_df[exploded_df["column_header_texts"].str.fullmatch("\d{4}")].copy()

rows_to_retain

| text | row_header_texts_0 | column_header_texts | attributes.type | value | |

|---|---|---|---|---|---|

| 0 | $77,147 | Total revenue | 2019 | [Currency] | 77147.0 |

| 1 | $79,591 | Total revenue | 2018 | [Currency] | 79591.0 |

| 5 | $36,274 | Americas | 2019 | [Currency] | 36274.0 |

| 6 | $36,994 | Americas | 2018 | [Currency] | 36994.0 |

| 10 | 24,443 | Europe/Middle East/Africa | 2019 | [Number] | 24443.0 |

| 11 | 25,491 | Europe/Middle East/Africa | 2018 | [Number] | 25491.0 |

| 15 | 16,430 | Asia Pacific | 2019 | [Number] | 16430.0 |

| 16 | 17,106 | Asia Pacific | 2018 | [Number] | 17106.0 |

That's looking good! Now Allison drops the unnecessary columns and gives some more friendly names to the columns that remain.

rows_to_retain.rename(

columns={

"row_header_texts_0": "Region",

"column_header_texts": "Year",

"value": "Revenue"

})[["Year", "Region", "Revenue"]]

| Year | Region | Revenue | |

|---|---|---|---|

| 0 | 2019 | Total revenue | 77147.0 |

| 1 | 2018 | Total revenue | 79591.0 |

| 5 | 2019 | Americas | 36274.0 |

| 6 | 2018 | Americas | 36994.0 |

| 10 | 2019 | Europe/Middle East/Africa | 24443.0 |

| 11 | 2018 | Europe/Middle East/Africa | 25491.0 |

| 15 | 2019 | Asia Pacific | 16430.0 |

| 16 | 2018 | Asia Pacific | 17106.0 |

The code from the last few cells worked to clean up the 2019 data, so Allison copies and pastes that code into a Python function:

def dataframe_for_file(filename: str):

with open(f"{FILES_DIR}/{filename}", "r") as f:

json_output = json.load(f)

table_data = tp.io.watson.tables.parse_response(json_output,

select_table="Geographic Revenue")

exploded_df, _, _ = tp.io.watson.tables.make_exploded_df(

table_data, col_explode_by="concat")

rows_to_retain = exploded_df[exploded_df["column_header_texts"].str.fullmatch("\d{4}")

& (exploded_df["text"].str.len() > 0)].copy()

rows_to_retain["value"] = tp.io.watson.tables.convert_cols_to_numeric(

rows_to_retain[["text"]])

rows_to_retain["file"] = filename

return (

rows_to_retain.rename(columns={

"row_header_texts_0": "Region", "column_header_texts": "Year", "value": "Revenue"})

[["Year", "Region", "Revenue"]]

)

Then she calls that function on the Watson Discovery output from the 2019 annual report to verify that it produces the same answer.

dataframe_for_file("2019.json")

| Year | Region | Revenue | |

|---|---|---|---|

| 0 | 2019 | Total revenue | 77147.0 |

| 1 | 2018 | Total revenue | 79591.0 |

| 5 | 2019 | Americas | 36274.0 |

| 6 | 2018 | Americas | 36994.0 |

| 10 | 2019 | Europe/Middle East/Africa | 24443.0 |

| 11 | 2018 | Europe/Middle East/Africa | 25491.0 |

| 15 | 2019 | Asia Pacific | 16430.0 |

| 16 | 2018 | Asia Pacific | 17106.0 |

Looks good! Time to run the same function over an entire stack of reports. Allison puts the names of all her Watson Discovery output files into a single Python list.

all_files = sorted([f for f in os.listdir(FILES_DIR) if f.endswith(".json")])

all_files

['2009.json', '2010.json', '2012.json', '2013.json', '2015.json', '2016.json', '2017.json', '2018.json', '2019.json']

Note that the annual reports for 2011 and 2014 aren't in the collection of files that Allison has. But that's ok; each report contains the previous year's figures, so Allison can reconstruct the missing data from adjacent years.

Allison calls her dataframe_for_file() function on each of the files, then concatenates all of the resulting Pandas DataFrames into a single large DataFrame.

revenue_df = pd.concat([dataframe_for_file(f) for f in all_files])

revenue_df

| Year | Region | Revenue | |

|---|---|---|---|

| 0 | 2009 | Total revenue: | 95758.0 |

| 1 | 2008 | Total revenue: | 103630.0 |

| 4 | 2009 | Geographies: | 93477.0 |

| 5 | 2008 | Geographies: | 100939.0 |

| 8 | 2009 | Americas | 40184.0 |

| ... | ... | ... | ... |

| 6 | 2018 | Americas | 36994.0 |

| 10 | 2019 | Europe/Middle East/Africa | 24443.0 |

| 11 | 2018 | Europe/Middle East/Africa | 25491.0 |

| 15 | 2019 | Asia Pacific | 16430.0 |

| 16 | 2018 | Asia Pacific | 17106.0 |

82 rows × 3 columns

Allison can see that the first four lines of this DataFrame contain total worldwide revenue; and that this total occurred under different names in different documents. Allison is interested in the fine-grained revenue figures, not the totals, so she needs to filter out all these rows with worldwide revenue.

What are all the names of geographic regions that IBM annual reports have used over the last ten years?

revenue_df[["Region"]].drop_duplicates()

| Region | |

|---|---|

| 0 | Total revenue: |

| 4 | Geographies: |

| 8 | Americas |

| 12 | Europe/Middle East/Africa |

| 16 | Asia Pacific |

| 0 | Total revenue |

| 4 | Geographies |

| 16 | Asia Pacifi c |

It looks like all the worldwide revenue figures are under some variation of "Geographies" or "Total revenue". Allison uses Pandas' string matching facilities to filter out the rows whose "Region" column contains the words "geographies" or "total".

geo_revenue_df = (

revenue_df[~( # "~" operator inverts a Pandas selection condition

(revenue_df["Region"].str.contains("geographies", case=False))

| (revenue_df["Region"].str.contains("total", case=False))

)]).copy()

geo_revenue_df

| Year | Region | Revenue | |

|---|---|---|---|

| 8 | 2009 | Americas | 40184.0 |

| 9 | 2008 | Americas | 42807.0 |

| 12 | 2009 | Europe/Middle East/Africa | 32583.0 |

| 13 | 2008 | Europe/Middle East/Africa | 37020.0 |

| 16 | 2009 | Asia Pacific | 20710.0 |

| 17 | 2008 | Asia Pacific | 21111.0 |

| 8 | 2010 | Americas | 42044.0 |

| 9 | 2009 | Americas | 40184.0 |

| 12 | 2010 | Europe/Middle East/Africa | 31866.0 |

| 13 | 2009 | Europe/Middle East/Africa | 32583.0 |

| 16 | 2010 | Asia Pacific | 23150.0 |

| 17 | 2009 | Asia Pacific | 20710.0 |

| 8 | 2012 | Americas | 44556.0 |

| 9 | 2011 | Americas | 44944.0 |

| 12 | 2012 | Europe/Middle East/Africa | 31775.0 |

| 13 | 2011 | Europe/Middle East/Africa | 33952.0 |

| 16 | 2012 | Asia Pacific | 25937.0 |

| 17 | 2011 | Asia Pacific | 25273.0 |

| 8 | 2013 | Americas | 43249.0 |

| 9 | 2012 | Americas | 44556.0 |

| 12 | 2013 | Europe/Middle East/Africa | 31628.0 |

| 13 | 2012 | Europe/Middle East/Africa | 31775.0 |

| 16 | 2013 | Asia Pacific | 22923.0 |

| 17 | 2012 | Asia Pacific | 25937.0 |

| 8 | 2015 | Americas | 38486.0 |

| 9 | 2014 | Americas | 41410.0 |

| 12 | 2015 | Europe/Middle East/Africa | 26073.0 |

| 13 | 2014 | Europe/Middle East/Africa | 30700.0 |

| 16 | 2015 | Asia Pacifi c | 16871.0 |

| 17 | 2014 | Asia Pacifi c | 20216.0 |

| 8 | 2016 | Americas | 37513.0 |

| 9 | 2015 | Americas | 38486.0 |

| 12 | 2016 | Europe/Middle East/Africa | 24769.0 |

| 13 | 2015 | Europe/Middle East/Africa | 26073.0 |

| 16 | 2016 | Asia Pacifi c | 17313.0 |

| 17 | 2015 | Asia Pacifi c | 16871.0 |

| 8 | 2017 | Americas | 37479.0 |

| 9 | 2016 | Americas | 37513.0 |

| 12 | 2017 | Europe/Middle East/Africa | 24345.0 |

| 13 | 2016 | Europe/Middle East/Africa | 24769.0 |

| 16 | 2017 | Asia Pacific | 16970.0 |

| 17 | 2016 | Asia Pacific | 17313.0 |

| 4 | 2018 | Americas | 36994.0 |

| 8 | 2018 | Europe/Middle East/Africa | 25491.0 |

| 12 | 2018 | Asia Pacific | 17106.0 |

| 5 | 2019 | Americas | 36274.0 |

| 6 | 2018 | Americas | 36994.0 |

| 10 | 2019 | Europe/Middle East/Africa | 24443.0 |

| 11 | 2018 | Europe/Middle East/Africa | 25491.0 |

| 15 | 2019 | Asia Pacific | 16430.0 |

| 16 | 2018 | Asia Pacific | 17106.0 |

Now every row contains a regional revenue figure. What are the regions represented?

geo_revenue_df[["Region"]].drop_duplicates()

| Region | |

|---|---|

| 8 | Americas |

| 12 | Europe/Middle East/Africa |

| 16 | Asia Pacific |

| 16 | Asia Pacifi c |

That's strange — one of the regions is "Asia Pacifi c", with a space before the last "c". It looks like the PDF conversion on the 2016 annual report added an extra space. Allison uses the function pandas.Series.replace() to correct that issue.

geo_revenue_df["Region"] = geo_revenue_df["Region"].replace("Asia Pacifi c", "Asia Pacific")

geo_revenue_df

| Year | Region | Revenue | |

|---|---|---|---|

| 8 | 2009 | Americas | 40184.0 |

| 9 | 2008 | Americas | 42807.0 |

| 12 | 2009 | Europe/Middle East/Africa | 32583.0 |

| 13 | 2008 | Europe/Middle East/Africa | 37020.0 |

| 16 | 2009 | Asia Pacific | 20710.0 |

| 17 | 2008 | Asia Pacific | 21111.0 |

| 8 | 2010 | Americas | 42044.0 |

| 9 | 2009 | Americas | 40184.0 |

| 12 | 2010 | Europe/Middle East/Africa | 31866.0 |

| 13 | 2009 | Europe/Middle East/Africa | 32583.0 |

| 16 | 2010 | Asia Pacific | 23150.0 |

| 17 | 2009 | Asia Pacific | 20710.0 |

| 8 | 2012 | Americas | 44556.0 |

| 9 | 2011 | Americas | 44944.0 |

| 12 | 2012 | Europe/Middle East/Africa | 31775.0 |

| 13 | 2011 | Europe/Middle East/Africa | 33952.0 |

| 16 | 2012 | Asia Pacific | 25937.0 |

| 17 | 2011 | Asia Pacific | 25273.0 |

| 8 | 2013 | Americas | 43249.0 |

| 9 | 2012 | Americas | 44556.0 |

| 12 | 2013 | Europe/Middle East/Africa | 31628.0 |

| 13 | 2012 | Europe/Middle East/Africa | 31775.0 |

| 16 | 2013 | Asia Pacific | 22923.0 |

| 17 | 2012 | Asia Pacific | 25937.0 |

| 8 | 2015 | Americas | 38486.0 |

| 9 | 2014 | Americas | 41410.0 |

| 12 | 2015 | Europe/Middle East/Africa | 26073.0 |

| 13 | 2014 | Europe/Middle East/Africa | 30700.0 |

| 16 | 2015 | Asia Pacific | 16871.0 |

| 17 | 2014 | Asia Pacific | 20216.0 |

| 8 | 2016 | Americas | 37513.0 |

| 9 | 2015 | Americas | 38486.0 |

| 12 | 2016 | Europe/Middle East/Africa | 24769.0 |

| 13 | 2015 | Europe/Middle East/Africa | 26073.0 |

| 16 | 2016 | Asia Pacific | 17313.0 |

| 17 | 2015 | Asia Pacific | 16871.0 |

| 8 | 2017 | Americas | 37479.0 |

| 9 | 2016 | Americas | 37513.0 |

| 12 | 2017 | Europe/Middle East/Africa | 24345.0 |

| 13 | 2016 | Europe/Middle East/Africa | 24769.0 |

| 16 | 2017 | Asia Pacific | 16970.0 |

| 17 | 2016 | Asia Pacific | 17313.0 |

| 4 | 2018 | Americas | 36994.0 |

| 8 | 2018 | Europe/Middle East/Africa | 25491.0 |

| 12 | 2018 | Asia Pacific | 17106.0 |

| 5 | 2019 | Americas | 36274.0 |

| 6 | 2018 | Americas | 36994.0 |

| 10 | 2019 | Europe/Middle East/Africa | 24443.0 |

| 11 | 2018 | Europe/Middle East/Africa | 25491.0 |

| 15 | 2019 | Asia Pacific | 16430.0 |

| 16 | 2018 | Asia Pacific | 17106.0 |

Allison inspects the time series of revenue for the "Americas" region:

geo_revenue_df[geo_revenue_df["Region"] == "Americas"].sort_values("Year")

| Year | Region | Revenue | |

|---|---|---|---|

| 9 | 2008 | Americas | 42807.0 |

| 8 | 2009 | Americas | 40184.0 |

| 9 | 2009 | Americas | 40184.0 |

| 8 | 2010 | Americas | 42044.0 |

| 9 | 2011 | Americas | 44944.0 |

| 8 | 2012 | Americas | 44556.0 |

| 9 | 2012 | Americas | 44556.0 |

| 8 | 2013 | Americas | 43249.0 |

| 9 | 2014 | Americas | 41410.0 |

| 9 | 2015 | Americas | 38486.0 |

| 8 | 2015 | Americas | 38486.0 |

| 9 | 2016 | Americas | 37513.0 |

| 8 | 2016 | Americas | 37513.0 |

| 8 | 2017 | Americas | 37479.0 |

| 4 | 2018 | Americas | 36994.0 |

| 6 | 2018 | Americas | 36994.0 |

| 5 | 2019 | Americas | 36274.0 |

Every year from 2008 to 2019 is present, but many of the years appear twice. That's to be expected,

since each of the annual reports contains two years of geographical revenue figures.

Allison drops the duplicate values using pandas.DataFrame.drop_duplicates().

geo_revenue_df.drop_duplicates(["Region", "Year"], inplace=True)

geo_revenue_df

| Year | Region | Revenue | |

|---|---|---|---|

| 8 | 2009 | Americas | 40184.0 |

| 9 | 2008 | Americas | 42807.0 |

| 12 | 2009 | Europe/Middle East/Africa | 32583.0 |

| 13 | 2008 | Europe/Middle East/Africa | 37020.0 |

| 16 | 2009 | Asia Pacific | 20710.0 |

| 17 | 2008 | Asia Pacific | 21111.0 |

| 8 | 2010 | Americas | 42044.0 |

| 12 | 2010 | Europe/Middle East/Africa | 31866.0 |

| 16 | 2010 | Asia Pacific | 23150.0 |

| 8 | 2012 | Americas | 44556.0 |

| 9 | 2011 | Americas | 44944.0 |

| 12 | 2012 | Europe/Middle East/Africa | 31775.0 |

| 13 | 2011 | Europe/Middle East/Africa | 33952.0 |

| 16 | 2012 | Asia Pacific | 25937.0 |

| 17 | 2011 | Asia Pacific | 25273.0 |

| 8 | 2013 | Americas | 43249.0 |

| 12 | 2013 | Europe/Middle East/Africa | 31628.0 |

| 16 | 2013 | Asia Pacific | 22923.0 |

| 8 | 2015 | Americas | 38486.0 |

| 9 | 2014 | Americas | 41410.0 |

| 12 | 2015 | Europe/Middle East/Africa | 26073.0 |

| 13 | 2014 | Europe/Middle East/Africa | 30700.0 |

| 16 | 2015 | Asia Pacific | 16871.0 |

| 17 | 2014 | Asia Pacific | 20216.0 |

| 8 | 2016 | Americas | 37513.0 |

| 12 | 2016 | Europe/Middle East/Africa | 24769.0 |

| 16 | 2016 | Asia Pacific | 17313.0 |

| 8 | 2017 | Americas | 37479.0 |

| 12 | 2017 | Europe/Middle East/Africa | 24345.0 |

| 16 | 2017 | Asia Pacific | 16970.0 |

| 4 | 2018 | Americas | 36994.0 |

| 8 | 2018 | Europe/Middle East/Africa | 25491.0 |

| 12 | 2018 | Asia Pacific | 17106.0 |

| 5 | 2019 | Americas | 36274.0 |

| 10 | 2019 | Europe/Middle East/Africa | 24443.0 |

| 15 | 2019 | Asia Pacific | 16430.0 |

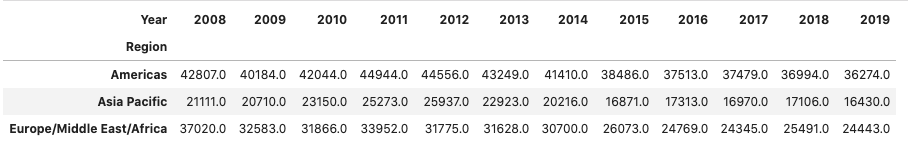

Now Allison has a clean and complete set of revenue figures by geographical region for the years 2008-2019.

She uses Pandas' pandas.DataFrame.pivot() method to convert this data into a compact table.

revenue_table = geo_revenue_df.pivot(index="Region", columns="Year", values="Revenue")

revenue_table

| Year | 2008 | 2009 | 2010 | 2011 | 2012 | 2013 | 2014 | 2015 | 2016 | 2017 | 2018 | 2019 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Region | ||||||||||||

| Americas | 42807.0 | 40184.0 | 42044.0 | 44944.0 | 44556.0 | 43249.0 | 41410.0 | 38486.0 | 37513.0 | 37479.0 | 36994.0 | 36274.0 |

| Asia Pacific | 21111.0 | 20710.0 | 23150.0 | 25273.0 | 25937.0 | 22923.0 | 20216.0 | 16871.0 | 17313.0 | 16970.0 | 17106.0 | 16430.0 |

| Europe/Middle East/Africa | 37020.0 | 32583.0 | 31866.0 | 33952.0 | 31775.0 | 31628.0 | 30700.0 | 26073.0 | 24769.0 | 24345.0 | 25491.0 | 24443.0 |

Then she uses that table to produce a plot of revenue by region over that 11-year period.

plt.rcParams.update({'font.size': 16})

_ = revenue_table.transpose().plot(title="Revenue by Geographic Region",

ylabel="Revenue (Millions of US$)",

figsize=(12, 7), ylim=(0, 50000))

Now Allison has a clear picture of the detailed revenue data that was hidden inside those 1500 pages of PDF files. As she works on her analyst report, Allison can use the same process to extract DataFrames for other financial metrics too!