# Import Python libraries

from typing import *

import os

import ibm_watson

import ibm_watson.natural_language_understanding_v1 as nlu

import ibm_cloud_sdk_core

import pandas as pd

import sys

# And of course we need the text_extensions_for_pandas library itself.

try:

import text_extensions_for_pandas as tp

except ModuleNotFoundError as e:

raise Exception("text_extensions_for_pandas package not found on the Jupyter "

"kernel's path. Please either run:\n"

" ln -s ../../text_extensions_for_pandas .\n"

"from the directory containing this notebook, or use a Python "

"environment on which you have used `pip` to install the package.")

if "IBM_API_KEY" not in os.environ:

raise ValueError("IBM_API_KEY environment variable not set. Please create "

"a free instance of IBM Watson Natural Language Understanding "

"(see https://www.ibm.com/cloud/watson-natural-language-understanding) "

"and set the IBM_API_KEY environment variable to your instance's "

"API key value.")

api_key = os.environ.get("IBM_API_KEY")

service_url = os.environ.get("IBM_SERVICE_URL")

natural_language_understanding = ibm_watson.NaturalLanguageUnderstandingV1(

version="2021-01-01",

authenticator=ibm_cloud_sdk_core.authenticators.IAMAuthenticator(api_key)

)

natural_language_understanding.set_service_url(service_url)

# Github notebook gists will be this wide: ------------------>

# Screenshots of this notebook should be this wide: ----------------------------->

Market Intelligence with Pandas and IBM Watson¶

In this article, we'll show how to perform an example market intelligence task using Watson Natural Language Understanding and our open source library Text Extensions for Pandas.

Market intelligence is an important application of natural language processing. In this context, "market intelligence" means "finding useful facts about customers and competitors in news articles". This article focuses on a market intelligence task: extracting the names of executives from corporate press releases.

Information about a company's leadership has many uses. You could use that information to identify points of contact for sales or partnership discussions. Or you could estimate how much attention a company is giving to different strategic areas. Some organizations even use this information for recruiting purposes.

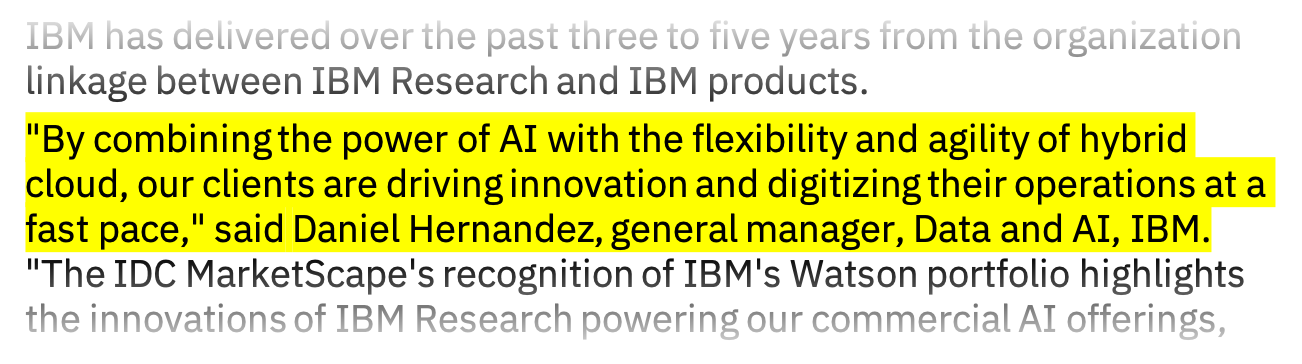

Press releases are a good place to find the names of executives, because these articles often feature quotes from company leaders. Here's an example quote from an IBM press release from December 2020:

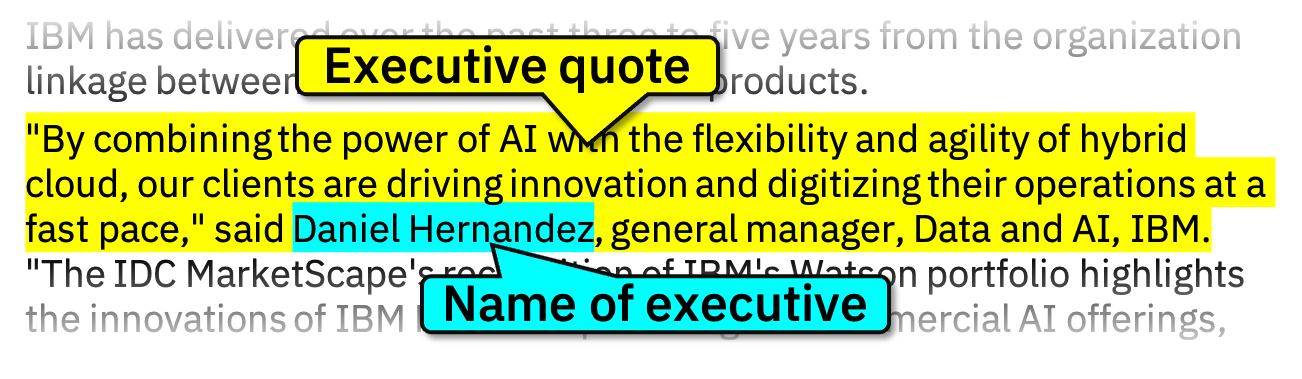

This quote contains information about the name of an executive:

This snippet is an example of the general pattern that we will look for:

- The article contains a quotation.

- The person to whom the quotation is attributed is mentioned by name.

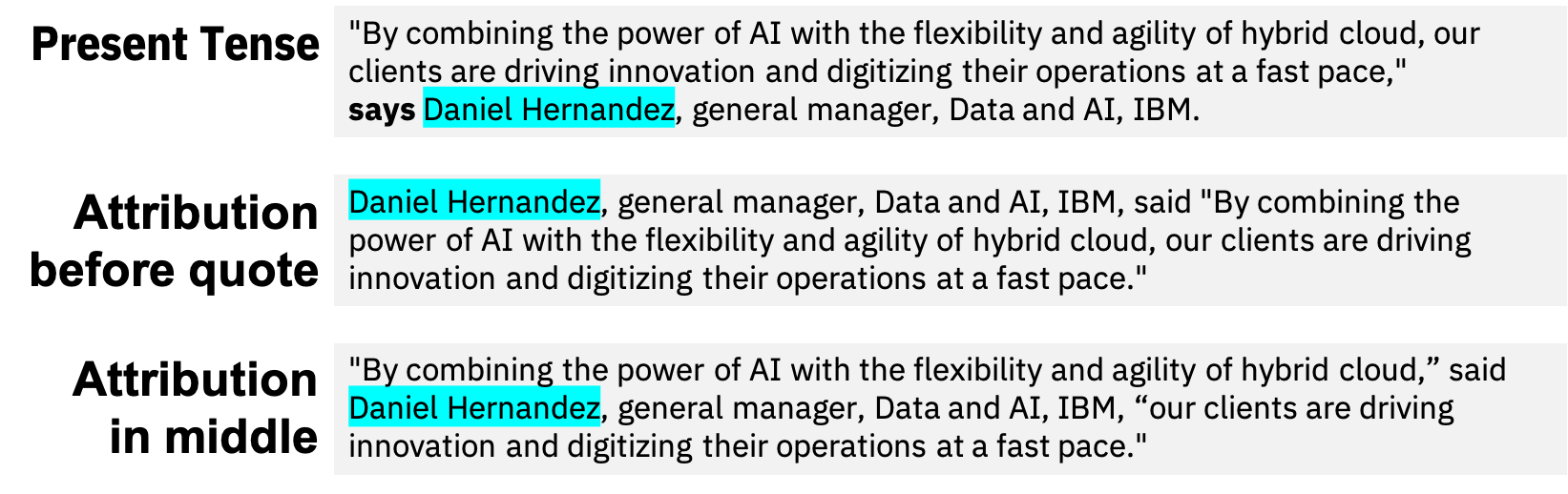

The key challenge that we need to address is the many different forms that this pattern can take. Here are some examples of variations that we would like to capture:

We'll deal with this variability by using general-purpose semantic models. These models extract high-level facts from formal text. The text could express a given fact in many different ways, but all of those different forms produce the same output.

Semantic models can save a lot of work. There's no need to label separate training data or write separate rules or for all of the variations of our target pattern. A small amount of code can capture all these variations at once.

Let's get started!

Use IBM Watson to identify people quoted by name.¶

IBM Watson Natural Language Understanding includes a model called semantic_roles that performs Semantic Role Labeling. You can think of Semantic Role Labeling as finding subject-verb-object triples:

- The actions that occurred in the text (the verb),

- Who performed each action (the subject), and

- On whom the action was performed (the object).

If take our example executive quote and feed it through the semantic_roles model, we get the following raw output:

response = natural_language_understanding.analyze(

text='''"By combining the power of AI with the flexibility and agility of \

hybrid cloud, our clients are driving innovation and digitizing their operations \

at a fast pace," said Daniel Hernandez, general manager, Data and AI, IBM.''',

return_analyzed_text=True,

features=nlu.Features(

semantic_roles=nlu.SemanticRolesOptions()

)).get_result()

response

{'usage': {'text_units': 1, 'text_characters': 221, 'features': 1},

'semantic_roles': [{'subject': {'text': 'our clients'},

'sentence': '"By combining the power of AI with the flexibility and agility of hybrid cloud, our clients are driving innovation and digitizing their operations at a fast pace," said\xa0Daniel Hernandez, general manager, Data and AI, IBM.',

'object': {'text': 'driving innovation and digitizing their operations'},

'action': {'verb': {'text': 'be', 'tense': 'present'},

'text': 'are',

'normalized': 'be'}},

{'subject': {'text': 'our clients'},

'sentence': '"By combining the power of AI with the flexibility and agility of hybrid cloud, our clients are driving innovation and digitizing their operations at a fast pace," said\xa0Daniel Hernandez, general manager, Data and AI, IBM.',

'object': {'text': 'innovation and digitizing their operations'},

'action': {'verb': {'text': 'drive', 'tense': 'present'},

'text': 'are driving',

'normalized': 'be drive'}},

{'subject': {'text': 'our clients'},

'sentence': '"By combining the power of AI with the flexibility and agility of hybrid cloud, our clients are driving innovation and digitizing their operations at a fast pace," said\xa0Daniel Hernandez, general manager, Data and AI, IBM.',

'object': {'text': 'their operations'},

'action': {'verb': {'text': 'digitize', 'tense': 'present'},

'text': 'digitizing',

'normalized': 'digitize'}},

{'subject': {'text': 'Daniel Hernandez, general manager, Data and AI, IBM'},

'sentence': '"By combining the power of AI with the flexibility and agility of hybrid cloud, our clients are driving innovation and digitizing their operations at a fast pace," said\xa0Daniel Hernandez, general manager, Data and AI, IBM.',

'object': {'text': 'By combining the power of AI with the flexibility and agility of hybrid cloud, our clients are driving innovation and digitizing their operations at a fast pace'},

'action': {'verb': {'text': 'say', 'tense': 'past'},

'text': 'said',

'normalized': 'say'}}],

'language': 'en',

'analyzed_text': '"By combining the power of AI with the flexibility and agility of hybrid cloud, our clients are driving innovation and digitizing their operations at a fast pace," said\xa0Daniel Hernandez, general manager, Data and AI, IBM.'}

That format is a bit hard to read. Let's use our open-source library, Text Extensions for Pandas, to convert it to a Pandas DataFrame:

import text_extensions_for_pandas as tp

dfs = tp.io.watson.nlu.parse_response(response)

dfs["semantic_roles"]

| subject.text | sentence | object.text | action.verb.text | action.verb.tense | action.text | action.normalized | |

|---|---|---|---|---|---|---|---|

| 0 | our clients | "By combining the power of AI with the flexibi... | driving innovation and digitizing their operat... | be | present | are | be |

| 1 | our clients | "By combining the power of AI with the flexibi... | innovation and digitizing their operations | drive | present | are driving | be drive |

| 2 | our clients | "By combining the power of AI with the flexibi... | their operations | digitize | present | digitizing | digitize |

| 3 | Daniel Hernandez, general manager, Data and AI... | "By combining the power of AI with the flexibi... | By combining the power of AI with the flexibil... | say | past | said | say |

Now we can see that the semantic_roles model has identified four subject-verb-object triples. Each row of this DataFrame contains one triple. In the first row, the verb is "to be", and in the last row, the verb is "to say".

The last row is where things get interesting for us, because the verb "to say" indicates that someone made a statement. And that's exactly the high-level pattern we're looking for. Let's filter the DataFrame down to that row and look at it more closely.

dfs["semantic_roles"][dfs["semantic_roles"]["action.normalized"] == "say"]

| subject.text | sentence | object.text | action.verb.text | action.verb.tense | action.text | action.normalized | |

|---|---|---|---|---|---|---|---|

| 3 | Daniel Hernandez, general manager, Data and AI... | "By combining the power of AI with the flexibi... | By combining the power of AI with the flexibil... | say | past | said | say |

The subject in this subject-verb-object triple is "Daniel Hernandez, general manager, Data and AI, IBM", and the object is the quote from Mr. Hernandez.

This model's output has captured the general action of "[person] says [quotation]". Different variations of that general pattern will produce the same output. If we move the attribution to the middle of the quote, we get the same result:

response = natural_language_understanding.analyze(

text='''"By combining the power of AI with the flexibility and agility of \

hybrid cloud,” said Daniel Hernandez, general manager, Data and AI, IBM, “our \

clients are driving innovation and digitizing their operations at a fast pace."''',

return_analyzed_text=True,

features=nlu.Features(semantic_roles=nlu.SemanticRolesOptions())).get_result()

dfs = tp.io.watson.nlu.parse_response(response)

dfs["semantic_roles"][dfs["semantic_roles"]["action.normalized"] == "say"]

| subject.text | sentence | object.text | action.verb.text | action.verb.tense | action.text | action.normalized | |

|---|---|---|---|---|---|---|---|

| 0 | Daniel Hernandez, general manager, Data and AI... | "By combining the power of AI with the flexibi... | By combining the power of AI with the flexibil... | say | past | said | say |

If we change the past-tense verb "said" to the present-tense "says", we get the same result again:

response = natural_language_understanding.analyze(

text='''"By combining the power of AI with the flexibility and agility of \

hybrid cloud, our clients are driving innovation and digitizing their operations \

at a fast pace," says Daniel Hernandez, general manager, Data and AI, IBM.''',

return_analyzed_text=True,

features=nlu.Features(semantic_roles=nlu.SemanticRolesOptions())).get_result()

dfs = tp.io.watson.nlu.parse_response(response)

dfs["semantic_roles"][dfs["semantic_roles"]["action.normalized"] == "say"]

| subject.text | sentence | object.text | action.verb.text | action.verb.tense | action.text | action.normalized | |

|---|---|---|---|---|---|---|---|

| 3 | Daniel Hernandez, general manager, Data and AI... | "By combining the power of AI with the flexibi... | By combining the power of AI with the flexibil... | say | present | says | say |

All the different variations that we talked about earlier will produce the same result. This model lets us capture them all with very little code. All we need to do is to run the model and filter the outputs down to the verb we're looking for.

So far we've been looking at one paragraph. Let's rerun the same process on the entire press release.

Finding instances of "Someone Said Something"¶

As before, we can run the document through Watson Natural Language Understanding's Python interface and tell Watson to run its semantic_roles model. Then we use Text Extensions for Pandas to convert the model results to a DataFrame:

DOC_URL = "https://newsroom.ibm.com/2020-12-02-IBM-Named-a-Leader-in-the-2020-IDC-MarketScape-For-Worldwide-Advanced-Machine-Learning-Software-Platform"

# Make the request

response = natural_language_understanding.analyze(

url=DOC_URL, # NLU will fetch the URL for us.

return_analyzed_text=True,

features=nlu.Features(

semantic_roles=nlu.SemanticRolesOptions()

)).get_result()

# Convert the output of the `semantic_roles` model to a DataFrame

semantic_roles_df = tp.io.watson.nlu.parse_response(response)["semantic_roles"]

semantic_roles_df.head()

| subject.text | sentence | object.text | action.verb.text | action.verb.tense | action.text | action.normalized | |

|---|---|---|---|---|---|---|---|

| 0 | IBM) | ARMONK, N.Y., Dec. 2, 2020 /PRNewswire/ -- IBM... | to the Leaders Category in the latest IDC Mark... | name | past | has been named | have be name |

| 1 | The report | The report evaluated vendors who offer tools ... | vendors who offer tools and frameworks for dev... | evaluate | past | evaluated | evaluate |

| 2 | vendors | The report evaluated vendors who offer tools ... | tools and frameworks | offer | present | offer | offer |

| 3 | by the IDC MarketScape | As reported by the IDC MarketScape, IBM offer... | IBM offers a wide range of innovative machine ... | report | past | reported | report |

| 4 | innovative machine | As reported by the IDC MarketScape, IBM offer... | capabilities | learn | present | learning | learn |

If we filter down to the subject-verb-object triples for the verb "to say", we can see that this document has quite a few examples of the "person says statement" pattern:

quotes_df = semantic_roles_df[semantic_roles_df["action.normalized"] == "say"]

quotes_df

| subject.text | sentence | object.text | action.verb.text | action.verb.tense | action.text | action.normalized | |

|---|---|---|---|---|---|---|---|

| 15 | Daniel Hernandez, general manager, Data and AI... | "By combining the power of AI with the flexib... | By combining the power of AI with the flexibil... | say | past | said | say |

| 21 | Curren Katz, Director of Data Science R&D, Hig... | "At the beginning of the COVID-19 pandemic, H... | At the beginning of the COVID-19 pandemic, Hig... | say | past | said | say |

| 31 | Ritu Jyoti, program vice president, AI researc... | Digital Transformation (DX) is one of the key... | Digital Transformation (DX) is one of the key ... | say | present | says | say |

The DataFrame quotes_df contains all the instances of the "person says statement" pattern that the model has found. We want to filter this set down to cases where the subject (the person making the statement) is mentioned by name. We also want to extract that name.

In this press release, all three instances of the "person says statement" pattern happen to have a name in the subject. But there will not always be a name. Consider this example sentence from another IBM press release:

27 percent of Gen Z surveyed said they will increase outside \

interaction, compared to 19 percent of Gen X surveyed and only 16 percent of

those surveyed over 55.

Here, the subject for the verb "said" is "27 percent of Gen Z surveyed". That subject that does not include a person name.

# Do not include this cell in the blog.

# Show that the `semantic_roles` model produces the output we described above.

response = natural_language_understanding.analyze(

text='''27 percent of Gen Z surveyed said they will increase outside \

interaction, compared to 19 percent of Gen X surveyed and only 16 percent of \

those surveyed over 55.''',

return_analyzed_text=True,

features=nlu.Features(semantic_roles=nlu.SemanticRolesOptions())).get_result()

# Convert the output of the `semantic_roles` model to a DataFrame

tp.io.watson.nlu.parse_response(response)["semantic_roles"]

| subject.text | sentence | object.text | action.verb.text | action.verb.tense | action.text | action.normalized | |

|---|---|---|---|---|---|---|---|

| 0 | 27 percent of Gen Z surveyed | 27 percent of Gen Z surveyed said they will in... | they will increase outside interaction, compar... | say | past | said | say |

Finding places where a person is mentioned by name¶

How can we find the matches where the subject contains a person's name? Fortunately for us, Watson Natural Language Understanding has a model for exactly that task. The entities model in this Watson service finds named entity mentions. A named entity mention is a place where the document mentions an entity like a person or company by the entity's name.

This model will find person names with high accuracy. The code below tells the Watson service to run the entities model and retrieve mentions. Then we convert the result to a DataFrame using Text Extensions for Pandas:

pd.options.display.max_rows = 30 # Keep the output of this cell compact

response = natural_language_understanding.analyze(

url=DOC_URL,

return_analyzed_text=True,

features=nlu.Features(

# Ask Watson to find mentions of named entities

entities=nlu.EntitiesOptions(mentions=True),

# Also divide the document into words. We'll use these in just a moment.

syntax=nlu.SyntaxOptions(tokens=nlu.SyntaxOptionsTokens()),

)).get_result()

entity_mentions_df = tp.io.watson.nlu.parse_response(response)["entity_mentions"]

entity_mentions_df

| type | text | span | confidence | |

|---|---|---|---|---|

| 0 | Organization | IDC MarketScape | [112, 127): 'IDC MarketScape' | 0.466973 |

| 1 | Organization | IDC MarketScape | [383, 398): 'IDC MarketScape' | 0.753796 |

| 2 | Organization | IDC MarketScape | [956, 971): 'IDC MarketScape' | 0.664680 |

| 3 | Organization | IDC MarketScape | [1346, 1361): 'IDC MarketScape' | 0.677499 |

| 4 | Organization | IDC MarketScape | [3786, 3801): 'IDC MarketScape' | 0.524242 |

| ... | ... | ... | ... | ... |

| 49 | Organization | AI | [2512, 2514): 'AI' | 0.514581 |

| 50 | Organization | ICT | [3534, 3537): 'ICT' | 0.691880 |

| 51 | JobTitle | telecommunications vendors | [3997, 4023): 'telecommunications vendors' | 0.259333 |

| 52 | Person | Tyler Allen | [4213, 4224): 'Tyler Allen' | 0.964611 |

| 53 | EmailAddress | tballen@us.ibm.com | [4248, 4266): 'tballen@us.ibm.com' | 0.800000 |

54 rows × 4 columns

The entities model's output contains mentions of many types of entity. For this application, we need

mentions of person names. Let's filter our DataFrame down to just those types of mentions:

person_mentions_df = entity_mentions_df[entity_mentions_df["type"] == "Person"]

person_mentions_df.tail(4)

| type | text | span | confidence | |

|---|---|---|---|---|

| 31 | Person | IBM Watson | [1915, 1925): 'IBM Watson' | 0.364448 |

| 34 | Person | Ritu Jyoti | [2476, 2486): 'Ritu Jyoti' | 0.959464 |

| 39 | Person | Watson | [2891, 2897): 'Watson' | 0.933148 |

| 40 | Person | Watson | [3060, 3066): 'Watson' | 0.988052 |

Tying it all together¶

Now we have two pieces of information that we need to combine:

- Instances of the "person said statement" pattern from the

semantic_rolesmodel - Mentions of person names from the

entitiesmodel

We need to align the "subject" part of the semantic role labeler's output with the person mentions. We can use the span manipulation facilities of Text Extensions for Pandas to do this.

Spans are a common concept in natural language processing. A span represents a region of the document, usually as begin and end offsets and a reference to the document's text. Text Extensions for Pandas adds a special SpanDtype data type to Pandas DataFrames. With this data type, you can define a DataFrame with one or more columns of span data. For example, the column called "span" in the DataFrame above is of the SpanDtype data type. The first span in this column, [1288, 1304): 'Daniel Hernandez', shows that the name "Daniel Hernandez" occurs between locations 1288 and 1304 in the document.

The output of the semantic_roles model doesn't contain location information. But that's ok, because it's easy to create your own spans. We just need to use some string matching to recover the missing locations:

# Retrieve the full document text from the entity mentions output.

doc_text = entity_mentions_df["span"].array.document_text

# Filter down to just the rows and columns we're interested in

subjects_df = quotes_df[["subject.text"]].copy().reset_index(drop=True)

# Use String.index() to find where the strings in "subject.text" begin

subjects_df["begin"] = pd.Series(

[doc_text.index(s) for s in subjects_df["subject.text"]], dtype=int)

# Compute end offsets and wrap the <begin, end, text> triples in a SpanArray

subjects_df["end"] = subjects_df["begin"] + subjects_df["subject.text"].str.len()

subjects_df["span"] = tp.SpanArray(doc_text, subjects_df["begin"],

subjects_df["end"])

subjects_df = subjects_df.drop(columns=["begin", "end"])

subjects_df

| subject.text | span | |

|---|---|---|

| 0 | Daniel Hernandez, general manager, Data and AI... | [1288, 1339): 'Daniel Hernandez, general manag... |

| 1 | Curren Katz, Director of Data Science R&D, Hig... | [1838, 1896): 'Curren Katz, Director of Data S... |

| 2 | Ritu Jyoti, program vice president, AI researc... | [2476, 2581): 'Ritu Jyoti, program vice presid... |

Now we have a column of span data for the semantic_roles model's output, and we can align these spans with the spans of person mentions. Text Extensions for Pandas includes built-in span operations. One of these operations, contain_join(), takes two columns of span data and identifies all pairs of spans where the first span contains the second span. We can use this operation to find all the places where the span from the semantic_roles model contains a span from the output of the entities model:

execs_df = tp.spanner.contain_join(subjects_df["span"],

person_mentions_df["span"],

"subject", "person")

execs_df[["subject", "person"]]

| subject | person | |

|---|---|---|

| 0 | [1288, 1339): 'Daniel Hernandez, general manag... | [1288, 1304): 'Daniel Hernandez' |

| 1 | [1838, 1896): 'Curren Katz, Director of Data S... | [1838, 1849): 'Curren Katz' |

| 2 | [2476, 2581): 'Ritu Jyoti, program vice presid... | [2476, 2486): 'Ritu Jyoti' |

To recap: With a few lines of Python code, we've identified places in the article where the article quoted a person by name. For each of those quotations, we've identified the person name and its location in the document (the person column in the DataFrame above).

Combining Code Into One Function¶

Here's all the code we've just created, condensed down to a single Python function:

# In the blog post, this will be a Github gist.

# See https://gist.github.com/frreiss/038ac63ef20eed323a5637f9ddb2de8d

import pandas as pd

import text_extensions_for_pandas as tp

import ibm_watson

import ibm_watson.natural_language_understanding_v1 as nlu

import ibm_cloud_sdk_core

def find_persons_quoted_by_name(doc_url, api_key, service_url) -> pd.DataFrame:

# Ask Watson Natural Language Understanding to run its "semantic_roles"

# and "entities" models.

natural_language_understanding = ibm_watson.NaturalLanguageUnderstandingV1(

version="2021-01-01",

authenticator=ibm_cloud_sdk_core.authenticators.IAMAuthenticator(api_key)

)

natural_language_understanding.set_service_url(service_url)

nlu_results = natural_language_understanding.analyze(

url=doc_url,

return_analyzed_text=True,

features=nlu.Features(

entities=nlu.EntitiesOptions(mentions=True),

semantic_roles=nlu.SemanticRolesOptions())).get_result()

# Convert the output of Watson Natural Language Understanding to DataFrames.

dataframes = tp.io.watson.nlu.parse_response(nlu_results)

entity_mentions_df = dataframes["entity_mentions"]

semantic_roles_df = dataframes["semantic_roles"]

# Extract mentions of person names

person_mentions_df = entity_mentions_df[entity_mentions_df["type"] == "Person"]

# Extract instances of subjects that made statements

quotes_df = semantic_roles_df[semantic_roles_df["action.normalized"] == "say"]

subjects_df = quotes_df[["subject.text"]].copy().reset_index(drop=True)

# Retrieve the full document text from the entity mentions output.

doc_text = entity_mentions_df["span"].array.document_text

# Filter down to just the rows and columns we're interested in

subjects_df = quotes_df[["subject.text"]].copy().reset_index(drop=True)

# Use String.index() to find where the strings in "subject.text" begin

subjects_df["begin"] = pd.Series(

[doc_text.index(s) for s in subjects_df["subject.text"]], dtype=int)

# Compute end offsets and wrap the <begin, end, text> triples in a SpanArray column

subjects_df["end"] = subjects_df["begin"] + subjects_df["subject.text"].str.len()

subjects_df["span"] = tp.SpanArray(doc_text, subjects_df["begin"], subjects_df["end"])

# Align subjects with person names

execs_df = tp.spanner.contain_join(subjects_df["span"],

person_mentions_df["span"],

"subject", "person")

# Add on the document URL.

execs_df["url"] = doc_url

return execs_df[["person", "url"]]

# Don't include this cell in the blog post.

# Verify that the code above works

find_persons_quoted_by_name(DOC_URL, api_key, service_url)

| person | url | |

|---|---|---|

| 0 | [1288, 1304): 'Daniel Hernandez' | https://newsroom.ibm.com/2020-12-02-IBM-Named-... |

| 1 | [1838, 1849): 'Curren Katz' | https://newsroom.ibm.com/2020-12-02-IBM-Named-... |

| 2 | [2476, 2486): 'Ritu Jyoti' | https://newsroom.ibm.com/2020-12-02-IBM-Named-... |

Calling the Function on Many Documents¶

This function, find_persons_quoted_by_name(), turns a press release into a list of executive names. Here's the output that we get if we pass a year's worth articles from the "Announcements" section of ibm.com through it:

# Don't include this cell in the blog post.

# Load press release URLs from a file

with open("ibm_press_releases.txt", "r") as f:

lines = [l.strip() for l in f.readlines()]

ibm_press_release_urls = [l for l in lines if len(l) > 0 and l[0] != "#"]

executive_names = pd.concat([

find_persons_quoted_by_name(url, api_key, service_url)

for url in ibm_press_release_urls

])

executive_names

| person | url | |

|---|---|---|

| 0 | [1201, 1215): 'Wendi Whitmore' | https://newsroom.ibm.com/2020-02-11-IBM-X-Forc... |

| 0 | [1281, 1292): 'Rob DiCicco' | https://newsroom.ibm.com/2020-02-18-IBM-Study-... |

| 0 | [1213, 1229): 'Christoph Herman' | https://newsroom.ibm.com/2020-02-19-IBM-Power-... |

| 1 | [2227, 2242): 'Stephen Leonard' | https://newsroom.ibm.com/2020-02-19-IBM-Power-... |

| 0 | [2068, 2076): 'Bob Lord' | https://newsroom.ibm.com/2020-02-26-2020-Call-... |

| ... | ... | ... |

| 0 | [3114, 3124): 'Mike Doran' | https://newsroom.ibm.com/2021-01-25-OVHcloud-t... |

| 0 | [3155, 3169): 'Howard Boville' | https://newsroom.ibm.com/2021-01-26-Luminor-Ba... |

| 0 | [3114, 3137): 'Samuel Brack Co-Founder' | https://newsroom.ibm.com/2021-01-26-DIA-Levera... |

| 1 | [3509, 3523): 'Hillery Hunter' | https://newsroom.ibm.com/2021-01-26-DIA-Levera... |

| 0 | [1487, 1497): 'Ana Zamper' | https://newsroom.ibm.com/2021-01-26-Latin-Amer... |

314 rows × 2 columns

Now we've turned 191 press releases into a DataFrame with 301 executive names (EDIT: 314 names with the latest version of Watson Natural Language Understanding, as of October 2021). That's a lot of power packed into one screen's worth of code! To find out more about the advanced semantic models that let us do so much with so little code, check out Watson Natural Language Understanding here!

# Alternate version of adding spans to subjecs: Use dictionary matching.

# This method is currently problematic because we don't have payloads

# for dictionary entries. We have to use exact string matching to map the

# original strings back to the dictionary matches.

# Create a dictionary from the strings in quotes_df["subject.text"].

tokenizer = tp.io.spacy.simple_tokenizer()

dictionary = tp.spanner.extract.create_dict(quotes_df["subject.text"], tokenizer)

# Match the dictionary against the document text.

doc_text = entity_mentions_df["span"].array.document_text

tokens = tp.io.spacy.make_tokens(doc_text, tokenizer)

matches_df = tp.spanner.extract_dict(tokens, dictionary, output_col_name="span")

matches_df["subject.text"] = matches_df["span"].array.covered_text # Join key

# Merge the dictionary matches back with the original strings.

subjects_df = quotes_df[["subject.text"]].merge(matches_df)

subjects_df

| subject.text | span | |

|---|---|---|

| 0 | Daniel Hernandez, general manager, Data and AI... | [1288, 1339): 'Daniel Hernandez, general manag... |

| 1 | Curren Katz, Director of Data Science R&D, Hig... | [1838, 1896): 'Curren Katz, Director of Data S... |

| 2 | Ritu Jyoti, program vice president, AI researc... | [2476, 2581): 'Ritu Jyoti, program vice presid... |