Collatex in context¶

- Collation - computing - interpretation

- Common issues: past and present of semi-automatic collation

- Collation tools

Collation - computing - interpretation¶

Collation is, computationally speaking, a form of data comparison.

Data comparison is an integral part of file synchronization, backup, version control, all things that have been and are part of our daily interaction with computers. Data comparison is generally performed using diff utilities, that calculates and displays the differences between two files.

So ... what we do on a daily base is not so different from collation!

But! Diff utilities can only calculate the differences between two versions of a file, generally work at a line-level granularity (but there are some implementations using word-level, as Wdiff) and are not meant for dealing with the complexity of textual variation (e.g. transposition).

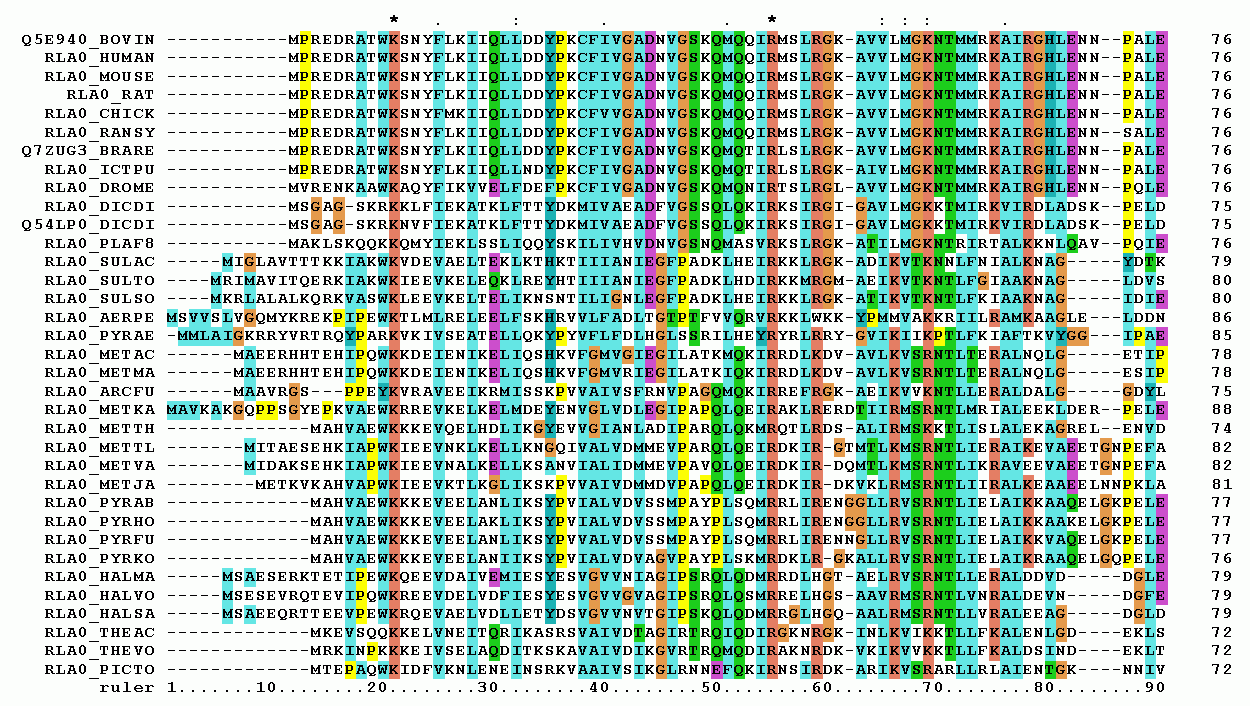

Some of these issues, namely comparing more than two versions, are addressed by bioinformatics in sequence alignment of DNA, RNA or protein. Recent developments in textual collation make use of this research, even if that research does not pursue the complexity of textual variation.

Some of these issues, namely comparing more than two versions, are addressed by bioinformatics in sequence alignment of DNA, RNA or protein. Recent developments in textual collation make use of this research, even if that research does not pursue the complexity of textual variation.

It is fundamental to bear in mind that "the assessment of findings in the Humanities [and not only] is based on interpretation. While it certainly can be supported by computational means, it is not necessarily computable" CollateX Doc: Alignment.

Common issues: past and present of semi-automatic collation¶

Since the 1960's, scholars tried to use machines to perform collation. The quotes listed below show that some of the issues encountered by scholars are the same over time (Note: this section is not concerned with the algorithms that have been used nor with technical details).

Froger 1968: La collation des manuscrits est une opération qui relève en quelque sorte de la comptabilité: la machine peut fort bien l'exécuter.

It's funny to notice that in the Preface of this book Robert Marichal, eminent archiviste paléographe and Froger's supervisor, wrote

Pour les collations l'emploi d'un ordinateur est dans la plupart de cas irréalisable.

Collaboration¶

Dearing 1970: ... a student introduced me to a professional programmer in the aircraft industry. [...] He did the programming in his spare time over a couple of months and charged it to experience. [...] Mr. Bandat [the second programmer] also worked in his spare time. [...] We paid him a lump sum on delivery, but it did not fully compensate him for his time.

Petty and Gibson 1970: Since one of the most tirying difficulties of our first attempt had been the uncertainty of our communications with the programmers, Mr. Petty proposed that a second effort be made in which he would be the programmer. We would thus be certain that decisions made by us as textual scholars would not be circumvented when they were translated into machine code.

Rigorous re-thinking¶

Michel 1982: Les progrès réalisés sont largement déterminés par la rigueur méthodologique des concepts que l'informatique impose à celui qui en use.

Robinson 1989: Along the way, I learnt several computer languages and found myself re-thinking some of the fundamental notions of textual criticism.

Modularity¶

Gilbert 1979: The program is modular in design, that is, it consists of several steps each doing a simple task [...] The COLLATE system is modular, i.e., it is composed of a series of programs each of which performs a simple procedure. Such an approach allows the editor to correct errors in the data as they are perceived. It also means that less time is spent waiting for computer resources to become available.

Waite 1979: ... separating as much as possible the different steps in the program so that they can be altered independently to meet varying conditions.

How to¶

Raben 1979: If we consider this problem [that of the alignment] in terms of points on a series of arrays rather than on several linear formats.

Dearing 1970: It is not as difficult to compare texts by computer as it is to record the results of the comparison in a way that will be easy and unambiguous to read. My desires as to the print-out determined how Mr. Bland [the programmer] chose to do the comparison.

Future in the past¶

Ott 1973: By means of this new tool [Tustep], which we have in electronic data processing, new and higher standards are imposed not only on the results of others sciences, but also on critical editions - standards which can scarcely be satisfied by traditional methods. This is , in my view, the main reason why electronic data processing should be employed in the preparation of critical editions, especially in large and complex projects. The question whether it is possible or not to save time and / or money by these methods is only of secondary importance. The expenses necessary for future critical editions may possibly be even higher than they have been in the past when these tools were not yet available.

Raben 1979: We who believe in bringing to the study of literature every tool that can increase our aesthetic responses and intellectual comprehension are accused of restricting our field of vision, of reducing the greatness of art to the trivial level of counting or alphabetizing. It may be many years before the true range and basis of our efforts will be generally recognized.

Collation tools¶

Tools commonly used for the machine-assisted collation and visualization of witnesses with variation include the following:

Collation:

- Collate

- TuStep

- MVD format

- TEIComparator

- Juxta

- CollateX

- Traviz

Visualization:

- Versioning machine

- CATview

Where we were able, we’ve used the tools to collate or compare the first paragraph of Charles Darwin’s Origin of species according to the six editions published during his lifetime. All tools listed below, except the original Collate, are open source.

Collate¶

[Collate](http://www.textualcommunities.usask.ca/web/textual-community/wiki/-/wiki/Main/The+background+to+the+Textual+Communities+Project) was an automated collation tool that ran on early Macintosh computers. It is no longer distributed or maintained, but it is historically important as one of the first tools largely distributed designed specifically to perform the sort of alignment, collation, and reporting undertaken by textual scholars in the preparation of critical editions.

[Collate](http://www.textualcommunities.usask.ca/web/textual-community/wiki/-/wiki/Main/The+background+to+the+Textual+Communities+Project) was an automated collation tool that ran on early Macintosh computers. It is no longer distributed or maintained, but it is historically important as one of the first tools largely distributed designed specifically to perform the sort of alignment, collation, and reporting undertaken by textual scholars in the preparation of critical editions.

TUSTEP¶

TUSTEP (the TUebingen System of Text Processing Programs, was developed to support the processing of textual data for scholarly research. With the COLLATE feature of TUSTEP, “a listing of the differences between one or several text versions and a basic printing in lines synoptic to the basic text. The differences must be provided in form of correcting instructions containing a correction key, as generated by the TUSTEP programs COMPARE or PRESORT.” (http://www.tustep.uni-tuebingen.de/pdf/hdb93_eng.pdf, p. 66) The English-language documentation does not appear to have kept pace with the German. TXSTEP (http://www.txstep.de/) is an XML-oriented front end to TUSTEP. License (both TUSTEP and TXSTEP): revised BSD.

MVD (Multi-Version Document) format¶

A multi-version document is for recording texts that exist in multiple versions. It is for recording the non-linear structure of a text and it represents a text as a set of merged versions in a single digital entity, which can be edited, and its versions listed, compared and searched. Versions can overlap freely, and this overcomes the limitation of markup languages. This form is equivalent to a 'graph', a set of intermingling paths that start at one point, branch, rejoin and split again, until they all join back together at the end. The MVD format needs a suite of tools in order to be processed, as exemplified by the technical doc of this edition.

TEIComparator¶

http://tei-comparator.sourceforge.net/

Juxta¶

[Juxta](http://www.juxtasoftware.org/) supports the collation, comparison, and visualization of textual variation (in plain text or XML sources) as either a desktop application or a web service. Visualizations include a heat map (image above), histogram, side-by-side synoptic view, TEI parallel segmentation, an experimental critical edition layout with apparatus criticus, and output for the Versioning machine (see below). License: Apache.

[Juxta](http://www.juxtasoftware.org/) supports the collation, comparison, and visualization of textual variation (in plain text or XML sources) as either a desktop application or a web service. Visualizations include a heat map (image above), histogram, side-by-side synoptic view, TEI parallel segmentation, an experimental critical edition layout with apparatus criticus, and output for the Versioning machine (see below). License: Apache.

CollateX¶

CollateX is available in Java (http://collatex.net) and Python (https://pypi.python.org/pypi/collatex) versions. The Python version, which is the focus of this workshop, provides hooks for user modification of the Tokenization and Normalization stages of the Gothenburg collation model, and supports output as a plain text table, HTML, SVG variant graph, GraphML, generic XML, and TEI parallel segmentation XML. The materials for this workshop provide tutorial information on all aspects of using the Python version of CollateX.

CollateX is available in Java (http://collatex.net) and Python (https://pypi.python.org/pypi/collatex) versions. The Python version, which is the focus of this workshop, provides hooks for user modification of the Tokenization and Normalization stages of the Gothenburg collation model, and supports output as a plain text table, HTML, SVG variant graph, GraphML, generic XML, and TEI parallel segmentation XML. The materials for this workshop provide tutorial information on all aspects of using the Python version of CollateX.

TRAViz¶

[TRAViz](http://www.traviz.vizcovery.org/index.html) is a JavaScript library that generates visualizations for Text Variant Graphs that show the variations among different editions of texts. TRAViz supports the collation task by providing methods to align various editions of a text, visualize the alignment, improve the readability for Text Variant Graphs compared to other approaches, and interact with the graph to discover how individual editions disseminate. (paraphrased from the TRAViz splash page). The image of the Darwin texts, above, is a static screen shot, but the TRAViz interface responds to mouse events to provide additional information about particularly moments in the visualization. License: FAL.

[TRAViz](http://www.traviz.vizcovery.org/index.html) is a JavaScript library that generates visualizations for Text Variant Graphs that show the variations among different editions of texts. TRAViz supports the collation task by providing methods to align various editions of a text, visualize the alignment, improve the readability for Text Variant Graphs compared to other approaches, and interact with the graph to discover how individual editions disseminate. (paraphrased from the TRAViz splash page). The image of the Darwin texts, above, is a static screen shot, but the TRAViz interface responds to mouse events to provide additional information about particularly moments in the visualization. License: FAL.

Versioning machine¶

The [Versioning machine](http://v-machine.org/) does not perform collation, but it takes texts whose variants have already been collated and tagged with TEI parallel segmentation or location referenced markup and displays the witnesses in a way that supports the identification, highlighting, and exploration of variation. The visualization above was captured from the Versioning machine output of Juxta after Juxta performed the collation and alignment. License: GPL.

The [Versioning machine](http://v-machine.org/) does not perform collation, but it takes texts whose variants have already been collated and tagged with TEI parallel segmentation or location referenced markup and displays the witnesses in a way that supports the identification, highlighting, and exploration of variation. The visualization above was captured from the Versioning machine output of Juxta after Juxta performed the collation and alignment. License: GPL.

CATview¶

[CATview](http://catview.uzi.uni-halle.de/) is an interactive visualization tool for textual variation. It consists of CSS and JavaScript libraries. It allows to view the differences at different granularity, immediately spot the degree of dissimilarity, navigate through the text, perform searches.

[CATview](http://catview.uzi.uni-halle.de/) is an interactive visualization tool for textual variation. It consists of CSS and JavaScript libraries. It allows to view the differences at different granularity, immediately spot the degree of dissimilarity, navigate through the text, perform searches.

Bibliography¶

Dearing, Vinton. 1970. ‘Computer Aids to Editing the Text of Dryden’. In Art and Error: Modern Textual Editing, edited by Ronald Gottesman and Scott Bennett, 254–78. Bloomington: Indiana University Press.

Froger, Jacques. 1968. La critique des textes et son automatisation.

Gilbert, Penny. 1979. ‘Preparation of prose-text edition’. In La Pratique des ordinateurs dans la critique des textes: Paris, [Colloque international], 29-31 mars 1978 (eds.) Irigoin, Jean, and Gian Piero Zarri. Paris: Ed. du C.N.R.S.

Michel, Jacques-Henri. 1982. ‘Review: La pratique des ordinateurs dans la critique des textes. Actes du colloque de Paris (29-31 mars 1978), publiés par Jean Irigoin et Gian Piero Zarri’. L’antiquité classique 51 (1): 509–11.

Ott, Wilhelm. 1973. ‘Computer Applications in Textual Criticism’. In The Computer and Literary Studies, edited by A.J. Aitken, R.W. Bailey, and N. Hamilton-Smith, 199–223. Edinburgh: Edinburgh University Press.

Petty, George R., and William M. Gibson. 1970. Project Occult: The Ordered Computer Collation of Unprepared Literary Text. New York: New York University Press.

Raben, Joseph. 1979. De acibus et faeni acervis: text comparison as a means of collation. In La Pratique des ordinateurs dans la critique des textes: Paris, [Colloque international], 29-31 mars 1978 (eds.) Irigoin, Jean, and Gian Piero Zarri. Paris: Ed. du C.N.R.S.

Robinson, P. M. W. 1989. ‘The Collation and Textual Criticism of Icelandic Manuscripts (1): Collation’. Literary and Linguistic Computing 4 (2): 99–105.

Waite, Stephen V.f. 1979. ‘Two programs for comparing texts’. In La Pratique des ordinateurs dans la critique des textes: Paris, [Colloque international], 29-31 mars 1978 (eds.) Irigoin, Jean, and Gian Piero Zarri. Paris: Ed. du C.N.R.S.