Tutorial¶

This notebook gets you started with using Text-Fabric for coding in the Hebrew Bible.

Chances are that a bit of reading about the underlying data model helps you to follow the exercises below, and vice versa.

Installing Text-Fabric¶

Python¶

You need to have Python on your system. Most systems have it out of the box, but alas, that is Python 2, and we need at least Python 3.6.

Install it from python.org or from Anaconda.
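A quick way to confirm that the interpreter you are running is recent enough is to ask Python itself. This is only a minimal sketch; the name MIN_VERSION is chosen for this example and is not part of Text-Fabric.

```python
import sys

# Minimum version named in this tutorial (3.6).
# MIN_VERSION is a name made up for this sketch.
MIN_VERSION = (3, 6)

current = sys.version_info[:2]
ok = current >= MIN_VERSION
print('Python {}.{} is {}'.format(
    current[0], current[1], 'recent enough' if ok else 'too old',
))
```

Run it with the same interpreter that you will use for Jupyter, since a system may have several Pythons installed.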

Jupyter notebook¶

You need Jupyter.

If it is not already installed:

pip3 install jupyter

TF itself¶

pip3 install text-fabric

%load_ext autoreload

%autoreload 2

import sys, os, collections

from IPython.display import HTML

from tf.fabric import Fabric

from tf.extra.bhsa import Bhsa

Call Text-Fabric¶

Everything starts by calling up Text-Fabric. It needs to know where to look for data.

The Hebrew Bible is in the same repository as this tutorial.

I assume you have cloned bhsa and phono in your directory ~/github/etcbc, so that your directory structure looks like this:

your home directory\
| - github\
| | - etcbc\
| | | - bhsa
| | | - phono

Tip¶

If you start computing with this tutorial, first copy its parent directory to somewhere else, outside your bhsa directory.
If you pull changes from the bhsa repository later, your work will not be overwritten.
Where you put your tutorial directory is up to you.
It will work from any directory.

VERSION = '2017'

DATABASE = '~/github/etcbc'

BHSA = f'bhsa/tf/{VERSION}'

PHONO = f'phono/tf/{VERSION}'

TF = Fabric(locations=[DATABASE], modules=[BHSA, PHONO], silent=False )

This is Text-Fabric 5.4.2
Api reference : https://dans-labs.github.io/text-fabric/Api/General/
Tutorial : https://github.com/Dans-labs/text-fabric/blob/master/docs/tutorial.ipynb
Example data : https://github.com/Dans-labs/text-fabric-data

118 features found and 0 ignored

Note that we have added a module phono.

The BHSA data has a special 1-1 transcription from Hebrew to ASCII,

but not a phonetic transcription.

I have made a notebook that tries hard to find phonological representations for all the words. The result is a module in text-fabric format. We'll encounter that later.

NB: This is a real-world example of how to add data to an existing data source as a module.

Load Features¶

The data of the BHSA is organized in features. They are columns of data. Think of the Hebrew Bible as a gigantic spreadsheet, where row 1 corresponds to the first word, row 2 to the second word, and so on, for all 425,000 words.

The part of speech of each word constitutes one column in that spreadsheet. The BHSA contains over 100 columns, not only for the 425,000 words, but also for a million more textual objects.

Instead of putting that information in one big table, the data is organized in separate columns. We call those columns features.
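To make the spreadsheet picture concrete, here is a toy model in plain Python in which each feature is a separate mapping from row number (node) to value. The data and the variable names are invented for illustration; this is not how Text-Fabric stores features internally.

```python
# Toy model: each feature is its own column,
# a mapping from node number to value.
# The values below are made up for illustration.
sp = {1: 'prep', 2: 'subs', 3: 'verb'}          # part-of-speech column
gloss = {1: 'in', 2: 'beginning', 3: 'create'}  # gloss column

features = {'sp': sp, 'gloss': gloss}

def row(node):
    """Collect the values of all columns for one node, like reading a spreadsheet row."""
    return {name: column.get(node) for (name, column) in features.items()}

print(row(3))
```

Keeping the columns separate means that you only need to load the columns you actually use.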

We just load the features we need for this tutorial. Later on, where we use them, it will become clear what they mean.

api = TF.load('''

sp lex voc_lex_utf8

g_word trailer

g_lex_utf8

qere qere_trailer

language freq_lex gloss

mother

''')

api.makeAvailableIn(globals())

0.00s loading features ...
0.67s All features loaded/computed - for details use loadLog()

The result of this all is that we have a bunch of special variables at our disposal that give us access to the text and data of the Hebrew Bible.

At this point it is helpful to throw a quick glance at the text-fabric API documentation.

The most essential thing for now is that we can use F to access the data in the features

we've loaded.

But there is more, such as N, which helps us to walk over the text, as we see in a minute.

More power¶

There are extra functions on top of Text-Fabric that know about the Hebrew Bible. Let's acquire additional power.

B = Bhsa(api, 'start', version=VERSION)

Documentation: BHSA Feature docs BHSA API Text-Fabric API 5.4.2 Search Reference

A few things to note:

- You supply the api as first argument to Bhsa()
- You supply the plain name of the notebook that you are writing as the second argument
- You supply the version of the BHSA data as the third argument

The result is that you have a few handy links to

- the data provenance and documentation

- the BHSA API and the Text-Fabric API

- the online versions of this notebook on GitHub and NBViewer.

Search¶

Text-Fabric contains a flexible search engine that works not only for the BHSA data, but also for data that you add to it.

Search is the quickest way to get up to speed with your data, without too much programming.

Jump to the dedicated search tutorial first, to whet your appetite. And if you already know MQL queries, you can build from that in searchFromMQL.

The real power of search lies in the fact that it is integrated in a programming environment. You can use programming to:

- compose dynamic queries

- process query results

Therefore, the rest of this tutorial is still important when you want to tap that power. If you continue here, you learn all the basics of data-navigation with Text-Fabric.

Counting¶

In order to get acquainted with the data, we start with the simple task of counting.

Count all nodes¶

We use the N() generator to walk through the nodes.

We compared the BHSA data to a gigantic spreadsheet, where the rows correspond to the words.

In Text-Fabric, we call the rows slots, because they are the textual positions that can be filled with words.

We also mentioned that there are 1,000,000 more textual objects. They are the phrases, clauses, sentences, verses, chapters and books. They also correspond to rows in the big spreadsheet.

In Text-Fabric we call all these rows nodes, and the N() generator

carries us through those nodes in the textual order.
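As a rough illustration of what walking in textual order can mean when nodes cover ranges of slots, the sketch below sorts toy nodes by their first slot and puts larger, embedding nodes before the nodes they contain. The data and the function walk() are invented for this example, and the sort key is an assumption about the ordering, not a copy of how N() is implemented.

```python
# Toy nodes: node number -> (first slot, last slot). Invented for this sketch.
spans = {
    1: (1, 1), 2: (2, 2), 3: (3, 3), 4: (4, 4),  # four words (slots)
    5: (1, 2),                                    # a phrase covering slots 1-2
    6: (3, 4),                                    # a phrase covering slots 3-4
    7: (1, 4),                                    # a sentence covering everything
}

def walk():
    """Yield nodes by first slot; among nodes that start together, the longest comes first."""
    for n in sorted(spans, key=lambda n: (spans[n][0], -spans[n][1])):
        yield n

print(list(walk()))
```

In this toy ordering, the sentence comes first, then its first phrase, then the words of that phrase, and so on.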

Just one extra thing: the info statements generate timed messages.

If you use them instead of print you'll get a sense of the amount of time that

the various processing steps typically need.

indent(reset=True)

info('Counting nodes ...')

i = 0

for n in N(): i += 1

info('{} nodes'.format(i))

0.00s Counting nodes ...
0.30s 1446635 nodes

Here you see it: 1.4 M nodes!
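If you want to see the idea of timed messages outside Text-Fabric, the following stand-in shows it in a few lines. The functions reset() and info() here are simplified imitations written for this sketch; they are not Text-Fabric's own indent and info, and they do not support indentation levels.

```python
import time

_start = time.time()

def reset():
    """Restart the clock, in the spirit of indent(reset=True)."""
    global _start
    _start = time.time()

def info(msg):
    """Print msg prefixed by the seconds elapsed since the last reset."""
    line = '{:>6.2f}s {}'.format(time.time() - _start, msg)
    print(line)
    return line

reset()
info('Counting ...')
count = sum(1 for _ in range(1000000))
info('{} items'.format(count))
```

The elapsed times will differ from machine to machine, which is exactly why such messages are useful.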

What are those million nodes?¶

Every node has a type, such as word, phrase, or sentence. We know that we have approximately 425,000 words and a million other nodes. But what exactly are they?

Text-Fabric has two special features, otype and oslots, that must occur in every Text-Fabric data set.

otype tells you for each node its type, and you can ask for the number of slots in the text.

Here we go!

F.otype.slotType

'word'

F.otype.maxSlot

426584

F.otype.maxNode

1446635

F.otype.all

('book',

'chapter',

'lex',

'verse',

'half_verse',

'sentence',

'sentence_atom',

'clause',

'clause_atom',

'phrase',

'phrase_atom',

'subphrase',

'word')

C.levels.data

(('book', 10938.051282051281, 426585, 426623),

('chapter', 459.18622174381056, 426624, 427552),

('lex', 46.2021011588866, 1437403, 1446635),

('verse', 18.37694395381898, 1414190, 1437402),

('half_verse', 9.441876936697653, 606323, 651502),

('sentence', 6.695609863288914, 1172209, 1235919),

('sentence_atom', 6.615141270973544, 1235920, 1300405),

('clause', 4.841988172665464, 427553, 515653),

('clause_atom', 4.704849507549438, 515654, 606322),

('phrase', 1.6848574373881755, 651503, 904689),

('phrase_atom', 1.5945932812248849, 904690, 1172208),

('subphrase', 1.4240578640230612, 1300406, 1414189),

('word', 1, 1, 426584))

This is interesting: above you see all the textual objects, with the average size of their objects, the node where they start, and the node where they end.
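The start and end nodes in this table already tell you how many nodes each type has, because (as the ranges above suggest) the nodes of one type occupy an unbroken range of numbers. The sketch below works this out for a few rows copied from the output above; the variable names are ours.

```python
# A few rows copied from the C.levels.data output above:
# (type, average number of slots per node, first node, last node)
levels = (
    ('book', 10938.051282051281, 426585, 426623),
    ('chapter', 459.18622174381056, 426624, 427552),
    ('lex', 46.2021011588866, 1437403, 1446635),
    ('verse', 18.37694395381898, 1414190, 1437402),
    ('word', 1, 1, 426584),
)

counts = {}
for (otype, avg_slots, first, last) in levels:
    # an unbroken range first..last holds last - first + 1 nodes
    counts[otype] = last - first + 1

for (otype, n) in counts.items():
    print('{:<8} {:>7}'.format(otype, n))

# average size times number of nodes gives the number of slots covered
book_slots = round(levels[0][1] * counts['book'])
print(book_slots)
```

The counts agree with the ones found by walking the nodes in the next section, and the books together cover all 426584 slots.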

Count individual object types¶

This is an intuitive way to count the number of nodes in each type.

Note in passing, how we use the indent in conjunction with info to produce neat timed

and indented progress messages.

indent(reset=True)
info('counting objects ...')
for otype in F.otype.all:
    i = 0
    indent(level=1, reset=True)
    for n in F.otype.s(otype): i+=1
    info('{:>7} {}s'.format(i, otype))
indent(level=0)
info('Done')

0.00s counting objects ...
| 0.00s      39 books
| 0.00s     929 chapters
| 0.00s    9233 lexs
| 0.01s   23213 verses
| 0.01s   45180 half_verses
| 0.01s   63711 sentences
| 0.01s   64486 sentence_atoms
| 0.01s   88101 clauses
| 0.01s   90669 clause_atoms
| 0.04s  253187 phrases
| 0.04s  267519 phrase_atoms
| 0.01s  113784 subphrases
| 0.06s  426584 words
0.24s Done

Viewing textual objects¶

We use the BHSA API (the extra power) to peek into the corpus.

First a word. Node 100,000 is a slot. Let's see what it is and where it is.

Note

- if you click on the word you go to a page in SHEBANQ that shows a list of all occurrences of this lexeme;

- if you hover on the part-of-speech (prep here), you see the passage, and if you click on it, you go to SHEBANQ, to exactly this verse.

Let us do the same for more complex objects, such as phrases, sentences, etc.

phraseShow = 700001

B.pretty(phraseShow)

clauseShow = 500002

B.pretty(clauseShow)

sentenceShow = 1200001

B.pretty(sentenceShow)

verseShow = 1420000

B.pretty(verseShow)

chapterShow = 427000

print(F.otype.v(chapterShow))

B.pretty(chapterShow)

chapter

If you need a link to SHEBANQ for just any node:

million = 1000000

B.shbLink(million)

Feature statistics¶

F gives access to all features.
Every feature has a method freqList() to generate a frequency list of its values, higher frequencies first.

Here are the parts of speech:

F.sp.freqList()

(('subs', 125558),

('verb', 75450),

('prep', 73298),

('conj', 62737),

('nmpr', 35696),

('art', 30387),

('adjv', 10075),

('nega', 6059),

('prps', 5035),

('advb', 4603),

('prde', 2678),

('intj', 1912),

('inrg', 1303),

('prin', 1026))
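What freqList() delivers can be imitated in plain Python with a Counter. The function freq_list() below is a hypothetical stand-in written for this sketch, applied to made-up values; in particular, breaking ties alphabetically is our choice and may differ from what Text-Fabric does.

```python
import collections

def freq_list(values):
    """Return (value, count) pairs, highest counts first, ties broken by value."""
    counter = collections.Counter(values)
    return tuple(sorted(counter.items(), key=lambda x: (-x[1], x[0])))

# made-up part-of-speech values for a handful of words
sample = ['subs', 'verb', 'prep', 'subs', 'conj', 'subs', 'verb']
print(freq_list(sample))
```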

verbs = collections.Counter()
indent(reset=True)
info('Collecting data')
for w in F.otype.s('word'):
    if F.sp.v(w) != 'verb': continue
    verbs[F.lex.v(w)] += 1
info('Done')
# print the ten most frequent verbs
print(''.join(
    '{}: {}\n'.format(verb, cnt) for (verb, cnt) in sorted(
        verbs.items(), key=lambda x: (-x[1], x[0]))[0:10]
))

0.00s Collecting data
0.33s Done
>MR[: 5378
HJH[: 3561
<FH[: 2629
BW>[: 2570
NTN[: 2017
HLK[: 1554
R>H[: 1298
CM<[: 1168
DBR[: 1138
JCB[: 1082

Method 2: counting lexemes¶

An alternative way to do this is to use the feature freq_lex, defined for lex nodes.

Now we walk the lexemes instead of the occurrences.

Note that the feature sp (part-of-speech) is defined for nodes of type word as well as lex.

Both also have the lex feature.

verbs = collections.Counter()
indent(reset=True)
info('Collecting data')
for w in F.otype.s('lex'):
    if F.sp.v(w) != 'verb': continue
    verbs[F.lex.v(w)] += F.freq_lex.v(w)
info('Done')
# print the ten most frequent verbs
print(''.join(
    '{}: {}\n'.format(verb, cnt) for (verb, cnt) in sorted(
        verbs.items(), key=lambda x: (-x[1], x[0]))[0:10]
))

0.00s Collecting data
0.01s Done
>MR[: 5378
HJH[: 3561
<FH[: 2629
BW>[: 2570
NTN[: 2017
HLK[: 1554
R>H[: 1298
CM<[: 1168
DBR[: 1138
JCB[: 1082

This is an order of magnitude faster. In this case, that means the difference between a third of a second and a hundredth of a second, not a big gain in absolute terms. But suppose you need to run this 1000 times in a loop. Then it is the difference between more than 5 minutes and 10 seconds. A five minute wait is not pleasant in interactive computing!
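The arithmetic behind that estimate, using the two timings reported above (0.33s and 0.01s), can be checked in a few lines. The variable names are ours.

```python
runs = 1000
per_run_words = 0.33    # seconds per run when walking all words
per_run_lexemes = 0.01  # seconds per run when walking the lexemes

total_words = runs * per_run_words
total_lexemes = runs * per_run_lexemes

print('walking words:   {:.1f} minutes'.format(total_words / 60))
print('walking lexemes: {:.0f} seconds'.format(total_lexemes))
print('speed-up:        {:.0f}x'.format(per_run_words / per_run_lexemes))
```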

A frequency mapping of lexemes¶

We make a mapping between lexeme forms and the number of occurrences of those lexemes.

lexeme_dict = {

F.g_lex_utf8.v(n): F.freq_lex.v(n)

for n in F.otype.s('word')

}

list(lexeme_dict.items())[0:10]

[('בְּ', 15542),

('רֵאשִׁית', 51),

('בָּרָא', 48),

('אֱלֹה', 2601),

('אֵת', 10997),

('הַ', 30386),

('שָּׁמַי', 421),

('וְ', 50272),

('הָ', 30386),

('אָרֶץ', 2504)]

Real work¶

As a primer of real world work on lexeme distribution, have a look at James Cuénod's notebook on Collocation MI Analysis of the Hebrew Bible.

It is a nice example of how you collect data with TF API calls, then do research with your own methods and tools, and then use TF for presenting results.

In case the name has changed, the enclosing repo is here.

indent(reset=True)
hapax = []
zero = set()
for l in F.otype.s('lex'):
    occs = L.d(l, otype='word')
    n = len(occs)
    if n == 0: # that's weird: should not happen
        zero.add(l)
    elif n == 1: # hapax found!
        hapax.append(l)
info('{} hapaxes found'.format(len(hapax)))
if zero:
    error('{} zeroes found'.format(len(zero)), tm=False)
else:
    info('No zeroes found', tm=False)
for h in hapax[0:10]:
    print('\t{:<8} {}'.format(F.lex.v(h), F.gloss.v(h)))

0.17s 3072 hapaxes found
No zeroes found
    PJCWN/   Pishon
    CWP[     bruise
    HRWN/    pregnancy
    Z<H/     sweat
    LHV/     flame
    NWD/     Nod
    XNWK=/   Enoch
    MXWJ>L/  Mehujael
    MXJJ>L/  Mehujael
    JBL=/    Jabal

Small occurrence base¶

The occurrence base of a lexeme consists of the verses, chapters and books in which it occurs. Let's look for lexemes that occur in a single chapter.

If a lexeme occurs in a single chapter, its slots are a subset of the slots of that chapter. So, if you go up from the lexeme, you encounter the chapter.

Normally, lexemes occur in many chapters, and then none of them totally includes all occurrences of it, so if you go up from such lexemes, you do not find chapters.
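The subset argument is easy to try out on a toy corpus. In the sketch below, chapters and lexemes are plain sets of slot numbers, and embedding_chapters() is a hypothetical helper that mimics going up from a lexeme to its chapters. All names and data are invented for illustration.

```python
# Toy corpus of 10 slots: two chapters and three lexemes, each a set of slots.
chapters = {'chapter 1': set(range(1, 6)), 'chapter 2': set(range(6, 11))}
lexemes = {
    'A': {1, 3, 7},   # occurs in both chapters
    'B': {2, 4},      # occurs only in chapter 1
    'C': {9},         # a hapax in chapter 2
}

def embedding_chapters(lex_slots):
    """Chapters whose slots include every slot of the lexeme."""
    return [name for (name, ch_slots) in chapters.items() if lex_slots <= ch_slots]

for (lex, slots) in lexemes.items():
    print(lex, embedding_chapters(slots))
```

Lexeme A is embedded in no chapter at all, while B and C each have exactly one embedding chapter. C is a hapax, which is why hapaxes show up among the single chapter lexemes unless you exclude them.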

Let's check it out.

Oh yes, we have already found the hapaxes, we will skip them here.

indent(reset=True)
info('Finding single chapter lexemes')
singleCh = []
multiple = []
for l in F.otype.s('lex'):
    chapters = L.u(l, 'chapter')
    if len(chapters) == 1:
        if l not in hapax:
            singleCh.append(l)
    elif len(chapters) > 0: # should not happen
        multiple.append(l)
info('{} single chapter lexemes found'.format(len(singleCh)))
if multiple:
    error('{} chapter embedders of multiple lexemes found'.format(len(multiple)), tm=False)
else:
    info('No chapter embedders of multiple lexemes found', tm=False)
for s in singleCh[0:10]:
    print('{:<20} {:<6}'.format(
        '{} {}:{}'.format(*T.sectionFromNode(s)),
        F.lex.v(s),
    ))

0.00s Finding single chapter lexemes
0.16s 450 single chapter lexemes found
No chapter embedders of multiple lexemes found
Genesis 4:1          QJN=/
Genesis 4:2          HBL=/
Genesis 4:18         <JRD/
Genesis 4:18         MTWC>L/
Genesis 4:19         YLH/
Genesis 4:22         TWBL_QJN/
Genesis 10:11        KLX=/
Genesis 14:1         >MRPL/
Genesis 14:1         >RJWK/
Genesis 14:1         >LSR/

Confined to books¶

As a final exercise with lexemes, let's make a list of all books, and show their total number of lexemes and the number of lexemes that occur exclusively in that book.

indent(reset=True)
info('Making book-lexeme index')
allBook = collections.defaultdict(set)
allLex = set()
for b in F.otype.s('book'):
    for w in L.d(b, 'word'):
        l = L.u(w, 'lex')[0]
        allBook[b].add(l)
        allLex.add(l)
info('Found {} lexemes'.format(len(allLex)))

0.00s Making book-lexeme index
4.48s Found 9233 lexemes

indent(reset=True)
info('Finding single book lexemes')
singleBook = collections.defaultdict(lambda:0)
for l in F.otype.s('lex'):
    book = L.u(l, 'book')
    if len(book) == 1:
        singleBook[book[0]] += 1
info('found {} single book lexemes'.format(sum(singleBook.values())))

0.00s Finding single book lexemes
0.05s found 4226 single book lexemes

print('{:<20}{:>5}{:>5}{:>5}\n{}'.format(
    'book', '#all', '#own', '%own',
    '-'*35,
))
booklist = []
for b in F.otype.s('book'):
    book = T.bookName(b)
    a = len(allBook[b])
    o = singleBook.get(b, 0)
    p = 100 * o / a
    booklist.append((book, a, o, p))
for x in sorted(booklist, key=lambda e: (-e[3], -e[1], e[0])):
    print('{:<20} {:>4} {:>4} {:>4.1f}%'.format(*x))

book                 #all #own %own
-----------------------------------
Daniel               1121  428 38.2%
1_Chronicles         2015  488 24.2%
Ezra                  991  199 20.1%
Joshua               1175  206 17.5%
Esther                472   67 14.2%
Isaiah               2553  350 13.7%
Numbers              1457  197 13.5%
Ezekiel              1718  212 12.3%
Song_of_songs         503   60 11.9%
Job                  1717  202 11.8%
Genesis              1817  208 11.4%
Nehemiah             1076  110 10.2%
Psalms               2251  216  9.6%
Leviticus             960   89  9.3%
Judges               1210   99  8.2%
Ecclesiastes          575   46  8.0%
Proverbs             1356  103  7.6%
Jeremiah             1949  147  7.5%
2_Samuel             1304   89  6.8%
1_Samuel             1256   85  6.8%
2_Kings              1266   85  6.7%
Exodus               1425   92  6.5%
1_Kings              1291   81  6.3%
Deuteronomy          1449   80  5.5%
Lamentations          592   31  5.2%
2_Chronicles         1411   67  4.7%
Nahum                 357   16  4.5%
Hosea                 742   33  4.4%
Ruth                  319   14  4.4%
Habakkuk              393   17  4.3%
Amos                  652   27  4.1%
Joel                  398   14  3.5%
Zechariah             726   25  3.4%
Obadiah               167    5  3.0%
Micah                 586   16  2.7%
Zephaniah             367   10  2.7%
Jonah                 252    5  2.0%
Haggai                208    3  1.4%
Malachi               314    4  1.3%

The book names in this table are the English ones. Later we'll see that you can also get them in other languages, such as Swahili.
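The bookkeeping behind such a table can be followed on a toy example without any Text-Fabric calls. Below, each book is simply a set of lexemes, and a lexeme counts as "own" when it occurs in exactly one book. The book names and lexemes are invented; only the sort key is the same as in the cell above.

```python
import collections

# Toy data: the lexemes occurring in each book (invented for illustration).
book_lexemes = {
    'Book_A': {'x', 'y', 'z', 'w'},
    'Book_B': {'x', 'y'},
    'Book_C': {'x', 'q'},
}

# count in how many books each lexeme occurs
book_count = collections.Counter(
    lex for lexs in book_lexemes.values() for lex in lexs
)

rows = []
for (book, lexs) in book_lexemes.items():
    own = sum(1 for lex in lexs if book_count[lex] == 1)
    rows.append((book, len(lexs), own, 100 * own / len(lexs)))

# highest percentage first, then largest book, then name
ranked = sorted(rows, key=lambda e: (-e[3], -e[1], e[0]))
for r in ranked:
    print('{:<10} {:>4} {:>4} {:>5.1f}%'.format(*r))
```

Book_A and Book_C both have 50% own lexemes; Book_A is listed first because it has more lexemes in total.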

Locality API¶

We travel upwards and downwards, forwards and backwards through the nodes.

The Locality-API (L) provides functions: u() for going up, and d() for going down,

n() for going to next nodes and p() for going to previous nodes.

These directions are indirect notions: nodes are just numbers, but by means of the oslots feature they are linked to slots. One node contains another node if the one is linked to a set of slots that contains the set of slots that the other is linked to.

And one node is next or previous to another if its slots follow or precede the slots of the other one.

L.u(node) Up is going to nodes that embed node.

L.d(node) Down is the opposite direction, to those that are contained in node.

L.n(node) Next are the next adjacent nodes, i.e. nodes whose first slot comes immediately after the last slot of node.

L.p(node) Previous are the previous adjacent nodes, i.e. nodes whose last slot comes immediately before the first slot of node.

All these functions yield nodes of all possible otypes. By passing an optional parameter, you can restrict the results to nodes of that type.

The results are ordered according to the order of things in the text.

The functions always return a tuple, even if there is just one node in the result.
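The four directions can be imitated on toy data where every node is linked to a set of slots. The functions up(), down(), nxt() and prev() below are simplified stand-ins written for this sketch, not the implementation of L. In particular, they return nodes in node-number order rather than in Text-Fabric's textual order, and they ignore the case of two nodes with identical slots.

```python
# Toy data: node -> (type, slots). Nodes 1-4 are words; the rest are larger objects.
nodes = {
    1: ('word', {1}), 2: ('word', {2}), 3: ('word', {3}), 4: ('word', {4}),
    5: ('phrase', {1, 2}),
    6: ('phrase', {3, 4}),
    7: ('sentence', {1, 2, 3, 4}),
}

def _select(test, node, otype):
    """All other nodes (optionally of one type) whose slots pass test(other, own)."""
    own = nodes[node][1]
    return tuple(
        m for (m, (mtype, mslots)) in sorted(nodes.items())
        if m != node and (otype is None or mtype == otype) and test(mslots, own)
    )

def up(node, otype=None):
    return _select(lambda other, own: own <= other, node, otype)

def down(node, otype=None):
    return _select(lambda other, own: other <= own, node, otype)

def nxt(node, otype=None):
    return _select(lambda other, own: min(other) == max(own) + 1, node, otype)

def prev(node, otype=None):
    return _select(lambda other, own: max(other) == min(own) - 1, node, otype)

print(up(1), down(7), nxt(5), prev(6))
print(up(1, otype='sentence')[0])
```

Note that even up(1, otype='sentence') yields a tuple, so you need the [0] to get at the single sentence node.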

Going up¶

We go from the first word to the book that contains it.

Note the [0] at the end. You expect one book, yet L returns a tuple.

To get the only element of that tuple, you need to do that [0].

If you are like me, you keep forgetting it, and that will lead to weird error messages later on.

firstBook = L.u(1, otype='book')[0]

print(firstBook)

426585

And let's see all the containing objects of word 3:

w = 3
for otype in F.otype.all:
    if otype == F.otype.slotType: continue
    up = L.u(w, otype=otype)
    upNode = 'x' if len(up) == 0 else up[0]
    print('word {} is contained in {} {}'.format(w, otype, upNode))

word 3 is contained in book 426585
word 3 is contained in chapter 426624
word 3 is contained in lex 1437405
word 3 is contained in verse 1414190
word 3 is contained in half_verse 606323
word 3 is contained in sentence 1172209
word 3 is contained in sentence_atom 1235920
word 3 is contained in clause 427553
word 3 is contained in clause_atom 515654
word 3 is contained in phrase 651504
word 3 is contained in phrase_atom 904691
word 3 is contained in subphrase x

Going next¶

Let's go to the next nodes of the first book.

afterFirstBook = L.n(firstBook)
for n in afterFirstBook:
    print('{:>7}: {:<13} first slot={:<6}, last slot={:<6}'.format(
        n, F.otype.v(n),
        E.oslots.s(n)[0],
        E.oslots.s(n)[-1],
    ))
secondBook = L.n(firstBook, otype='book')[0]

  28764: word          first slot=28764 , last slot=28764
 923447: phrase_atom   first slot=28764 , last slot=28764
 669484: phrase        first slot=28764 , last slot=28764
 521793: clause_atom   first slot=28764 , last slot=28768
 433543: clause        first slot=28764 , last slot=28768
 609323: half_verse    first slot=28764 , last slot=28771
1240568: sentence_atom first slot=28764 , last slot=28773
1176828: sentence      first slot=28764 , last slot=28792
1415723: verse         first slot=28764 , last slot=28777
 426674: chapter       first slot=28764 , last slot=29112
 426586: book          first slot=28764 , last slot=52511

Going previous¶

And let's see what is right before the second book.

for n in L.p(secondBook):
    print('{:>7}: {:<13} first slot={:<6}, last slot={:<6}'.format(
        n, F.otype.v(n),
        E.oslots.s(n)[0],
        E.oslots.s(n)[-1],
    ))

 426585: book          first slot=1     , last slot=28763
 426673: chapter       first slot=28259 , last slot=28763
1415722: verse         first slot=28746 , last slot=28763
 609322: half_verse    first slot=28754 , last slot=28763
1176827: sentence      first slot=28757 , last slot=28763
1240567: sentence_atom first slot=28757 , last slot=28763
 433542: clause        first slot=28757 , last slot=28763
 521792: clause_atom   first slot=28757 , last slot=28763
 669483: phrase        first slot=28762 , last slot=28763
 923446: phrase_atom   first slot=28762 , last slot=28763
  28763: word          first slot=28763 , last slot=28763

Going down¶

We go to the chapters of the second book, and just count them.

chapters = L.d(secondBook, otype='chapter')

print(len(chapters))

40

The first verse¶

We pick the first verse and the first word, and explore what is above and below them.

for n in [1, L.u(1, otype='verse')[0]]:
    indent(level=0)
    info('Node {}'.format(n), tm=False)
    indent(level=1)
    info('UP', tm=False)
    indent(level=2)
    info('\n'.join(['{:<15} {}'.format(u, F.otype.v(u)) for u in L.u(n)]), tm=False)
    indent(level=1)
    info('DOWN', tm=False)
    indent(level=2)
    info('\n'.join(['{:<15} {}'.format(u, F.otype.v(u)) for u in L.d(n)]), tm=False)
indent(level=0)
info('Done', tm=False)

Node 1
| UP
| | 1437403         lex
| | 904690          phrase_atom
| | 651503          phrase
| | 606323          half_verse
| | 515654          clause_atom
| | 427553          clause
| | 1235920         sentence_atom
| | 1172209         sentence
| | 1414190         verse
| | 426624          chapter
| | 426585          book
| DOWN
| |
Node 1414190
| UP
| | 426624          chapter
| | 426585          book
| DOWN
| | 1172209         sentence
| | 1235920         sentence_atom
| | 427553          clause
| | 515654          clause_atom
| | 606323          half_verse
| | 651503          phrase
| | 904690          phrase_atom
| | 1               word
| | 2               word
| | 651504          phrase
| | 904691          phrase_atom
| | 3               word
| | 651505          phrase
| | 904692          phrase_atom
| | 4               word
| | 606324          half_verse
| | 651506          phrase
| | 904693          phrase_atom
| | 1300406         subphrase
| | 5               word
| | 6               word
| | 7               word
| | 8               word
| | 1300407         subphrase
| | 9               word
| | 10              word
| | 11              word
Done

Text API¶

So far, we have mainly seen nodes and their numbers, and the names of node types. You would almost forget that we are dealing with text. So let's try to see some text.

In the same way as F gives access to feature data,

T gives access to the text.

That is also feature data, but you can tell Text-Fabric which features are specifically

carrying the text, and in return Text-Fabric offers you

a Text API: T.

Formats¶

Hebrew text can be represented in a number of ways:

- fully pointed (vocalized and accented), or consonantal,

- in transliteration, phonetic transcription or in Hebrew characters,

- showing the actual text or only the lexemes,

- following the ketiv or the qere, at places where they deviate from each other.

If you wonder where the information about text formats is stored:

not in the program text-fabric, but in the data set.

It has a feature otext, which specifies the formats and which features

must be used to produce them. otext is the third special feature in a TF data set,

next to otype and oslots.

It is an optional feature.

If it is absent, there will be no T API.
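One way to picture how a data set can define text formats is to think of each format as a template that names the features to fill in for every word. The sketch below works under that assumption; the feature names, the template strings and the function text() are all invented for illustration and are not taken from the actual otext feature.

```python
# Toy word features; the names and values are made up for this sketch.
words = [
    {'plain': 'in', 'full': 'IN', 'trailer': ' '},
    {'plain': 'beginning', 'full': 'BEGINNING', 'trailer': '.'},
]

# A format is a template that names the features to use for each word.
formats = {
    'text-plain': '{plain}{trailer}',
    'text-full': '{full}{trailer}',
}

def text(word_list, fmt='text-full'):
    """Render the words by filling in the template of the chosen format."""
    template = formats[fmt]
    return ''.join(template.format(**w) for w in word_list)

print(text(words, fmt='text-plain'))
print(text(words))
```

Because the templates live in the data and not in the program, a module can add a new format just by supplying a new template together with the features it needs.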

Here is a list of all available formats in this data set.

sorted(T.formats)

['lex-orig-full', 'lex-orig-plain', 'lex-trans-full', 'lex-trans-plain', 'text-orig-full', 'text-orig-full-ketiv', 'text-orig-plain', 'text-phono-full', 'text-trans-full', 'text-trans-full-ketiv', 'text-trans-plain']

Note the text-phono-full format here.

It does not come from the main data source bhsa, but from the module phono.

Look in your data directory, find ~/github/etcbc/phono/tf/2017/otext@phono.tf,

and you'll see this format defined there.

Using the formats¶

Now let's use those formats to print out the first verse of the Hebrew Bible.

for fmt in sorted(T.formats):
    print('{}:\n\t{}'.format(fmt, T.text(range(1,12), fmt=fmt)))

lex-orig-full:
    בְּ רֵאשִׁית בָּרָא אֱלֹה אֵת הַ שָּׁמַי וְ אֵת הָ אָרֶץ
lex-orig-plain:
    ב ראשׁית ברא אלהים את ה שׁמים ו את ה ארץ
lex-trans-full:
    B.:- R;>CIJT B.@R@> >:ELOH >;T HA- C.@MAJ W:- >;T H@- >@REY
lex-trans-plain:
    B R>CJT BR> >LHJM >T H CMJM W >T H >RY
text-orig-full:
    בְּרֵאשִׁ֖ית בָּרָ֣א אֱלֹהִ֑ים אֵ֥ת הַשָּׁמַ֖יִם וְאֵ֥ת הָאָֽרֶץ׃
text-orig-full-ketiv:
    בְּרֵאשִׁ֖ית בָּרָ֣א אֱלֹהִ֑ים אֵ֥ת הַשָּׁמַ֖יִם וְאֵ֥ת הָאָֽרֶץ׃
text-orig-plain:
    בראשׁית ברא אלהים את השׁמים ואת הארץ׃
text-phono-full:
    bᵊrēšˌîṯ bārˈā ʔᵉlōhˈîm ʔˌēṯ haššāmˌayim wᵊʔˌēṯ hāʔˈāreṣ .
text-trans-full:
    B.:-R;>CI73JT B.@R@74> >:ELOHI92JM >;71T HA-C.@MA73JIM W:->;71T H@->@75REY00
text-trans-full-ketiv:
    B.:-R;>CI73JT B.@R@74> >:ELOHI92JM >;71T HA-C.@MA73JIM W:->;71T H@->@75REY00
text-trans-plain:
    BR>CJT BR> >LHJM >T HCMJM W>T H>RY00

If we do not specify a format, the default format is used (text-orig-full).

print(T.text(range(1,12)))

בְּרֵאשִׁ֖ית בָּרָ֣א אֱלֹהִ֑ים אֵ֥ת הַשָּׁמַ֖יִם וְאֵ֥ת הָאָֽרֶץ׃

Whole text in all formats in just 10 seconds¶

Part of the pleasure of working with computers is that they can crunch massive amounts of data. The text of the Hebrew Bible is a piece of cake.

It takes just ten seconds to have that cake and eat it. In nearly a dozen formats.

indent(reset=True)
info('writing plain text of whole Bible in all formats')
text = collections.defaultdict(list)
for v in F.otype.s('verse'):
    words = L.d(v, 'word')
    for fmt in sorted(T.formats):
        text[fmt].append(T.text(words, fmt=fmt))
info('done {} formats'.format(len(text)))
for fmt in sorted(text):
    print('{}\n{}\n'.format(fmt, '\n'.join(text[fmt][0:5])))

0.00s writing plain text of whole Bible in all formats 9.32s done 11 formats lex-orig-full בְּ רֵאשִׁית בָּרָא אֱלֹה אֵת הַ שָּׁמַי וְ אֵת הָ אָרֶץ וְ הָ אָרֶץ הָי תֹהוּ וָ בֹהוּ וְ חֹשֶׁךְ עַל פְּן תְהֹום וְ רוּחַ אֱלֹה רַחֶף עַל פְּן הַ מָּי וַ אמֶר אֱלֹה הִי אֹור וַ הִי אֹור וַ רְא אֱלֹה אֶת הָ אֹור כִּי טֹוב וַ בְדֵּל אֱלֹה בֵּין הָ אֹור וּ בֵין הַ חֹשֶׁךְ וַ קְרָא אֱלֹה לָ אֹור יֹום וְ לַ חֹשֶׁךְ קָרָא לָיְלָה וַ הִי עֶרֶב וַ הִי בֹקֶר יֹום אֶחָד lex-orig-plain ב ראשׁית ברא אלהים את ה שׁמים ו את ה ארץ ו ה ארץ היה תהו ו בהו ו חשׁך על פנה תהום ו רוח אלהים רחף על פנה ה מים ו אמר אלהים היה אור ו היה אור ו ראה אלהים את ה אור כי טוב ו בדל אלהים בין ה אור ו בין ה חשׁך ו קרא אלהים ל ה אור יום ו ל ה חשׁך קרא לילה ו היה ערב ו היה בקר יום אחד lex-trans-full B.:- R;>CIJT B.@R@> >:ELOH >;T HA- C.@MAJ W:- >;T H@- >@REY W:- H@- >@REY H@J TOHW. W@- BOHW. W:- XOCEK: <AL P.:N T:HOWM W:- RW.XA >:ELOH RAXEP <AL P.:N HA- M.@J WA- >MER >:ELOH HIJ >OWR WA- HIJ >OWR WA- R:> >:ELOH >ET H@- >OWR K.IJ VOWB WA- B:D.;L >:ELOH B.;JN H@- >OWR W.- B;JN HA- XOCEK: WA- Q:R@> >:ELOH L@- - >OWR JOWM W:- LA- - XOCEK: Q@R@> L@J:L@H WA- HIJ <EREB WA- HIJ BOQER JOWM >EX@D lex-trans-plain B R>CJT BR> >LHJM >T H CMJM W >T H >RY W H >RY HJH THW W BHW W XCK <L PNH THWM W RWX >LHJM RXP <L PNH H MJM W >MR >LHJM HJH >WR W HJH >WR W R>H >LHJM >T H >WR KJ VWB W BDL >LHJM BJN H >WR W BJN H XCK W QR> >LHJM L H >WR JWM W L H XCK QR> LJLH W HJH <RB W HJH BQR JWM >XD text-orig-full בְּרֵאשִׁ֖ית בָּרָ֣א אֱלֹהִ֑ים אֵ֥ת הַשָּׁמַ֖יִם וְאֵ֥ת הָאָֽרֶץ׃ וְהָאָ֗רֶץ הָיְתָ֥ה תֹ֨הוּ֙ וָבֹ֔הוּ וְחֹ֖שֶׁךְ עַל־פְּנֵ֣י תְהֹ֑ום וְר֣וּחַ אֱלֹהִ֔ים מְרַחֶ֖פֶת עַל־פְּנֵ֥י הַמָּֽיִם׃ וַיֹּ֥אמֶר אֱלֹהִ֖ים יְהִ֣י אֹ֑ור וַֽיְהִי־אֹֽור׃ וַיַּ֧רְא אֱלֹהִ֛ים אֶת־הָאֹ֖ור כִּי־טֹ֑וב וַיַּבְדֵּ֣ל אֱלֹהִ֔ים בֵּ֥ין הָאֹ֖ור וּבֵ֥ין הַחֹֽשֶׁךְ׃ וַיִּקְרָ֨א אֱלֹהִ֤ים׀ לָאֹור֙ יֹ֔ום וְלַחֹ֖שֶׁךְ קָ֣רָא לָ֑יְלָה וַֽיְהִי־עֶ֥רֶב וַֽיְהִי־בֹ֖קֶר יֹ֥ום אֶחָֽד׃ פ text-orig-full-ketiv בְּרֵאשִׁ֖ית בָּרָ֣א אֱלֹהִ֑ים אֵ֥ת הַשָּׁמַ֖יִם וְאֵ֥ת הָאָֽרֶץ׃ 
וְהָאָ֗רֶץ הָיְתָ֥ה תֹ֨הוּ֙ וָבֹ֔הוּ וְחֹ֖שֶׁךְ עַל־פְּנֵ֣י תְהֹ֑ום וְר֣וּחַ אֱלֹהִ֔ים מְרַחֶ֖פֶת עַל־פְּנֵ֥י הַמָּֽיִם׃ וַיֹּ֥אמֶר אֱלֹהִ֖ים יְהִ֣י אֹ֑ור וַֽיְהִי־אֹֽור׃ וַיַּ֧רְא אֱלֹהִ֛ים אֶת־הָאֹ֖ור כִּי־טֹ֑וב וַיַּבְדֵּ֣ל אֱלֹהִ֔ים בֵּ֥ין הָאֹ֖ור וּבֵ֥ין הַחֹֽשֶׁךְ׃ וַיִּקְרָ֨א אֱלֹהִ֤ים׀ לָאֹור֙ יֹ֔ום וְלַחֹ֖שֶׁךְ קָ֣רָא לָ֑יְלָה וַֽיְהִי־עֶ֥רֶב וַֽיְהִי־בֹ֖קֶר יֹ֥ום אֶחָֽד׃ פ text-orig-plain בראשׁית ברא אלהים את השׁמים ואת הארץ׃ והארץ היתה תהו ובהו וחשׁך על־פני תהום ורוח אלהים מרחפת על־פני המים׃ ויאמר אלהים יהי אור ויהי־אור׃ וירא אלהים את־האור כי־טוב ויבדל אלהים בין האור ובין החשׁך׃ ויקרא אלהים׀ לאור יום ולחשׁך קרא לילה ויהי־ערב ויהי־בקר יום אחד׃ פ text-phono-full bᵊrēšˌîṯ bārˈā ʔᵉlōhˈîm ʔˌēṯ haššāmˌayim wᵊʔˌēṯ hāʔˈāreṣ . wᵊhāʔˈāreṣ hāyᵊṯˌā ṯˈōhû wāvˈōhû wᵊḥˌōšeḵ ʕal-pᵊnˈê ṯᵊhˈôm wᵊrˈûₐḥ ʔᵉlōhˈîm mᵊraḥˌefeṯ ʕal-pᵊnˌê hammˈāyim . wayyˌōmer ʔᵉlōhˌîm yᵊhˈî ʔˈôr wˈayᵊhî-ʔˈôr . wayyˈar ʔᵉlōhˈîm ʔeṯ-hāʔˌôr kî-ṭˈôv wayyavdˈēl ʔᵉlōhˈîm bˌên hāʔˌôr ûvˌên haḥˈōšeḵ . wayyiqrˌā ʔᵉlōhˈîm lāʔôr yˈôm wᵊlaḥˌōšeḵ qˈārā lˈāyᵊlā wˈayᵊhî-ʕˌerev wˈayᵊhî-vˌōqer yˌôm ʔeḥˈāḏ . f text-trans-full B.:-R;>CI73JT B.@R@74> >:ELOHI92JM >;71T HA-C.@MA73JIM W:->;71T H@->@75REY00 W:-H@->@81REY H@J:T@71H TO33HW.03 W@-BO80HW. W:-XO73CEK: <AL&P.:N;74J T:HO92WM W:-R74W.XA >:ELOHI80JM M:RAXE73PET <AL&P.:N;71J HA-M.@75JIM00 WA-J.O71>MER >:ELOHI73JM J:HI74J >O92WR WA45-J:HIJ&>O75WR00 WA-J.A94R:> >:ELOHI91JM >ET&H@->O73WR K.IJ&VO92WB WA-J.AB:D.;74L >:ELOHI80JM B.;71JN H@->O73WR W.-B;71JN HA-XO75CEK:00 WA-J.IQ:R@63> >:ELOHI70JM05 L@-->OWR03 JO80WM W:-LA--XO73CEK: Q@74R@> L@92J:L@H WA45-J:HIJ&<E71REB WA45-J:HIJ&BO73QER JO71WM >EX@75D00_P text-trans-full-ketiv B.:-R;>CI73JT B.@R@74> >:ELOHI92JM >;71T HA-C.@MA73JIM W:->;71T H@->@75REY00 W:-H@->@81REY H@J:T@71H TO33HW.03 W@-BO80HW. 
W:-XO73CEK: <AL&P.:N;74J T:HO92WM W:-R74W.XA >:ELOHI80JM M:RAXE73PET <AL&P.:N;71J HA-M.@75JIM00 WA-J.O71>MER >:ELOHI73JM J:HI74J >O92WR WA45-J:HIJ&>O75WR00 WA-J.A94R:> >:ELOHI91JM >ET&H@->O73WR K.IJ&VO92WB WA-J.AB:D.;74L >:ELOHI80JM B.;71JN H@->O73WR W.-B;71JN HA-XO75CEK:00 WA-J.IQ:R@63> >:ELOHI70JM05 L@-->OWR03 JO80WM W:-LA--XO73CEK: Q@74R@> L@92J:L@H WA45-J:HIJ&<E71REB WA45-J:HIJ&BO73QER JO71WM >EX@75D00_P text-trans-plain BR>CJT BR> >LHJM >T HCMJM W>T H>RY00 WH>RY HJTH THW WBHW WXCK <L&PNJ THWM WRWX >LHJM MRXPT <L&PNJ HMJM00 WJ>MR >LHJM JHJ >WR WJHJ&>WR00 WJR> >LHJM >T&H>WR KJ&VWB WJBDL >LHJM BJN H>WR WBJN HXCK00 WJQR> >LHJM05 L>WR JWM WLXCK QR> LJLH WJHJ&<RB WJHJ&BQR JWM >XD00_P

The full plain text¶

We write a few formats to file, in your Downloads folder.

T.formats

{'lex-orig-full',

'lex-orig-plain',

'lex-trans-full',

'lex-trans-plain',

'text-orig-full',

'text-orig-full-ketiv',

'text-orig-plain',

'text-phono-full',

'text-trans-full',

'text-trans-full-ketiv',

'text-trans-plain'}

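for fmt in '''
    text-orig-full
    text-phono-full
'''.strip().split():
    # write as UTF-8 explicitly, so that the Hebrew text does not depend on the platform default
    with open(os.path.expanduser(f'~/Downloads/{fmt}.txt'), 'w', encoding='utf8') as f:
        f.write('\n'.join(text[fmt]))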

T.languages

{'': {'language': 'default', 'languageEnglish': 'default'},

'am': {'language': 'ኣማርኛ', 'languageEnglish': 'amharic'},

'ar': {'language': 'العَرَبِية', 'languageEnglish': 'arabic'},

'bn': {'language': 'বাংলা', 'languageEnglish': 'bengali'},

'da': {'language': 'Dansk', 'languageEnglish': 'danish'},

'de': {'language': 'Deutsch', 'languageEnglish': 'german'},

'el': {'language': 'Ελληνικά', 'languageEnglish': 'greek'},

'en': {'language': 'English', 'languageEnglish': 'english'},

'es': {'language': 'Español', 'languageEnglish': 'spanish'},

'fa': {'language': 'فارسی', 'languageEnglish': 'farsi'},

'fr': {'language': 'Français', 'languageEnglish': 'french'},

'he': {'language': 'עברית', 'languageEnglish': 'hebrew'},

'hi': {'language': 'हिन्दी', 'languageEnglish': 'hindi'},

'id': {'language': 'Bahasa Indonesia', 'languageEnglish': 'indonesian'},

'ja': {'language': '日本語', 'languageEnglish': 'japanese'},

'ko': {'language': '한국어', 'languageEnglish': 'korean'},

'la': {'language': 'Latina', 'languageEnglish': 'latin'},

'nl': {'language': 'Nederlands', 'languageEnglish': 'dutch'},

'pa': {'language': 'ਪੰਜਾਬੀ', 'languageEnglish': 'punjabi'},

'pt': {'language': 'Português', 'languageEnglish': 'portuguese'},

'ru': {'language': 'Русский', 'languageEnglish': 'russian'},

'sw': {'language': 'Kiswahili', 'languageEnglish': 'swahili'},

'syc': {'language': 'ܠܫܢܐ ܣܘܪܝܝܐ', 'languageEnglish': 'syriac'},

'tr': {'language': 'Türkçe', 'languageEnglish': 'turkish'},

'ur': {'language': 'اُردُو', 'languageEnglish': 'urdu'},

'yo': {'language': 'èdè Yorùbá', 'languageEnglish': 'yoruba'},

'zh': {'language': '中文', 'languageEnglish': 'chinese'}}

Book names in Swahili¶

Get the book names in Swahili.

nodeToSwahili = ''
for b in F.otype.s('book'):
    nodeToSwahili += '{} = {}\n'.format(b, T.bookName(b, lang='sw'))
print(nodeToSwahili)

426585 = Mwanzo
426586 = Kutoka
426587 = Mambo_ya_Walawi
426588 = Hesabu
426589 = Kumbukumbu_la_Torati
426590 = Yoshua
426591 = Waamuzi
426592 = 1_Samweli
426593 = 2_Samweli
426594 = 1_Wafalme
426595 = 2_Wafalme
426596 = Isaya
426597 = Yeremia
426598 = Ezekieli
426599 = Hosea
426600 = Yoeli
426601 = Amosi
426602 = Obadia
426603 = Yona
426604 = Mika
426605 = Nahumu
426606 = Habakuki
426607 = Sefania
426608 = Hagai
426609 = Zekaria
426610 = Malaki
426611 = Zaburi
426612 = Ayubu
426613 = Mithali
426614 = Ruthi
426615 = Wimbo_Ulio_Bora
426616 = Mhubiri
426617 = Maombolezo
426618 = Esta
426619 = Danieli
426620 = Ezra
426621 = Nehemia
426622 = 1_Mambo_ya_Nyakati
426623 = 2_Mambo_ya_Nyakati

Book nodes from Swahili¶

OK, there they are. We copy them into a string, and do the opposite: get the nodes back. We check whether we get exactly the same nodes as the ones we started with.

swahiliNames = '''

Mwanzo

Kutoka

Mambo_ya_Walawi

Hesabu

Kumbukumbu_la_Torati

Yoshua

Waamuzi

1_Samweli

2_Samweli

1_Wafalme

2_Wafalme

Isaya

Yeremia

Ezekieli

Hosea

Yoeli

Amosi

Obadia

Yona

Mika

Nahumu

Habakuki

Sefania

Hagai

Zekaria

Malaki

Zaburi

Ayubu

Mithali

Ruthi

Wimbo_Ulio_Bora

Mhubiri

Maombolezo

Esta

Danieli

Ezra

Nehemia

1_Mambo_ya_Nyakati

2_Mambo_ya_Nyakati

'''.strip().split()

swahiliToNode = ''
for nm in swahiliNames:
    swahiliToNode += '{} = {}\n'.format(T.bookNode(nm, lang='sw'), nm)

if swahiliToNode != nodeToSwahili:
    print('Something is not right with the book names')
else:
    print('Going from nodes to booknames and back yields the original nodes')

Going from nodes to booknames and back yields the original nodes
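The round trip can also be checked with two dictionaries instead of two strings. The sketch below makes no Text-Fabric calls: the three node/name pairs are copied from the listing above, and `name_of` and `node_of` are hypothetical stand-ins for `T.bookName` and `T.bookNode`.

```python
# Toy stand-ins for T.bookName(node, lang='sw') and T.bookNode(name, lang='sw').
# The pairs are copied from the listing above; the variable names are illustrative.
name_of = {426585: 'Mwanzo', 426586: 'Kutoka', 426587: 'Mambo_ya_Walawi'}

# Invert the mapping; this only works if no two books share a name.
node_of = {name: node for (node, name) in name_of.items()}
assert len(node_of) == len(name_of), 'duplicate book names'

# Round trip: node -> name -> node must give back the node we started with.
round_trip_ok = all(node_of[name_of[node]] == node for node in name_of)
print(round_trip_ok)
```

Comparing per node rather than comparing two long strings makes it easier to see which book is the culprit when the check fails.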

Sections¶

A section in the Hebrew Bible is a book, a chapter or a verse.

Knowledge of sections is not baked into Text-Fabric.

The config feature otext.tf may specify three section levels, and tell

what the corresponding node types and features are.

From that knowledge Text-Fabric can construct mappings from nodes to sections, e.g. from verse nodes to tuples of the form:

(bookName, chapterNumber, verseNumber)

Here are examples of getting the section that corresponds to a node and vice versa.

NB: sectionFromNode always delivers a verse specification, either from the

first slot belonging to that node, or, if lastSlot, from the last slot

belonging to that node.

for x in (
    ('section of first word', T.sectionFromNode(1)),
    ('node of Gen 1:1', T.nodeFromSection(('Genesis', 1, 1))),
    ('idem', T.nodeFromSection(('Mwanzo', 1, 1), lang='sw')),
    ('node of book Genesis', T.nodeFromSection(('Genesis',))),
    ('node of Genesis 1', T.nodeFromSection(('Genesis', 1))),
    ('section of book node', T.sectionFromNode(1367534)),
    ('idem, now last word', T.sectionFromNode(1367534, lastSlot=True)),
    ('section of chapter node', T.sectionFromNode(1367573)),
    ('idem, now last word', T.sectionFromNode(1367573, lastSlot=True)),
): print('{:<30} {}'.format(*x))

section of first word ('Genesis', 1, 1)

node of Gen 1:1 1414190

idem 1414190

node of book Genesis 426585

node of Genesis 1 426624

section of book node ('Jeremiah', 36, 13)

idem, now last word ('Jeremiah', 36, 13)

section of chapter node ('Jeremiah', 36, 21)

idem, now last word ('Jeremiah', 36, 21)
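To make the role of lastSlot concrete, here is a toy model of the lookup, not the Text-Fabric implementation. It assumes that each verse is known by its first slot, and it finds the verse of a node by looking at the node's first or last slot. The slot numbers and the helper name `section_from_slots` are made up for illustration.

```python
from bisect import bisect_right

# Toy data (made-up slot numbers): (first slot of the verse, section tuple),
# sorted by slot.
VERSES = [
    (1, ('Genesis', 1, 1)),
    (12, ('Genesis', 1, 2)),
    (26, ('Genesis', 1, 3)),
]
VERSE_STARTS = [start for (start, _) in VERSES]


def section_from_slots(slots, last_slot=False):
    """Return the verse section of the first (or last) slot of a node."""
    slot = slots[-1] if last_slot else slots[0]
    # Index of the last verse whose first slot is <= slot.
    i = bisect_right(VERSE_STARTS, slot) - 1
    if i < 0:
        raise ValueError('slot {} lies before the first verse'.format(slot))
    return VERSES[i][1]


# A hypothetical node that covers slots 10..13, straddling two verses.
node_slots = [10, 11, 12, 13]
print(section_from_slots(node_slots))
print(section_from_slots(node_slots, last_slot=True))
```

For a node that lies within one verse both calls give the same answer; they differ only when the node crosses a verse boundary.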

Sentences spanning multiple verses¶

If you go up from a sentence node, you expect to find a verse node. But some sentences span multiple verses, and in that case, you will not find the enclosing verse node, because it is not there.

Here is a piece of code to detect and list all cases where sentences span multiple verses.

The idea is to pick the first and the last word of a sentence, use T.sectionFromNode to

discover the verse in which that word occurs, and if they are different: bingo!

We show the first 10 of ca. 900 cases.

By the way: doing this in the 2016 version of the data yields 915 results.

The splitting up of the text into sentences is not carved in stone!

indent(reset=True)
info('Get sentences that span multiple verses')

spanSentences = []
for s in F.otype.s('sentence'):
    f = T.sectionFromNode(s, lastSlot=False)
    l = T.sectionFromNode(s, lastSlot=True)
    if f != l:
        spanSentences.append('{} {}:{}-{}'.format(f[0], f[1], f[2], l[2]))

info('Found {} cases'.format(len(spanSentences)))
info('\n{}'.format('\n'.join(spanSentences[0:10])))

0.00s Get sentences that span multiple verses
4.88s Found 892 cases
4.88s
Genesis 1:17-18
Genesis 1:29-30
Genesis 2:4-7
Genesis 7:2-3
Genesis 7:8-9
Genesis 7:13-14
Genesis 9:9-10
Genesis 10:11-12
Genesis 10:13-14
Genesis 10:15-18
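The label in the code above prints the chapter of the first word only, so it silently assumes that a sentence never crosses a chapter boundary. Whether such sentences occur in the data is not checked here. A small helper that does not rely on that assumption could look like the following sketch; `span_label` is a hypothetical name, and it works on plain section tuples, so it needs no Text-Fabric.

```python
def span_label(first, last):
    """Format a passage span from two (book, chapter, verse) tuples."""
    (b1, c1, v1) = first
    (b2, c2, v2) = last
    if (b1, c1) == (b2, c2):
        # same chapter: Genesis 1:17-18
        return '{} {}:{}-{}'.format(b1, c1, v1, v2)
    if b1 == b2:
        # same book, different chapters: mention both chapters
        return '{} {}:{}-{}:{}'.format(b1, c1, v1, c2, v2)
    # different books: spell out both ends in full
    return '{} {}:{} - {} {}:{}'.format(b1, c1, v1, b2, c2, v2)


print(span_label(('Genesis', 1, 17), ('Genesis', 1, 18)))
```

In the loop above you would then append `span_label(f, l)` instead of the formatted string.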

A different way, with better display, is:

indent(reset=True)
info('Get sentences that span multiple verses')

spanSentences = []
for s in F.otype.s('sentence'):
    words = L.d(s, otype='word')
    fw = words[0]
    lw = words[-1]
    fVerse = L.u(fw, otype='verse')[0]
    lVerse = L.u(lw, otype='verse')[0]
    if fVerse != lVerse:
        spanSentences.append((s, fVerse, lVerse))

info('Found {} cases'.format(len(spanSentences)))
B.table(spanSentences, end=10, linked=2)

0.00s Get sentences that span multiple verses
1.64s Found 892 cases

| n | sentence | verse | verse |

|---|---|---|---|

| 1 | וַיִּתֵּ֥ן אֹתָ֛ם אֱלֹהִ֖ים בִּרְקִ֣יעַ הַשָּׁמָ֑יִם לְהָאִ֖יר עַל־הָאָֽרֶץ׃ וְלִמְשֹׁל֙ בַּיֹּ֣ום וּבַלַּ֔יְלָה וּֽלֲהַבְדִּ֔יל בֵּ֥ין הָאֹ֖ור וּבֵ֣ין הַחֹ֑שֶׁךְ | Genesis 1:17 | Genesis 1:18 |

| 2 | הִנֵּה֩ נָתַ֨תִּי לָכֶ֜ם אֶת־כָּל־עֵ֣שֶׂב׀ זֹרֵ֣עַ זֶ֗רַע אֲשֶׁר֙ עַל־פְּנֵ֣י כָל־הָאָ֔רֶץ וְאֶת־כָּל־הָעֵ֛ץ אֲשֶׁר־בֹּ֥ו פְרִי־עֵ֖ץ זֹרֵ֣עַ זָ֑רַע וּֽלְכָל־חַיַּ֣ת הָ֠אָרֶץ וּלְכָל־עֹ֨וף הַשָּׁמַ֜יִם וּלְכֹ֣ל׀ רֹומֵ֣שׂ עַל־הָאָ֗רֶץ אֲשֶׁר־בֹּו֙ נֶ֣פֶשׁ חַיָּ֔ה אֶת־כָּל־יֶ֥רֶק עֵ֖שֶׂב לְאָכְלָ֑ה | Genesis 1:29 | Genesis 1:30 |

| 3 | בְּיֹ֗ום עֲשֹׂ֛ות יְהוָ֥ה אֱלֹהִ֖ים אֶ֥רֶץ וְשָׁמָֽיִם׃ וַיִּיצֶר֩ יְהוָ֨ה אֱלֹהִ֜ים אֶת־הָֽאָדָ֗ם עָפָר֙ מִן־הָ֣אֲדָמָ֔ה | Genesis 2:4 | Genesis 2:7 |

| 4 | מִכֹּ֣ל׀ הַבְּהֵמָ֣ה הַטְּהֹורָ֗ה תִּֽקַּח־לְךָ֛ שִׁבְעָ֥ה שִׁבְעָ֖ה אִ֣ישׁ וְאִשְׁתֹּ֑ו וּמִן־הַבְּהֵמָ֡ה אֲ֠שֶׁר לֹ֣א טְהֹרָ֥ה הִ֛וא שְׁנַ֖יִם אִ֥ישׁ וְאִשְׁתֹּֽו׃ גַּ֣ם מֵעֹ֧וף הַשָּׁמַ֛יִם שִׁבְעָ֥ה שִׁבְעָ֖ה זָכָ֣ר וּנְקֵבָ֑ה לְחַיֹּ֥ות זֶ֖רַע עַל־פְּנֵ֥י כָל־הָאָֽרֶץ׃ | Genesis 7:2 | Genesis 7:3 |

| 5 | מִן־הַבְּהֵמָה֙ הַטְּהֹורָ֔ה וּמִן־הַ֨בְּהֵמָ֔ה אֲשֶׁ֥ר אֵינֶ֖נָּה טְהֹרָ֑ה וּמִ֨ן־הָעֹ֔וף וְכֹ֥ל אֲשֶׁר־רֹמֵ֖שׂ עַל־הָֽאֲדָמָֽה׃ שְׁנַ֨יִם שְׁנַ֜יִם בָּ֧אוּ אֶל־נֹ֛חַ אֶל־הַתֵּבָ֖ה זָכָ֣ר וּנְקֵבָ֑ה כַּֽאֲשֶׁ֛ר צִוָּ֥ה אֱלֹהִ֖ים אֶת־נֹֽחַ׃ | Genesis 7:8 | Genesis 7:9 |

| 6 | בְּעֶ֨צֶם הַיֹּ֤ום הַזֶּה֙ בָּ֣א נֹ֔חַ וְשֵׁם־וְחָ֥ם וָיֶ֖פֶת בְּנֵי־נֹ֑חַ וְאֵ֣שֶׁת נֹ֗חַ וּשְׁלֹ֧שֶׁת נְשֵֽׁי־בָנָ֛יו אִתָּ֖ם אֶל־הַתֵּבָֽה׃ הֵ֜מָּה וְכָל־הַֽחַיָּ֣ה לְמִינָ֗הּ וְכָל־הַבְּהֵמָה֙ לְמִינָ֔הּ וְכָל־הָרֶ֛מֶשׂ הָרֹמֵ֥שׂ עַל־הָאָ֖רֶץ לְמִינֵ֑הוּ וְכָל־הָעֹ֣וף לְמִינֵ֔הוּ כֹּ֖ל צִפֹּ֥ור כָּל־כָּנָֽף׃ | Genesis 7:13 | Genesis 7:14 |

| 7 | וַאֲנִ֕י הִנְנִ֥י מֵקִ֛ים אֶת־בְּרִיתִ֖י אִתְּכֶ֑ם וְאֶֽת־זַרְעֲכֶ֖ם אַֽחֲרֵיכֶֽם׃ וְאֵ֨ת כָּל־נֶ֤פֶשׁ הַֽחַיָּה֙ אֲשֶׁ֣ר אִתְּכֶ֔ם בָּעֹ֧וף בַּבְּהֵמָ֛ה וּֽבְכָל־חַיַּ֥ת הָאָ֖רֶץ אִתְּכֶ֑ם מִכֹּל֙ יֹצְאֵ֣י הַתֵּבָ֔ה לְכֹ֖ל חַיַּ֥ת הָאָֽרֶץ׃ | Genesis 9:9 | Genesis 9:10 |

| 8 | וַיִּ֨בֶן֙ אֶת־נִ֣ינְוֵ֔ה וְאֶת־רְחֹבֹ֥ת עִ֖יר וְאֶת־כָּֽלַח׃ וְֽאֶת־רֶ֔סֶן בֵּ֥ין נִֽינְוֵ֖ה וּבֵ֣ין כָּ֑לַח | Genesis 10:11 | Genesis 10:12 |

| 9 | וּמִצְרַ֡יִם יָלַ֞ד אֶת־לוּדִ֧ים וְאֶת־עֲנָמִ֛ים וְאֶת־לְהָבִ֖ים וְאֶת־נַפְתֻּחִֽים׃ וְֽאֶת־פַּתְרֻסִ֞ים וְאֶת־כַּסְלֻחִ֗ים אֲשֶׁ֨ר יָצְא֥וּ מִשָּׁ֛ם פְּלִשְׁתִּ֖ים וְאֶת־כַּפְתֹּרִֽים׃ ס | Genesis 10:13 | Genesis 10:14 |

| 10 | וּכְנַ֗עַן יָלַ֛ד אֶת־צִידֹ֥ן בְּכֹרֹ֖ו וְאֶת־חֵֽת׃ וְאֶת־הַיְבוּסִי֙ וְאֶת־הָ֣אֱמֹרִ֔י וְאֵ֖ת הַגִּרְגָּשִֽׁי׃ וְאֶת־הַֽחִוִּ֥י וְאֶת־הַֽעַרְקִ֖י וְאֶת־הַסִּינִֽי׃ וְאֶת־הָֽאַרְוָדִ֥י וְאֶת־הַצְּמָרִ֖י וְאֶת־הַֽחֲמָתִ֑י | Genesis 10:15 | Genesis 10:18 |

We can zoom in:

B.show(spanSentences, condensed=False, start=6, end=6)

Result 6¶

B.pretty(spanSentences[5][0])

Ketiv Qere¶

Let us explore where Ketiv/Qere pairs are and how they render.

qeres = [w for w in F.otype.s('word') if F.qere.v(w) is not None]
print('{} qeres'.format(len(qeres)))

for w in qeres[0:10]:
    print('{}: ketiv = "{}"+"{}" qere = "{}"+"{}"'.format(
        w, F.g_word.v(w), F.trailer.v(w), F.qere.v(w), F.qere_trailer.v(w),
    ))

1892 qeres
3897: ketiv = "*HWY>"+" " qere = "HAJ:Y;74>"+" "
4420: ketiv = "*>HLH"+" " qere = ">@H:@LO75W"+"00"
5645: ketiv = "*>HLH"+" " qere = ">@H:@LO92W"+" "
5912: ketiv = "*>HLH"+" " qere = ">@95H:@LOW03"+" "
6246: ketiv = "*YBJJM"+" " qere = "Y:BOWJI80m"+" "
6354: ketiv = "*YBJJM"+" " qere = "Y:BOWJI80m"+" "
11761: ketiv = "*W-"+"" qere = "WA"+""
11762: ketiv = "*JJFM"+" " qere = "J.W.FA70m"+" "
12783: ketiv = "*GJJM"+" " qere = "GOWJIm03"+" "
13684: ketiv = "*YJDH"+" " qere = "Y@75JID"+"00"

Show a ketiv-qere pair¶

Let us print all text representations of the verse in which word node 4419 occurs.

refWord = 4419

vn = L.u(refWord, otype='verse')[0]

ws = L.d(vn, otype='word')

print('{} {}:{}'.format(*T.sectionFromNode(refWord)))

for fmt in sorted(T.formats):
    if fmt.startswith('text-'):
        print('{:<25} {}'.format(fmt, T.text(ws, fmt=fmt)))

Genesis 9:21
text-orig-full            וַיֵּ֥שְׁתְּ מִן־הַיַּ֖יִן וַיִּשְׁכָּ֑ר וַיִּתְגַּ֖ל בְּתֹ֥וךְ אָהֳלֹֽו׃
text-orig-full-ketiv      וַיֵּ֥שְׁתְּ מִן־הַיַּ֖יִן וַיִּשְׁכָּ֑ר וַיִּתְגַּ֖ל בְּתֹ֥וךְ אהלה
text-orig-plain           וישׁת מן־היין וישׁכר ויתגל בתוך אהלה
text-phono-full           wayyˌēšt min-hayyˌayin wayyiškˈār wayyiṯgˌal bᵊṯˌôḵ *ʔohᵒlˈô .
text-trans-full           WA-J.;71C:T.: MIN&HA-J.A73JIN WA-J.IC:K.@92R WA-J.IT:G.A73L B.:-TO71WK: >@H:@LO75W00
text-trans-full-ketiv     WA-J.;71C:T.: MIN&HA-J.A73JIN WA-J.IC:K.@92R WA-J.IT:G.A73L B.:-TO71WK: *>HLH
text-trans-plain          WJCT MN&HJJN WJCKR WJTGL BTWK >HLH
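The difference between the full and the ketiv formats can be read off from the last word of this verse. The sketch below is only an interpretation of that output, not the way Text-Fabric implements its text formats: it assumes that a format prefers the qere and its trailer when a qere is present, unless the ketiv is explicitly requested. The function name `render_word` is hypothetical; the feature values are those of word 4420 as printed earlier.

```python
def render_word(g_word, trailer, qere=None, qere_trailer=None, ketiv=False):
    """Render one word in transliteration, preferring the qere unless ketiv=True."""
    if qere is not None and not ketiv:
        # a qere is present and wanted: use it with its own trailer
        return qere + (qere_trailer or '')
    # no qere, or the ketiv is asked for: use the written form
    return g_word + trailer


# Feature values of word 4420, copied from the qere listing above.
word = dict(g_word='*>HLH', trailer=' ', qere='>@H:@LO75W', qere_trailer='00')

print(render_word(**word))
print(render_word(**word, ketiv=True))
```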

Edge features: mother¶

We have not talked about edges much. If the nodes correspond to the rows in the big spreadsheet, the edges point from one row to another.

One edge we have encountered: the special feature oslots.

Each non-slot node is linked by oslots to all of its slot nodes.

An edge is really a feature as well. Whereas a node feature is a column of information, one cell per node, an edge feature is also a column of information, one cell per pair of nodes.
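As a mental model, an edge feature can be pictured as a list of node pairs, indexed in both directions. The sketch below uses made-up node numbers and plain Python; the names `edges_from` and `edges_to` are illustrative and only loosely mirror what the `f` and `t` lookups on an edge feature do further on.

```python
from collections import defaultdict

# A toy edge feature (made-up node numbers): each pair (n, m) is one edge n -> m.
edges = [(10, 20), (11, 20), (12, 21)]

edges_from = defaultdict(set)  # n -> nodes that n points to
edges_to = defaultdict(set)    # m -> nodes that point to m
for (n, m) in edges:
    edges_from[n].add(m)
    edges_to[m].add(n)

print(sorted(edges_from[10]))
print(sorted(edges_to[20]))
```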

Linguists use more relationships between textual objects, for example linguistic dependency.

In the BHSA all cases of linguistic dependency are coded in the edge feature mother.

Let us do some basic enquiries on an edge feature: mother.

We count how many mothers nodes can have (it turns out to be 0 or 1).

We walk through all nodes and per node we retrieve the mother nodes, and

we store the lengths (if non-zero) in a dictionary (motherLen).

We see that nodes have at most one mother.

We also count the inverse relationship: daughters.

info('Counting mothers')

motherLen = {}

daughterLen = {}

for c in N():
    lms = E.mother.f(c) or []
    lds = E.mother.t(c) or []
    nms = len(lms)
    nds = len(lds)
    if nms: motherLen[c] = nms
    if nds: daughterLen[c] = nds

info('{} nodes have mothers'.format(len(motherLen)))

info('{} nodes have daughters'.format(len(daughterLen)))

motherCount = collections.Counter()

daughterCount = collections.Counter()

for (n, lm) in motherLen.items(): motherCount[lm] += 1

for (n, ld) in daughterLen.items(): daughterCount[ld] += 1

print('mothers', motherCount)

print('daughters', daughterCount)

16s Counting mothers

19s 182159 nodes have mothers

19s 144059 nodes have daughters

mothers Counter({1: 182159})

daughters Counter({1: 117926, 2: 17408, 3: 6272, 4: 1843, 5: 462, 6: 122, 7: 21, 8: 5})
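The tallying step does not depend on Text-Fabric, so it can be tried on toy data first. The sketch below starts from a made-up mapping of daughters to mothers and applies `collections.Counter` twice: once to count daughters per mother, and once to count how often each family size occurs.

```python
import collections

# Made-up daughter -> mother pairs; every daughter has exactly one mother here.
mother_of = {101: 200, 102: 200, 103: 201, 104: 202, 105: 202, 106: 202}

# How many daughters does each mother have?
daughters_per_mother = collections.Counter(mother_of.values())

# How many mothers have 1, 2, 3, ... daughters?
distribution = collections.Counter(daughters_per_mother.values())
print(sorted(distribution.items()))
```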

Next steps¶

By now you have an impression of how to compute around in the Hebrew Bible. While this is still the beginning, I hope you already sense the power of unlimited programmatic access to all the bits and bytes in the data set.

Here are a few directions for unleashing that power.

Explore additional data¶

The ETCBC has a few other repositories with data that work in conjunction with the BHSA data. One of them you have already seen: phono, for phonetic transcriptions.

There is also parallels for detecting parallel passages, and valence for studying patterns around verbs that determine their meanings.

Add your own data¶

If you study the additional data, you can observe how that data is created and also

how it is turned into a text-fabric data module.

The last step is incredibly easy. You can write out every Python dictionary where the keys are numbers

and the values are strings or numbers as a Text-Fabric feature.

When you are creating data, you have already constructed those dictionaries, so writing

them out is just one method call.

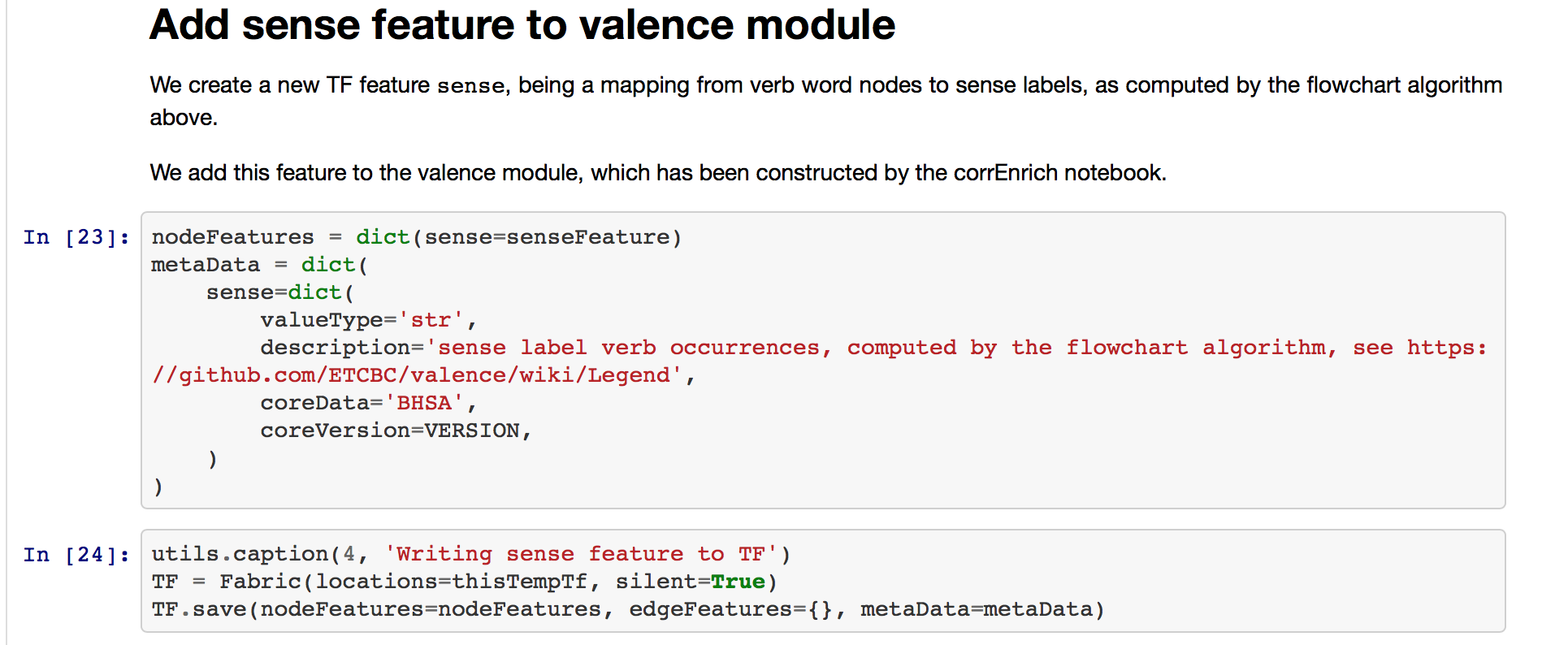

See for example how the flowchart notebook in valence writes out verb sense data.

You can then easily share your new features on GitHub, so that your colleagues everywhere can try it out for themselves.
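The dictionary-to-feature step described in this section might look roughly like the sketch below. The feature name `my_sense`, its values and the node numbers are made up. The call to `TF.save` and its keyword arguments are given from memory of the Text-Fabric API, so treat them as an assumption and check the API reference of your version before relying on them. The function is only defined here, not called.

```python
# A node feature is just a mapping from node numbers to strings or numbers.
# The node numbers and values below are made up for illustration.
my_sense = {1001: 'motion', 1002: 'speech', 1003: 'motion'}

# Per-feature metadata; 'valueType' says whether the values are
# strings ('str') or integers ('int').
meta_data = {
    'my_sense': {
        'valueType': 'str',
        'description': 'toy verb sense labels (illustrative only)',
    },
}


def save_my_feature(TF, module):
    """Write my_sense as a feature file; TF is an existing Fabric object."""
    # Assumption: TF.save accepts these keyword arguments in your version.
    TF.save(
        nodeFeatures={'my_sense': my_sense},
        edgeFeatures={},
        metaData=meta_data,
        module=module,
    )
```

Most of the work is in building the dictionary; the write itself is one call.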

Export to Emdros MQL¶

EMDROS, written by Ulrik Petersen, is a text database system with the powerful topographic query language MQL. The ideas are based on a model devised by Christ-Jan Doedens in Text Databases: One Database Model and Several Retrieval Languages.

Text-Fabric's model of slots, nodes and edges is a fairly straightforward translation of the models of Christ-Jan Doedens and Ulrik Petersen.

SHEBANQ uses EMDROS to let users execute and save MQL queries against the Hebrew Text Database of the ETCBC.

So it is logical and convenient to be able to work with a Text-Fabric resource through MQL.

If you have obtained an MQL dataset somehow, you can turn it into a text-fabric data set with importMQL(),

which we will not show here.

And if you want to export a Text-Fabric data set to MQL, that is also possible.

After the Fabric(modules=...) call, you can call exportMQL() in order to save all features of the

indicated modules into a big MQL dump, which can be imported by an EMDROS database.

TF.exportMQL('mybhsa','~/Downloads')

0.00s Checking features of dataset mybhsa

| 0.00s feature "book@am" => "book_am"
| 0.00s feature "book@ar" => "book_ar"
| 0.00s feature "book@bn" => "book_bn"
| 0.00s feature "book@da" => "book_da"
| 0.00s feature "book@de" => "book_de"
| 0.00s feature "book@el" => "book_el"
| 0.00s feature "book@en" => "book_en"
| 0.00s feature "book@es" => "book_es"
| 0.00s feature "book@fa" => "book_fa"
| 0.00s feature "book@fr" => "book_fr"
| 0.00s feature "book@he" => "book_he"
| 0.00s feature "book@hi" => "book_hi"
| 0.00s feature "book@id" => "book_id"
| 0.00s feature "book@ja" => "book_ja"
| 0.00s feature "book@ko" => "book_ko"
| 0.00s feature "book@la" => "book_la"
| 0.00s feature "book@nl" => "book_nl"
| 0.00s feature "book@pa" => "book_pa"
| 0.00s feature "book@pt" => "book_pt"
| 0.00s feature "book@ru" => "book_ru"
| 0.00s feature "book@sw" => "book_sw"
| 0.00s feature "book@syc" => "book_syc"
| 0.00s feature "book@tr" => "book_tr"
| 0.00s feature "book@ur" => "book_ur"
| 0.00s feature "book@yo" => "book_yo"
| 0.00s feature "book@zh" => "book_zh"

| 0.00s M code from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M det from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M dist from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M dist_unit from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M distributional_parent from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M domain from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M freq_occ from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M function from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M functional_parent from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_nme from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_nme_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_pfm from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_pfm_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_prs from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_prs_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_uvf from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_uvf_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_vbe from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_vbe_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_vbs from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M g_vbs_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M gn from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M instruction from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M is_root from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M kind from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M kq_hybrid from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M kq_hybrid_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M label from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M languageISO from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M lexeme_count from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M ls from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M mother_object_type from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M nametype from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M nme from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M nu from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.01s M number from /Users/dirk/github/etcbc/bhsa/tf/2017
| 0.00s M omap@2016-2017 from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s feature "omap@2016-2017" => "omap_2016_2017"

| 0.00s M otext@phono from /Users/dirk/github/etcbc/phono/tf/2017

| 0.00s M pargr from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M pdp from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M pfm from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M prs from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M prs_gn from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M prs_nu from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M prs_ps from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M ps from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M rank_lex from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M rank_occ from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M rela from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M root from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M st from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M suffix_gender from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M suffix_number from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M suffix_person from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M tab from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M txt from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M typ from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M uvf from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M vbe from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M vbs from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M voc_lex from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M vs from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s M vt from /Users/dirk/github/etcbc/bhsa/tf/2017

0.22s 114 features to export to MQL ...

0.22s Loading 114 features

| 0.05s B code from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.20s B det from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.15s B dist from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.21s B dist_unit from /Users/dirk/github/etcbc/bhsa/tf/2017

| 1.89s B distributional_parent from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.03s B domain from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.15s B freq_occ from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.15s B function from /Users/dirk/github/etcbc/bhsa/tf/2017

| 2.23s B functional_parent from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.10s B g_nme from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.20s B g_nme_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.12s B g_pfm from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.11s B g_pfm_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.11s B g_prs from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.12s B g_prs_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.08s B g_uvf from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.07s B g_uvf_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.08s B g_vbe from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.08s B g_vbe_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.07s B g_vbs from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.07s B g_vbs_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.10s B gn from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.03s B instruction from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.03s B is_root from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.03s B kind from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.10s B kq_hybrid from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.12s B kq_hybrid_utf8 from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.03s B label from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.20s B languageISO from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.13s B lexeme_count from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.20s B ls from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.10s B mother_object_type from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s B nametype from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.16s B nme from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.13s B nu from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.26s B number from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.81s B omap@2016-2017 from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.03s B pargr from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.15s B pdp from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.13s B pfm from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.13s B prs from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.14s B prs_gn from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.14s B prs_nu from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.13s B prs_ps from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.13s B ps from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.09s B rank_lex from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.09s B rank_occ from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.23s B rela from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.00s B root from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.11s B st from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.13s B suffix_gender from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.13s B suffix_number from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.14s B suffix_person from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.02s B tab from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.03s B txt from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.23s B typ from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.12s B uvf from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.13s B vbe from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.13s B vbs from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.01s B voc_lex from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.14s B vs from /Users/dirk/github/etcbc/bhsa/tf/2017

| 0.17s B vt from /Users/dirk/github/etcbc/bhsa/tf/2017

12s Writing enumerations

book_am : 39 values, 39 not a name, e.g. «መኃልየ_መኃልይ_ዘሰሎሞን»

book_ar : 39 values, 39 not a name, e.g. «1_اخبار»

book_bn : 39 values, 39 not a name, e.g. «আদিপুস্তক»

book_da : 39 values, 13 not a name, e.g. «1.Kongebog»

book_de : 39 values, 7 not a name, e.g. «1_Chronik»

book_el : 39 values, 39 not a name, e.g. «Άσμα_Ασμάτων»

book_en : 39 values, 6 not a name, e.g. «1_Chronicles»

book_es : 39 values, 22 not a name, e.g. «1_Crónicas»

book_fa : 39 values, 39 not a name, e.g. «استر»

book_fr : 39 values, 19 not a name, e.g. «1_Chroniques»

book_he : 39 values, 39 not a name, e.g. «איוב»

book_hi : 39 values, 39 not a name, e.g. «1_इतिहास»

book_id : 39 values, 7 not a name, e.g. «1_Raja-raja»

book_ja : 39 values, 39 not a name, e.g. «アモス書»

book_ko : 39 values, 39 not a name, e.g. «나훔»

book_nl : 39 values, 8 not a name, e.g. «1_Koningen»

book_pa : 39 values, 39 not a name, e.g. «1_ਇਤਹਾਸ»

book_pt : 39 values, 21 not a name, e.g. «1_Crônicas»

book_ru : 39 values, 39 not a name, e.g. «1-я_Паралипоменон»

book_sw : 39 values, 6 not a name, e.g. «1_Mambo_ya_Nyakati»

book_syc : 39 values, 39 not a name, e.g. «ܐ_ܒܪܝܡܝܢ»

book_tr : 39 values, 16 not a name, e.g. «1_Krallar»

book_ur : 39 values, 39 not a name, e.g. «احبار»

book_yo : 39 values, 8 not a name, e.g. «Amọsi»

book_zh : 38 values, 37 not a name, e.g. «以斯帖记»

domain : 4 values, 1 not a name, e.g. «?»

g_nme : 108 values, 108 not a name, e.g. «»

g_nme_utf8 : 106 values, 106 not a name, e.g. «»

g_pfm : 87 values, 87 not a name, e.g. «»

g_pfm_utf8 : 86 values, 86 not a name, e.g. «»

g_prs : 127 values, 127 not a name, e.g. «»

g_prs_utf8 : 126 values, 126 not a name, e.g. «»

g_uvf : 19 values, 19 not a name, e.g. «»

g_uvf_utf8 : 17 values, 17 not a name, e.g. «»

g_vbe : 101 values, 101 not a name, e.g. «»

g_vbe_utf8 : 97 values, 97 not a name, e.g. «»

g_vbs : 66 values, 66 not a name, e.g. «»

g_vbs_utf8 : 65 values, 65 not a name, e.g. «»

instruction : 35 values, 20 not a name, e.g. «.#»

nametype : 9 values, 4 not a name, e.g. «gens,topo»

nme : 20 values, 7 not a name, e.g. «»

pfm : 11 values, 4 not a name, e.g. «»

phono_trailer : 4 values, 4 not a name, e.g. «»

prs : 22 values, 4 not a name, e.g. «H=»

qere_trailer : 5 values, 5 not a name, e.g. «»

qere_trailer_utf8: 5 values, 5 not a name, e.g. «»

root : 648 values, 187 not a name, e.g. «<Assyrian>»

trailer : 12 values, 12 not a name, e.g. «»

trailer_utf8 : 12 values, 12 not a name, e.g. «»

txt : 136 values, 59 not a name, e.g. «?»

uvf : 6 values, 1 not a name, e.g. «>»

vbe : 19 values, 6 not a name, e.g. «»

vbs : 11 values, 3 not a name, e.g. «>»

| 2.23s Writing an all-in-one enum with 232 values

14s Mapping 114 features onto 13 object types

20s Writing 114 features as data in 13 object types

| 0.00s word data ...

| | 4.58s batch of size 46.6MB with 50000 of 50000 words

| | 9.17s batch of size 46.6MB with 50000 of 100000 words

| | 14s batch of size 46.8MB with 50000 of 150000 words

| | 19s batch of size 46.8MB with 50000 of 200000 words

| | 24s batch of size 47.0MB with 50000 of 250000 words

| | 28s batch of size 47.0MB with 50000 of 300000 words

| | 34s batch of size 47.2MB with 50000 of 350000 words

| | 39s batch of size 47.0MB with 50000 of 400000 words

| | 42s batch of size 24.9MB with 26584 of 426584 words

| 42s word data: 426584 objects

| 0.00s subphrase data ...

| | 0.63s batch of size 7.2MB with 50000 of 50000 subphrases

| | 1.26s batch of size 7.1MB with 50000 of 100000 subphrases

| | 1.44s batch of size 2.0MB with 13784 of 113784 subphrases

| 1.44s subphrase data: 113784 objects

| 0.00s phrase_atom data ...

| | 0.98s batch of size 10.9MB with 50000 of 50000 phrase_atoms

| | 1.94s batch of size 10.9MB with 50000 of 100000 phrase_atoms

| | 2.91s batch of size 11.0MB with 50000 of 150000 phrase_atoms

| | 3.88s batch of size 11.0MB with 50000 of 200000 phrase_atoms

| | 4.87s batch of size 11.0MB with 50000 of 250000 phrase_atoms

| | 5.22s batch of size 3.9MB with 17519 of 267519 phrase_atoms

| 5.22s phrase_atom data: 267519 objects

| 0.00s phrase data ...

| | 0.90s batch of size 9.8MB with 50000 of 50000 phrases

| | 1.79s batch of size 9.9MB with 50000 of 100000 phrases

| | 2.87s batch of size 9.9MB with 50000 of 150000 phrases

| | 3.83s batch of size 9.9MB with 50000 of 200000 phrases

| | 4.73s batch of size 9.9MB with 50000 of 250000 phrases

| | 4.80s batch of size 649.0KB with 3187 of 253187 phrases

| 4.80s phrase data: 253187 objects

| 0.00s clause_atom data ...

| | 1.29s batch of size 13.3MB with 50000 of 50000 clause_atoms

| | 2.31s batch of size 10.8MB with 40669 of 90669 clause_atoms

| 2.32s clause_atom data: 90669 objects

| 0.00s clause data ...

| | 1.24s batch of size 12.2MB with 50000 of 50000 clauses

| | 2.21s batch of size 9.3MB with 38101 of 88101 clauses

| 2.21s clause data: 88101 objects

| 0.00s sentence_atom data ...

| | 0.58s batch of size 6.6MB with 50000 of 50000 sentence_atoms

| | 0.75s batch of size 1.9MB with 14486 of 64486 sentence_atoms

| 0.75s sentence_atom data: 64486 objects

| 0.00s sentence data ...

| | 0.46s batch of size 5.1MB with 50000 of 50000 sentences

| | 0.58s batch of size 1.4MB with 13711 of 63711 sentences

| 0.58s sentence data: 63711 objects

| 0.00s half_verse data ...

| | 0.42s batch of size 4.5MB with 45180 of 45180 half_verses

| 0.42s half_verse data: 45180 objects

| 0.00s verse data ...

| | 0.34s batch of size 3.4MB with 23213 of 23213 verses

| 0.34s verse data: 23213 objects

| 0.00s lex data ...

| | 0.54s batch of size 4.7MB with 9233 of 9233 lexs

| 0.54s lex data: 9233 objects

| 0.00s chapter data ...

| | 0.07s batch of size 110.2KB with 929 of 929 chapters

| 0.07s chapter data: 929 objects

| 0.00s book data ...

| | 0.05s batch of size 28.3KB with 39 of 39 books

| 0.05s book data: 39 objects

1m 20s Done

Now you have a file ~/Downloads/mybhsa.mql of 530 MB.

You can import it into an Emdros database by saying:

cd ~/Downloads

rm -f mybhsa

mql -b 3 < mybhsa.mql

The result is an SQLite3 database mybhsa in the same directory (168 MB).

You can run a query against it by creating a text file test.mql with these contents:

select all objects where
[lex gloss ~ 'make'
    [word FOCUS]
]

And then say

mql -b 3 -d mybhsa test.mql

You will see raw query results: all word occurrences that belong to lexemes with make in their gloss.

It is not very pretty, and probably you should use a more visual Emdros tool to run those queries. You see a lot of node numbers, but the good thing is, you can look those node numbers up in Text-Fabric.
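Two details in the export log earlier in this section can be mimicked in a few lines: feature names such as book@sw come out as book_sw, and enumeration values are reported as "not a name" when, for instance, they start with a digit. The sketch below is inferred from that log only, not taken from the Text-Fabric source. It assumes that a "name" is an ASCII identifier and that every other character in a feature name becomes an underscore. Both function names are hypothetical.

```python
import re

# Assumption: a "name" is a plain ASCII identifier.
IDENT = re.compile(r'[A-Za-z_][A-Za-z0-9_]*')


def is_name(value):
    """True if value could serve as an identifier."""
    return IDENT.fullmatch(value) is not None


def mql_feature_name(name):
    """Replace every character that cannot occur in an identifier by '_'."""
    return re.sub(r'[^A-Za-z0-9_]', '_', name)


print(mql_feature_name('book@sw'))
print(mql_feature_name('omap@2016-2017'))

# A few Swahili book names from earlier in this tutorial.
swahili = ['Mwanzo', '1_Samweli', '2_Samweli', 'Wimbo_Ulio_Bora']
print([v for v in swahili if not is_name(v)])
```

Under this reading, the six Swahili book names that begin with a digit are exactly the six that the log reports as not being a name.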

Clean caches¶

Text-Fabric pre-computes data for you, so that it can be loaded faster. If the original data is updated, Text-Fabric detects it, and will recompute that data.

But in some cases, for example when the algorithms of Text-Fabric have changed without any changes in the data, you might want to clear the cache of precomputed results.

There are two ways to do that:

- Locate the .tf directory of your dataset, and remove all .tfx files in it. This might be a bit awkward to do, because the .tf directory is hidden on Unix-like systems.
- Call TF.clearCache(), which does exactly the same.

It is not handy to execute the following cell all the time, which is why I have commented it out. So if you really want to clear the cache, remove the comment sign below.

# TF.clearCache()
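The manual route, removing the .tfx files from the hidden .tf directory, can be scripted as well. The sketch below uses only the standard library. The helper names are hypothetical, and it assumes that the .tf directory sits directly inside the directory you pass in. By default it only reports what it would delete.

```python
from pathlib import Path


def list_tfx_files(data_dir):
    """Return the .tfx files in the hidden .tf directory under data_dir."""
    cache_dir = Path(data_dir).expanduser() / '.tf'
    return sorted(cache_dir.glob('*.tfx')) if cache_dir.is_dir() else []


def remove_tfx_files(data_dir, dry_run=True):
    """Delete the cached .tfx files; with dry_run=True only report them."""
    files = list_tfx_files(data_dir)
    if not dry_run:
        for path in files:
            path.unlink()
    return files
```

Call `remove_tfx_files(some_dir)` first to inspect the list, and pass `dry_run=False` only when the list is what you expect.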