Instructions¶

To run any of Eden's notebooks, please check the guides on our Wiki page.

There you will find instructions on how to deploy the notebooks on your local system, on Google Colab, or on MyBinder, as well as other useful links, troubleshooting tips, and more.

For this notebook you will need to download the Grape vine-210520-Healthy-zz-V1-20210225102831 dataset from Eden Library, and you may want to use the eden_tensorflow_transfer_learning.yml file to recreate a suitable conda environment.

Note: If you run into any problems while executing the notebook, don't hesitate to open an issue on GitHub. We will try to reply as soon as possible.

Background¶

In this notebook, we train an autoencoder with Keras and TensorFlow to remove noise from input images.

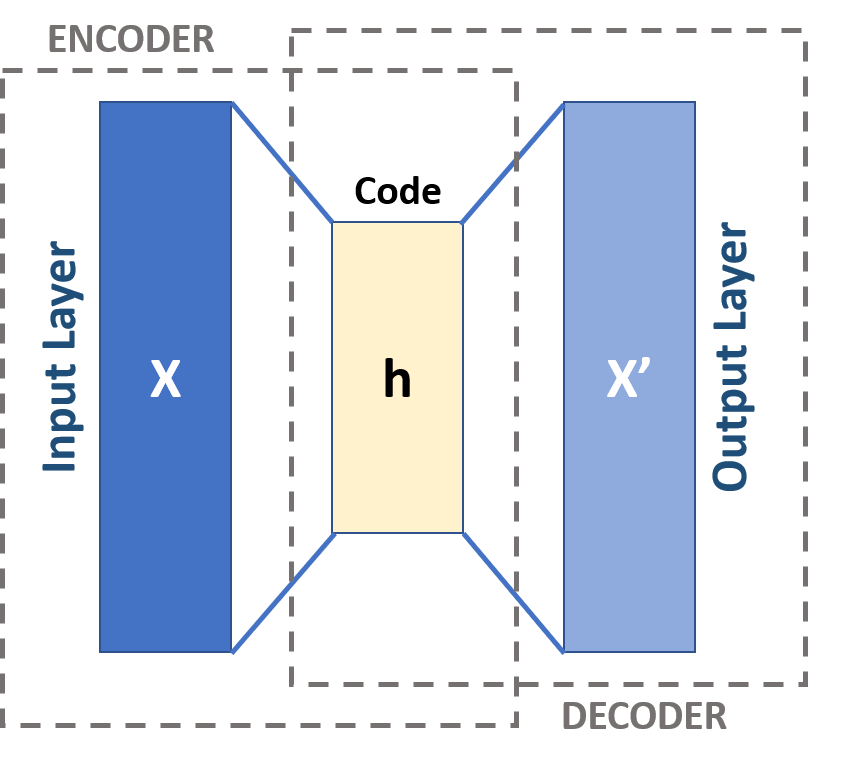

Autoencoders are a type of unsupervised neural network (i.e., they are trained without class labels or labeled data). As shown in the figure above, autoencoders typically have two components (subnetworks):

- Encoder: Accepts the input data and compresses it into a latent-space representation (i.e., a single vector that compresses and quantifies the input).

- Decoder: Accepts the latent-space representation and reconstructs the original input from it.

Autoencoders can be seen as a learned technique for data compression, similar to the way an audio file is compressed using MP3 or an image file is compressed using JPEG. The aim of training is to find a model (weights) that needs the minimum amount of information to encode the image while still allowing it to be recreated on the decoder side.

If we use too few neurons in the bottleneck layer (the last layer of the encoder), the capacity to recreate the image will be limited and the reconstructed images could be blurry or distorted. On the other hand, if the bottleneck uses too many neurons, there is little or no compression.
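To make this trade-off concrete, the sketch below compares the number of values in an input image with the number of values in a bottleneck. The shapes (a 256x256 RGB input and a 32x32 feature map with 32 channels) are assumptions chosen for illustration, and `compression_ratio` is a hypothetical helper, not part of any library.

```python
from math import prod


def compression_ratio(input_shape, latent_shape):
    """Return how many times smaller the latent representation is than the input."""
    return prod(input_shape) / prod(latent_shape)


input_shape = (256, 256, 3)   # assumed input: 256x256 RGB image
latent_shape = (32, 32, 32)   # assumed bottleneck: 32x32 feature map, 32 channels

ratio = compression_ratio(input_shape, latent_shape)
print(f"Input values:  {prod(input_shape)}")
print(f"Latent values: {prod(latent_shape)}")
print(f"Compression ratio: {ratio:.1f}x")
```

A larger ratio means stronger compression and, usually, a harder reconstruction task for the decoder.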

Moreover, autoencoders are typically used for:

- Dimensionality reduction: If all the activation functions used within the autoencoder are linear, the latent variables at the bottleneck correspond to the principal components from PCA (more precisely, they span the same subspace).

- Denoising

- Anomaly/outlier detection (e.g., detecting mislabeled data points in a dataset or detecting when an input data point falls well outside our typical data distribution).

In agriculture, researchers have used autoencoders for anomaly/novelty detection (Alexandridis et al., 2017) and denoising (Wen et al., 2015).
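As a rough illustration of the anomaly-detection use case, the sketch below uses PCA as a stand-in for a linear autoencoder and flags points whose reconstruction error is unusually high. The synthetic data, the 99th-percentile threshold, and the helper `reconstruction_error` are all assumptions made for this example; they are not taken from the cited papers.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic "normal" data: 200 points lying close to a 1-D line in 3-D space.
t = rng.normal(size=(200, 1))
direction = np.array([[1.0, 2.0, -1.0]])
normal_data = t @ direction + 0.05 * rng.normal(size=(200, 3))

# Fit a linear "autoencoder" with PCA: the top principal component is the bottleneck.
mean = normal_data.mean(axis=0)
_, _, vt = np.linalg.svd(normal_data - mean, full_matrices=False)
components = vt[:1]  # shape (1, 3): encoder weights; the transpose acts as decoder


def reconstruction_error(x):
    """Encode x to the 1-D latent space, decode it, and return the squared error per row."""
    latent = (x - mean) @ components.T          # encode
    reconstructed = latent @ components + mean  # decode
    return np.sum((x - reconstructed) ** 2, axis=1)


# Threshold: a high percentile of the errors seen on normal data (an assumed choice).
threshold = np.percentile(reconstruction_error(normal_data), 99)

inlier = np.array([[2.0, 4.0, -2.0]])   # lies on the line
outlier = np.array([[2.0, -4.0, 2.0]])  # far from the line

print("inlier flagged: ", bool(reconstruction_error(inlier)[0] > threshold))
print("outlier flagged:", bool(reconstruction_error(outlier)[0] > threshold))
```

The same idea carries over to a trained nonlinear autoencoder: inputs unlike the training data tend to be reconstructed poorly.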

Library Imports¶

import warnings

warnings.filterwarnings("ignore")

import numpy as np

import cv2

import os

import random

import matplotlib.pyplot as plt

from tqdm import tqdm

from glob import glob

from pathlib import Path

import tensorflow as tf

from tensorflow.keras.utils import to_categorical

from tensorflow.keras.applications import *

from tensorflow.keras import layers

from tensorflow.keras.models import Model

from tensorflow.keras.optimizers import Adam

from tensorflow.keras.callbacks import EarlyStopping, ReduceLROnPlateau

from sklearn.model_selection import train_test_split

from sklearn.metrics import f1_score

from sklearn.utils import shuffle

Auxiliary functions¶

# Function for plotting images.
def plot_sample(X):
    # Plot 9 randomly chosen sample images in a 3x3 grid.
    nb_rows = 3
    nb_cols = 3
    fig, axs = plt.subplots(nb_rows, nb_cols, figsize=(8, 8))
    for i in range(0, nb_rows):
        for j in range(0, nb_cols):
            axs[i, j].axis("off")
            axs[i, j].imshow(X[random.randint(0, X.shape[0] - 1)])

def read_data(path_list, im_size=(224, 224)):
    X = []
    y = []

    # Extract the folder names of the datasets we read and create a label dictionary.
    tag2idx = {tag.split(os.path.sep)[-1]: i for i, tag in enumerate(path_list)}
    print(tag2idx)

    for path in path_list:
        for im_file in tqdm(glob(path + "*/*")):  # Read all files in path
            try:
                # os.path.sep is OS agnostic (either '/' or '\'); [-2] grabs the folder name.
                label = im_file.split(os.path.sep)[-2]
                im = cv2.imread(im_file, cv2.IMREAD_COLOR)
                # OpenCV reads images in BGR order by default; convert to RGB.
                im = cv2.cvtColor(im, cv2.COLOR_BGR2RGB)
                # Resize to the target dimensions. You can try different interpolation methods.
                # im = quantize_image(im)
                im = cv2.resize(im, im_size, interpolation=cv2.INTER_AREA)
                X.append(im)
                y.append(tag2idx[label])  # Append the label index to y
            except Exception as e:
                # In case annotations or metadata are found
                print("Not a picture")

    X = np.array(X)  # Convert list to numpy array.
    y = np.eye(len(np.unique(y)))[y].astype(np.uint8)  # One-hot encode the labels.
    return X, y, tag2idx
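The one-hot encoding at the end of read_data relies on indexing an identity matrix with the list of label indices. A minimal sketch of that trick, using a hypothetical list of three classes:

```python
import numpy as np

labels = [0, 2, 1, 2]                 # hypothetical integer class labels
num_classes = len(np.unique(labels))  # 3 distinct classes

# Indexing the identity matrix with the label list picks one row per sample.
one_hot = np.eye(num_classes)[labels].astype(np.uint8)
print(one_hot)
```

Note that this only works when the labels are exactly 0..k-1 with every class present. With a single dataset folder, as in this notebook, every row is simply `[1]`.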

Experimental Constants¶

INPUT_SHAPE = (256, 256, 3)

IM_SIZE = (256, 256)

NUM_EPOCHS = 500

BATCH_SIZE = 8

TEST_SPLIT = 0.15

BASE_LEARNING_RATE = 1e-3

RANDOM_STATE = 2021

VERBOSE_LEVEL = 1

# Datasets' paths we want to work on.

PATH_LIST = ["../eden_library_datasets/Grape vine-210520-Healthy-zz-V1-20210225102831"]

Loading Data¶

X, y, tag2idx = read_data(PATH_LIST, IM_SIZE)

0%| | 1/444 [00:00<01:09, 6.34it/s]

{'Grape vine-210520-Healthy-zz-V1-20210225102831': 0}

66%|██████▋ | 295/444 [00:52<00:20, 7.13it/s]

Not a picture

100%|██████████| 444/444 [01:19<00:00, 5.57it/s]

Displaying Original Images¶

plot_sample(X)

Splitting data between train and test¶

x_train, x_test, y_train, y_test = train_test_split(
    X, y, test_size=TEST_SPLIT, shuffle=True, stratify=y, random_state=RANDOM_STATE
)
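The resulting split sizes can be estimated by hand. The sketch below assumes 443 usable images (444 files were read and one was skipped as "Not a picture") and assumes that a fractional test size is rounded up, which is how scikit-learn is understood to behave here; treat both as assumptions.

```python
from math import ceil

n_samples = 443     # assumed: 444 files found, 1 skipped as "Not a picture"
test_split = 0.15   # TEST_SPLIT
batch_size = 8      # BATCH_SIZE

n_test = ceil(test_split * n_samples)   # assumed rounding: test share rounded up
n_train = n_samples - n_test
steps_per_epoch = ceil(n_train / batch_size)

print(n_train, n_test, steps_per_epoch)
```

Under these assumptions the training set holds 376 images, which gives 47 batches per epoch at a batch size of 8.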

Pre-processing¶

x_train = x_train.astype("float32") / 255.0

x_test = x_test.astype("float32") / 255.0

Adding noise to the original images¶

noise_factor = 0.3

x_train_noisy = x_train + noise_factor * tf.random.normal(shape=x_train.shape)

x_test_noisy = x_test + noise_factor * tf.random.normal(shape=x_test.shape)

x_train_noisy = tf.clip_by_value(x_train_noisy, clip_value_min=0.0, clip_value_max=1.0)

x_test_noisy = tf.clip_by_value(x_test_noisy, clip_value_min=0.0, clip_value_max=1.0)
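The same corruption step can be sketched with NumPy alone, which is handy for quick experiments without TensorFlow. The random `clean` array below is a hypothetical stand-in for a batch of normalized images, not real data from the dataset.

```python
import numpy as np

rng = np.random.default_rng(2021)
noise_factor = 0.3

# Hypothetical stand-in for normalized images: 4 images of 8x8 RGB in [0, 1].
clean = rng.random((4, 8, 8, 3)).astype("float32")

# Add zero-mean Gaussian noise scaled by noise_factor, then clip to the valid range.
noisy = clean + noise_factor * rng.standard_normal(clean.shape).astype("float32")
noisy = np.clip(noisy, 0.0, 1.0)

print(noisy.min(), noisy.max())              # both stay within [0, 1]
print(float(np.mean((noisy - clean) ** 2)))  # MSE between noisy and clean images
```

The MSE between the noisy and clean images is a useful baseline: a denoiser is only helping if its reconstruction error ends up below it.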

Displaying Noisy Images¶

plot_sample(x_train_noisy)

Defining Autoencoder¶

class Denoise(Model):
    def __init__(self):
        super(Denoise, self).__init__()
        self.encoder = tf.keras.Sequential(
            [
                layers.Input(shape=(256, 256, 3)),
                layers.Conv2D(128, (3, 3), activation="relu", padding="same", strides=2),
                layers.Conv2D(64, (3, 3), activation="relu", padding="same", strides=2),
                # Bottleneck: 32x32x32 vs. the 256x256x3 input.
                layers.Conv2D(32, (3, 3), activation="relu", padding="same", strides=2),
            ]
        )
        self.decoder = tf.keras.Sequential(
            [
                layers.Conv2DTranspose(
                    64, kernel_size=3, strides=2, activation="relu", padding="same"
                ),
                layers.Conv2DTranspose(
                    128, kernel_size=3, strides=2, activation="relu", padding="same"
                ),
                layers.Conv2DTranspose(
                    256, kernel_size=3, strides=2, activation="relu", padding="same"
                ),
                layers.Conv2D(3, kernel_size=(3, 3), activation="sigmoid", padding="same"),
            ]
        )

    def call(self, x):
        encoded = self.encoder(x)
        decoded = self.decoder(encoded)
        return decoded
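To see how the spatial resolution changes through the network, the sketch below traces the height/width of a 256-pixel input through three stride-2 convolutions and three stride-2 transposed convolutions, all with padding="same". The helpers `conv_same` and `conv_transpose_same` are hypothetical names that only mirror the size arithmetic; they do not build any layers.

```python
from math import ceil


def conv_same(size, stride):
    """Spatial size after a Conv2D layer with padding="same"."""
    return ceil(size / stride)


def conv_transpose_same(size, stride):
    """Spatial size after a Conv2DTranspose layer with padding="same"."""
    return size * stride


size = 256
encoder_sizes = []
for _ in range(3):            # three stride-2 convolutions in the encoder
    size = conv_same(size, 2)
    encoder_sizes.append(size)

decoder_sizes = []
for _ in range(3):            # three stride-2 transposed convolutions in the decoder
    size = conv_transpose_same(size, 2)
    decoder_sizes.append(size)

print(encoder_sizes)  # [128, 64, 32]
print(decoder_sizes)  # [64, 128, 256]
```

The final Conv2D layer uses the default stride of 1, so it keeps the 256x256 resolution and only maps the channels back to 3.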

Creating Autoencoder¶

autoencoder = Denoise()

autoencoder.compile(
    optimizer=tf.keras.optimizers.Adam(learning_rate=1e-3),
    loss=tf.keras.losses.MeanSquaredError(),
)

WARNING:tensorflow:Please add `keras.layers.InputLayer` instead of `keras.Input` to Sequential model. `keras.Input` is intended to be used by Functional model.
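Because the images are scaled to [0, 1], a mean squared error value can be converted into a peak signal-to-noise ratio (PSNR), which is often easier to interpret for image quality. The sketch below shows the conversion; `psnr_from_mse` is a hypothetical helper and the loss values are made up for illustration.

```python
from math import log10


def psnr_from_mse(mse, max_value=1.0):
    """Peak signal-to-noise ratio in dB for a given mean squared error."""
    return 10.0 * log10(max_value ** 2 / mse)


for mse in (0.05, 0.01, 0.005):   # hypothetical loss values
    print(f"MSE {mse:.3f} -> PSNR {psnr_from_mse(mse):.1f} dB")
```

Lower MSE corresponds to higher PSNR, so a falling validation loss means cleaner reconstructions.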

Defining callbacks for improving training efficiency¶

# If val_loss doesn't improve for the number of epochs set by the 'patience' parameter,
# the current learning rate is multiplied by 'factor' (0.8).
reduce_lr = ReduceLROnPlateau(
    monitor="val_loss", mode="min", factor=0.8, patience=NUM_EPOCHS // 20, verbose=1
)

# If val_loss doesn't improve for the number of epochs set by the 'patience' parameter,
# training will stop to avoid overfitting.
early_stopping = EarlyStopping(
    monitor="val_loss",
    mode="min",
    patience=NUM_EPOCHS // 15,
    verbose=1,
    restore_best_weights=True,
)
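The effect of these settings can be worked out by hand. The sketch below computes the two patience values from NUM_EPOCHS and lists the learning rate after successive reductions, assuming a starting rate of 1e-3 and a factor of 0.8; it is plain arithmetic and does not call Keras.

```python
base_lr = 1e-3            # starting learning rate (BASE_LEARNING_RATE)
factor = 0.8              # ReduceLROnPlateau factor
num_epochs = 500          # NUM_EPOCHS

reduce_patience = num_epochs // 20   # epochs without improvement before a reduction
stop_patience = num_epochs // 15     # epochs without improvement before stopping

# Learning rate after k reductions: base_lr * factor**k
schedule = [base_lr * factor**k for k in range(1, 7)]

print(reduce_patience, stop_patience)
for k, lr in enumerate(schedule, start=1):
    print(f"after reduction {k}: {lr:.6f}")
```

Since the reduction patience (25) is shorter than the stopping patience (33), a stalled run will generally have its learning rate lowered at least once before early stopping can trigger.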

Training the AutoEncoder for removing noise¶

autoencoder.fit(
    x_train_noisy,
    x_train,
    epochs=NUM_EPOCHS,
    batch_size=BATCH_SIZE,
    shuffle=True,
    validation_data=(x_test_noisy, x_test),
    callbacks=[early_stopping, reduce_lr],
)

Epoch 1/500 47/47 [==============================] - 3s 51ms/step - loss: 0.0550 - val_loss: 0.0328 Epoch 2/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0261 - val_loss: 0.0198 Epoch 3/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0182 - val_loss: 0.0165 Epoch 4/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0156 - val_loss: 0.0153 Epoch 5/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0144 - val_loss: 0.0139 Epoch 6/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0135 - val_loss: 0.0130 Epoch 7/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0131 - val_loss: 0.0125 Epoch 8/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0123 - val_loss: 0.0123 Epoch 9/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0120 - val_loss: 0.0116 Epoch 10/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0121 - val_loss: 0.0115 Epoch 11/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0113 - val_loss: 0.0112 Epoch 12/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0115 - val_loss: 0.0110 Epoch 13/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0109 - val_loss: 0.0108 Epoch 14/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0109 - val_loss: 0.0105 Epoch 15/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0105 - val_loss: 0.0104 Epoch 16/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0108 - val_loss: 0.0130 Epoch 17/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0115 - val_loss: 0.0104 Epoch 18/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0102 - val_loss: 0.0101 Epoch 19/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0100 - val_loss: 0.0098 Epoch 20/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0101 - 
val_loss: 0.0098 Epoch 21/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0098 - val_loss: 0.0097 Epoch 22/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0099 - val_loss: 0.0096 Epoch 23/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0102 - val_loss: 0.0096 Epoch 24/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0096 - val_loss: 0.0095 Epoch 25/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0096 - val_loss: 0.0094 Epoch 26/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0096 - val_loss: 0.0094 Epoch 27/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0094 - val_loss: 0.0094 Epoch 28/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0094 - val_loss: 0.0092 Epoch 29/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0093 - val_loss: 0.0093 Epoch 30/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0094 - val_loss: 0.0092 Epoch 31/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0093 - val_loss: 0.0091 Epoch 32/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0093 - val_loss: 0.0091 Epoch 33/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0092 - val_loss: 0.0090 Epoch 34/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0092 - val_loss: 0.0090 Epoch 35/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0091 - val_loss: 0.0091 Epoch 36/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0091 - val_loss: 0.0090 Epoch 37/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0090 - val_loss: 0.0089 Epoch 38/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0090 - val_loss: 0.0089 Epoch 39/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0091 - val_loss: 0.0089 Epoch 40/500 47/47 [==============================] - 2s 
48ms/step - loss: 0.0089 - val_loss: 0.0095 Epoch 41/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0089 - val_loss: 0.0090 Epoch 42/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0089 - val_loss: 0.0088 Epoch 43/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0090 - val_loss: 0.0091 Epoch 44/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0088 - val_loss: 0.0088 Epoch 45/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0088 - val_loss: 0.0088 Epoch 46/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0088 - val_loss: 0.0088 Epoch 47/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0088 - val_loss: 0.0090 Epoch 48/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0087 - val_loss: 0.0087 Epoch 49/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0087 - val_loss: 0.0089 Epoch 50/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0087 - val_loss: 0.0087 Epoch 51/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0086 - val_loss: 0.0085 Epoch 52/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0086 - val_loss: 0.0085 Epoch 53/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0088 - val_loss: 0.0087 Epoch 54/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0086 - val_loss: 0.0086 Epoch 55/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0087 - val_loss: 0.0090 Epoch 56/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0086 - val_loss: 0.0087 Epoch 57/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0085 - val_loss: 0.0087 Epoch 58/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0086 - val_loss: 0.0085 Epoch 59/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0085 - val_loss: 0.0084 Epoch 60/500 47/47 
[==============================] - 2s 48ms/step - loss: 0.0086 - val_loss: 0.0084 Epoch 61/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0085 - val_loss: 0.0087 Epoch 62/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0085 - val_loss: 0.0084 Epoch 63/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0085 - val_loss: 0.0083 Epoch 64/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0086 - val_loss: 0.0084 Epoch 65/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0084 - val_loss: 0.0084 Epoch 66/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0084 - val_loss: 0.0083 Epoch 67/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0085 - val_loss: 0.0084 Epoch 68/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0084 - val_loss: 0.0085 Epoch 69/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0084 - val_loss: 0.0083 Epoch 70/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0085 - val_loss: 0.0085 Epoch 71/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0084 - val_loss: 0.0083 Epoch 72/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0084 Epoch 73/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0084 Epoch 74/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0086 - val_loss: 0.0082 Epoch 75/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0082 Epoch 76/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0083 Epoch 77/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0082 Epoch 78/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0083 Epoch 79/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 
0.0083 Epoch 80/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0086 - val_loss: 0.0082 Epoch 81/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0082 Epoch 82/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0083 Epoch 83/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0082 Epoch 84/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0082 Epoch 85/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0082 Epoch 86/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0082 - val_loss: 0.0081 Epoch 87/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0087 Epoch 88/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0082 Epoch 89/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0081 Epoch 90/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0081 Epoch 91/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0088 Epoch 92/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0081 Epoch 93/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0082 Epoch 94/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0081 Epoch 95/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0081 Epoch 96/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0082 Epoch 97/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0081 Epoch 98/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0081 - val_loss: 0.0081 Epoch 99/500 47/47 [==============================] - 2s 48ms/step - 
loss: 0.0081 - val_loss: 0.0083 Epoch 100/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0084 - val_loss: 0.0081 Epoch 101/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0081 Epoch 102/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0081 Epoch 103/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0081 - val_loss: 0.0081 Epoch 104/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0083 - val_loss: 0.0081 Epoch 105/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0081 Epoch 106/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0081 - val_loss: 0.0080 Epoch 107/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0081 Epoch 108/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0080 Epoch 109/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0080 - val_loss: 0.0081 Epoch 110/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0081 Epoch 111/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 112/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0080 Epoch 113/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0081 Epoch 114/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 115/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 116/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 117/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0080 Epoch 118/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 119/500 47/47 
[==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 120/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0081 Epoch 121/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 122/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 123/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0080 Epoch 124/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0081 Epoch 125/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0081 - val_loss: 0.0086 Epoch 126/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0082 - val_loss: 0.0080 Epoch 127/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 128/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0079 Epoch 129/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 130/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 131/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 132/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 133/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0081 Epoch 134/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0080 - val_loss: 0.0080 Epoch 135/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 136/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 137/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 138/500 47/47 [==============================] - 2s 48ms/step - loss: 
0.0080 - val_loss: 0.0082 Epoch 139/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 00139: ReduceLROnPlateau reducing learning rate to 0.000800000037997961. Epoch 140/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0078 - val_loss: 0.0079 Epoch 141/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0079 Epoch 142/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 143/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 144/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0079 Epoch 145/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 146/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 147/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 148/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 149/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0080 Epoch 150/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0079 Epoch 151/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0079 Epoch 152/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0079 Epoch 153/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 154/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0079 Epoch 155/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 156/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 157/500 47/47 [==============================] - 2s 
48ms/step - loss: 0.0079 - val_loss: 0.0079 Epoch 158/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 159/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0081 Epoch 160/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0079 - val_loss: 0.0078 Epoch 161/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0079 Epoch 162/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0079 - val_loss: 0.0078 Epoch 163/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 164/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 165/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 166/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 167/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 00167: ReduceLROnPlateau reducing learning rate to 0.0006400000303983689. 
Epoch 168/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 169/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 170/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 171/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 172/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 173/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 174/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 175/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 176/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 177/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 178/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 179/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 180/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 181/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 182/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 183/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 184/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0079 Epoch 185/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 186/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 187/500 47/47 [==============================] - 2s 
48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 188/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 189/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 190/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 191/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0078 - val_loss: 0.0078 Epoch 192/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 00192: ReduceLROnPlateau reducing learning rate to 0.0005120000336319208. Epoch 193/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 194/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 195/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 196/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 197/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 198/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 199/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 200/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 201/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 202/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 203/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 204/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 205/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 206/500 47/47 
[==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 207/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 208/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 209/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 210/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 211/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 212/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 213/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 214/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 215/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 216/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0078 Epoch 217/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0078 Epoch 218/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0078 Epoch 219/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0077 - val_loss: 0.0077 Epoch 220/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 00220: ReduceLROnPlateau reducing learning rate to 0.00040960004553198815. 
Epoch 221/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 222/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 223/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 224/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 225/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 226/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 227/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 228/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 229/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 230/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 231/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 232/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0078 Epoch 233/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 234/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 235/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 236/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 237/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 238/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 239/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 240/500 47/47 [==============================] - 2s 
47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 241/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0078 Epoch 242/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 243/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 244/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 245/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 00245: ReduceLROnPlateau reducing learning rate to 0.00032768002711236477. Epoch 246/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 247/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 248/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 249/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 250/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 251/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 252/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 253/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 254/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 255/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 256/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 257/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 258/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 259/500 47/47 
[==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 260/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 261/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 262/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 263/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 264/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 265/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 266/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 267/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 268/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 269/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 270/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 00270: ReduceLROnPlateau reducing learning rate to 0.0002621440216898918. 
Epoch 271/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 272/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 273/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 274/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 275/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 276/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 277/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 278/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 279/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 280/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 281/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 282/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 283/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 284/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 285/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 286/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 287/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 288/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 289/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 290/500 47/47 [==============================] - 2s 
47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 291/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 292/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 293/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 294/500 47/47 [==============================] - 2s 46ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 295/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0076 - val_loss: 0.0077 Epoch 00295: ReduceLROnPlateau reducing learning rate to 0.00020971521735191345. Epoch 296/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 297/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 298/500 47/47 [==============================] - 2s 51ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 299/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 300/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 301/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 302/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 303/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 304/500 47/47 [==============================] - 2s 51ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 305/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 306/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 307/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 308/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 309/500 47/47 
[==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 310/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 311/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 312/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 313/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 314/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 315/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 316/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 317/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 318/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 319/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 320/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 00320: ReduceLROnPlateau reducing learning rate to 0.00016777217388153076. 
Epoch 321/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 322/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 323/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 324/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 325/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 326/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 327/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 328/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 329/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 330/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 331/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 332/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 333/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 334/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 335/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 336/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 337/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 338/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 339/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 340/500 47/47 [==============================] - 2s 
48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 341/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 342/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 343/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 344/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 345/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 346/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 347/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 348/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 349/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0077 Epoch 350/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 351/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 352/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 353/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 354/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 355/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 356/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 357/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 358/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 359/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 360/500 
47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 361/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 362/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 363/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 364/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 365/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 366/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 367/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 368/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 00368: ReduceLROnPlateau reducing learning rate to 0.00013421773910522462. Epoch 369/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 370/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 371/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 372/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 373/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 374/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 375/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 376/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 377/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 378/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 
0.0076 Epoch 379/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 380/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 381/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 382/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 383/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 384/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 385/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 386/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 387/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 388/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 389/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 390/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 391/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 392/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 393/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 00393: ReduceLROnPlateau reducing learning rate to 0.00010737419361248613. 
Epoch 394/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 395/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 396/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 397/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 398/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 399/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 400/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 401/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 402/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 403/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 404/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 405/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 406/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 407/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 408/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 409/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 410/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 411/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 412/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 413/500 47/47 [==============================] - 2s 
49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 414/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 415/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 416/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 417/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 418/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 00418: ReduceLROnPlateau reducing learning rate to 8.589935605414213e-05. Epoch 419/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 420/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 421/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 422/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 423/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 424/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 425/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 426/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 427/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 428/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 429/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 430/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 431/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 432/500 47/47 
[==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 433/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 434/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 435/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 436/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 437/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 438/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 439/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 440/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 441/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 442/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 443/500 47/47 [==============================] - 2s 51ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 00443: ReduceLROnPlateau reducing learning rate to 6.871948717162013e-05. 
Epoch 444/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 445/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 446/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 447/500 47/47 [==============================] - 2s 47ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 448/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 449/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 450/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 451/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 452/500 47/47 [==============================] - 2s 51ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 453/500 47/47 [==============================] - 2s 52ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 454/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 455/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 456/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 457/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 458/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 459/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 460/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 461/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 462/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 463/500 47/47 [==============================] - 2s 
48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 464/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 465/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 466/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 467/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 468/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 00468: ReduceLROnPlateau reducing learning rate to 5.497558740898967e-05. Epoch 469/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 470/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 471/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 472/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 473/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 474/500 47/47 [==============================] - 2s 50ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 475/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 476/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 477/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 478/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 479/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 480/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 481/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 482/500 47/47 
[==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 483/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 484/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 485/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 486/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 487/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 488/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 489/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 490/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0075 - val_loss: 0.0076 Epoch 491/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 492/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 493/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 00493: ReduceLROnPlateau reducing learning rate to 4.398046876303852e-05. Epoch 494/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 495/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 496/500 47/47 [==============================] - 2s 49ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 497/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 498/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 499/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076 Epoch 500/500 47/47 [==============================] - 2s 48ms/step - loss: 0.0074 - val_loss: 0.0076

<tensorflow.python.keras.callbacks.History at 0x7f49abcec8d0>
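The learning rates reported by ReduceLROnPlateau in the log above appear to follow a simple geometric schedule. The sketch below checks that reading; it is not the notebook's own code. It assumes the initial rate of 1e-3 mentioned under "Possible Extensions" and a reduction factor of 0.8, which is inferred from the ratio of consecutive logged values rather than taken from the callback configuration. The names `INITIAL_LR`, `FACTOR`, `FIRST_K`, and `lr_after_reductions` are hypothetical.

```python
import math

INITIAL_LR = 1e-3  # stated in "Possible Extensions"
FACTOR = 0.8       # assumption: inferred from consecutive logged rates
FIRST_K = 4        # assumption: epoch 220 is the 4th reduction (1e-3 * 0.8**4)

# Epoch -> learning rate, copied from the ReduceLROnPlateau messages in the log.
logged = {
    220: 0.00040960004553198815,
    245: 0.00032768002711236477,
    270: 0.0002621440216898918,
    295: 0.00020971521735191345,
    320: 0.00016777217388153076,
    368: 0.00013421773910522462,
    393: 0.00010737419361248613,
    418: 8.589935605414213e-05,
    443: 6.871948717162013e-05,
    468: 5.497558740898967e-05,
    493: 4.398046876303852e-05,
}


def lr_after_reductions(k, lr0=INITIAL_LR, factor=FACTOR):
    """Learning rate after k plateau-triggered reductions."""
    return lr0 * factor ** k


for k, (epoch, reported) in enumerate(logged.items(), start=FIRST_K):
    predicted = lr_after_reductions(k)
    # The logged values are float32, so compare with a small relative tolerance.
    match = math.isclose(predicted, reported, rel_tol=1e-6)
    print(f"epoch {epoch}: reported {reported:.6e}, predicted {predicted:.6e}, match={match}")
```

Under these assumptions every logged value matches `1e-3 * 0.8**k`. By this point the validation loss has essentially flattened at about 0.0076, so further reductions change little.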

Removing noise with the trained autoencoder¶

encoded_imgs = autoencoder.encoder(x_test_noisy).numpy()

decoded_imgs = autoencoder.decoder(encoded_imgs).numpy()
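One way to put a number on the denoising result is to compare each image against its clean counterpart using the mean squared error (MSE) and the peak signal-to-noise ratio (PSNR). The sketch below is a NumPy-only illustration on synthetic arrays, not part of the original notebook. It assumes pixel values scaled to [0, 1]; `clean`, `noisy`, and `denoised_stub` are hypothetical stand-ins for the clean test images, `x_test_noisy`, and `decoded_imgs`.

```python
import numpy as np


def mse(a, b):
    """Mean squared error between two arrays of the same shape."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    return float(np.mean((a - b) ** 2))


def psnr(reference, estimate, max_val=1.0):
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    err = mse(reference, estimate)
    if err == 0.0:
        return float("inf")
    return float(10.0 * np.log10(max_val ** 2 / err))


# Synthetic stand-ins (assumption: images are floats in [0, 1]).
rng = np.random.default_rng(0)
clean = rng.random((4, 8, 8, 3))
noisy = np.clip(clean + 0.2 * rng.standard_normal(clean.shape), 0.0, 1.0)
# Placeholder for a model output: halfway between noisy and clean.
denoised_stub = 0.5 * (clean + noisy)

print(f"PSNR noisy vs clean:    {psnr(clean, noisy):.2f} dB")
print(f"PSNR denoised vs clean: {psnr(clean, denoised_stub):.2f} dB")
```

With the real data, the same two calls would take the clean test images as `reference` and `x_test_noisy` or `decoded_imgs` as `estimate`. A higher PSNR for the reconstruction than for the noisy input would indicate that noise was removed.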

Displaying noisy and denoised images¶

n = 3

plt.figure(figsize=(8, 4), dpi=100)

for i in range(n):
    # display original + noise
    ax = plt.subplot(2, n, i + 1)
    plt.title("original + noise", fontsize=8)
    plt.imshow(x_test_noisy[i])
    ax.axis("off")

    # display reconstruction
    bx = plt.subplot(2, n, i + n + 1)
    plt.title("reconstructed", fontsize=8)
    plt.imshow(decoded_imgs[i])
    bx.axis("off")

plt.subplots_adjust(wspace=-0.5, hspace=0.25)

plt.show()

Image Compression¶

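The encoder compresses each 256 x 256 x 3 input image (196,608 values) into a 32 x 32 x 32 latent representation (32,768 values):

256 x 256 x 3 (196,608) -> 32 x 32 x 32 (32,768)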
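The element counts above follow directly from the tensor shapes. The short sketch below reproduces the arithmetic and the resulting compression ratio; the variable names are illustrative, and the latent shape is taken from the encoder's output shape in the model summary.

```python
import math

input_shape = (256, 256, 3)   # height x width x RGB channels
latent_shape = (32, 32, 32)   # encoder output, batch dimension omitted

input_size = math.prod(input_shape)    # 196608 values per image
latent_size = math.prod(latent_shape)  # 32768 values per image
ratio = input_size / latent_size       # 6.0

print(f"{input_size:,} -> {latent_size:,} values ({ratio:.0f}x fewer)")
```

This counts values only. The actual storage saving also depends on the data type used for the input and for the latent representation.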

autoencoder.summary()

Model: "denoise_12"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
sequential_26 (Sequential)   (None, 32, 32, 32)        95840
_________________________________________________________________
sequential_27 (Sequential)   (None, 256, 256, 3)       394435
=================================================================
Total params: 490,275
Trainable params: 490,275
Non-trainable params: 0
_________________________________________________________________

Possible Extensions¶

- Change the number of epochs used while training (500 in this notebook).

- Use another dataset from the Eden platform.

- Change the initial learning rate used while training (1e-3 in this notebook).

References¶

Alexandridis, T., Tamouridou, A.A., Pantazi, X., Lagopodi, A., Kashefi, J., Ovakoglou, G., Polychronos, V., & Moshou, D. (2017). Novelty Detection Classifiers in Weed Mapping: Silybum marianum Detection on UAV Multispectral Images. Sensors (Basel, Switzerland), 17.

Wen, C., Wu, D., Hu, H., & Pan, W. (2015). Pose estimation-dependent identification method for field moth images using deep learning architecture. Biosystems Engineering, 136, 117-128.

https://towardsdatascience.com/generating-images-with-autoencoders-77fd3a8dd368

https://blog.keras.io/building-autoencoders-in-keras.html

https://www.pyimagesearch.com/2020/02/17/autoencoders-with-keras-tensorflow-and-deep-learning/