DIY Redaction Art

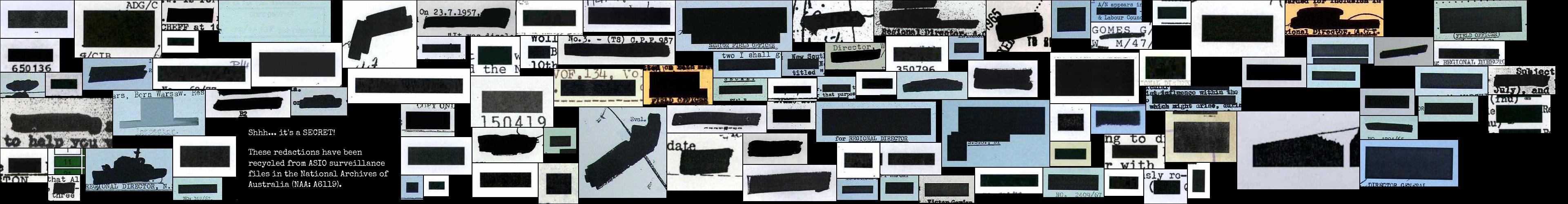

Redactions are a way of restricting access, of withholding information – they're dead ends. But here you can recycle redactions into something interesting, something creative, perhaps even something beautiful.

The redactions used here were extracted from surveillance files created by the Australian Security Intelligence Organisation (ASIO). The files recorded information about the activities of people who were deemed of interest to the government – due to their background, their beliefs, or perhaps just their friends. We don't know how many surveillance files have been created by ASIO over the years, but there are currently more than 7,800 files on individuals available in series A6119 at the National Archives of Australia. Of these, 2,606 have been digitised.

Most of these files are 'Open with exception', which means that the public versions have pages removed and redactions applied – many, many redactions. In April 2021, I downloaded all the digitised files from A6119, comprising 280,134 page images. Using a machine learning model based on YOLOv5, I found 404,653 redactions in the images. Of the 280,134 pages, 151,102 (54%) included redactions. The redaction-finding model isn't perfect, but the number of false positives seems very small (probably less than 1 percent). I'll be sharing more information about the process shortly.
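As a quick sanity check on those figures, here's a small sketch that recomputes the proportion of pages with redactions and the average number of redactions per page. It only uses the numbers quoted above; the variable names are mine.

```python
# Figures quoted in the text above
pages_total = 280_134
pages_with_redactions = 151_102
redactions_found = 404_653

# Share of page images that contain at least one redaction
share_redacted = pages_with_redactions / pages_total

# Average number of redactions per page, overall and for redacted pages only
per_page = redactions_found / pages_total
per_redacted_page = redactions_found / pages_with_redactions

print(f"{share_redacted:.0%} of pages include redactions")
print(f"{per_page:.2f} redactions per page overall")
print(f"{per_redacted_page:.2f} redactions per page that has any")
```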

To make your own redaction art collages, just set the desired size of your final image and click on the button below. A random sample of redactions will be obtained from the dataset and packed into the image dimensions. Once it's finished you'll be able to download both the finished collage, and a CSV dataset containing metadata that describes all the redactions used, including original file references. If you're not happy with the result, try again. Every piece of redaction art is unique. Please share your creations using the #redactionart tag.

Keep a look out for an assortment of redaction art critters and doodles which I found living in the files. There should be at least one in every collage.

# Import what we need

import io

import os

import random

from datetime import datetime

from pathlib import Path

import ipywidgets as widgets

import pandas as pd

import requests

from IPython.display import HTML, display

from PIL import Image, ImageOps

from rectpack import SORT_NONE, newPacker

from tqdm.auto import tqdm

def choose_art():
    """
    Select a random piece of redactionart to include in the composite.
    """
    # Each line holds an image name, width, and height; skip any blank lines
    lines = Path("data", "redactionart.txt").read_text().splitlines()
    redactionart = [line for line in lines if line.strip()]
    red = random.choice(redactionart)
    img, w, h = red.split()
    return (img, int(w), int(h))
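Judging from the way each line is split into three parts, `data/redactionart.txt` seems to hold one piece of redaction art per line as an image name, a width, and a height, separated by whitespace. The file itself isn't shown here, so treat that format as an assumption. A minimal sketch of the parsing, using a made-up listing (the `parse_art_listing` helper and the file names are hypothetical):

```python
import random


def parse_art_listing(text):
    """Parse lines of the form '<image name> <width> <height>', skipping blanks."""
    entries = []
    for line in text.splitlines():
        if not line.strip():
            continue
        name, w, h = line.split()
        entries.append((name, int(w), int(h)))
    return entries


# Hypothetical listing; these names and sizes are invented for illustration
sample_listing = "critter-01.jpg 120 80\ndoodle-02.jpg 64 64\n"

entries = parse_art_listing(sample_listing)
choice = random.choice(entries)
print(choice)
```

Because blank lines are skipped, a trailing newline at the end of the listing doesn't cause problems.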

def get_image_data(width, height, max_size):
    """
    Get a randomly selected list of redactions to be packed into the composite.
    Insert the citation image and a piece of redactionart.
    """
    with out:
        print("Gathering data...")
    # Make an estimate of how many redactions are needed.
    # If the image max_size is reduced, this might need to be changed
    # by increasing the final factor.
    sample_size = round(((width * height) / 1000000) * 70)
    images = []
    # Open the redactions dataset as a dataframe
    redactions = pd.read_csv(Path("data", "A6119-redactions.csv"))
    # Select a random sample of redactions and loop through them
    for red in redactions.sample(sample_size).itertuples():
        img_w = red.img_width
        img_h = red.img_height
        # Only include redactions smaller than the max_size
        if img_w < max_size and img_h < max_size:
            # Add 2 pixels to each dimension to leave room for a 1 pixel border
            images.append((img_w + 2, img_h + 2, red.img_name))
    # Select a random point in the first half of the list to insert the citation.
    # We put it in the first half to try and make sure it gets included.
    ref_loc = random.choice(range(1, round(sample_size / 2)))
    images.insert(ref_loc, (402, 202, "redactions-citation.jpg"))
    # Select a random point in the first half of the list to insert the redactionart.
    art, art_w, art_h = choose_art()
    art_loc = random.choice(range(1, round(sample_size / 2)))
    images.insert(art_loc, (art_w + 2, art_h + 2, art))
    return images
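To get a feel for the sample size estimate, here's a small sketch that pulls the calculation out into a function of its own. The formula is the one used above (70 redactions per million pixels); the function names are mine, and I'm assuming the `+ 2` on each dimension is there to make room for the 1 pixel border added when the images are pasted.

```python
def estimate_sample_size(width, height, per_megapixel=70):
    """Estimate how many redactions to sample for a collage of this size."""
    return round(((width * height) / 1_000_000) * per_megapixel)


def padded_size(img_w, img_h, border=1):
    """Space to reserve for an image with a border on every side."""
    return (img_w + 2 * border, img_h + 2 * border)


for w, h in [(3200, 1800), (3840, 2400), (500, 500)]:
    print(f"{w} x {h}: sample {estimate_sample_size(w, h)} redactions")

# An image of 400 x 200 pixels would need a 402 x 202 slot
print(padded_size(400, 200))
```

If you lower the maximum redaction size, more of the sample gets filtered out, so raising `per_megapixel` is one way to keep the collage full.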

def pack_images(width, height, max_size):
    """
    Pack a list of images into the space defined by width and height.
    """
    images = get_image_data(width, height, max_size)
    with out:
        print("Packing images...")
    packer = newPacker(sort_algo=SORT_NONE, rotation=False)
    for i in images:
        packer.add_rect(*i)
    packer.add_bin(width, height)
    packer.pack()
    return len(images), packer.rect_list()
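The packing itself is handled by `rectpack`, but the basic idea can be illustrated with something much simpler. The sketch below is a naive "shelf" packer that fills rows from left to right in the order given. It is not the algorithm `rectpack` uses, and its results will look different; it's only meant to show what a packer takes in and gives back, and why rectangles near the end of the list can miss out. The function name and the sample rectangles are hypothetical.

```python
def shelf_pack(rects, bin_width, bin_height):
    """
    Place rectangles left to right in rows ("shelves"), in the order given.

    rects is an iterable of (width, height, rid) tuples.
    Returns a list of (x, y, w, h, rid) for the rectangles that fit.
    Rectangles that don't fit are skipped.
    """
    placed = []
    x = y = shelf_height = 0
    for w, h, rid in rects:
        if w > bin_width:
            continue  # wider than the bin, can never fit
        if x + w > bin_width:
            # Current shelf is full, so start a new one beneath it
            y += shelf_height
            x = shelf_height = 0
        if y + h > bin_height:
            continue  # no vertical room left for this rectangle
        placed.append((x, y, w, h, rid))
        x += w
        shelf_height = max(shelf_height, h)
    return placed


# Invented sizes, apart from the 402 x 202 citation slot used above
rects = [
    (402, 202, "redactions-citation.jpg"),
    (300, 100, "a.jpg"),
    (400, 150, "b.jpg"),
    (200, 300, "c.jpg"),
]
placed = shelf_pack(rects, 800, 400)
for rect in placed:
    print(rect)
```

In this example the last rectangle is left out because there's no room for it. That's the reason the citation and the redaction art are inserted in the first half of the list: with no sorting applied, earlier rectangles have a better chance of being placed.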

def create_file_list(rectangles):
    """
    Create a dataframe describing the redactions used in the composite,
    including references to the files they came from.
    """
    # The last value in each packed rectangle is the image name
    redactions = [r[5] for r in rectangles]
    df_redactions = pd.read_csv(Path("data", "A6119-redactions.csv"))
    df_used = df_redactions.loc[df_redactions["img_name"].isin(redactions)]
    df_items = pd.read_csv(Path("data", "A6119-items.csv"))
    # Add item metadata to each redaction
    df_refs = pd.merge(
        df_used, df_items, how="left", left_on="item_id", right_on="identifier"
    )
    # Use the same url pattern as the image downloads in create_composite
    df_refs["redaction_url"] = df_refs["img_name"].apply(
        lambda x: f"https://asiodata.s3.amazonaws.com/a6119-redactions/{x}"
    )
    df_refs["recordsearch_url"] = df_refs["item_id"].apply(
        lambda x: f"https://recordsearch.naa.gov.au/scripts/AutoSearch.asp?O=I&Number={x}"
    )
    return df_refs
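The dataframe created here is what ends up in the CSV file you can download with your collage. You don't need pandas to explore it. Here's a minimal sketch using only the standard library to count how many redactions came from each file. The column names are taken from the code above, but the rows, the `example.org` urls, and the `redactions_per_file` helper are invented for illustration.

```python
import csv
import io
from collections import Counter

# Hypothetical rows; a real CSV will include more columns than this
sample_csv = """img_name,item_id,redaction_url,recordsearch_url
red-001.jpg,1001,https://example.org/red-001.jpg,https://example.org/item/1001
red-002.jpg,1001,https://example.org/red-002.jpg,https://example.org/item/1001
red-003.jpg,1002,https://example.org/red-003.jpg,https://example.org/item/1002
"""


def redactions_per_file(csv_text):
    """Count the redactions used from each file, keyed by item_id."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return Counter(row["item_id"] for row in reader)


counts = redactions_per_file(sample_csv)
for item_id, total in counts.most_common():
    print(item_id, total)
print(f"{sum(counts.values())} redactions used from {len(counts)} files")
```

To run this on a real download, read the text from the file instead, for example with `Path("your-collage.csv").read_text()`.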

def create_composite(
    width=3840,
    height=2400,
    max_size=600,
    bg_colour=(0, 0, 0),
    img_path="",
    output_file=None,
):
    num_images, rectangles = pack_images(width, height, max_size)
    comp = Image.new("RGB", (width, height), bg_colour)
    with out:
        print("Downloading images...")
        for rect in tqdm(rectangles):
            b, x, y, w, h, rid = rect
            # print(x,y, w, h, rid)
            # Get the citation image from the current directory
            if rid == "redactions-citation.jpg":
                red = Image.open(Path("redactions-citation.jpg"))
            # Get image from local path if set
            elif img_path:
                red_path = Path(img_path, rid)
                red = Image.open(red_path)
            # Otherwise get image from s3
            else:
                img_url = f"https://asiodata.s3.amazonaws.com/a6119-redactions/{rid}"
                data = requests.get(img_url).content
                red = Image.open(io.BytesIO(data))
            red = red.convert("RGB")
            red_with_border = ImageOps.expand(red, border=1, fill=bg_colour)
            comp.paste(red_with_border, (x, y, x + w, y + h))
    if not output_file:
        timestamp = int(datetime.now().timestamp())
        output_file = f"redactions-{timestamp}-{width}-{height}"
    output_image = Path(f"{output_file}.jpg")
    output_csv = Path(f"{output_file}.csv")
    comp.save(output_image)
    refs = create_file_list(rectangles)
    refs.to_csv(output_csv, index=False)
    files_used = refs["item_id"].nunique()
    out.clear_output()
    with out:
        display(
            HTML(
                f'{len(rectangles) - 1} redactions used from {files_used} files – <a href="{str(output_csv)}" download="{output_csv.name}">download CSV</a>'
            )
        )
        display(
            HTML(
                f'<a href="{str(output_image)}" download="{output_image.name}">Download image</a>'
            )
        )
        display(comp)

style = {"description_width": "initial"}

width = widgets.BoundedIntText(
    value=3200,
    min=500,
    max=5000,
    step=10,
    description="Width:",
    disabled=False,
    style=style,
)

height = widgets.BoundedIntText(
    value=1800,
    min=500,
    max=5000,
    step=10,
    description="Height:",
    disabled=False,
    style=style,
)

max_size = widgets.BoundedIntText(
    value=400,
    min=100,
    max=1000,
    step=10,
    description="Max redaction size:",
    disabled=False,
    style=style,
)

bg_colour = widgets.ColorPicker(
    concise=False,
    description="Background colour",
    value="black",
    disabled=False,
    style=style,
)

go = widgets.Button(
    description="Go!",
    disabled=False,
    button_style="primary",  # 'success', 'info', 'warning', 'danger' or ''
    tooltip="Click me to make art",
    icon="",
)

out = widgets.Output()

def start(b):
    out.clear_output()
    create_composite(
        width=width.value,
        height=height.value,
        max_size=max_size.value,
        bg_colour=bg_colour.value,
    )

go.on_click(start)

display(width, height, max_size, bg_colour, go, out)

Notes

- Some of the redactions are just very big black boring boxes. To prevent them filling up your collage there's a maximum size value to filter redactions by size. The default value should produce good results in most cases.

- If your image size is large (more than about 4,000 pixels), you might find that the packing algorithm becomes quite slow. It's still working, so just be patient.

- I've set a limit of 5,000 x 5,000 pixels on the image size, just for performance reasons. But this is a Jupyter notebook, so if you want bigger you can always grab the code and modify it.

%%capture

# Load environment variables if available

%load_ext dotenv

%dotenv

# TESTING
if os.getenv("GW_STATUS") == "dev":
    width.value = 500
    height.value = 500
    go.click()

Created by Tim Sherratt for the GLAM Workbench.

Support me by becoming a GitHub sponsor!