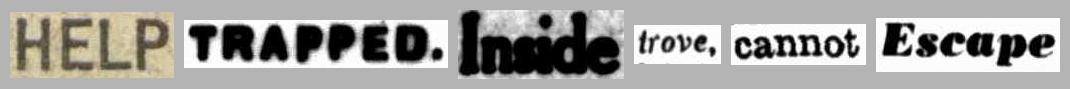

Create 'scissors and paste' messages from Trove newspaper articles

When you search for a term in Trove's digitised newspapers and click on an individual article, you'll see that your search terms are highlighted. If you look at the page's code, you'll see that the highlighted box around each word includes its page coordinates. That means that if we search for a word, we can find where it appears on a page, and by cropping the page image to those coordinates we can create an image of an individual word. By combining these images we can create scissors and paste style messages!
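As a rough illustration of that idea, the sketch below reads the coordinates from a highlighted term and turns them into a padded crop rectangle. It uses only the standard library so it can run on its own; the notebook itself uses BeautifulSoup for the same job. The sample markup and its coordinate values are invented for illustration, and `HighlightParser` and `crop_bounds` are hypothetical helper names, not part of the notebook.

```python
from html.parser import HTMLParser


class HighlightParser(HTMLParser):
    """Collect the data-x/y/w/h attributes of highlighted search terms."""

    def __init__(self):
        super().__init__()
        self.boxes = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if tag == "span" and "highlightedTerm" in classes:
            self.boxes.append(
                {
                    "left": int(attrs["data-x"]),
                    "top": int(attrs["data-y"]),
                    "width": int(attrs["data-w"]),
                    "height": int(attrs["data-h"]),
                }
            )


def crop_bounds(box, padding=5):
    """Return (left, upper, right, lower) with a small margin around the word."""
    return (
        box["left"] - padding,
        box["top"] - padding,
        box["left"] + box["width"] + padding,
        box["top"] + box["height"] + padding,
    )


# Invented sample markup; real pages will have different values
sample_html = (
    '<span class="highlightedTerm" data-x="1200" data-y="3400" '
    'data-w="150" data-h="40">testing</span>'
)

parser = HighlightParser()
parser.feed(sample_html)
bounds = crop_bounds(parser.boxes[0])
print(bounds)
```

The resulting tuple is in the (left, upper, right, lower) order that Pillow's `Image.crop()` expects, so it could be passed straight to a page image.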

In this notebook you can create your own 'scissors and paste' messages:

- Enter a word in the box below and click Add word.

- Because of OCR errors, the result might not be what you want. If so, click Retry last word to make another attempt.

- When you've finished adding words, click on the 'Download' link to save.

- To start again click Clear all.

Some things to note:

- If you don't have a Trove API key, you can [get one here](https://trove.nla.gov.au/about/create-something/using-api).

- The article used to extract the word is chosen randomly from the first 100 search results and, if the word appears multiple times in an article, one instance is chosen at random. This mixes up the results so that Retry last word should produce something different.

- Short, common words like 'I' and 'a' don't seem to work very well, so it's probably easiest to just leave them out of your message. Who cares about grammar!
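The two-stage random selection described in the notes above can be sketched as follows. This is a simplified stand-in, not the notebook's code: `pick_box` is a hypothetical name, the article and box data are invented, and the `max_attempts` cap is an assumption added here so the sketch cannot loop forever when no article yields a highlight box.

```python
import random


def pick_box(articles, get_boxes, rng=random, max_attempts=20):
    """
    Choose a random article, then a random highlight box within it.
    Returns None if no boxes are found after max_attempts tries.
    """
    if not articles:
        return None
    for _ in range(max_attempts):
        article = rng.choice(articles)
        boxes = get_boxes(article)
        if boxes:
            return rng.choice(boxes)
    return None


# Invented stand-in data: article ids mapped to their highlight boxes
fake_boxes = {
    "article-a": [],
    "article-b": [{"left": 100}],
    "article-c": [{"left": 200}, {"left": 300}],
}

picked = pick_box(list(fake_boxes), fake_boxes.get)
print(picked)
```

Because both the article and the box are chosen at random, calling `pick_box` again with the same inputs will usually return a different box, which is what makes a retry worthwhile.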

In [ ]:

# This notebook is designed to run in Voila

# If you can see the code, just select 'Run all cells' from the menu to create the widgets.

In [ ]:

# Import what we need

import base64

import os

import random

from io import BytesIO

import ipywidgets as widgets

import requests

from bs4 import BeautifulSoup

from IPython.display import HTML, display

from PIL import Image

In [ ]:

%%capture

# Load env variables

%load_ext dotenv

%dotenv

In [ ]:

# Some global variables

words = []

last_kw = ""

# Widgets

results = widgets.Output()

status = widgets.Output()

key = widgets.Text(description="Your API key:")

word_to_add = widgets.Text(description="Word to add:")

button_add = widgets.Button(
    description="Add word",
    button_style="primary",
)

button_retry = widgets.Button(description="Retry last word")

button_clear = widgets.Button(description="Clear all")

def get_word_boxes(article_url):
    """
    Get the boxes around highlighted search terms.
    """
    boxes = []
    # Get the article page
    response = requests.get(article_url)
    # Load in BS4
    soup = BeautifulSoup(response.text, "lxml")
    # Get the id of the newspaper page
    page_id = soup.select("div.zone.onPage")[0]["data-page-id"]
    # Find the highlighted terms
    words = soup.select("span.highlightedTerm")
    # Save the box coords
    for word in words:
        box = {
            "page_id": page_id,
            "left": int(word["data-x"]),
            "top": int(word["data-y"]),
            "width": int(word["data-w"]),
            "height": int(word["data-h"]),
        }
        boxes.append(box)
    return boxes

def crop_word(box):
    """
    Crop the box coordinates from the full page image.
    """
    global words
    # Construct the url we need to download the page image
    page_url = (
        "https://trove.nla.gov.au/ndp/imageservice/nla.news-page{}/level{}".format(
            box["page_id"], 7
        )
    )
    # Download the page image
    response = requests.get(page_url)
    # Open download as an image for editing
    img = Image.open(BytesIO(response.content))
    # Crop to the word, leaving a 5 pixel margin on each side
    word = img.crop(
        (
            box["left"] - 5,
            box["top"] - 5,
            box["left"] + box["width"] + 5,
            box["top"] + box["height"] + 5,
        )
    )
    img.close()
    words.append(word)
    display_words()

def get_word_sizes():
    """
    Get the max word height and total length of the saved words.
    These will be used for the height and width of the composite image.
    """
    max_height = 0
    total_width = 0
    for word in words:
        max_height = max(max_height, word.size[1])
        total_width += word.size[0] + 10
    return max_height + 20, total_width + 10

def display_words():
    """
    Create a composite image from the saved words and display with a Download link.
    """
    results.clear_output()
    word_to_add.value = ""
    h, w = get_word_sizes()
    # Create a new composite image
    comp = Image.new("RGB", (w, h), (180, 180, 180))
    left = 10
    # Paste the words into the composite, adjusting the left (start) position as we go
    for word in words:
        # Centre the word vertically in the composite
        top = int(round((h / 2) - (word.size[1] / 2)))
        comp.paste(word, (left, top))
        # Move along the start point
        left += word.size[0] + 10
    # Create an in-memory file object
    image_file = BytesIO()
    # Save the image into the file object
    comp.save(image_file, "JPEG")
    # Go to the start of the file object
    image_file.seek(0)
    # For the download link we can use a data uri -- a base64 encoded version of the file
    # Encode the file
    encoded_image = base64.b64encode(image_file.read()).decode()
    # Create a data uri string (the image is saved as JPEG, so use the JPEG media type)
    encoded_string = "data:image/jpeg;base64," + encoded_image
    # Reset to the beginning
    image_file.seek(0)
    status.clear_output()
    with results:
        # Create a download link using the data uri
        display(HTML(f'<a download="words.jpg" href="{encoded_string}">Download</a>'))
        # Display the image
        display(widgets.Image(value=image_file.read(), format="jpg"))

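def retry_last_word(b):
    """
    Replace the most recently added word with a new instance of it.
    """
    # There's nothing to retry until a word has been added
    if not words or not last_kw:
        return
    words.pop()
    get_article_from_search(last_kw)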

def clear_all_words(b):
    global words, last_kw
    results.clear_output()
    words.clear()
    word_to_add.value = ""
    last_kw = ""

def get_article_from_search(kw):
    """
    Use the Trove API to find articles with the supplied keyword.
    """
    global last_kw
    last_kw = kw
    with status:
        display(HTML("Finding word..."))
    params = {
        "q": f'text:"{kw}"',
        "zone": "newspaper",
        "encoding": "json",
        "n": 100,
        "key": key.value,
    }
    response = requests.get("https://api.trove.nla.gov.au/v2/result", params=params)
    data = response.json()
    articles = data["response"]["zone"][0]["records"]["article"]
    boxes = []
    # Choose article at random and look for highlight boxes
    # Continue until some boxes are found
    while len(boxes) == 0:
        article = random.choice(articles)
        # print(article['troveUrl'])
        try:
            boxes = get_word_boxes(article["troveUrl"])
        except KeyError:
            pass
    crop_word(random.choice(boxes))

def add_word(b):
    get_article_from_search(word_to_add.value)

# Add events to the buttons

button_add.on_click(add_word)

button_retry.on_click(retry_last_word)

button_clear.on_click(clear_all_words)

# Display the widgets

display(key)

display(word_to_add)

display(widgets.HBox([button_add, button_retry, button_clear]))

display(status)

display(results)
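The layout arithmetic and the download link used above can be tried out without downloading any images. The sketch below repeats the same sums on plain (width, height) tuples and builds a data URI from raw bytes. `layout_words` and `to_data_uri` are hypothetical helper names, the word sizes are invented, and the 10 pixel gap and margin are assumed to match the values used in the cell above.

```python
import base64


def layout_words(sizes, gap=10, margin=10):
    """
    Given (width, height) pairs, return the canvas size and the
    (left, top) paste position for each word, centred vertically.
    """
    canvas_height = max((h for _, h in sizes), default=0) + 2 * margin
    canvas_width = sum(w + gap for w, _ in sizes) + margin
    positions = []
    left = margin
    for width, height in sizes:
        top = int(round((canvas_height / 2) - (height / 2)))
        positions.append((left, top))
        # Move the start point along for the next word
        left += width + gap
    return (canvas_width, canvas_height), positions


def to_data_uri(data, mime="image/jpeg"):
    """Encode raw bytes as a base64 data URI with the given media type."""
    return f"data:{mime};base64," + base64.b64encode(data).decode()


# Invented word sizes in pixels
canvas, positions = layout_words([(160, 50), (90, 30)])
print(canvas, positions)

# Placeholder bytes stand in for real image data
uri = to_data_uri(b"abc")
print(uri)
```

The media type in a data URI should match the format the bytes were saved in, so JPEG data is labelled `image/jpeg`.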

In [ ]:

# TESTING

if os.getenv("GW_STATUS") == "dev" and os.getenv("TROVE_API_KEY"):
    key.value = os.getenv("TROVE_API_KEY")
    word_to_add.value = "testing"
    button_add.click()

Created by Tim Sherratt for the GLAM Workbench.

Support this project by becoming a GitHub sponsor.