Tools in this Notebook:

- Workbench: Open Source Security Framework (https://github.com/SuperCowPowers/workbench)

- Bro Network Security Monitor (http://www.bro.org)

- Pandas: Python Data Analysis Library (http://pandas.pydata.org)

More Info:

- See Workbench Demo Notebook for a lot more info on using workbench.

Let's start up the workbench server...

Run the workbench server (it can live anywhere; for this demo we just start a local one)

$ workbench_server

In [1]:

# Let's start to interact with workbench. Please note there is NO specific client for workbench;

# just use the ZeroRPC Python, Node.js, or CLI interfaces.

import zerorpc

c = zerorpc.Client()

c.connect("tcp://127.0.0.1:4242")

Out[1]:

[None]

So I'm confused, what am I supposed to do with workbench?

Workbench is often confusing for new users (we're working on that). Please see our GitHub repository https://github.com/SuperCowPowers/workbench for the latest documentation and notebook examples (the notebook examples can really help). New users can start by typing **c.help()** after they connect to workbench.

In [2]:

# I forgot what stuff I can do with workbench

print(c.help())

Welcome to Workbench: Here's a list of help commands:

- Run c.help_basic() for beginner help

- Run c.help_commands() for command help

- Run c.help_workers() for a list of workers

- Run c.help_advanced() for advanced help

See https://github.com/SuperCowPowers/workbench for more information

In [3]:

# Now let's get information about the dynamically loaded workers (your site may have many more!)

# Next to each worker name is the list of dependencies that worker has declared

print(c.help_workers())

Workbench Workers:
json_meta ['sample', 'meta']
log_meta ['sample', 'meta']
meta ['sample']
meta_deep ['sample', 'meta']
my_meta ['sample', 'meta']
pcap_bro ['sample']
pcap_graph ['pcap_bro']
pcap_http_graph ['pcap_bro']
pe_classifier ['pe_features', 'pe_indicators']
pe_deep_sim ['meta_deep']
pe_features ['sample']
pe_indicators ['sample']
pe_peid ['sample']
strings ['sample']
swf_meta ['sample', 'meta']
unzip ['sample']
url ['strings']
view ['meta']
view_customer ['meta']
view_log_meta ['log_meta']
view_meta ['meta']
view_pcap ['pcap_bro']
view_pcap_details ['view_pcap']
view_pdf ['meta', 'strings']
view_pe ['meta', 'strings', 'pe_peid', 'pe_indicators', 'pe_classifier']
view_zip ['meta', 'unzip']
vt_query ['meta']
yara_sigs ['sample']

In [4]:

# Let's get the information about the meta worker

print(c.help_worker('meta'))

Worker: meta ['sample']

This worker computes meta data for any file type.

In [5]:

# Okay, let's load up a file and see what this silly meta thing gives back

filename = '../data/pe/bad/9e42ff1e6f75ae3e60b24e48367c8f26'

with open(filename,'rb') as f:

    my_md5 = c.store_sample(f.read(), filename, 'exe')

output = c.work_request('meta', my_md5)

output

Out[5]:

{'meta': {'customer': 'Huge Inc',

'encoding': 'binary',

'file_size': 51200,

'file_type': 'PE32 executable (console) Intel 80386, for MS Windows',

'filename': '../../data/pe/bad/9e42ff1e6f75ae3e60b24e48367c8f26',

'import_time': '2014-06-21T23:51:49.122000Z',

'length': 51200,

'md5': '9e42ff1e6f75ae3e60b24e48367c8f26',

'mime_type': 'application/x-dosexec',

'type_tag': 'exe'}}

In [6]:

# Pfff... my meta data worker will be WAY better!

# Err.. okay I'll just copy the meta worker file and see what happens.

# Note: obviously you'd just go to the shell and cp meta.py my_meta.py

# but since we're in IPython...

%cd /Users/briford/work/workbench/server/workers

%cp meta.py my_meta.py

%cd /Users/briford/work/workbench/notebooks

/Users/briford/work/workbench/server/workers

In [7]:

# Okay, just 'cause I'm feeling crazy, let's look at help_workers again

print(c.help_workers())

Workbench Workers:
json_meta ['sample', 'meta']
log_meta ['sample', 'meta']
meta ['sample']
meta_deep ['sample', 'meta']
my_meta ['sample', 'meta']
pcap_bro ['sample']
pcap_graph ['pcap_bro']
pcap_http_graph ['pcap_bro']
pe_classifier ['pe_features', 'pe_indicators']
pe_deep_sim ['meta_deep']
pe_features ['sample']
pe_indicators ['sample']
pe_peid ['sample']
strings ['sample']
swf_meta ['sample', 'meta']
unzip ['sample']
url ['strings']
view ['meta']
view_customer ['meta']
view_log_meta ['log_meta']
view_meta ['meta']
view_pcap ['pcap_bro']
view_pcap_details ['view_pcap']
view_pdf ['meta', 'strings']
view_pe ['meta', 'strings', 'pe_peid', 'pe_indicators', 'pe_classifier']
view_zip ['meta', 'unzip']
vt_query ['meta']
yara_sigs ['sample']

In [8]:

# My mind must be playing tricks, let's see if I can run my worker

output = c.work_request('my_meta', my_md5)

output

Out[8]:

{'my_meta': {'entropy': 7.250194413754419,

'md5': '9e42ff1e6f75ae3e60b24e48367c8f26',

'sha1': 'e0a6d12499ed16b33c71ddec42ca8aa7bcecaaf9',

'sha256': '88eea1726a149ac5c08b74547a05177398757f328c0faf821b822789d76863b7',

'ssdeep': '1536:pTrBy35F8qNwtqKiE/n5zTY+LK9lqB9HtZeV0D:hrEpF8q6qKiE/npi9UDHtZeV4'}}

- The plugin goes through several validation checks

- If the validation succeeds, the plugin is dynamically loaded

- Your new plugin is now running on the local server

- Also, all of the CI build/test/coverage/docs now include your plugin!
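The validate-then-load steps listed above can be sketched roughly as follows. This is not Workbench's actual plugin manager: the helper names `load_worker_module` and `validate_worker`, and the rule that a worker class must expose a `dependencies` list and an `execute` method, are assumptions made for illustration. The sketch is written for Python 3.

```python
import importlib.util
import inspect


def load_worker_module(path, name):
    """Import a Python file from disk as a module (hypothetical helper)."""
    spec = importlib.util.spec_from_file_location(name, path)
    module = importlib.util.module_from_spec(spec)
    spec.loader.exec_module(module)
    return module


def validate_worker(module):
    """Return the first class that looks like a worker, or None.

    Assumed rule: a worker class has a list attribute `dependencies`
    and a callable `execute` method.
    """
    for _, cls in inspect.getmembers(module, inspect.isclass):
        if cls.__module__ != module.__name__:
            continue  # skip classes that were only imported into the module
        deps = getattr(cls, 'dependencies', None)
        if isinstance(deps, list) and callable(getattr(cls, 'execute', None)):
            return cls
    return None
```

A manager built along these lines would only register the class returned by `validate_worker` and would leave the running set of workers untouched when it returns `None`.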

Okay, I'm going to call BS... let's run the tests and see what happens!

In [12]:

# I've been around software... testing, server integration, test coverage all that stuff is

# a complete PITA, heck I spend half my time doing that.. there's no way all that just happened.

!./runtests

<<< Note: Most of these tests require a local server running >>>

............................

__init__ NoSource: No source for code: '/Users/briford/work/workbench/server/workers/__init__.py'

| Name | Stmts | Miss | Cover | Missing |
|---|---|---|---|---|
| json_meta | 33 | 2 | 94% | 22, 57 |
| log_meta | 30 | 1 | 97% | 49 |
| meta | 40 | 1 | 98% | 61 |
| meta_deep | 38 | 1 | 97% | 58 |
| my_meta | 40 | 1 | 98% | 61 |
| pcap_bro | 122 | 9 | 93% | 23-26, 115-116, 119, 121, 123, 197 |
| pcap_graph | 112 | 6 | 95% | 90, 117, 177, 181, 188, 231 |
| pcap_http_graph | 90 | 5 | 94% | 65, 121, 125, 134, 177 |
| pe_classifier | 30 | 1 | 97% | 54 |
| pe_deep_sim | 39 | 1 | 97% | 64 |
| pe_features | 208 | 21 | 90% | 98-100, 148-149, 167-171, 179, 200, 222, 233, 244, 283, 290, 301, 304-305, 349 |
| pe_indicators | 240 | 23 | 90% | 52-53, 80, 103, 151, 159, 163, 173, 181, 193, 205, 217, 250, 260, 283, 301, 331, 349, 358, 386, 396-397, 439 |
| pe_peid | 38 | 3 | 92% | 23-24, 64 |
| strings | 27 | 1 | 96% | 44 |
| swf_meta | 23 | 1 | 96% | 44 |
| unzip | 38 | 1 | 97% | 60 |
| url | 28 | 1 | 96% | 46 |
| view | 51 | 3 | 94% | 25, 27, 86 |
| view_customer | 23 | 1 | 96% | 42 |
| view_log_meta | 23 | 1 | 96% | 40 |
| view_meta | 23 | 1 | 96% | 41 |
| view_pcap | 31 | 1 | 97% | 57 |
| view_pcap_details | 32 | 1 | 97% | 78 |
| view_pdf | 28 | 2 | 93% | 12, 46 |
| view_pe | 39 | 2 | 95% | 13, 64 |
| view_zip | 36 | 2 | 94% | 17, 61 |
| vt_query | 55 | 7 | 87% | 18, 22, 36-37, 50-51, 98 |
| workbench_keys/__init__ | 1 | 0 | 100% | |
| yara_sigs | 42 | 2 | 95% | 20, 75 |
| TOTAL | 1560 | 102 | 93% | |

Ran 28 tests in 29.193s

OK

The manager 'looks' at the new plugin and as long as it passes all the validation tests, it's automatically reloaded!!!

In [36]:

# You sir are on some sort of needle drug... so you're saying that all the new functionality

# that I just typed in is already available on the server? Help too?

print(c.help_worker('my_meta'))

output = c.work_request('my_meta', my_md5)

output

Worker: my_meta ['sample']

This worker computes my more super awesome meta-data

Seriously:

1) All the sha hashes

2) SSDeep (oh yeah)

3) Entropy (science!)

Out[36]:

{'my_meta': {'entropy': 2.440069216444288,

'md5': '0cb9aa6fb9c4aa3afad7a303e21ac0f3',

'sha1': '96e85768a12b2f319f2a4f0c048460e1b73aa573',

'sha256': '4ecf79302ba0439f62e15d0526a297975e6bb32ea25c8c70a608916a609e5a9c',

'ssdeep': '192:a8jJIFYrq9ATskBTp2jLDL3P1oynldvSo71nF:oFpNnnX1Tn'}}

Okay that was spiffy, I'll give you that

But I want my new worker to have access to the output of other workers. For example, I want to look at the mime_type that the 'meta' worker reports and then do some cool stuff based on that.

Let's look at the changes we made to my_meta.py

We changed the dependency line and added the 'meta' worker (it could be ANY worker).

We also pulled the data from the meta worker and added a line that reports whether the file is probably packed.
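For readers who cannot see the edited file, here is a rough sketch of what such a worker could look like. It is not the real `my_meta.py`: the class name `MyMeta`, the `execute(input_data)` signature, the `raw_bytes` key, the mime-type check, and the 7.0 entropy cutoff are all assumptions. The cutoff is merely consistent with the outputs in this notebook, where every 'probably' sample has entropy above 7.0. The ssdeep hash is left out because it needs a third-party library.

```python
import hashlib
import math
from collections import Counter


def shannon_entropy(raw_bytes):
    """Shannon entropy of a byte string, in bits per byte (0.0 to 8.0)."""
    if not raw_bytes:
        return 0.0
    counts = Counter(bytearray(raw_bytes))
    total = float(len(raw_bytes))
    return -sum((n / total) * math.log(n / total, 2) for n in counts.values())


class MyMeta(object):
    """Hypothetical worker: hashes, entropy, and a rough 'packed' guess."""

    # Assumption: dependencies are declared as a class-level list.
    dependencies = ['sample', 'meta']

    def execute(self, input_data):
        # Assumption: each dependency's output arrives keyed by worker name.
        raw_bytes = input_data['sample']['raw_bytes']
        mime_type = input_data['meta']['mime_type']

        entropy = shannon_entropy(raw_bytes)
        output = {
            'md5': hashlib.md5(raw_bytes).hexdigest(),
            'sha1': hashlib.sha1(raw_bytes).hexdigest(),
            'sha256': hashlib.sha256(raw_bytes).hexdigest(),
            'entropy': entropy,
        }
        # Assumption: only executables get a verdict; 7.0 is an illustrative cutoff.
        if mime_type == 'application/x-dosexec':
            output['packed'] = 'probably' if entropy > 7.0 else 'probably not'
        return output
```

The point of the sketch is the second entry in `dependencies`: once 'meta' is declared, its output is available to the worker alongside the raw sample.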

In [16]:

# Run my new code

output = c.work_request('my_meta', my_md5)

output

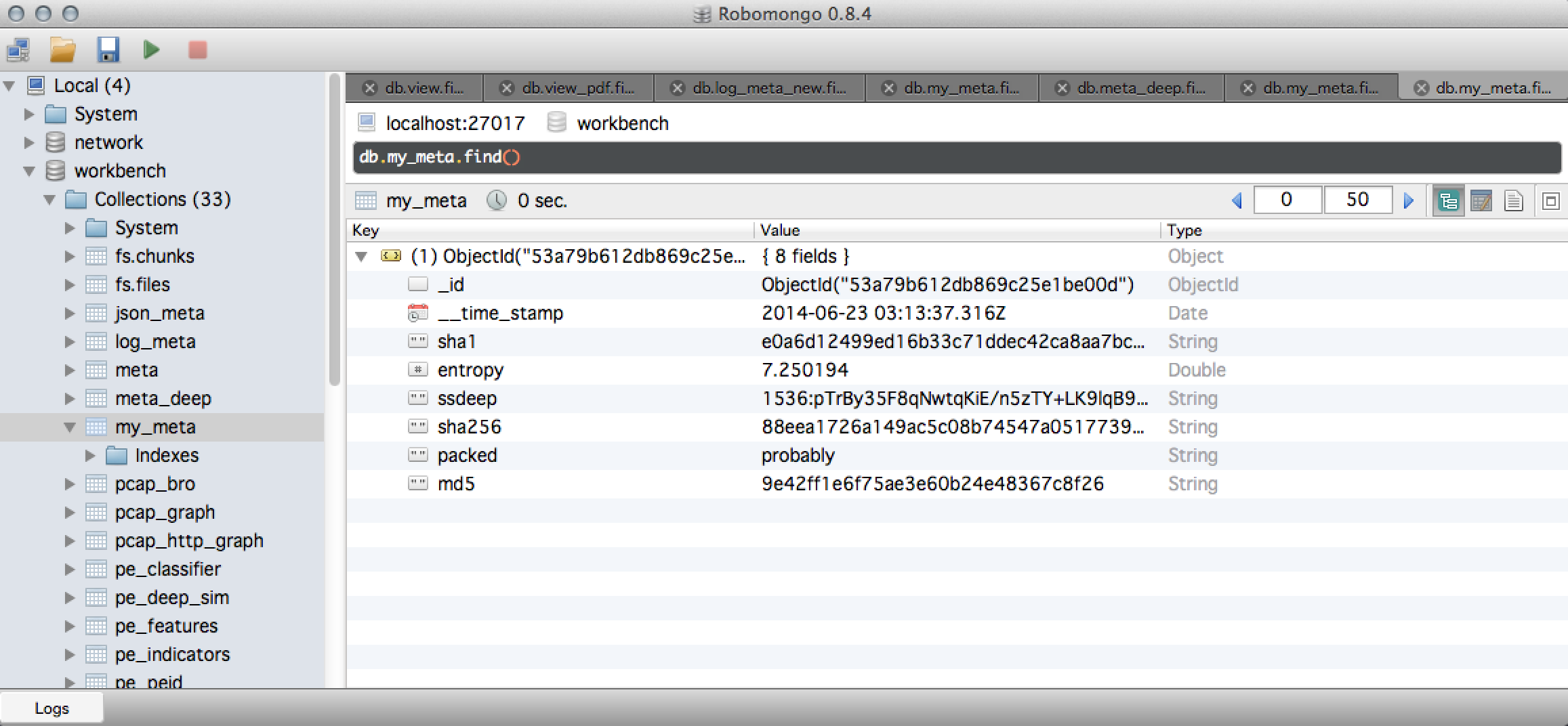

Out[16]:

{'my_meta': {'entropy': 7.250194413754419,

'md5': '9e42ff1e6f75ae3e60b24e48367c8f26',

'packed': 'probably',

'sha1': 'e0a6d12499ed16b33c71ddec42ca8aa7bcecaaf9',

'sha256': '88eea1726a149ac5c08b74547a05177398757f328c0faf821b822789d76863b7',

'ssdeep': '1536:pTrBy35F8qNwtqKiE/n5zTY+LK9lqB9HtZeV0D:hrEpF8q6qKiE/npi9UDHtZeV4'}}

Let's enumerate all the neat things that just happened

- I changed my worker; the plugin manager saw the change, validated my worker, and dynamically loaded it.

- Workbench caches results (no work is recomputed unless it needs to be), but here it recognized that the worker's modification time was newer than the stored results, so it recomputed them.

- Let's look at my new plugin output in MongoDB
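The modification-time check described in the list above can be sketched as follows. This is a minimal illustration, not Workbench's implementation: the `ResultCache` class, its in-memory dictionary, and the `get_or_compute` signature are hypothetical.

```python
import os
import time


class ResultCache(object):
    """Hypothetical result cache keyed by (worker name, md5)."""

    def __init__(self):
        self._results = {}  # (worker_name, md5) -> (computed_at, result)

    def get_or_compute(self, worker_name, worker_path, md5, compute):
        key = (worker_name, md5)
        worker_mtime = os.path.getmtime(worker_path)
        cached = self._results.get(key)
        if cached is not None and cached[0] >= worker_mtime:
            return cached[1]  # worker file unchanged since the result was stored
        # No result yet, or the worker file is newer than the result: recompute.
        result = compute(md5)
        self._results[key] = (time.time(), result)
        return result
```

Comparing timestamps keeps the check cheap: an unchanged worker file never triggers new work, and editing the file invalidates every result that worker produced earlier.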

In [17]:

# So let's do a more complicated worker just to hammer home what's happening.

# Workbench uses Directed Acyclic Graphs to pipeline workers together; it recursively

# satisfies dependencies with aggressive caching, shallow memory copies, and gevent-based

# co-operative processes on the server side. Basically six slices of awesome...

output = c.work_request('view', my_md5)

output

Out[17]:

{'view': {'md5': '9e42ff1e6f75ae3e60b24e48367c8f26',

'view_pe': {'classification': 'Evil!',

'customer': 'Huge Inc',

'disass': 'plugin_failed',

'encoding': 'binary',

'file_size': 51200,

'file_type': 'PE32 executable (console) Intel 80386, for MS Windows',

'filename': '../../data/pe/bad/9e42ff1e6f75ae3e60b24e48367c8f26',

'import_time': '2014-06-21T23:51:49.122000Z',

'indicators': [{'category': 'PE_WARN',

'description': 'Suspicious flags set for section 0. Both IMAGE_SCN_MEM_WRITE and IMAGE_SCN_MEM_EXECUTE are set. This might indicate a packed executable.',

'severity': 2},

{'category': 'PE_WARN',

'description': 'Suspicious flags set for section 2. Both IMAGE_SCN_MEM_WRITE and IMAGE_SCN_MEM_EXECUTE are set. This might indicate a packed executable.',

'severity': 2},

{'attributes': ['queryperformancecounter', 'gettickcount'],

'category': 'ANTI_DEBUG',

'description': 'Imported symbols related to anti-debugging',

'severity': 3},

{'category': 'MALFORMED',

'description': 'Checksum of Zero',

'severity': 1},

{'category': 'MALFORMED',

'description': 'Reported Checksum does not match actual checksum',

'severity': 2},

{'category': 'MALFORMED',

'description': 'Image size does not match reported size',

'severity': 3},

{'attributes': ['lsicbkg'],

'category': 'MALFORMED',

'description': 'Section(s) with a non-standard name, tamper indication',

'severity': 3},

{'attributes': ['getmodulehandlea'],

'category': 'PROCESS_MANIPULATION',

'description': 'Imported symbols related to process manipulation/injection',

'severity': 3},

{'attributes': ['getsystemtimeasfiletime'],

'category': 'PROCESS_SPAWN',

'description': 'Imported symbols related to spawning a new process',

'severity': 2},

{'attributes': ['findfirstfilew', 'findnextfilew'],

'category': 'SYSTEM_PROBE',

'description': 'Imported symbols related to probing the system',

'severity': 2}],

'length': 51200,

'md5': '9e42ff1e6f75ae3e60b24e48367c8f26',

'mime_type': 'application/x-dosexec',

'peid_Matches': ['Microsoft Visual C++ v7.0'],

'type_tag': 'exe'}}}
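The recursive dependency resolution mentioned in the comments of the cell above can be sketched like this. The dependency table copies a few entries from the `help_workers()` output, with 'sample' added as the root that everything depends on. The `resolve` function and the placeholder result strings are hypothetical, and the sketch leaves out gevent and the server entirely.

```python
# Subset of the dependencies printed by c.help_workers(); 'sample' is the root.
DEPENDENCIES = {
    'sample': [],
    'meta': ['sample'],
    'strings': ['sample'],
    'pe_peid': ['sample'],
    'pe_features': ['sample'],
    'pe_indicators': ['sample'],
    'pe_classifier': ['pe_features', 'pe_indicators'],
    'view_pe': ['meta', 'strings', 'pe_peid', 'pe_indicators', 'pe_classifier'],
}


def resolve(worker, cache, order):
    """Depth-first: satisfy dependencies first, run each worker at most once."""
    if worker in cache:
        return cache[worker]  # already computed for this sample
    inputs = {dep: resolve(dep, cache, order) for dep in DEPENDENCIES[worker]}
    cache[worker] = 'result of %s given %s' % (worker, sorted(inputs))
    order.append(worker)
    return cache[worker]


cache, order = {}, []
resolve('view_pe', cache, order)
print(order)
```

Because `pe_indicators` is needed both by `view_pe` directly and by `pe_classifier`, the cache lookup is what keeps it from running twice; every worker shows up exactly once in `order`.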

In [21]:

# Yeah, but I want to run my new worker on LOTS of samples and I

# want to put the results into a Pandas DataFrame and run some

# statistics, and do some Machine Learning and kewl plots!

# This is just throwing files at Workbench (could be pdfs, swfs, pcap, memory_images, etc)

import os

file_list = [os.path.join('../data/pe/bad', child) for child in os.listdir('../data/pe/bad')]

working_set = []

for filename in file_list:

    with open(filename,'rb') as f:

        md5 = c.store_sample(f.read(), filename, 'exe')

        working_set.append(md5)

In [27]:

# Now just run a batch request against all the samples we just threw in

results = c.batch_work_request('my_meta', {'md5_list':working_set})

results

Out[27]:

<generator object iterator at 0x10684e5f0>
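The `<generator object ...>` output reflects that results are handed over one at a time as they are requested, not all at once. A local stand-in with no server involved shows the behaviour; `fake_worker` and `fake_batch_work_request` are hypothetical names, not part of Workbench.

```python
calls = []  # records which samples have actually been processed


def fake_worker(md5):
    """Stand-in for server-side work on one sample (hypothetical)."""
    calls.append(md5)
    return {'md5': md5, 'length': len(md5)}


def fake_batch_work_request(md5_list):
    """Yield one result per sample; nothing runs until a result is requested."""
    for md5 in md5_list:
        yield fake_worker(md5)


results = fake_batch_work_request(['aaa', 'bbb', 'ccc'])
calls_before = list(calls)        # creating the generator does no work
first = next(results)             # pulls exactly one result
calls_after_first = list(calls)
rows = [first] + list(results)    # draining the generator processes the rest
```

Anything that iterates over the generator, such as a list or a DataFrame constructor, drains it in the same way.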

In [28]:

# Now toss that client-server generator into a DataFrame (rows stream in from the server as it is built)

import pandas as pd

df_meta = pd.DataFrame(results)

df_meta.head()

Out[28]:

| | entropy | md5 | packed | sha1 | sha256 | ssdeep |
|---|---|---|---|---|---|---|
| 0 | 7.894680 | 033d91aae8ad29ed9fbb858179271232 | probably | 83ab10907b254752f312c89125957f10d35cb9d4 | eb107c004e6e1bbd3b32ad7961661bbe28a577b0cb5dac... | 1536:h6+LbfPbI5dzmJu9Tgj5aOItvEqRCHW9pjVrs2ryr... |
| 1 | 2.440069 | 0cb9aa6fb9c4aa3afad7a303e21ac0f3 | probably not | 96e85768a12b2f319f2a4f0c048460e1b73aa573 | 4ecf79302ba0439f62e15d0526a297975e6bb32ea25c8c... | 192:a8jJIFYrq9ATskBTp2jLDL3P1oynldvSo71nF:oFpN... |
| 2 | 5.125292 | 0e882ec9b485979ea84c7843d41ba36f | probably not | 12fb0a1b7d9c2b2a41f4da9ce5bbfb140fb16939 | 616cf9e729c883d979212eb55178b7aac80dd9f58cb449... | 768:5HyLMqtEM1Htz8kDmP9l+nZZYp41oj7EZmJxl/N9j6... |
| 3 | 6.303055 | 0e8b030fb6ae48ffd29e520fc16b5641 | probably not | 82d57b8302b7497b2f6943f18e2d2687b9b0f5eb | feaf72bdad035e198d297bfb0b8d891645f1dacd78f0db... | 1536:1uNqjqzs1hQHhInEeJMzcmGqyF7Jwe9pvUo+5TDU4... |
| 4 | 7.593283 | 0eb9e990c521b30428a379700ec5ab3e | probably | b778fc55f0538de865d4853099a3faa0b29f311d | dc5e8176a5f012ebdb4835f9b570a12c045d059f6f5bdc... | 1536:KcE4iMgXjTJpdGaaJG6Mhawv7r9ZaobsLBq+h5ttB... |

5 rows × 6 columns

In [29]:

# Plotting defaults

import matplotlib.pyplot as plt

%matplotlib inline

plt.rcParams['font.size'] = 12.0

plt.rcParams['figure.figsize'] = 18.0, 8.0

In [31]:

# Plot stuff (yes this is a silly plot but it's just an example :)

df_meta.boxplot('entropy','packed')

plt.xlabel('Packed')

plt.ylabel('Entropy')

plt.title('Entropy of Sample')

plt.suptitle('')

Out[31]:

<matplotlib.text.Text at 0x10702f2d0>

In [33]:

# Groupby and Statistics (yes silly again but just an example)

df_meta.groupby('packed').describe()

Out[33]:

| packed | statistic | entropy |
|---|---|---|
| probably | count | 23.000000 |
| | mean | 7.612472 |
| | std | 0.290949 |
| | min | 7.066162 |
| | 25% | 7.339412 |
| | 50% | 7.778263 |
| | 75% | 7.852313 |
| | max | 7.919686 |
| probably not | count | 27.000000 |
| | mean | 5.832247 |
| | std | 1.017639 |
| | min | 2.440069 |
| | 25% | 5.564519 |
| | 50% | 5.967721 |
| | 75% | 6.537146 |
| | max | 6.998708 |

16 rows × 1 columns

Wrap Up

In this notebook we illustrated how simple it is to add a worker to the Workbench project. We hope this exercise showed off some neat Workbench functionality. We encourage you to check out the GitHub repository and our other notebooks:

- PCAP_to_Graph: a short notebook on turning a PCAP into a Neo4j graph.

- Workbench Demo: a general introduction to Workbench.

- PCAP_DriveBy: a detailed look at a Web DriveBy from the ThreatGlass repository.

- PE File Sim Graph: using Neo4j to generate a similarity graph from PE File features.

- Generator Pipelines: using the client/server streaming generators to demonstrate 'chaining' generators.