Homework 3 - Convolutional Neural Network

This is the sample code for Homework 3 of the Machine Learning course by Prof. Hung-yi Lee.

In this homework, you are required to build a convolutional neural network for image classification, optionally using some advanced training tips.

There are three levels here:

Easy: Build a simple convolutional neural network as the baseline. (2 pts)

Medium: Design a better architecture or adopt different data augmentations to improve the performance. (2 pts)

Hard: Utilize provided unlabeled data to obtain better results. (2 pts)

About the Dataset

The dataset used here is food-11, a collection of food images in 11 classes.

To meet the requirements of this homework, the TAs slightly modified the data. Please DO NOT access the original fully-labeled training data or the testing labels.

Also, the modified dataset is for this course only, and any further distribution or commercial use is forbidden.

# Download the dataset

# You may choose where to download the data.

# Google Drive

!gdown --id '1awF7pZ9Dz7X1jn1_QAiKN-_v56veCEKy' --output food-11.zip

# Dropbox

# !wget https://www.dropbox.com/s/m9q6273jl3djall/food-11.zip -O food-11.zip

# MEGA

# !sudo apt install megatools

# !megadl "https://mega.nz/#!zt1TTIhK!ZuMbg5ZjGWzWX1I6nEUbfjMZgCmAgeqJlwDkqdIryfg"

# Unzip the dataset.

# This may take some time.

!unzip -q food-11.zip

/usr/local/lib/python3.7/dist-packages/gdown/cli.py:131: FutureWarning: Option `--id` was deprecated in version 4.3.1 and will be removed in 5.0. You don't need to pass it anymore to use a file ID.
  category=FutureWarning,
Downloading...
From: https://drive.google.com/uc?id=1awF7pZ9Dz7X1jn1_QAiKN-_v56veCEKy
To: /content/food-11.zip
100% 963M/963M [00:03<00:00, 278MB/s]
replace food-11/training/unlabeled/00/5176.jpg? [y]es, [n]o, [A]ll, [N]one, [r]ename: All

Import Packages

First, we need to import packages that will be used later.

This homework relies heavily on torchvision, PyTorch's computer vision library.

# Import necessary packages.

import numpy as np

import torch

import torch.nn as nn

import torchvision.transforms as transforms

from PIL import Image

# "ConcatDataset" and "Subset" are possibly useful when doing semi-supervised learning.

from torch.utils.data import ConcatDataset, DataLoader, Subset

from torchvision.datasets import DatasetFolder

# This is for the progress bar.

# from tqdm.auto import tqdm  # this causes a bug: AssertionError: can only test a child process

from tqdm import tqdm

Dataset, Data Loader, and Transforms

Torchvision provides lots of useful utilities for image preprocessing, data wrapping as well as data augmentation.

Here, since our data are stored in folders by class labels, we can directly apply torchvision.datasets.DatasetFolder for wrapping data without much effort.

Please refer to the official PyTorch documentation for details about the different transforms.

# It is important to do data augmentation in training.

# However, not every augmentation is useful.

# Please think about what kind of augmentation is helpful for food recognition.

train_tfm = transforms.Compose([
    # Resize the image into a fixed shape (height = width = 128).
    # size (int): keep the aspect ratio and scale the shorter side to this value.
    # size (sequence): resize to exactly (height, width).
    transforms.Resize((128, 128)),
    # You may add some transforms here.
    # ToTensor() should be the last one of the transforms (only tensor transforms such as Normalize may follow it).
    transforms.ToTensor(),
])

# We don't need augmentations in testing and validation.

# All we need here is to resize the PIL image and transform it into Tensor.

test_tfm = transforms.Compose([
    transforms.Resize((128, 128)),
    transforms.ToTensor(),
])

# Batch size for training, validation, and testing.

# A greater batch size usually gives a more stable gradient.

# But the GPU memory is limited, so please adjust it carefully.

batch_size = 128

# Construct datasets. (DatasetFolder makes this very easy.)

# The argument "loader" tells how torchvision reads the data.

train_set = DatasetFolder("food-11/training/labeled", loader=lambda x: Image.open(x), extensions="jpg", transform=train_tfm)

valid_set = DatasetFolder("food-11/validation", loader=lambda x: Image.open(x), extensions="jpg", transform=test_tfm)

unlabeled_set = DatasetFolder("food-11/training/unlabeled", loader=lambda x: Image.open(x), extensions="jpg", transform=train_tfm)

test_set = DatasetFolder("food-11/testing", loader=lambda x: Image.open(x), extensions="jpg", transform=test_tfm)

# Construct data loaders.

train_loader = DataLoader(train_set, batch_size=batch_size, shuffle=True, num_workers=8, pin_memory=True)

valid_loader = DataLoader(valid_set, batch_size=batch_size, shuffle=True, num_workers=8, pin_memory=True)

test_loader = DataLoader(test_set, batch_size=batch_size, shuffle=False)

/usr/local/lib/python3.7/dist-packages/torch/utils/data/dataloader.py:560: UserWarning: This DataLoader will create 8 worker processes in total. Our suggested max number of worker in current system is 2, which is smaller than what this DataLoader is going to create. Please be aware that excessive worker creation might get DataLoader running slow or even freeze, lower the worker number to avoid potential slowness/freeze if necessary. cpuset_checked))

Model

The basic model here is simply a stack of convolutional layers followed by some fully-connected layers.

Since a color image has three channels (RGB), the network must take three input channels. Through the convolutional layers, the number of channels typically grows, while the height and width shrink (or stay unchanged, depending on hyperparameters such as stride and padding).

Before being fed into the fully-connected layers, the feature map must be flattened into a single one-dimensional vector for each image. The fully-connected layers then transform these features, and finally we obtain the "logits" for each class.

WARNING -- You Must Know

You are free to modify the model architecture here for further improvement. However, if you want to use some well-known architectures such as ResNet50, please make sure NOT to load the pre-trained weights. Using such pre-trained models is considered cheating and therefore you will be punished. Similarly, it is your responsibility to make sure no pre-trained weights are used if you use torch.hub to load any modules.

For example, if you use ResNet-18 as your model:

model = torchvision.models.resnet18(pretrained=False) → This is fine.

model = torchvision.models.resnet18(pretrained=True) → This is NOT allowed.

class Classifier(nn.Module):
    def __init__(self):
        super(Classifier, self).__init__()
        # The arguments for commonly used modules:
        # torch.nn.Conv2d(in_channels, out_channels, kernel_size, stride, padding)
        # torch.nn.MaxPool2d(kernel_size, stride, padding)

        # input image size: [3, 128, 128]
        self.cnn_layers = nn.Sequential(
            # torch.nn.Conv2d(in_channels, out_channels, kernel_size, stride=1, padding=0, dilation=1, groups=1, bias=True, padding_mode='zeros', device=None, dtype=None)
            nn.Conv2d(3, 64, 3, 1, 1),  # output image size: [64, 128, 128]
            # torch.nn.BatchNorm2d(num_features, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True, device=None, dtype=None)
            nn.BatchNorm2d(64),  # does not change the image size
            # torch.nn.ReLU(inplace=False)
            nn.ReLU(),  # adds a nonlinearity
            # torch.nn.MaxPool2d(kernel_size, stride=None, padding=0, dilation=1, return_indices=False, ceil_mode=False)
            nn.MaxPool2d(2, 2, 0),  # output image size: [64, 64, 64]

            nn.Conv2d(64, 128, 3, 1, 1),  # output image size: [128, 64, 64]
            nn.BatchNorm2d(128),
            nn.ReLU(),
            nn.MaxPool2d(2, 2, 0),  # output image size: [128, 32, 32]

            nn.Conv2d(128, 256, 3, 1, 1),  # output image size: [256, 32, 32]
            nn.BatchNorm2d(256),
            nn.ReLU(),
            nn.MaxPool2d(4, 4, 0),  # output image size: [256, 8, 8]
        )
        self.fc_layers = nn.Sequential(
            nn.Linear(256 * 8 * 8, 256),  # fully-connected layers need a flattened input
            nn.ReLU(),
            nn.Linear(256, 256),  # second hidden layer
            nn.ReLU(),
            nn.Linear(256, 11)  # one logit per class
        )

    def forward(self, x):
        # input (x): [batch_size, 3, 128, 128]
        # output: [batch_size, 11]

        # Extract features by convolutional layers.
        x = self.cnn_layers(x)

        # The extracted feature map must be flattened before going to fully-connected layers.
        x = x.flatten(1)  # flatten to [batch_size, 256 * 8 * 8]

        # The features are transformed by fully-connected layers to obtain the final logits
        # (logits: the raw, pre-softmax outputs of the last fully-connected layer).
        x = self.fc_layers(x)
        return x

cnn_layers - First Layer

- Conv2d:

torch.nn.Conv2d(in_channels, out_channels, kernel_size, stride=1, padding=0, dilation=1, groups=1, bias=True, padding_mode='zeros', device=None, dtype=None)

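Convolution

Using the output-size formula from the PyTorch `Conv2d` documentation,

$$ H_{out} = \left\lfloor \frac{H_{in} + 2 \times \text{padding} - \text{dilation} \times (\text{kernel\_size} - 1) - 1}{\text{stride}} + 1 \right\rfloor $$

with $H_{in} = 128$, padding = 1, dilation = 1, kernel_size = 3 and stride = 1, the first layer gives:

$$ H_{out} = \left\lfloor \frac{128 + 2 \times 1 - 1 \times (3 - 1) - 1}{1} + 1 \right\rfloor = 128 $$

$$ W_{out} = \left\lfloor \frac{128 + 2 \times 1 - 1 \times (3 - 1) - 1}{1} + 1 \right\rfloor = 128 $$

- BatchNorm2d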

torch.nn.BatchNorm2d(num_features, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True, device=None, dtype=None)

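Batch normalization

The first three steps resemble standardizing a random variable in probability theory. If $X$ has mean $\mu$ and standard deviation $\sigma$, then $(X - \mu)/\sigma$ has mean 0 and variance 1; for a normal $X$, $\frac{X - \mu}{\sigma} \sim N(0, 1)$. Here $\epsilon$ is the `eps` parameter. It is added to the mini-batch variance in the denominator, $\hat{x} = \frac{x - \mu_B}{\sqrt{\sigma_B^2 + \epsilon}}$, for numerical stability.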

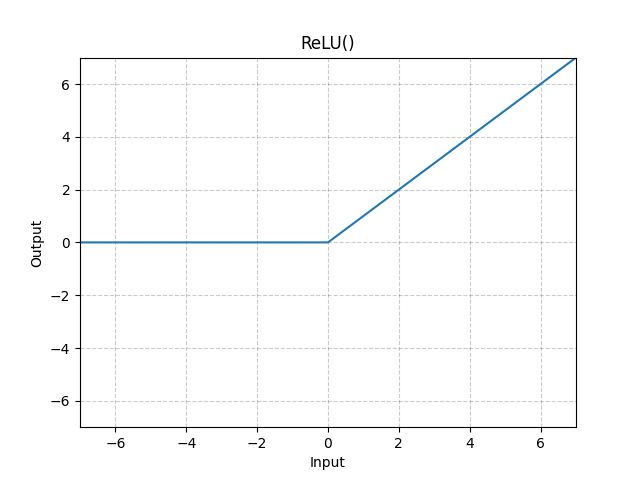
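The sketch below reproduces `nn.BatchNorm2d` by hand in training mode to make μ, σ², ε and the learnable scale and shift concrete. It assumes default initialization (γ = 1, β = 0). It uses the biased variance, which is what PyTorch uses to normalize during training. Statistics are computed per channel, over the batch, height and width dimensions.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
x = torch.randn(8, 3, 4, 4)   # [batch, channels, height, width]
bn = nn.BatchNorm2d(3)        # eps=1e-5; weight (gamma) starts at 1, bias (beta) at 0
bn.train()                    # training mode: normalize with the mini-batch statistics
out = bn(x)

# Per-channel mini-batch mean and biased variance, then normalization.
mean = x.mean(dim=(0, 2, 3), keepdim=True)
var = ((x - mean) ** 2).mean(dim=(0, 2, 3), keepdim=True)
x_hat = (x - mean) / torch.sqrt(var + bn.eps)
# Scale and shift with the learnable gamma (bn.weight) and beta (bn.bias).
manual = bn.weight.view(1, -1, 1, 1) * x_hat + bn.bias.view(1, -1, 1, 1)

print(torch.allclose(out, manual, atol=1e-5))  # True
```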

- ReLU

torch.nn.ReLU(inplace=False)

- MaxPool2d

torch.nn.MaxPool2d(kernel_size, stride=None, padding=0, dilation=1, return_indices=False, ceil_mode=False)
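The output-size formula used for `Conv2d` above also applies to `MaxPool2d` when `ceil_mode=False`. The helper `out_size` below is a hypothetical name. It traces the spatial size through every layer of `cnn_layers` and confirms the `256 * 8 * 8` input size of the first `nn.Linear`. `BatchNorm2d` and `ReLU` are skipped because they do not change the size.

```python
import torch
import torch.nn as nn


def out_size(size, kernel_size, stride=1, padding=0, dilation=1):
    """Output height/width of Conv2d or MaxPool2d (ceil_mode=False), per the PyTorch docs."""
    return (size + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1


# (layer, kernel_size, stride, padding) for the Classifier's cnn_layers.
size = 128
for name, k, s, p in [("conv1", 3, 1, 1), ("pool1", 2, 2, 0),
                      ("conv2", 3, 1, 1), ("pool2", 2, 2, 0),
                      ("conv3", 3, 1, 1), ("pool3", 4, 4, 0)]:
    size = out_size(size, k, s, p)
    print(name, size)
# conv1 128, pool1 64, conv2 64, pool2 32, conv3 32, pool3 8

# Cross-check the last pooling layer against PyTorch itself.
y = nn.MaxPool2d(4, 4, 0)(torch.zeros(1, 256, 32, 32))
print(y.shape)  # torch.Size([1, 256, 8, 8])
```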

forward

x = x.flatten(1)

or

x = x.view(x.size()[0], -1)
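Both forms give the same result here. `flatten(1)` keeps dimension 0 (the batch) and merges the rest. `view(x.size(0), -1)` does the same but requires contiguous memory. Calling `flatten()` without the `1` would also merge the batch dimension, as the last lines show.

```python
import torch

x = torch.randn(4, 256, 8, 8)    # a batch of feature maps shaped like the output of cnn_layers
a = x.flatten(1)                 # merge every dimension after dim 0 (the batch)
b = x.view(x.size(0), -1)        # same shape and values; view needs a contiguous tensor
print(a.shape)                   # torch.Size([4, 16384])
print(torch.equal(a, b))         # True

c = x.flatten()                  # no start_dim: the batch dimension is merged as well
print(c.shape)                   # torch.Size([65536])
```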

Training

You can finish supervised learning by simply running the provided code without any modification.

The function "get_pseudo_labels" is used for semi-supervised learning. Using the unlabeled data for semi-supervised learning is expected to improve performance. However, you have to implement the function on your own and adjust several hyperparameters manually.

For more details about semi-supervised learning, please refer to Prof. Lee's slides.

Again, please note that using external data (or pre-trained models) for training is prohibited.

def get_pseudo_labels(dataset, model, threshold=0.65):
    # This function generates pseudo-labels of a dataset using the given model.
    # It returns an instance of DatasetFolder containing images whose prediction confidences exceed a given threshold.
    # You are NOT allowed to use any models trained on external data for pseudo-labeling.
    device = "cuda" if torch.cuda.is_available() else "cpu"

    # Construct a data loader.
    data_loader = DataLoader(dataset, batch_size=batch_size, shuffle=False)

    # Make sure the model is in eval mode.
    model.eval()
    # Define softmax function.
    softmax = nn.Softmax(dim=-1)

    # Iterate over the dataset by batches.
    for batch in tqdm(data_loader):
        img, _ = batch

        # Forward the data.
        # Using torch.no_grad() accelerates the forward process.
        with torch.no_grad():
            logits = model(img.to(device))

        # Obtain the probability distributions by applying softmax on logits.
        probs = softmax(logits)

        # ---------- TODO ----------
        # Filter the data and construct a new dataset.

    # Turn off the eval mode.
    model.train()
    return dataset
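The sketch below shows one way the TODO could be filled in. It makes assumptions and is not the reference solution. It keeps the indices whose highest softmax probability exceeds `threshold`, then wraps them in a small custom `Dataset` that returns `(image, pseudo_label)` pairs. `PseudoLabeledDataset` and `get_pseudo_labels_sketch` are hypothetical names. The function returns this wrapper instead of a `DatasetFolder`. That still works with `ConcatDataset([train_set, pseudo_set])` because both yield `(tensor, int)` items. Because items are re-read from `unlabeled_set`, a `train_tfm` with random augmentations would give a different view than the one that was labeled.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, Dataset, TensorDataset


class PseudoLabeledDataset(Dataset):
    """Hypothetical wrapper: selected items of `base`, paired with model-predicted labels."""

    def __init__(self, base, indices, labels):
        self.base = base
        self.indices = indices
        self.labels = labels

    def __len__(self):
        return len(self.indices)

    def __getitem__(self, i):
        img, _ = self.base[self.indices[i]]  # drop the dummy label of the unlabeled data
        return img, self.labels[i]


def get_pseudo_labels_sketch(dataset, model, threshold=0.65, batch_size=128, device=None):
    device = device or ("cuda" if torch.cuda.is_available() else "cpu")
    # shuffle=False keeps the batch order aligned with dataset indices.
    loader = DataLoader(dataset, batch_size=batch_size, shuffle=False)
    model.eval()
    indices, labels = [], []
    offset = 0  # dataset index of the first item in the current batch
    with torch.no_grad():
        for imgs, _ in loader:
            probs = torch.softmax(model(imgs.to(device)), dim=-1)
            confidence, pred = probs.max(dim=-1)
            keep = (confidence > threshold).nonzero(as_tuple=True)[0]
            indices.extend((keep + offset).tolist())
            labels.extend(pred[keep].tolist())
            offset += imgs.size(0)
    model.train()  # back to training mode, as in the original function
    return PseudoLabeledDataset(dataset, indices, labels)


# Tiny usage check: nn.Identity passes inputs through, so each row acts as a logit vector.
x = torch.tensor([[10.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 10.0, 0.0], [1.0, 1.0, 1.0]])
toy = TensorDataset(x, torch.zeros(4, dtype=torch.long))
pseudo = get_pseudo_labels_sketch(toy, nn.Identity(), threshold=0.65, batch_size=2)
print(len(pseudo), [pseudo[i][1] for i in range(len(pseudo))])  # 2 [0, 1]
```

Only the confident rows 0 and 2 survive. Rows with uniform logits (probability 1/3 each) fall below the threshold. The threshold itself is a hyperparameter to tune.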

# "cuda" only when GPUs are available.
device = "cuda" if torch.cuda.is_available() else "cpu"

# Initialize a model, and put it on the device specified.
model = Classifier().to(device)
model.device = device

# For the classification task, we use cross-entropy as the measurement of performance.
criterion = nn.CrossEntropyLoss()

# Initialize optimizer, you may fine-tune some hyperparameters such as learning rate on your own.
optimizer = torch.optim.Adam(model.parameters(), lr=0.0003, weight_decay=1e-5)

# The number of training epochs.
n_epochs = 80

# Whether to do semi-supervised learning.
do_semi = False

for epoch in range(n_epochs):
    # ---------- TODO ----------
    # In each epoch, relabel the unlabeled dataset for semi-supervised learning.
    # Then you can combine the labeled dataset and pseudo-labeled dataset for the training.
    if do_semi:
        # Obtain pseudo-labels for unlabeled data using trained model.
        pseudo_set = get_pseudo_labels(unlabeled_set, model)

        # Construct a new dataset and a data loader for training.
        # This is used in semi-supervised learning only.
        concat_dataset = ConcatDataset([train_set, pseudo_set])
        train_loader = DataLoader(concat_dataset, batch_size=batch_size, shuffle=True, num_workers=8, pin_memory=True)

    # ---------- Training ----------
    # Make sure the model is in train mode before training.
    model.train()  # BatchNorm uses batch statistics and Dropout is active

    # These are used to record information in training.
    train_loss = []
    train_accs = []

    # Iterate the training set by batches.
    for batch in tqdm(train_loader):
        # A batch consists of image data and corresponding labels.
        imgs, labels = batch

        # Forward the data. (Make sure data and model are on the same device.)
        logits = model(imgs.to(device))

        # Calculate the cross-entropy loss.
        # We don't need to apply softmax before computing cross-entropy as it is done automatically.
        loss = criterion(logits, labels.to(device))

        # Gradients stored in the parameters in the previous step should be cleared out first.
        optimizer.zero_grad()
        # Compute the gradients for parameters.
        loss.backward()
        # Clip the gradient norms for stable training.
        grad_norm = nn.utils.clip_grad_norm_(model.parameters(), max_norm=10)
        # Update the parameters with computed gradients.
        optimizer.step()

        # Compute the accuracy for current batch.
        acc = (logits.argmax(dim=-1) == labels.to(device)).float().mean()

        # Record the loss and accuracy.
        train_loss.append(loss.item())
        train_accs.append(acc)

    # The average loss and accuracy of the training set are the averages of the recorded values.
    train_loss = sum(train_loss) / len(train_loss)
    train_acc = sum(train_accs) / len(train_accs)

    # Print the information.
    print(f"[ Train | {epoch + 1:03d}/{n_epochs:03d} ] loss = {train_loss:.5f}, acc = {train_acc:.5f}")

    # ---------- Validation ----------
    # Make sure the model is in eval mode so that some modules like dropout are disabled and work normally.
    model.eval()  # Dropout is disabled and BatchNorm uses its running statistics learned during training

    # These are used to record information in validation.
    valid_loss = []
    valid_accs = []

    # Iterate the validation set by batches.
    for batch in tqdm(valid_loader):
        # A batch consists of image data and corresponding labels.
        imgs, labels = batch

        # We don't need gradient in validation.
        # Using torch.no_grad() accelerates the forward process.
        with torch.no_grad():
            logits = model(imgs.to(device))

        # We can still compute the loss (but not the gradient).
        loss = criterion(logits, labels.to(device))

        # Compute the accuracy for current batch.
        acc = (logits.argmax(dim=-1) == labels.to(device)).float().mean()

        # Record the loss and accuracy.
        valid_loss.append(loss.item())
        valid_accs.append(acc)

    # The average loss and accuracy for the entire validation set are the averages of the recorded values.
    valid_loss = sum(valid_loss) / len(valid_loss)
    valid_acc = sum(valid_accs) / len(valid_accs)

    # Print the information.
    print(f"[ Valid | {epoch + 1:03d}/{n_epochs:03d} ] loss = {valid_loss:.5f}, acc = {valid_acc:.5f}")

0%| | 0/25 [00:00<?, ?it/s]/usr/local/lib/python3.7/dist-packages/torch/utils/data/dataloader.py:560: UserWarning: This DataLoader will create 8 worker processes in total. Our suggested max number of worker in current system is 2, which is smaller than what this DataLoader is going to create. Please be aware that excessive worker creation might get DataLoader running slow or even freeze, lower the worker number to avoid potential slowness/freeze if necessary. cpuset_checked)) 100%|██████████| 25/25 [00:19<00:00, 1.28it/s]

[ Train | 001/080 ] loss = 2.34443, acc = 0.16750

100%|██████████| 6/6 [00:05<00:00, 1.17it/s]

[ Valid | 001/080 ] loss = 2.43480, acc = 0.16250

100%|██████████| 25/25 [00:19<00:00, 1.25it/s]

[ Train | 002/080 ] loss = 2.03105, acc = 0.28687

100%|██████████| 6/6 [00:05<00:00, 1.19it/s]

[ Valid | 002/080 ] loss = 2.11054, acc = 0.23828

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 003/080 ] loss = 1.83839, acc = 0.34719

100%|██████████| 6/6 [00:05<00:00, 1.19it/s]

[ Valid | 003/080 ] loss = 1.87634, acc = 0.30911

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 004/080 ] loss = 1.69898, acc = 0.40687

100%|██████████| 6/6 [00:05<00:00, 1.12it/s]

[ Valid | 004/080 ] loss = 1.77105, acc = 0.37656

100%|██████████| 25/25 [00:19<00:00, 1.30it/s]

[ Train | 005/080 ] loss = 1.53948, acc = 0.45188

100%|██████████| 6/6 [00:05<00:00, 1.01it/s]

[ Valid | 005/080 ] loss = 1.64888, acc = 0.43359

100%|██████████| 25/25 [00:19<00:00, 1.31it/s]

[ Train | 006/080 ] loss = 1.45473, acc = 0.49781

100%|██████████| 6/6 [00:05<00:00, 1.02it/s]

[ Valid | 006/080 ] loss = 1.69650, acc = 0.37448

100%|██████████| 25/25 [00:18<00:00, 1.32it/s]

[ Train | 007/080 ] loss = 1.31423, acc = 0.55312

100%|██████████| 6/6 [00:05<00:00, 1.14it/s]

[ Valid | 007/080 ] loss = 1.81194, acc = 0.35286

100%|██████████| 25/25 [00:19<00:00, 1.28it/s]

[ Train | 008/080 ] loss = 1.23088, acc = 0.57313

100%|██████████| 6/6 [00:04<00:00, 1.23it/s]

[ Valid | 008/080 ] loss = 1.59559, acc = 0.47578

100%|██████████| 25/25 [00:20<00:00, 1.23it/s]

[ Train | 009/080 ] loss = 1.10100, acc = 0.62344

100%|██████████| 6/6 [00:05<00:00, 1.19it/s]

[ Valid | 009/080 ] loss = 2.03977, acc = 0.37865

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 010/080 ] loss = 1.02243, acc = 0.66531

100%|██████████| 6/6 [00:05<00:00, 1.12it/s]

[ Valid | 010/080 ] loss = 1.60213, acc = 0.44063

100%|██████████| 25/25 [00:19<00:00, 1.30it/s]

[ Train | 011/080 ] loss = 0.92395, acc = 0.70906

100%|██████████| 6/6 [00:05<00:00, 1.01it/s]

[ Valid | 011/080 ] loss = 1.59998, acc = 0.46667

100%|██████████| 25/25 [00:18<00:00, 1.34it/s]

[ Train | 012/080 ] loss = 0.81873, acc = 0.73594

100%|██████████| 6/6 [00:05<00:00, 1.00it/s]

[ Valid | 012/080 ] loss = 1.86632, acc = 0.40703

100%|██████████| 25/25 [00:18<00:00, 1.33it/s]

[ Train | 013/080 ] loss = 0.76258, acc = 0.75375

100%|██████████| 6/6 [00:05<00:00, 1.03it/s]

[ Valid | 013/080 ] loss = 1.61422, acc = 0.45807

100%|██████████| 25/25 [00:18<00:00, 1.33it/s]

[ Train | 014/080 ] loss = 0.61992, acc = 0.81562

100%|██████████| 6/6 [00:05<00:00, 1.18it/s]

[ Valid | 014/080 ] loss = 1.84777, acc = 0.44193

100%|██████████| 25/25 [00:19<00:00, 1.26it/s]

[ Train | 015/080 ] loss = 0.58303, acc = 0.82875

100%|██████████| 6/6 [00:04<00:00, 1.22it/s]

[ Valid | 015/080 ] loss = 1.75295, acc = 0.43438

100%|██████████| 25/25 [00:19<00:00, 1.26it/s]

[ Train | 016/080 ] loss = 0.47521, acc = 0.85812

100%|██████████| 6/6 [00:05<00:00, 1.20it/s]

[ Valid | 016/080 ] loss = 2.03259, acc = 0.42109

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 017/080 ] loss = 0.43429, acc = 0.88156

100%|██████████| 6/6 [00:04<00:00, 1.21it/s]

[ Valid | 017/080 ] loss = 1.86211, acc = 0.46068

100%|██████████| 25/25 [00:19<00:00, 1.29it/s]

[ Train | 018/080 ] loss = 0.38475, acc = 0.90437

100%|██████████| 6/6 [00:05<00:00, 1.04it/s]

[ Valid | 018/080 ] loss = 1.94235, acc = 0.44036

100%|██████████| 25/25 [00:18<00:00, 1.34it/s]

[ Train | 019/080 ] loss = 0.36728, acc = 0.89219

100%|██████████| 6/6 [00:05<00:00, 1.02it/s]

[ Valid | 019/080 ] loss = 1.89044, acc = 0.47370

100%|██████████| 25/25 [00:18<00:00, 1.35it/s]

[ Train | 020/080 ] loss = 0.28030, acc = 0.92687

100%|██████████| 6/6 [00:06<00:00, 1.01s/it]

[ Valid | 020/080 ] loss = 1.88211, acc = 0.51068

100%|██████████| 25/25 [00:18<00:00, 1.33it/s]

[ Train | 021/080 ] loss = 0.19960, acc = 0.95875

100%|██████████| 6/6 [00:05<00:00, 1.13it/s]

[ Valid | 021/080 ] loss = 2.07585, acc = 0.46771

100%|██████████| 25/25 [00:19<00:00, 1.30it/s]

[ Train | 022/080 ] loss = 0.15475, acc = 0.97344

100%|██████████| 6/6 [00:04<00:00, 1.24it/s]

[ Valid | 022/080 ] loss = 1.97695, acc = 0.48359

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 023/080 ] loss = 0.11007, acc = 0.98750

100%|██████████| 6/6 [00:05<00:00, 1.18it/s]

[ Valid | 023/080 ] loss = 1.98879, acc = 0.47344

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 024/080 ] loss = 0.08960, acc = 0.99031

100%|██████████| 6/6 [00:05<00:00, 1.20it/s]

[ Valid | 024/080 ] loss = 2.43323, acc = 0.44349

100%|██████████| 25/25 [00:19<00:00, 1.28it/s]

[ Train | 025/080 ] loss = 0.13294, acc = 0.97250

100%|██████████| 6/6 [00:05<00:00, 1.16it/s]

[ Valid | 025/080 ] loss = 2.30003, acc = 0.45260

100%|██████████| 25/25 [00:18<00:00, 1.32it/s]

[ Train | 026/080 ] loss = 0.11813, acc = 0.97938

100%|██████████| 6/6 [00:05<00:00, 1.01it/s]

[ Valid | 026/080 ] loss = 2.09538, acc = 0.48828

100%|██████████| 25/25 [00:18<00:00, 1.37it/s]

[ Train | 027/080 ] loss = 0.10780, acc = 0.97375

100%|██████████| 6/6 [00:05<00:00, 1.01it/s]

[ Valid | 027/080 ] loss = 2.26111, acc = 0.48438

100%|██████████| 25/25 [00:18<00:00, 1.33it/s]

[ Train | 028/080 ] loss = 0.06275, acc = 0.98781

100%|██████████| 6/6 [00:05<00:00, 1.02it/s]

[ Valid | 028/080 ] loss = 2.15264, acc = 0.52266

100%|██████████| 25/25 [00:19<00:00, 1.31it/s]

[ Train | 029/080 ] loss = 0.05914, acc = 0.99344

100%|██████████| 6/6 [00:04<00:00, 1.20it/s]

[ Valid | 029/080 ] loss = 2.42067, acc = 0.45234

100%|██████████| 25/25 [00:19<00:00, 1.26it/s]

[ Train | 030/080 ] loss = 0.03840, acc = 0.99687

100%|██████████| 6/6 [00:04<00:00, 1.21it/s]

[ Valid | 030/080 ] loss = 2.44925, acc = 0.49531

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 031/080 ] loss = 0.04458, acc = 0.99437

100%|██████████| 6/6 [00:04<00:00, 1.24it/s]

[ Valid | 031/080 ] loss = 2.44101, acc = 0.45260

100%|██████████| 25/25 [00:19<00:00, 1.29it/s]

[ Train | 032/080 ] loss = 0.11299, acc = 0.96562

100%|██████████| 6/6 [00:05<00:00, 1.19it/s]

[ Valid | 032/080 ] loss = 2.73481, acc = 0.42266

100%|██████████| 25/25 [00:19<00:00, 1.28it/s]

[ Train | 033/080 ] loss = 0.07245, acc = 0.98188

100%|██████████| 6/6 [00:05<00:00, 1.04it/s]

[ Valid | 033/080 ] loss = 2.60078, acc = 0.47995

100%|██████████| 25/25 [00:18<00:00, 1.32it/s]

[ Train | 034/080 ] loss = 0.05508, acc = 0.98750

100%|██████████| 6/6 [00:05<00:00, 1.01it/s]

[ Valid | 034/080 ] loss = 2.65232, acc = 0.45208

100%|██████████| 25/25 [00:18<00:00, 1.33it/s]

[ Train | 035/080 ] loss = 0.04791, acc = 0.99125

100%|██████████| 6/6 [00:05<00:00, 1.01it/s]

[ Valid | 035/080 ] loss = 2.57021, acc = 0.43021

100%|██████████| 25/25 [00:18<00:00, 1.33it/s]

[ Train | 036/080 ] loss = 0.03548, acc = 0.99312

100%|██████████| 6/6 [00:05<00:00, 1.09it/s]

[ Valid | 036/080 ] loss = 2.86329, acc = 0.45990

100%|██████████| 25/25 [00:19<00:00, 1.29it/s]

[ Train | 037/080 ] loss = 0.03870, acc = 0.99531

100%|██████████| 6/6 [00:04<00:00, 1.22it/s]

[ Valid | 037/080 ] loss = 2.78237, acc = 0.45547

100%|██████████| 25/25 [00:19<00:00, 1.28it/s]

[ Train | 038/080 ] loss = 0.06900, acc = 0.97750

100%|██████████| 6/6 [00:04<00:00, 1.21it/s]

[ Valid | 038/080 ] loss = 2.84029, acc = 0.44297

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 039/080 ] loss = 0.05544, acc = 0.99219

100%|██████████| 6/6 [00:05<00:00, 1.19it/s]

[ Valid | 039/080 ] loss = 2.68335, acc = 0.47266

100%|██████████| 25/25 [00:19<00:00, 1.26it/s]

[ Train | 040/080 ] loss = 0.02517, acc = 0.99406

100%|██████████| 6/6 [00:05<00:00, 1.14it/s]

[ Valid | 040/080 ] loss = 2.64601, acc = 0.48490

100%|██████████| 25/25 [00:18<00:00, 1.32it/s]

[ Train | 041/080 ] loss = 0.03971, acc = 0.99000

100%|██████████| 6/6 [00:05<00:00, 1.04it/s]

[ Valid | 041/080 ] loss = 2.78689, acc = 0.48438

100%|██████████| 25/25 [00:18<00:00, 1.32it/s]

[ Train | 042/080 ] loss = 0.01862, acc = 0.99781

100%|██████████| 6/6 [00:05<00:00, 1.01it/s]

[ Valid | 042/080 ] loss = 2.65974, acc = 0.49167

100%|██████████| 25/25 [00:18<00:00, 1.34it/s]

[ Train | 043/080 ] loss = 0.01466, acc = 0.99937

100%|██████████| 6/6 [00:05<00:00, 1.00it/s]

[ Valid | 043/080 ] loss = 2.87220, acc = 0.45469

100%|██████████| 25/25 [00:18<00:00, 1.34it/s]

[ Train | 044/080 ] loss = 0.00687, acc = 1.00000

100%|██████████| 6/6 [00:05<00:00, 1.11it/s]

[ Valid | 044/080 ] loss = 2.91559, acc = 0.47813

100%|██████████| 25/25 [00:19<00:00, 1.31it/s]

[ Train | 045/080 ] loss = 0.00554, acc = 1.00000

100%|██████████| 6/6 [00:04<00:00, 1.20it/s]

[ Valid | 045/080 ] loss = 2.67901, acc = 0.49141

100%|██████████| 25/25 [00:19<00:00, 1.28it/s]

[ Train | 046/080 ] loss = 0.00587, acc = 1.00000

100%|██████████| 6/6 [00:04<00:00, 1.22it/s]

[ Valid | 046/080 ] loss = 2.66586, acc = 0.52214

100%|██████████| 25/25 [00:19<00:00, 1.26it/s]

[ Train | 047/080 ] loss = 0.02673, acc = 0.99375

100%|██████████| 6/6 [00:05<00:00, 1.19it/s]

[ Valid | 047/080 ] loss = 3.22420, acc = 0.47083

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 048/080 ] loss = 0.01578, acc = 0.99812

100%|██████████| 6/6 [00:04<00:00, 1.23it/s]

[ Valid | 048/080 ] loss = 2.86836, acc = 0.46823

100%|██████████| 25/25 [00:19<00:00, 1.28it/s]

[ Train | 049/080 ] loss = 0.00684, acc = 1.00000

100%|██████████| 6/6 [00:05<00:00, 1.02it/s]

[ Valid | 049/080 ] loss = 2.71167, acc = 0.51016

100%|██████████| 25/25 [00:18<00:00, 1.34it/s]

[ Train | 050/080 ] loss = 0.00401, acc = 1.00000

100%|██████████| 6/6 [00:05<00:00, 1.01it/s]

[ Valid | 050/080 ] loss = 2.76773, acc = 0.47552

100%|██████████| 25/25 [00:18<00:00, 1.32it/s]

[ Train | 051/080 ] loss = 0.00460, acc = 1.00000

100%|██████████| 6/6 [00:05<00:00, 1.02it/s]

[ Valid | 051/080 ] loss = 2.73334, acc = 0.51094

100%|██████████| 25/25 [00:18<00:00, 1.35it/s]

[ Train | 052/080 ] loss = 0.00917, acc = 0.99969

100%|██████████| 6/6 [00:05<00:00, 1.06it/s]

[ Valid | 052/080 ] loss = 2.90832, acc = 0.46953

100%|██████████| 25/25 [00:19<00:00, 1.32it/s]

[ Train | 053/080 ] loss = 0.03116, acc = 0.99250

100%|██████████| 6/6 [00:05<00:00, 1.17it/s]

[ Valid | 053/080 ] loss = 3.16132, acc = 0.48177

100%|██████████| 25/25 [00:19<00:00, 1.28it/s]

[ Train | 054/080 ] loss = 0.11769, acc = 0.96531

100%|██████████| 6/6 [00:05<00:00, 1.19it/s]

[ Valid | 054/080 ] loss = 3.22004, acc = 0.42448

100%|██████████| 25/25 [00:19<00:00, 1.29it/s]

[ Train | 055/080 ] loss = 0.09464, acc = 0.97094

100%|██████████| 6/6 [00:04<00:00, 1.24it/s]

[ Valid | 055/080 ] loss = 3.20812, acc = 0.44297

100%|██████████| 25/25 [00:19<00:00, 1.29it/s]

[ Train | 056/080 ] loss = 0.03831, acc = 0.99062

100%|██████████| 6/6 [00:04<00:00, 1.22it/s]

[ Valid | 056/080 ] loss = 2.86853, acc = 0.45729

100%|██████████| 25/25 [00:19<00:00, 1.30it/s]

[ Train | 057/080 ] loss = 0.06187, acc = 0.98188

100%|██████████| 6/6 [00:05<00:00, 1.20it/s]

[ Valid | 057/080 ] loss = 3.58281, acc = 0.41380

100%|██████████| 25/25 [00:19<00:00, 1.25it/s]

[ Train | 058/080 ] loss = 0.11841, acc = 0.96125

100%|██████████| 6/6 [00:05<00:00, 1.13it/s]

[ Valid | 058/080 ] loss = 3.20687, acc = 0.46016

100%|██████████| 25/25 [00:18<00:00, 1.32it/s]

[ Train | 059/080 ] loss = 0.08643, acc = 0.97656

100%|██████████| 6/6 [00:05<00:00, 1.01it/s]

[ Valid | 059/080 ] loss = 2.87604, acc = 0.49688

100%|██████████| 25/25 [00:19<00:00, 1.32it/s]

[ Train | 060/080 ] loss = 0.03724, acc = 0.99094

100%|██████████| 6/6 [00:05<00:00, 1.02it/s]

[ Valid | 060/080 ] loss = 3.42462, acc = 0.44115

100%|██████████| 25/25 [00:18<00:00, 1.33it/s]

[ Train | 061/080 ] loss = 0.02438, acc = 0.99531

100%|██████████| 6/6 [00:05<00:00, 1.01it/s]

[ Valid | 061/080 ] loss = 2.77505, acc = 0.52474

100%|██████████| 25/25 [00:18<00:00, 1.35it/s]

[ Train | 062/080 ] loss = 0.03341, acc = 0.99062

100%|██████████| 6/6 [00:05<00:00, 1.07it/s]

[ Valid | 062/080 ] loss = 3.09092, acc = 0.45651

100%|██████████| 25/25 [00:19<00:00, 1.31it/s]

[ Train | 063/080 ] loss = 0.01417, acc = 0.99812

100%|██████████| 6/6 [00:05<00:00, 1.19it/s]

[ Valid | 063/080 ] loss = 3.28436, acc = 0.49531

100%|██████████| 25/25 [00:18<00:00, 1.32it/s]

[ Train | 064/080 ] loss = 0.00537, acc = 0.99969

100%|██████████| 6/6 [00:04<00:00, 1.23it/s]

[ Valid | 064/080 ] loss = 3.28100, acc = 0.47500

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 065/080 ] loss = 0.00403, acc = 1.00000

100%|██████████| 6/6 [00:04<00:00, 1.23it/s]

[ Valid | 065/080 ] loss = 3.10975, acc = 0.49219

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 066/080 ] loss = 0.02055, acc = 0.99500

100%|██████████| 6/6 [00:04<00:00, 1.23it/s]

[ Valid | 066/080 ] loss = 3.32507, acc = 0.49714

100%|██████████| 25/25 [00:19<00:00, 1.26it/s]

[ Train | 067/080 ] loss = 0.07396, acc = 0.98281

100%|██████████| 6/6 [00:04<00:00, 1.24it/s]

[ Valid | 067/080 ] loss = 3.10141, acc = 0.47786

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 068/080 ] loss = 0.04053, acc = 0.99000

100%|██████████| 6/6 [00:04<00:00, 1.23it/s]

[ Valid | 068/080 ] loss = 3.23551, acc = 0.45339

100%|██████████| 25/25 [00:19<00:00, 1.27it/s]

[ Train | 069/080 ] loss = 0.05623, acc = 0.98125

100%|██████████| 6/6 [00:05<00:00, 1.16it/s]

[ Valid | 069/080 ] loss = 3.40882, acc = 0.49427

100%|██████████| 25/25 [00:19<00:00, 1.29it/s]

[ Train | 070/080 ] loss = 0.05050, acc = 0.98531

100%|██████████| 6/6 [00:05<00:00, 1.03it/s]

[ Valid | 070/080 ] loss = 3.77178, acc = 0.46042

100%|██████████| 25/25 [00:18<00:00, 1.33it/s]

[ Train | 071/080 ] loss = 0.01431, acc = 0.99781

100%|██████████| 6/6 [00:05<00:00, 1.02it/s]

[ Valid | 071/080 ] loss = 3.26253, acc = 0.43932

100%|██████████| 25/25 [00:18<00:00, 1.35it/s]

[ Train | 072/080 ] loss = 0.00360, acc = 1.00000

100%|██████████| 6/6 [00:06<00:00, 1.01s/it]

[ Valid | 072/080 ] loss = 3.04600, acc = 0.48750

100%|██████████| 25/25 [00:18<00:00, 1.34it/s]

[ Train | 073/080 ] loss = 0.00739, acc = 0.99500

100%|██████████| 6/6 [00:05<00:00, 1.02it/s]

[ Valid | 073/080 ] loss = 3.30978, acc = 0.47969

100%|██████████| 25/25 [00:18<00:00, 1.34it/s]

[ Train | 074/080 ] loss = 0.06233, acc = 0.98281

100%|██████████| 6/6 [00:05<00:00, 1.05it/s]

[ Valid | 074/080 ] loss = 3.78810, acc = 0.42812

100%|██████████| 25/25 [00:19<00:00, 1.31it/s]

[ Train | 075/080 ] loss = 0.05292, acc = 0.98094

100%|██████████| 6/6 [00:05<00:00, 1.16it/s]

[ Valid | 075/080 ] loss = 3.40485, acc = 0.47891

100%|██████████| 25/25 [00:19<00:00, 1.31it/s]

[ Train | 076/080 ] loss = 0.02980, acc = 0.99344

100%|██████████| 6/6 [00:04<00:00, 1.21it/s]

[ Valid | 076/080 ] loss = 3.04472, acc = 0.49531

100%|██████████| 25/25 [00:20<00:00, 1.25it/s]

[ Train | 077/080 ] loss = 0.01250, acc = 0.99750

100%|██████████| 6/6 [00:04<00:00, 1.22it/s]

[ Valid | 077/080 ] loss = 3.45230, acc = 0.45078

100%|██████████| 25/25 [00:19<00:00, 1.25it/s]

[ Train | 078/080 ] loss = 0.01533, acc = 0.99469

100%|██████████| 6/6 [00:04<00:00, 1.24it/s]

[ Valid | 078/080 ] loss = 3.26030, acc = 0.47734

100%|██████████| 25/25 [00:19<00:00, 1.26it/s]

[ Train | 079/080 ] loss = 0.01633, acc = 0.99594

100%|██████████| 6/6 [00:04<00:00, 1.21it/s]

[ Valid | 079/080 ] loss = 3.48171, acc = 0.45625

100%|██████████| 25/25 [00:19<00:00, 1.26it/s]

[ Train | 080/080 ] loss = 0.01063, acc = 0.99906

100%|██████████| 6/6 [00:05<00:00, 1.18it/s]

[ Valid | 080/080 ] loss = 3.21521, acc = 0.48438

# Compute the accuracy for current batch.

acc = (logits.argmax(dim=-1) == labels.to(device)).float().mean()

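- `argmax`: returns the index of the largest value along dimension `dim` (here the class dimension), i.e. the predicted class of each image.

- `==`: element-wise comparison, equivalent to `torch.eq`; it yields a bool tensor.

- `float`: converts `True`/`False` to `1.0`/`0.0`.

- `mean`: averages these values, giving the fraction of correct predictions in the batch.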

test code:

import torch

label = torch.tensor([1, 1, 1, 1, 1, 1, 1, 1])

print(label)

logits = torch.tensor([[0, 1],

[2, 0],

[0, 3],

[0, 4],

[5, 0],

[0, 6],

[7, 0],

[8, 0]])

predicted_label = logits.argmax(dim=-1)

print(predicted_label)

# bool_compare_result = torch.eq(predicted_label, label)

bool_compare_result = (predicted_label == label)

print(bool_compare_result)

float_compare_result = bool_compare_result.float()

print(float_compare_result)

accuracy = float_compare_result.mean()

print(accuracy)

accuracy = (logits.argmax(dim=-1) == label).float().mean()

print(accuracy)

output:

tensor([1, 1, 1, 1, 1, 1, 1, 1])

tensor([1, 0, 1, 1, 0, 1, 0, 0])

tensor([ True, False, True, True, False, True, False, False])

tensor([1., 0., 1., 1., 0., 1., 0., 0.])

tensor(0.5000)

tensor(0.5000)
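A comment in the training loop says softmax need not be applied before `nn.CrossEntropyLoss`. The check below shows why. The loss already applies `log_softmax` internally, then takes the negative log-probability of the true class and, by default, averages over the batch. The random logits and labels are placeholders.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(5, 11)              # raw model outputs for 5 images, 11 classes
labels = torch.tensor([0, 3, 10, 7, 3])  # placeholder ground-truth classes

ce = nn.CrossEntropyLoss()(logits, labels)

# The same value by hand: log-softmax, pick the true class per row, negate, average.
log_probs = F.log_softmax(logits, dim=-1)
manual = -log_probs[torch.arange(5), labels].mean()
print(torch.allclose(ce, manual))  # True

# Applying softmax first means softmax is effectively applied twice, giving a different loss.
wrong = nn.CrossEntropyLoss()(torch.softmax(logits, dim=-1), labels)
print(ce.item(), wrong.item())
```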

Testing

For inference, we need to make sure the model is in eval mode, and the order of the dataset should not be shuffled ("shuffle=False" in test_loader).

Last but not least, don't forget to save the predictions into a single CSV file. The format of CSV file should follow the rules mentioned in the slides.

WARNING -- Keep in Mind

Cheating includes, but is not limited to:

- using testing labels,

- submitting results to previous Kaggle competitions,

- sharing predictions with others,

- copying code from any creature on Earth,

- asking other people to do it for you.

Violations bring punishments ranging from a reduced final grade to failing the course.

It is your responsibility to check whether your code violates the rules. When citing code from the Internet, you should know exactly what it does. Breaking the rules and then claiming you don't know what the code does will NOT be tolerated.

# Make sure the model is in eval mode.
# Some modules like Dropout or BatchNorm behave differently in training mode.
model.eval()  # Dropout is disabled and BatchNorm uses its running statistics learned during training

# Initialize a list to store the predictions.
predictions = []

# Iterate the testing set by batches.
for batch in tqdm(test_loader):
    # A batch consists of image data and corresponding labels.
    # But here the variable "labels" is useless since we do not have the ground-truth.
    # If printing out the labels, you will find that it is always 0.
    # This is because the wrapper (DatasetFolder) returns images and labels for each batch,
    # so we have to create fake labels to make it work normally.
    imgs, labels = batch

    # We don't need gradient in testing, and we don't even have labels to compute loss.
    # Using torch.no_grad() accelerates the forward process.
    with torch.no_grad():
        logits = model(imgs.to(device))

    # Take the class with greatest logit as prediction and record it.
    predictions.extend(logits.argmax(dim=-1).cpu().numpy().tolist())

100%|██████████| 27/27 [00:25<00:00, 1.08it/s]

# Save predictions into the file.
with open("predict.csv", "w") as f:
    # The first row must be "Id,Category".
    f.write("Id,Category\n")

    # For the rest of the rows, each image id corresponds to a predicted class.
    for i, pred in enumerate(predictions):
        f.write(f"{i},{pred}\n")