Department of Data Science

Course: Tools and Techniques for Data Science

Instructor: Muhammad Arif Butt, Ph.D.

Learning agenda of this notebook

Section I: (Overview of Linear Algebra: Vectors)

- Overview of Vectors

- Scalars vs Vectors

- Mathematical and Graphical Representation of Vectors in $\mathbb{R}^2$

- Mathematical and Graphical Representation of Vectors in $\mathbb{R}^3$

- Hands on Implementation in Python

- Magnitude of a Vector (Vector Norms)

- Direction of a Vector

- Components of a Vector

- Components of a Vector in $\mathbb{R}^2$

- Components of a Vector in $\mathbb{R}^3$

- Two Fundamental Vector Operations

- Vector Addition

- Multiplying a Vector with Scalar value

- Basis and Unit Vectors

- Linear combination and Span of Vectors

- Vector to Vector Multiplication

- Vector Dot Product

- Vector Cross Product

Section II: (Overview of Linear Algebra: Matrices)

- Overview of Matrices

- Matrices and its Types

- Row Vector

- Column Vector

- Zero Matrix

- Ones Matrix

- Random Integer Matrix

- Square Matrix

- Symmetric Matrix

- Triangular Matrix

- Diagonal Matrix

- Identity Matrix

- Scalar Matrix

- Orthogonal Matrix

- Matrix Operations

- Matrix Addition

- Matrix-Scalar Multiplication

- Matrix Multiplication (Hadamard Product)

- Matrix Multiplication (Dot Product)

- Matrix-Vector Multiplication

- Frobenius Norms

- Transpose of a Matrix

- Determinant of a Matrix

- Inverse of a Matrix

- Trace of a Matrix

- Rank of a Matrix

Section III: (Solving System of Linear Equations)

- An overview of Linear Equations

- What is a Linear Equation?

- What is a system of Linear Equations?

- How to solve a system of Linear Equations?

- Substitution strategy

- Elimination strategy

- Graphing strategy

- Consistent vs Inconsistent System of Linear Equations

- Plotting a Linear Equation with Three Variables

- Solving set of Three Linear Equations with Three variables

- Solving System of Linear Equations using Matrix Algebra

- Writing a system of Linear Equations in Matrix form

- Solving system of Linear Equations using Gaussian Elimination Method

- Solving system of Linear Equations using Gauss Jordan Method

- Solving system of Linear Equations using Cramer's Rule

- Solving system of Linear Equations using Matrix Inverse Method

- Limitations of Matrix Inversion Method

- Categories of System of Linear Equations

- Standard systems

- Overdetermined systems

- Underdetermined systems

- Solving Inconsistent Overdetermined System of Linear Equations using Least Squares Method

- Modeling Linear Equations in Machine Learning with 2 variables

- Simple Linear Regression using Least Squares Method

- Modeling Linear Equations in Machine Learning with m variables

- Multiple Linear Regression using Ordinary Least Squares (OLS) Method

Section IV: (Linear Transformation and Matrices)

Section V: (Eigen Decomposition and its Applications)

Section VI: (Singular Value Decomposition and its Applications)

# Unlike the other modules we have been working with so far, you have to download and install...

# To install this library in a Jupyter notebook

import sys

!{sys.executable} -m pip install -q --upgrade pip

import numpy as np

import numpy.linalg

import math

import scipy

from matplotlib import pyplot as plt

from plot_helper import * # Helper functions: plot_vector, plot_linear_transformation, plot_linear_transformations

Section 1: (Overview of Linear Algebra: Vectors)

Some of the code in this notebook is adapted from:

1. Overview of Vectors

a. Scalars vs Vectors

- A quantity that has magnitude but no particular direction is called a scalar. Examples include length, speed, mass, density, pressure, work, power, temperature, area, and volume.

- A quantity that has both magnitude and direction is called a vector. Examples include displacement, velocity, weight, and force.

- For example, to describe a body's velocity completely, we must state both its magnitude and its direction: how fast it is going (distance covered per unit time) and which way it is headed. Saying that a car is moving at 40 km/hr describes only its speed. Saying that a car is moving at 40 km/hr and is headed North describes its velocity, because it gives both the magnitude of the car's motion and the direction in which it is headed.

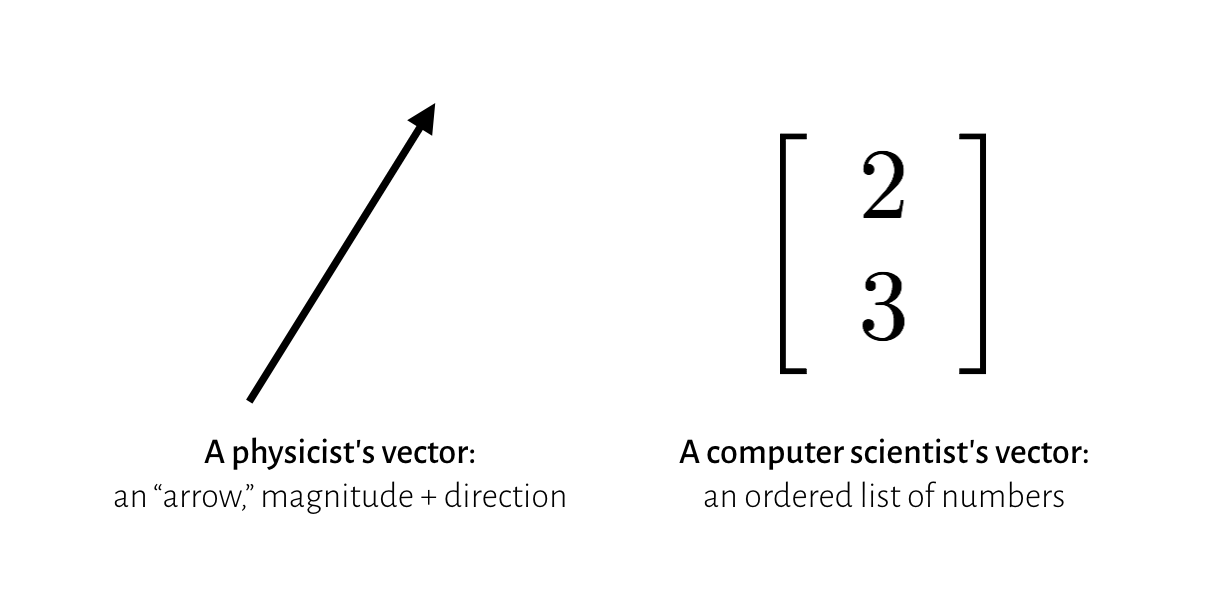

We come across the concept of vectors in physics, engineering, mathematics, computer science, and more. Each field interprets a vector slightly differently:

- In physics, we represent a vector as an arrow of a specific length, representing its magnitude, drawn at a specific angle, representing its direction. It can represent directional quantities such as velocity, force, and acceleration.

- In computer science, a vector is an ordered list of numbers. For example, the price, area, and number of bedrooms of a house, or the age, weight, and blood pressure of a person.

- In mathematics, vectors are generic objects that behave in a certain way, when they are added or scaled: $\mathbf{u}+\mathbf{v}$, $\alpha\mathbf{v}$.

Vectors can be 2-dimensional, 3-dimensional, and so on up to n-dimensional. Two- and three-dimensional vectors are easy to visualize, and once we understand vector operations in two dimensions, we can carry the same concepts over to higher dimensions. For example, to model the age, weight, daily hours of sleep, weekly hours of exercise, and blood pressure of an individual, we need a five-dimensional vector.
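As a small illustration of the last example, the sketch below stores such a five-dimensional vector as a NumPy array. The numbers are made-up, hypothetical values for one person; the array's shape confirms that it is a vector in $\mathbb{R}^5$.

```python
import numpy as np

# Hypothetical values for one person: age (years), weight (kg),
# daily sleep (hours), weekly exercise (hours), blood pressure (mmHg)
person = np.array([35, 72.5, 7.0, 4.0, 120])

print(person)        # the feature vector
print(person.shape)  # (5,) -> a vector in R^5
print(len(person))   # 5 components
```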

b. Mathematical and Graphical Representation of Vectors in $\mathbb{R}^2$

Algebraically

- Algebraically, a vector is usually denoted by a lowercase letter and written either as a comma-separated list of numbers written horizontally, or as a column of numbers written from top to bottom.

- The number of scalar values in a vector (its length in the list sense) is called the order or dimension of the vector. Do not confuse this with the geometric length (magnitude) of the vector, which is discussed later.

- For example, in $\mathbb{R}^2$ space, a vector $\overrightarrow{\rm v}$ can be written algebraically as:

$\hspace{2 cm}\overrightarrow{\rm v} = (a, b) = \begin{bmatrix} a \\ b \end{bmatrix} \hspace{2 cm}\overrightarrow{\rm v} = (2, 5) = \begin{bmatrix} 2 \\ 5 \end{bmatrix}$

$\hspace{2 cm}\overrightarrow{\rm v} = a\hat{i} + b\hat{j} \hspace{3 cm} \overrightarrow{\rm v} = 2\hat{i} + 5\hat{j}$

$\hspace{2 cm}\overrightarrow{\rm v} = a\begin{bmatrix} 1 \\ 0 \end{bmatrix} + b\begin{bmatrix} 0 \\ 1 \end{bmatrix} \hspace{1.5 cm} \overrightarrow{\rm v} = 2\begin{bmatrix} 1 \\ 0 \end{bmatrix} + 5\begin{bmatrix} 0 \\ 1 \end{bmatrix} $
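The three notations above can be mirrored in NumPy. This is a minimal sketch (not part of the original notebook) showing the vector $(2, 5)$ as a tuple, as a 1-D array, as a $2 \times 1$ column, and as the combination $2\hat{i} + 5\hat{j}$; the variable names are illustrative only.

```python
import numpy as np

# The same vector v = (2, 5) in three common forms
v_tuple = (2, 5)                  # ordered pair
v_row = np.array([2, 5])          # 1-D NumPy array, shape (2,)
v_col = np.array([[2], [5]])      # column vector, shape (2, 1)

# v = 2*i_hat + 5*j_hat using the standard basis vectors
i_hat = np.array([1, 0])
j_hat = np.array([0, 1])
v_combo = 2 * i_hat + 5 * j_hat

print(v_row.shape, v_col.shape)               # (2,) (2, 1)
print(v_combo)                                # [2 5]
print(np.array_equal(v_row, v_combo))         # True
print(np.array_equal(v_col.ravel(), v_row))   # True
```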

Graphically/Geometrically

- One can think of a vector as a point in space. Graphically (geometrically), a vector is represented by a directed line segment, i.e., an arrow, in a Cartesian coordinate system, whose length is the magnitude of the vector and whose angle with the positive x-axis represents its direction.

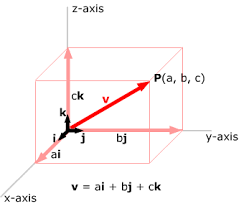

c. Mathematical and Graphical Representation of Vectors in $\mathbb{R}^3$

Algebraically

Algebraically, a vector in $\mathbb{R}^3$ consists of three scalar values, written either as a comma-separated list of numbers written horizontally or from top to bottom.

The number of scalar values is the order or dimension of the vector; in this case it is three. Algebraically, in $\mathbb{R}^3$ space, a vector $\overrightarrow{\rm v}$ can be written as:

$\hspace{2 cm}\overrightarrow{\rm v} = (a, b, c) = \begin{bmatrix} a \\ b \\ c \end{bmatrix} \hspace{5 cm}\overrightarrow{\rm v} = (2, 5, 3) = \begin{bmatrix} 2 \\ 5 \\ 3 \end{bmatrix}$

$\hspace{2 cm}\overrightarrow{\rm v} = a\hat{i} + b\hat{j} + c\hat{k} \hspace{5 cm} \overrightarrow{\rm v} = 2\hat{i} + 5\hat{j} + 3\hat{k}$

$\hspace{2 cm}\overrightarrow{\rm v} = a\begin{bmatrix} 1 \\ 0 \\0 \end{bmatrix} + b\begin{bmatrix} 0 \\ 1 \\0 \end{bmatrix} + c\begin{bmatrix} 0 \\ 0 \\1 \end{bmatrix} \hspace{3 cm} \overrightarrow{\rm v} = 2\begin{bmatrix} 1 \\ 0 \\0 \end{bmatrix} + 5\begin{bmatrix} 0 \\ 1 \\0 \end{bmatrix} + 3\begin{bmatrix} 0 \\ 0 \\1 \end{bmatrix}$
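The last formula can be checked numerically. This sketch (an illustration, not from the original notebook) takes the standard basis vectors of $\mathbb{R}^3$ from the rows of NumPy's identity matrix `np.eye(3)` and rebuilds $\overrightarrow{\rm v} = 2\hat{i} + 5\hat{j} + 3\hat{k}$.

```python
import numpy as np

# Rows of the 3x3 identity matrix are the standard basis vectors of R^3
i_hat, j_hat, k_hat = np.eye(3)

# v = 2*i_hat + 5*j_hat + 3*k_hat, as in the last formula above
v = 2 * i_hat + 5 * j_hat + 3 * k_hat
print(v)             # [2. 5. 3.]

# The same vector written top to bottom, as a 3x1 column
v_col = v.reshape(3, 1)
print(v_col.shape)   # (3, 1)
```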

Graphically/Geometrically

- A 3-D coordinate system has three dimensions, i.e., three mutually perpendicular axes: the x, y, and z-axes. Such a system is called a 3-dimensional rectangular coordinate system.

- Note that the third axis is the z-axis, and all three axes are perpendicular to each other.

- The vector $\overrightarrow{\rm v}$ is shown in bold red, with its three components a, b, and c along the x, y, and z-axes respectively.

- We can visualize vectors up to three dimensions; beyond three dimensions, we normally rely on algebraic notation.

d. Hands on Implementation in Python

Example 1: Creating Vector $[3,2]$ having tail at origin

# To better visualize and plot, you need to import the following module/script, which provides three helper functions:

# plot_vector(), plot_linear_transformation(), plot_linear_transformations()

from plot_helper import *

v = [(3,2)] # A list with a single tuple of two elements: the x and y components of the vector

plot_vector(v)

Example 2: Creating four vectors, one in each quadrant having their tails at origin

v = [(3, 3), (-3, 1), (-3,-2),(3,-2)] # A list having four tuples of two elements each

plot_vector(v)

Example 3: Creating a vector $[4,3]$ having its tail at $[2,2]$

v = [(4,3)] # A list with a single tuple of two elements: the x and y components of the vector

tail = [(2,2)] # A list with a single tuple of two elements: the tail of the vector

plot_vector(v, tail)

Example 4: Three vectors with their tails at $[2,2]$

v = [(1, 3), (3, 3), (4, 6)] # A list having three tuples of two elements each

tail = [(2, 2)] # A list having a single tuple of two elements

plot_vector(v, tail)

Example 5: Three vectors with different tails

v = [(1, 3), (4, 4), (4, 6)]

tails = [(3, 2), (-6, -6), (-1, 2)]

plot_vector(v, tails)

Example 6: Translate the three vectors above to the origin

v = [(1, 3), (4, 4), (4, 6)]

tails = [(0, 0), (0, 0), (0, 0)]

plot_vector(v, tails)
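Translating a vector changes where it is drawn but not its components. This sketch (an illustration using only NumPy, not the notebook's `plot_helper`) uses the vectors and tails of Example 5: each head is at tail + components, and subtracting the tail from the head recovers the same components, which is what drawing them from the origin shows.

```python
import numpy as np

# Components (x, y) and tail points from Example 5
v = np.array([(1, 3), (4, 4), (4, 6)])
tails = np.array([(3, 2), (-6, -6), (-1, 2)])

# The head of each vector is tail + components
heads = tails + v

# Translating to the origin: head - tail gives back the same components
translated = heads - tails
print(heads)
print(np.array_equal(translated, v))   # True
```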

2. Magnitude of a Vector (Vector Norms)

- The length of a vector is a non-negative number that describes the extent of the vector in space; it is also called the vector's magnitude or norm.

- We can compute the magnitude of a 2-dimensional, 3-dimensional, and in general an n-dimensional vector in two ways:

- By graphically drawing the vector in a coordinate system

- By using different vector norms.

L2 Norm

- The most commonly used norm for calculating the magnitude of a vector is the L2 norm.

- The L2 norm calculates the distance of the vector's head from the origin of the vector space. As such, it is also known as the Euclidean norm, since it is calculated as the Euclidean distance from the origin. The result is a non-negative distance value, which is zero only for the zero vector.

- The L2 norm is calculated as the square root of the sum of the squared vector values.

$\left\lVert x \right\rVert_2$ $=$ $\sqrt{\sum_{i=1}^n x_i^2}$
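The formula works the same way in any number of dimensions. This sketch (not from the original notebook) computes the L2 norm of the 3-D vector $(2, 5, 3)$ directly from the formula and compares it with `np.linalg.norm`, whose default for a 1-D array is the L2 norm.

```python
import numpy as np

x = np.array([2, 5, 3])

# L2 norm straight from the formula: square root of the sum of squares
l2_formula = np.sqrt(np.sum(x ** 2))

# Same value from NumPy (ord=2 is the default for 1-D input)
l2_numpy = np.linalg.norm(x)

print(l2_formula, l2_numpy)               # both are sqrt(38), about 6.164
print(np.isclose(l2_formula, l2_numpy))   # True
```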

Squared L2 Norm

- The squared L2 norm is computationally cheaper to use than the L2 norm, because it avoids the square root.

- The squared L2 norm equals the dot product of a vector with itself, i.e., $x^T x$ for a column vector $x$.

- The squared L2 norm is calculated as the sum of the squared vector values.

$\left\lVert x \right\rVert_2^2$ $=$ $\sum_{i=1}^n x_i^2$
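To see that the squared L2 norm is the dot product of a vector with itself, this sketch (an illustration, not from the original notebook) computes it three ways for $(4, 6)$: as a sum of squares, with the `@` dot-product operator, and by squaring `np.linalg.norm`. The first two need no square root.

```python
import numpy as np

x = np.array([4, 6])

sq_sum = np.sum(x ** 2)             # sum of squared components
sq_dot = x @ x                      # dot product of x with itself
sq_norm = np.linalg.norm(x) ** 2    # squaring the L2 norm (takes a sqrt first)

print(sq_sum, sq_dot)               # 52 52
print(np.isclose(sq_norm, 52))      # True
```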

L1 Norm

- Another way to calculate the magnitude of a vector is the L1 norm.

- The L1 norm is calculated as the sum of the absolute vector values. The L1 norm is also known as the taxicab norm or the Manhattan norm.

- In several machine learning applications, it is important to discriminate between elements that are exactly zero and elements that are small but nonzero. In such cases, we use the L1 norm, because it grows at the same rate everywhere: changing an element from 0 to a small value ε increases the L1 norm by exactly ε.

$\left\lVert x \right\rVert_1$ $=$ $\sum_{i=1}^n |x_i|$
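This sketch (not from the original notebook; the vectors are arbitrary examples) computes the L1 norm from the formula and with `np.linalg.norm(..., ord=1)`, then nudges one element from exactly 0 to a small value `eps`. The L1 norm grows by `eps`, while the squared L2 norm grows only by `eps**2`, which is why L1 is preferred when small nonzero values must stand out.

```python
import numpy as np

x = np.array([3, -4, 0, 0.5])

# L1 norm from the formula: sum of absolute values
l1_formula = np.sum(np.abs(x))
l1_numpy = np.linalg.norm(x, ord=1)
print(l1_formula, l1_numpy)   # 7.5 7.5

# Nudge one element from exactly 0 to a small nonzero value eps
eps = 0.01
base = np.array([3.0, 0.0])
nudged = np.array([3.0, eps])

# The L1 norm grows by eps, the squared L2 norm only by eps**2
l1_change = np.linalg.norm(nudged, ord=1) - np.linalg.norm(base, ord=1)
sq_l2_change = np.sum(nudged ** 2) - np.sum(base ** 2)
print(l1_change, sq_l2_change)   # about 0.01 and about 0.0001
```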

Max Norm (L∞)

- The length of a vector can also be calculated using the maximum norm, also called the max norm and written L∞.

- The max norm is calculated as the maximum absolute value among the vector's elements, hence the name.

$\left\lVert x \right\rVert_\infty$ $=$ $\max_{i} |x_i|$
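Note that the max norm takes the largest absolute value, not the largest raw value. In this sketch (an illustration, not from the original notebook) the largest raw element of $(-7, 2, 5)$ is 5, but its max norm is 7. It also checks the standard inequality $\lVert x\rVert_\infty \le \lVert x\rVert_2 \le \lVert x\rVert_1$, which holds for every vector.

```python
import numpy as np

x = np.array([-7, 2, 5])

linf_formula = np.max(np.abs(x))             # largest absolute component
linf_numpy = np.linalg.norm(x, ord=np.inf)   # NumPy's max norm

l1 = np.linalg.norm(x, ord=1)   # 14
l2 = np.linalg.norm(x, ord=2)   # sqrt(78), about 8.83

print(linf_formula, linf_numpy)
# For any vector: L-inf <= L2 <= L1
print(linf_numpy <= l2 <= l1)   # True
```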

Check your Concepts

Determine the magnitude of the following vectors:

- X = 20m, North

- A = (-1, -2/3)

- F = (4, 10)

- V = (2, 5, 3)

- T = (0, 2, -1)

- $\overrightarrow{\rm AB}$ whose starting point is at A = (-1, 0, 3) and ending point is B = (5, 2, 0)
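To check your answers, here is a short sketch (not part of the original notebook) that computes the L2 magnitudes with `np.linalg.norm`. X = 20 m North already states its magnitude (20 m), so it is omitted. For $\overrightarrow{\rm AB}$ the components are B - A; the exercise uses the letter A both for a vector and for a point, so the point is named `A_pt` here.

```python
import numpy as np

vectors = {
    "A": np.array([-1, -2 / 3]),
    "F": np.array([4, 10]),
    "V": np.array([2, 5, 3]),
    "T": np.array([0, 2, -1]),
}

# AB runs from point A to point B, so its components are B - A
A_pt = np.array([-1, 0, 3])
B_pt = np.array([5, 2, 0])
vectors["AB"] = B_pt - A_pt        # (6, 2, -3)

for name, vec in vectors.items():
    print(f"|{name}| = {np.linalg.norm(vec):.4f}")
```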

Hands on Implementation in Python

Example: Determine the magnitude of vector $\overrightarrow{\rm AB}$ whose starting point is at $A = (-2, 2)$ and ending point is $B = (2, 8)$

import numpy as np

# Translate the vector to the origin: its components are head minus tail (B - A)

a = np.array([-2, 2])

b = np.array([2, 8])

v = np.array([b[0]-a[0], b[1]-a[1]]) # v = (4,6)

# Plotting both the vectors

vectors = [v, v ] # A list having two vectors

tails = [[-2,2], [0,0]]

plot_vector(vectors, tails)

print("v = ", v)

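# calculating L1 norm using NumPy

l1 = numpy.linalg.norm(v, ord=1)

# ...and recomputing it by hand (sum of absolute values); both give the same value

l1 = np.abs(v[0]) + np.abs(v[1])

print("L1 Norm = ",l1)

# calculating L2 norm using NumPy

l2 = numpy.linalg.norm(v, ord=2)

# ...and by hand (square root of the sum of squares)

l2 = (v[0]**2 + v[1]**2 )**(1/2)

print("L2 Norm = ",l2)

# calculating Squared L2 norm using NumPy

sq_l2 = (numpy.linalg.norm(v, ord=2))**2

# ...and by hand (sum of squares, no square root needed)

sq_l2 = (v[0]**2 + v[1]**2 )

print("Squared L2 Norm = ",sq_l2)

# calculating Max norm using NumPy

maxnorm = numpy.linalg.norm(v, ord=np.inf)

# ...and by hand (largest absolute component)

maxnorm = np.max([np.abs(v[0]), np.abs(v[1])])

print("L∞ = ", maxnorm)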

v = [4 6] L1 Norm = 10 L2 Norm = 7.211102550927978 Squared L2 Norm = 52 L∞ = 6

A vector whose tail is at the origin is called a position vector.

3. Direction of a Vector

- The direction of a vector v is the measure of the angle that it makes with the horizontal (the positive x-axis) in the plane.

- There are two commonly used units of measurement for angles:

- Degrees: A circle is divided into 360 equal degrees, and a degree is further divided into 60 equal parts called minutes, so seven and a half degrees can be written as 7 degrees and 30 minutes, or 7° 30'. Each minute is further divided into 60 equal parts called seconds; for instance, 2 degrees 5 minutes 30 seconds is written 2° 5' 30".

- Radians: The other common measurement for angles is radians. One radian is the angle subtended at the center of a circle by an arc whose length is equal to the radius of the circle. The circumference of a circle of radius r is 2πr, i.e., 2π radius-lengths, so it follows that 360° equals 2π radians.
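Python's standard `math` module provides `math.radians` and `math.degrees` for the degree/radian conversion. The degrees-minutes-seconds helper below, `dms_to_degrees`, is a hypothetical name written for this sketch; it simply applies 1' = 1/60° and 1" = 1/3600°.

```python
import math

# 360 degrees = 2*pi radians, so 1 degree = pi/180 radians
print(math.radians(180))          # approximately pi
print(math.degrees(2 * math.pi))  # approximately 360

# Hypothetical helper: degrees-minutes-seconds to decimal degrees
def dms_to_degrees(d, m, s=0):
    return d + m / 60 + s / 3600

print(dms_to_degrees(7, 30))      # 7.5
print(dms_to_degrees(2, 5, 30))   # about 2.0917
```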

Geometrically Measuring the Direction of a Vector:

- The most common way is to measure the angle counter-clockwise from the positive x-axis. This way the angle is always non-negative, between 0° and 360°.

- Another way is to measure the smallest angle that the vector forms with the horizontal axis. If it is measured clockwise, the angle is written with a negative sign.

Mathematically Measuring the Direction of a Vector:

- By using Inverse Tangent Formula:

$\theta = \tan^{-1}(y/x)$

- Note:

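- In Python, math.atan returns the angle in radians, which you can convert to degrees by multiplying it by 180/π.

- The inverse tangent formula only returns angles between -90° and 90°, i.e., the shortest angle from either the positive or the negative x-axis, in either the clockwise or counter-clockwise direction. You therefore have to check the signs of x and y to find the quadrant, as the examples below do. The formula is also undefined when x = 0.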
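A common alternative, not used in the examples of this notebook, is `math.atan2(y, x)` from Python's standard library. It looks at the signs of both coordinates, so it returns the correct angle in (-180°, 180°] for every quadrant and also handles x = 0. Taking the result modulo 360 gives the counter-clockwise angle from the positive x-axis directly. `direction_deg` is a hypothetical helper name for this sketch.

```python
import math

def direction_deg(x, y):
    """Counter-clockwise angle from the positive x-axis, in [0, 360)."""
    # atan2 uses the signs of y and x, so it already knows the quadrant
    angle = math.degrees(math.atan2(y, x))   # in (-180, 180]
    return angle % 360                       # shift negative angles into [0, 360)

for v in [(4, 6), (-4, 6), (-4, -6), (4, -6), (0, 5)]:
    print(v, round(direction_deg(*v), 2))

# math.atan(y / x) fails outright when x = 0
try:
    math.atan(5 / 0)
except ZeroDivisionError:
    print("math.atan(y/x) cannot handle x = 0; math.atan2(y, x) can")
```

The four non-axis vectors are the same ones used in Examples 1 to 4 below, so the printed angles can be compared with their quadrant-corrected results.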

Example 1: Find the direction of a vector whose coordinates are $(4, 6)$

v = np.array([4, 6])

print("v = ", v)

theta_rad = math.atan(v[1]/v[0]) # acos, asin, and atan take a ratio as input and return an angle in radians.

theta_deg = theta_rad*(180/math.pi) # so we need to convert it into degrees

print("Angle in radians: ", theta_rad)

print("Shortest angle from x-axis: ", theta_deg)

# Since both coordinates are positive, the vector lies in the first quadrant,

# so the angle is already measured from the positive x-axis

theta_deg = theta_deg + 0

print("Counter-Clockwise angle in degrees from positive x-axis: ", theta_deg)

plot_vector([v])

v = [4 6] Angle in radians: 0.982793723247329 Shortest angle from x-axis: 56.309932474020215 Counter-Clockwise angle in degrees from positive x-axis: 56.309932474020215

Example 2: Find the direction of a vector whose coordinates are $(-4, 6)$

v = np.array([-4, 6])

print("v = ", v)

theta_rad = math.atan(v[1]/v[0]) # acos, asin, and atan take a ratio as input and return an angle in radians.

theta_deg = theta_rad*(180/math.pi) # so we need to convert it into degrees

print("Angle in radians: ", theta_rad)

print("Shortest angle from x-axis: ", theta_deg)

# Since the x-coordinate is negative and the y-coordinate is positive, the vector lies in the second

# quadrant. Since the angle is negative, it is measured clockwise from the negative x-axis,

# so we add 180 degrees to get the counter-clockwise angle from the positive x-axis

theta_deg = theta_deg + 180

print("Counter-Clockwise angle in degrees from positive x-axis: ", theta_deg)

plot_vector([v])

v = [-4 6] Angle in radians: -0.982793723247329 Shortest angle from x-axis: -56.309932474020215 Counter-Clockwise angle in degrees from positive x-axis: 123.69006752597979

Example 3: Find the direction of a vector whose coordinates are $(-4, -6)$

v = np.array([-4, -6])

print("v = ", v)

theta_rad = math.atan(v[1]/v[0]) # acos, asin, and atan take a ratio as input and return an angle in radians.

theta_deg = theta_rad*(180/math.pi) # so we need to convert it into degrees

print("Angle in radians: ", theta_rad)

print("Angle in degrees: ", theta_deg)

# Since both coordinates are negative, the vector lies in the third quadrant.

# Since the angle is positive, it is measured counter-clockwise from the negative x-axis,

# so we add 180 degrees to get the counter-clockwise angle from the positive x-axis

theta_deg = theta_deg + 180

print("Angle in degrees from x-axis: ", theta_deg)

plot_vector([v])

v = [-4 -6] Angle in radians: 0.982793723247329 Angle in degrees: 56.309932474020215 Angle in degrees from x-axis: 236.30993247402023

Example 4: Find the direction of a vector whose coordinates are $(4, -6)$

v = np.array([4, -6])

print("v = ", v)

theta_rad = math.atan(v[1]/v[0]) # acos, asin, and atan take a ratio as input and return an angle in radians.

theta_deg = theta_rad*(180/math.pi) # so we need to convert it into degrees

print("Angle in radians: ", theta_rad)

print("Shortest angle from x-axis: ", theta_deg)

# Since the x-coordinate is positive and the y-coordinate is negative, the vector lies in the fourth quadrant.

# Since the angle is negative, it is measured clockwise from the positive x-axis,

# so we add 360 degrees to get the counter-clockwise angle from the positive x-axis

theta_deg = theta_deg + 360

print("Counter-Clockwise angle in degrees from positive x-axis: ", theta_deg)

plot_vector([v])

v = [ 4 -6] Angle in radians: -0.982793723247329 Shortest angle from x-axis: -56.309932474020215 Counter-Clockwise angle in degrees from positive x-axis: 303.69006752597977

4. Components of a Vector

a. Components of a Vector in $\mathbb{R}^2$

- Splitting a vector that lies at an angle into two vectors directed along the coordinate axes of a two-dimensional coordinate system gives its vector components.

- The two components of any vector can be found through this method of vector resolution.

- Suppose a vector $F$ makes an angle θ with the positive x-axis, measured counter-clockwise. Its head lies $F_x$ units towards the East (along the x-axis) and $F_y$ units towards the North (along the y-axis) of its tail. These two segments are the vector components of $F$, and together with $F$ they form a right-angled triangle in which $F$ is the hypotenuse.

- These two components can then be used to find the resultant vector's magnitude and direction.

$\cos\theta = \frac{F_x}{F} \implies F_x = F\cos\theta$

$\sin\theta = \frac{F_y}{F} \implies F_y = F\sin\theta$
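The two formulas above, and their inverses, fit into a pair of small functions. This is a sketch with hypothetical helper names (`to_components`, `from_components`) using only Python's `math` module; the inverse direction uses `math.hypot` for the magnitude and `math.atan2` for a quadrant-aware angle.

```python
import math

def to_components(magnitude, theta_deg):
    """Resolve a 2-D vector given by magnitude and angle (degrees, CCW from +x)."""
    theta = math.radians(theta_deg)
    return magnitude * math.cos(theta), magnitude * math.sin(theta)

def from_components(fx, fy):
    """Recover magnitude and angle (degrees, CCW from +x) from the components."""
    magnitude = math.hypot(fx, fy)                      # sqrt(fx**2 + fy**2)
    theta_deg = math.degrees(math.atan2(fy, fx)) % 360  # quadrant-aware angle
    return magnitude, theta_deg

fx, fy = to_components(10, 30)
print(round(fx, 2), round(fy, 2))   # 8.66 5.0
print(from_components(fx, fy))      # approximately (10.0, 30.0)
```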

Example 1: A force $\overrightarrow{\rm F}$ of 10 N is applied at an angle of 30° to the horizontal surface. Resolve the vector into its components. Verify your answer by calculating its magnitude and direction from its components.

# calculating x and y components of vector F

fx = 10*math.cos(30 * math.pi/180) # sin, cos and tan take input angle in radians and return a real value.

fy = 10*math.sin(30 * math.pi/180) # sin, cos and tan take input angle in radians and return a real value.

print("Fx: %.2f" %fx, "N")

print("Fy: %.2f" %fy, "N")

# calculating magnitude for verification

f = numpy.linalg.norm([fx, fy], ord=2)

print("|F| = ",f)

# calculating angle for verification

theta_rad = math.atan(fy/fx) # returned angle is in radians.

theta_deg = theta_rad*(180/math.pi) # convert it into degrees

# Since, x and y-components are both positive, that means the vector is in first quadrant

print("Angle: %.2f" % theta_deg, "degrees")

# Plot the vector

plot_vector([(fx,fy)])

Fx: 8.66 N Fy: 5.00 N |F| = 10.0 Angle: 30.00 degrees

Example 2: Given a vector $\mathbf{v}$ with a magnitude of 4 and a direction of 45 degrees in $\mathbb{R}^2$, find its x and y-components.

Verify your answer by calculating its magnitude and direction from its components.

# calculating x and y components of vector v

vx = 4*math.cos(45 * math.pi/180) # sin, cos and tan take input angle in radians and return a real value.

vy = 4*math.sin(45 * math.pi/180) # sin, cos and tan take input angle in radians and return a real value.

print("vx: %.2f" %vx)

print("vy: %.2f" %vy)

# calculating magnitude for verification

mag = numpy.linalg.norm([vx, vy], ord=2)

print("|v| = ", mag)

# calculating angle for verification

theta_rad = math.atan(vy/vx) # returned angle is in radians.

theta_deg = theta_rad*(180/math.pi) # convert it into degrees

# Since, x and y-components are both positive, that means the vector is in first quadrant

print("Angle: %.2f" % theta_deg, "degrees")

# Plot the vector

plot_vector([(vx,vy)])

vx: 2.83 vy: 2.83 |v| = 4.0 Angle: 45.00 degrees

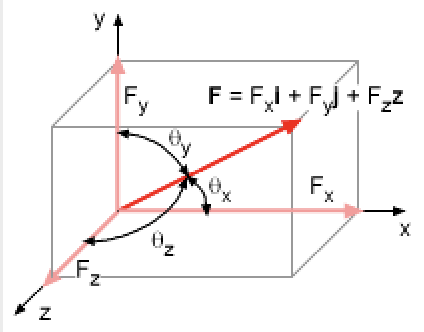

b. Components of a Vector in $\mathbb{R}^3$

In contrast to a vector in 2-D space, which has an x-component, a y-component, and one angle with the x-axis, a vector in 3-D space has an x-component, a y-component, and a z-component, and makes three angles, one with each axis.

Given these three angles and the magnitude of a vector, we can calculate its components using the following formulae:

- $F_x = F\cos\theta_x$, where $\theta_x$ is the angle between the vector and the x-axis

- $F_y = F\cos\theta_y$, where $\theta_y$ is the angle between the vector and the y-axis

- $F_z = F\cos\theta_z$, where $\theta_z$ is the angle between the vector and the z-axis

Consider a vector: $\overrightarrow{\rm v} = (4,6,4) = 4\hat{i} + 6\hat{j} + 4\hat{k}$

- Where $\hat{i}$, $\hat{j}$, and $\hat{k}$ are the unit vectors in the x, y and z directions, being multiplied by scalars 4, 6, and 4 respectively.
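Going the other way, the ratios $F_x/F$, $F_y/F$, $F_z/F$ are the cosines of the three angles (often called direction cosines). This sketch (not from the original notebook) computes the angles of $(4, 6, 4)$ with the three axes using `np.arccos`, checks that the squared cosines sum to 1, and recovers the components with the formulas above.

```python
import numpy as np

v = np.array([4, 6, 4])
mag = np.linalg.norm(v)              # sqrt(68), about 8.2462

# Direction cosines: cos(theta_axis) = component / magnitude
cosines = v / mag
angles_deg = np.degrees(np.arccos(cosines))
print(np.round(angles_deg, 2))       # angles with the x, y and z axes

# The squared direction cosines always sum to 1
print(np.isclose(np.sum(cosines ** 2), 1.0))   # True

# F_axis = |F| * cos(theta_axis) recovers the components
print(mag * np.cos(np.radians(angles_deg)))     # [4. 6. 4.] up to rounding
```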

Example 1: Given a vector with a magnitude of 8.2463 that makes angles of 60.98, 43.3, and 60.98 degrees with the x, y, and z-axes respectively in $\mathbb{R}^3$, find its x, y, and z-components. Verify your answer by calculating the vector's magnitude from its components.

# calculating x, y and z components of the vector

fx = 8.2463*math.cos(60.98 * math.pi/180)

fy = 8.2463*math.cos(43.3 * math.pi/180)

fz = 8.2463*math.cos(60.98 * math.pi/180)

print("fx: %.2f" %fx)

print("fy: %.2f" %fy)

print("fz: %.2f" %fz)

# calculating magnitude for verification

mag = numpy.linalg.norm([fx, fy, fz], ord=2)

print("|f| = %.3f" %mag)

fx: 4.00 fy: 6.00 fz: 4.00 |f| = 8.248

The small difference from 8.2463 comes from rounding the given angles.

5. Two Fundamental Vector Operations

a. Vector Addition

- Two vectors of the same dimension can be added together to create a new, third vector, written as:

$ \hspace{5.0cm} \overrightarrow{\rm c} = \overrightarrow{\rm a} + \overrightarrow{\rm b} $

Vector addition can be performed graphically using the head-to-tail method, as shown in this figure. First, the two vectors a and b are placed so that the head of vector a touches the tail of vector b. Next, to find the sum, a resultant vector c is drawn from the tail of a to the head of b. Vector addition can also be done by simply performing element-by-element addition:

$ \hspace{3.0cm} \overrightarrow{\rm c} = (a_1 + b_1, a_2 + b_2, a_3 + b_3) $
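In NumPy, `+` on two arrays of the same shape performs exactly this element-by-element addition. The sketch below (arbitrary example vectors, not from the original notebook) compares it with an explicit loop, checks that addition is commutative, and shows that NumPy refuses to add a 2-D and a 3-D vector.

```python
import numpy as np

a = np.array([1, -2, 4])
b = np.array([3, 5, -1])

c = a + b                                            # (a1+b1, a2+b2, a3+b3)
c_loop = np.array([a[i] + b[i] for i in range(len(a))])

print(c)                                             # [4 3 3]
print(np.array_equal(c, c_loop))                     # True
print(np.array_equal(a + b, b + a))                  # True: a + b = b + a

# Vectors of different dimensions cannot be added
try:
    np.array([1, 2]) + np.array([1, 2, 3])
except ValueError as err:
    print("Cannot add vectors of different dimensions:", err)
```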

Example 1: Consider a vector $\overrightarrow{\rm a}$ with its tail at point $(-1, 3)$ and its head at point $(5,2)$, and another vector $\overrightarrow{\rm b}$ with its tail at point $(1, -2)$ and its head at point $(-2,2)$. Determine the resultant sum vector $\overrightarrow{\rm c}$. Also, give the magnitude and angle of the resultant vector.

# Translate the two vectors to the origin: components = head - tail

u = np.array([5-(-1), 2-3])

v = np.array([-2-1, 2-(-2)])

print("U = ", u)

print("V = ", v)

# calculate the resultant vector R

r = u + v

print("R = ", r)

# calculating magnitude of R from its components using L2 norm

mag = numpy.linalg.norm([r[0], r[1]], ord=2)

mag = (r[0]**2 + r[1]**2)**(1/2)

print("|R| = ", mag)

# calculating angle of resultant vector R

theta_rad = math.atan(r[1]/r[0]) # acos, asin, and atan take a ratio as input and return an angle in radians

theta_deg = theta_rad*(180/math.pi) # so we need to convert it into degrees

# Since, both x and y coordinates are positive, that means the angle exists in the first quadrant

# So no need to add anything

theta_deg = theta_deg + 0

print("Counter-Clockwise angle in degrees from positive x-axis: ", theta_deg)

vectors = [u, v, r]

tails = [(0,0), u, (0,0)]

plot_vector(vectors, tails)

U = [ 6 -1] V = [-3 4] R = [3 3] |R| = 4.242640687119285 Counter-Clockwise angle in degrees from positive x-axis: 45.0

Example 2: Given two vectors, U = 10 m at Φ = 30 degrees and V = 20 m at Φ = 60 degrees, determine their sum. Then calculate the magnitude and the angle of the resultant vector using the component method.

import numpy as np

import math as m

# calculating x and y components of vector U

ux = 10*math.cos(30*math.pi/180) # sin, cos and tan take input angle in radians and return the ratio.

uy = 10*math.sin(30*math.pi/180)

print("Ux: %.2f" %ux, "m")

print("Uy: %.2f" %uy, "m")

# calculating x and y components of vector V

vx = 20*m.cos(60*math.pi/180)

vy = 20*m.sin(60*math.pi/180)

print("Vx: %.2f" %vx, "m")

print("Vy: %.2f" %vy, "m")

# calculate the sum of the two vectors R = U + V

rx = ux + vx

ry = uy + vy

r = np.array([rx, ry])

print("Resultant vector R: ", r)

# calculating magnitude of R from its components using L2 norm

mag = numpy.linalg.norm([rx, ry], ord=2)

mag = (rx**2 + ry**2)**(1/2)

print("|R| = ", mag)

# calculating angle of resultant vector R

theta_rad = math.atan(r[1]/r[0]) # acos, asin, and atan take a ratio as input and return an angle in radians

theta_deg = theta_rad*(180/math.pi) # so we need to convert it into degrees

# Since, both the coordinates are positive, that means the angle exists in the first quadrant

# So the angle is already computed from positive x-axis

theta_deg = math.degrees(theta_rad) # equivalent conversion using math.degrees()

theta_deg = theta_deg + 0

print("Counter-Clockwise angle in degrees from positive x-axis: ", theta_deg)

vectors = [(ux,uy), (vx,vy), (rx,ry)]

tails = [(0,0), (ux,uy), (0,0)]

plot_vector(vectors, tails)

Ux: 8.66 m Uy: 5.00 m Vx: 10.00 m Vy: 17.32 m Resultant vector R: [18.66025404 22.32050808] |R| = 29.093129111764092 Counter-Clockwise angle in degrees from positive x-axis: 50.103909361017095

b. Multiplying a Vector with a Scalar Value (Scaling)

Multiplying a vector by a scalar α changes its magnitude and/or direction: the magnitude is multiplied by |α|, and if α is negative, the direction is reversed.

Multiplying a vector A by a scalar value yields another vector.
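Both rules can be checked numerically. In this sketch (not from the original notebook), each scaled copy of $(-4, -6)$ is compared with the original: its magnitude should equal |α| times the original magnitude, and its direction, found by dividing by its own magnitude (unit vectors are introduced later in this notebook), should match for α > 0 and be exactly opposite for α < 0. The scalars are arbitrary nonzero examples.

```python
import numpy as np

a = np.array([-4, -6])
norm_a = np.linalg.norm(a)
unit_a = a / norm_a                  # direction of a

results = []
for alpha in [3, 0.5, -0.5, -1]:
    scaled = alpha * a
    norm_scaled = np.linalg.norm(scaled)
    # Rule 1: the magnitude is multiplied by |alpha|
    length_ok = np.isclose(norm_scaled, abs(alpha) * norm_a)
    # Rule 2: the direction is kept for alpha > 0 and reversed for alpha < 0
    unit_scaled = scaled / norm_scaled
    direction = "same" if np.allclose(unit_scaled, unit_a) else "reversed"
    results.append((alpha, length_ok, direction))
    print(alpha, scaled, length_ok, direction)
```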

Example 1: Given the vector a = (-4, -6), if you multiply it by $-1/2$, its length (magnitude) is halved and its direction is reversed.

a = np.array([-4, -6])

print ("Vector A: ", a)

mag = numpy.linalg.norm(a, ord=2) # calculate magnitude using L2 norm

print("|A| = ",mag)

# calculating angle of vector A

theta_rad = math.atan(a[1]/a[0])

theta_deg = theta_rad*(180/math.pi)

# Since both coordinates are negative, the vector lies in the third quadrant.

# Since the angle is positive, it is measured counter-clockwise from the negative x-axis,

# so we add 180 degrees to get the counter-clockwise angle from the positive x-axis

theta_deg = theta_deg + 180

print("Counter-Clockwise angle in degrees from positive x-axis: ", theta_deg)

# Multiplying the vector A by -0.5 will give a new vector B

b = a * -0.5

print ("\nVector B: ", b)

# To calculate magnitude using L2 norm

mag = numpy.linalg.norm(b, ord=2)

mag = (b[0]**2 + b[1]**2) **(1/2)

print("|B| = ",mag)

# calculating angle of vector B

theta_rad = m.atan(b[1]/b[0])

theta_deg = theta_rad*(180/m.pi)

# Since both coordinates are positive, the vector lies in the first quadrant,

# and the angle is already measured counter-clockwise from the positive x-axis,

# so we need not add anything

theta_deg = theta_deg + 0

print("Counter-Clockwise angle in degrees from positive x-axis: ", theta_deg)

vectors = [a, b]

plot_vector(vectors)

Vector A: [-4 -6] |A| = 7.211102550927978 Counter-Clockwise angle in degrees from positive x-axis: 236.30993247402023 Vector B: [2. 3.] |B| = 3.605551275463989 Counter-Clockwise angle in degrees from positive x-axis: 56.309932474020215

Example 2: Given the vector a = (1, 1), if you multiply it by $3$, its length (magnitude) is tripled and its direction remains the same.

$$ 3\mathbf{a} = 3\left[ \begin{array}{c} 1 \\ 1 \end{array} \right] = \left[ \begin{array}{c} 3 \\ 3 \end{array} \right] $$

# Given vector A

a = np.array([1, 1])

print ("Vector A: ", a)

# calculate magnitude using L2 norm

mag = numpy.linalg.norm(a, ord=2)

mag = (a[0]**2 + a[1]**2) **(1/2)

print("|A| = ",mag)

# calculating angle of vector A

theta_rad = math.atan(a[1]/a[0]) # acos, asin, and atan take a ratio as input and return an angle in radians

theta_deg = theta_rad*(180/math.pi) # so we need to convert it into degrees

# Since both coordinates are positive, the vector lies in the first quadrant,

# so we need not add anything

theta_deg = theta_deg + 0

print("Counter-Clockwise angle in degrees from positive x-axis: ", theta_deg)

# Multiplying the vector A by 3 will give a new vector

b = a * 3

print ("\nVector B: ", b)

# To calculate magnitude using L2 norm

mag = numpy.linalg.norm(b, ord=2)

mag = (b[0]**2 + b[1]**2) **(1/2)

print("|B| = ",mag)

# calculating angle of vector B

theta_rad = m.atan(b[1]/b[0]) # acos, asin, and atan take a ratio as input and return an angle in radians

theta_deg = theta_rad*(180/m.pi) # so we need to convert it into degrees

# Since both coordinates are positive, the vector lies in the first quadrant,

# so we need not add anything

theta_deg = theta_deg + 0

print("Counter-Clockwise angle in degrees from positive x-axis: ", theta_deg)

vectors = [a, b]

plot_vector(vectors)

Vector A: [1 1] |A| = 1.4142135623730951 Counter-Clockwise angle in degrees from positive x-axis: 45.0 Vector B: [3 3] |B| = 4.242640687119285 Counter-Clockwise angle in degrees from positive x-axis: 45.0

6. Unit Vectors and Unit Basis Vectors

a. Unit Vector

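- Every nonzero vector $\overrightarrow{\rm v}$ in $\mathbb{R}^2$, $\mathbb{R}^3$,..., $\mathbb{R}^n$ has a corresponding unit vector, which points in exactly the same direction as $\overrightarrow{\rm v}$ but has a magnitude of one. (The zero vector has no direction, so it has no unit vector.)

- The unit vector of a vector $\overrightarrow{\rm v}$ is written $\hat{\rm v}$ and is calculated by dividing the vector by its magnitude:

$$\hat{\rm v} = \frac{\overrightarrow{\rm v}}{\lVert\overrightarrow{\rm v}\rVert}$$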

Example 1: Find the unit vector of $\overrightarrow{\rm v} =\begin{bmatrix} 3 \\2 \end{bmatrix}$

$$\hat{\rm v} = \frac{1}{\sqrt{13}}\begin{bmatrix} 3 \\2 \end{bmatrix}\approx\begin{bmatrix} 0.832 \\0.555 \end{bmatrix}$$

v = np.array((3,2))

mag_v = numpy.linalg.norm(v, ord=2)

vhat = (v[0]/mag_v, v[1]/mag_v)

mag_vhat = numpy.linalg.norm(vhat,ord=2)

print("vector v = ", v, " magnitude =", mag_v)

print("Unit vector of v = ", vhat, " magnitude =", mag_vhat)

vectors = [v, vhat]

plot_vector(vectors)

vector v = [3 2] magnitude = 3.605551275463989 Unit vector of v = (0.8320502943378437, 0.5547001962252291) magnitude = 1.0

Note: The unit vector $\hat{\rm v}$ of the given vector $\overrightarrow{\rm v} =(3,2)$ is one unit long and lies right on top of $\overrightarrow{\rm v}$, pointing in the same direction as $\overrightarrow{\rm v}$.

The smaller triangle formed by the unit vector $\hat{\rm v}$ is similar to the larger triangle formed by the vector $\overrightarrow{\rm v}$.

Example 2: Find the unit vector of $\overrightarrow{\rm v} =\begin{bmatrix} 12 \\3 \\-4 \end{bmatrix}$

$$\hat{\rm v} = \frac{1}{\sqrt{12^2+3^2+(-4)^2}}\begin{bmatrix} 12 \\3\\-4 \end{bmatrix} = \frac{1}{13}\begin{bmatrix} 12 \\3\\-4 \end{bmatrix}\approx\begin{bmatrix} 0.923 \\0.231 \\-0.308\end{bmatrix}$$

v = np.array((12, 3, -4))

mag_v = numpy.linalg.norm(v, ord=2)

vhat = (v[0]/mag_v, v[1]/mag_v, v[2]/mag_v)

mag_vhat = numpy.linalg.norm(vhat,ord=2)

print("vector v = ", v, " magnitude =", mag_v)

print("Unit vector of v = ", vhat, " magnitude =", mag_vhat)

vector v = [12 3 -4] magnitude = 13.0 Unit vector of v = (0.9230769230769231, 0.23076923076923078, -0.3076923076923077) magnitude = 1.0
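The two examples repeat the same steps for 2D and 3D vectors. The sketch below generalizes them into one reusable helper. The name `unit_vector` is hypothetical and not part of NumPy. It divides the whole array by its norm in one step, which works in any dimension, and it rejects the zero vector because a vector of length zero has no direction to preserve.

```python
import numpy as np

def unit_vector(v):
    """Return v / |v|; the zero vector has no direction, so it is rejected."""
    v = np.asarray(v, dtype=float)
    norm = np.linalg.norm(v)
    if norm == 0:
        raise ValueError("the zero vector has no unit vector")
    return v / norm

v2 = unit_vector([3, 2])
v3 = unit_vector([12, 3, -4])
print(v2, np.linalg.norm(v2))
print(v3, np.linalg.norm(v3))
```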

b. Basis Vectors¶

- The standard basis vectors are unit vectors pointing along the x-, y-, or z-axis.

- In two-dimensional space $\mathbb{R}^2$, the standard basis vectors are $\hat{i} = (1,0)$ and $\hat{j} = (0,1)$; in three-dimensional space $\mathbb{R}^3$, they are $\hat{i} = (1,0,0)$, $\hat{j} = (0,1,0)$, and $\hat{k} = (0,0,1)$.

- Any vector can be written as a linear combination of the basis vectors.

- For example, the vector $\overrightarrow{\rm v} = (6,4)$ in two-dimensional space $\mathbb{R}^2$ can be expressed in terms of the basis vectors as:

$\overrightarrow{\rm v} \quad=\quad(6,4)\quad=\quad 6\hat{i} + 4\hat{j}\quad=\quad6 \left[ \begin{array}{c} 1 \\ 0 \end{array} \right] + 4 \left[ \begin{array}{c} 0 \\ 1 \end{array} \right] $

- Similarly, the vector $\overrightarrow{\rm v} = (-3,2,-1)$ in three-dimensional space $\mathbb{R}^3$ can be expressed as:

$\overrightarrow{\rm v} \quad=\quad(-3,2,-1)\quad=\quad -3\hat{i} + 2\hat{j} - \hat{k}\quad=\quad -3 \left[ \begin{array}{c} 1 \\ 0 \\0 \end{array} \right] + 2 \left[ \begin{array}{c} 0 \\ 1 \\ 0 \end{array} \right] - \left[ \begin{array}{c} 0 \\ 0 \\ 1 \end{array} \right] $
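The 3D decomposition above can be checked numerically with a small sketch. It assumes the standard basis is taken from the rows of NumPy's 3x3 identity matrix. It rebuilds $\overrightarrow{\rm v} = -3\hat{i} + 2\hat{j} - \hat{k}$ and then reads each coefficient back with a dot product. That second step works because the standard basis vectors are mutually perpendicular unit vectors. The dot product itself is covered later in this notebook.

```python
import numpy as np

# The standard basis vectors of R^3 are the rows of the 3x3 identity matrix
i_hat, j_hat, k_hat = np.eye(3, dtype=int)

# Build v as a linear combination of the basis vectors
v = -3 * i_hat + 2 * j_hat - 1 * k_hat
print(v)

# The coefficient along each basis vector is recovered with a dot product
print(v @ i_hat, v @ j_hat, v @ k_hat)
```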

Example 1: Write the vector $\overrightarrow{\rm v} =(3,2)$ as a linear combination of the basis vectors $\hat{i}$ and $\hat{j}$, and visualize it.

# create two basis vectors

i = numpy.array((1,0))

j = numpy.array((0,1))

# create a new vector as a linear combination (a sum of scaled versions) of the two basis vectors

vec = 3*i + 2*j

vectors = [i, j, 3*i, 2*j, vec]

plot_vector(vectors)

c. Linear combination and Span¶

- A linear combination of vectors is a sum of scaled versions of those vectors, e.g., $m\vec{a} + n\vec{b}$ for scalars $m$ and $n$.

- The span of a set of vectors is the set of all possible linear combinations that can be created from them.

- Using the basis vectors of $\mathbb{R}^2$, for example, we can build every vector in two-dimensional space simply by adding scaled versions of $\hat{i}$ and $\hat{j}$.

- In other words, a span describes the space reachable by linear combinations of some given vectors.

Example 1: Generate a hundred random vectors from linear combinations of the basis vectors $\hat{i}=(1,0)$ and $\hat{j}=(0,1)$. The scalar multiples $m$ and $n$ are random integers from $-8$ to $+8$.

from numpy.random import randint

i = numpy.array((1,0))

j = numpy.array((0,1))

vectors = []

for _ in range(100):

    # randint's upper bound is exclusive, so randint(-8, 9) draws integers from -8 to +8

    m = randint(-8, 9)

    n = randint(-8, 9)

    vectors.append(m*i + n*j)

plot_vector(vectors)

pyplot.title("Hundred random vectors, created from the basis vectors");

If we kept generating more and more linear combinations, they would eventually fill up the entire 2D plane: the span of the basis vectors is the whole of $\mathbb{R}^2$. However, we are not forced to use $\hat{i}$ and $\hat{j}$ as our basis vectors; other pairs of vectors can also form a basis.

- Question: Can we use another pair of vectors as a basis and generate linear combinations?

Example 2: Generate a hundred random vectors from linear combinations of the two vectors $\mathbf{a}=(-2,1)$ and $\mathbf{b}=(1,-3)$. The scalar multiples $m$ and $n$ are random integers from $-8$ to $+8$.

a = numpy.array((-2,1))

b = numpy.array((1,-3))

vectors = []

for _ in range(100):

    # randint's upper bound is exclusive, so randint(-8, 9) draws integers from -8 to +8

    m = randint(-8, 9)

    n = randint(-8, 9)

    vectors.append(m*a + n*b)

plot_vector(vectors)

pyplot.title("Hundred random vectors, created from the vectors a and b");

Example 3: Generate fifty random vectors from linear combinations of the two vectors $\mathbf{c}=(-2,-1)$ and $\mathbf{d}=(1,0.5)$. The scalar multiples $m$ and $n$ are random integers from $-8$ to $+8$.

c = numpy.array((-2,-1))

d = numpy.array((1,0.5))

vectors = []

for _ in range(50):

    m = randint(-8, 9)

    n = randint(-8, 9)

    vectors.append(m*c + n*d)

plot_vector(vectors)

# a raw string keeps the LaTeX backslashes from being read as escape sequences

pyplot.title(r"Fifty linear combinations of the vectors $\mathbf{c}$ and $\mathbf{d}$.");

What's going on?

- The vector $\mathbf{d}$ is a scaled version of vector $\mathbf{c}$ ($\mathbf{d} = -\frac{1}{2}\mathbf{c}$), so we say that the two vectors are collinear.

- Thus, all linear combinations of $\mathbf{c}$ and $\mathbf{d}$ end up on a single line through the origin, which is their span. Their combinations cannot reach the rest of the plane!
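Plots alone cannot decide whether a pair of vectors spans the plane. A numerical check is sketched below, assuming NumPy's np.linalg.matrix_rank and np.linalg.solve. Each pair is stacked as the columns of a 2x2 matrix, and the rank of that matrix equals the dimension of the pair's span. Rank 2 means the pair can serve as a basis for $\mathbb{R}^2$, and rank 1 means the two vectors are collinear. The target vector (3, 2) is an arbitrary choice for illustration.

```python
import numpy as np

a, b = np.array([-2, 1]), np.array([1, -3])
c, d = np.array([-2, -1]), np.array([1, 0.5])

# Stack each pair as the columns of a 2x2 matrix; its rank is the dimension of the span
rank_ab = np.linalg.matrix_rank(np.column_stack([a, b]))
rank_cd = np.linalg.matrix_rank(np.column_stack([c, d]))
print("rank of [a b]:", rank_ab)   # 2: a and b span the whole plane
print("rank of [c d]:", rank_cd)   # 1: c and d span only a line

# Because a and b span R^2, any target vector can be written as m*a + n*b;
# np.linalg.solve finds the coefficients m and n
target = np.array([3, 2])
m, n = np.linalg.solve(np.column_stack([a, b]), target)
print("m =", m, " n =", n, " check:", m * a + n * b)
```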

8. Vector to Vector Multiplication¶

a. Dot Product¶

- The dot product multiplies two vectors of the same size and produces a scalar value.

- It is one of the most commonly used operations in machine learning.

- Mathematically:

- The dot product of two vectors (when their components are known) is the sum of the products of their corresponding components:

$$ u \cdot v = \sum_{i=1}^{n} u_i v_i $$

- Geometrically:

- The dot product of two vectors (when their magnitudes and the angle between them are known) is the product of their magnitudes and the cosine of the angle between them:

$$ u \cdot v = |u| \, |v| \cos\theta $$

- The formula to compute the angle between two vectors using the dot product is:

$$ \theta = \cos^{-1} \frac{u \cdot v}{|u| \, |v|} $$

- The dot product is commutative, i.e., $u \cdot v = v \cdot u$.

- The dot product can be used to determine orthogonality, i.e., to check whether two nonzero vectors are perpendicular to each other: they are perpendicular exactly when their dot product is zero.

- The dot product is also the key tool for calculating vector projections and vector decompositions.

- The dot product is ubiquitous in deep learning: it is performed at every artificial neuron in a deep neural network, which may be made up of millions (or orders of magnitude more) of these neurons.
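The list above says the dot product is the key tool for projections. A minimal sketch of the standard vector projection formula $\text{proj}_v u = \frac{u \cdot v}{v \cdot v} v$ follows. The helper name `project` is hypothetical. The sketch splits u into a component along v and a remainder, and the remainder's dot product with v is zero, which is the decomposition mentioned above. The vectors reuse those from Example 1 below.

```python
import numpy as np

def project(u, v):
    """Vector projection of u onto v: (u . v / v . v) v."""
    u = np.asarray(u, dtype=float)
    v = np.asarray(v, dtype=float)
    return (np.dot(u, v) / np.dot(v, v)) * v

u = np.array([2, 2, -1])
v = np.array([5, -3, 2])
p = project(u, v)          # component of u along v
r = u - p                  # component of u perpendicular to v
print("projection:", p)
print("remainder :", r, " r . v =", np.dot(r, v))
```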

Example 1: Find the dot product of the two vectors A(2, 2, -1) and B(5, -3, 2), and also calculate the angle between them.

$$ u \cdot v = \sum_{i=1}^{n} u_i v_i \qquad \text{OR} \qquad u \cdot v = |u| \, |v| \cos\theta $$

$$ \theta = \cos^{-1} \frac{u \cdot v}{|u| \, |v|} $$

import math

a = np.array([2, 2, -1])

b = np.array([5, -3, 2])

print("a = ", a)

print("b = ", b)

#calculate magnitude of vector A and B

mag1 = numpy.linalg.norm(a, ord=2)

mag2 = numpy.linalg.norm(b, ord=2)

print("|a| = ", mag1)

print("|b| = ", mag2)

# Calculate Dot Product mathematically

ab = np.dot(a,b)

ab = a[0]*b[0] + a[1]*b[1]+a[2]*b[2]

print("\na.dot(b) = ", ab)

theta_rad = math.acos(ab/(mag1*mag2))

theta_deg = theta_rad*(180/math.pi)

print("Angle: ", theta_deg)

# Calculate Dot Product geometrically

print("a.b = |a| |b| cos(𝜃) = ", mag1*mag2*math.cos(theta_rad))

a = [ 2 2 -1] b = [ 5 -3 2] |a| = 3.0 |b| = 6.164414002968976 a.dot(b) = 2 Angle: 83.79145537381414 a.b = |a| |b| cos(𝜃) = 2.0000000000000027

Example 2: Find out the angle between the given two vectors using dot product:

$a = 2\hat{i} + 2\hat{j} + 3\hat{k}$

$b = 6\hat{i} + 3\hat{j} + \hat{k}$

$$ u \cdot v = \sum_{i=1}^{n} u_i v_i \qquad \text{OR} \qquad u \cdot v = |u| \, |v| \cos\theta $$

$$ \theta = \cos^{-1} \frac{u \cdot v}{|u| \, |v|} $$

a = np.array([2, 2, 3])

b = np.array([6, 3, 1])

# printing original vectors

print("a = ", a)

print("b = ", b)

#calculate magnitude of vector A and B

mag1 = numpy.linalg.norm(a, ord=2)

mag2 = numpy.linalg.norm(b, ord=2)

print("|a| = ", mag1)

print("|b| = ", mag2)

# Calculate Dot Product mathematically

ab = np.dot(a,b)

ab = a[0]*b[0] + a[1]*b[1]+a[2]*b[2]

print("\na.dot(b) = ", ab)

theta_rad = math.acos(ab/(mag1*mag2))

theta_deg = theta_rad*(180/math.pi)

print("Angle: ", theta_deg)

# Calculate Dot Product geometrically

print("a.b = |a| |b| cos(𝜃) = ", mag1*mag2*math.cos(theta_rad))

a = [2 2 3] b = [6 3 1] |a| = 4.123105625617661 |b| = 6.782329983125268 a.dot(b) = 21 Angle: 41.32652841215388 a.b = |a| |b| cos(𝜃) = 21.0
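Examples 1 and 2 repeat the same angle calculation. The sketch below packages it into a reusable function. The name `angle_between` is hypothetical. It adds one safeguard: for parallel or nearly parallel vectors, floating-point rounding can push the cosine ratio slightly above 1 or below -1, and math.acos raises ValueError outside [-1, 1]. The function therefore clips the ratio with np.clip first.

```python
import math
import numpy as np

def angle_between(u, v):
    """Angle between two nonzero vectors, in degrees, via cos(theta) = u.v / (|u| |v|)."""
    cos_theta = np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v))
    # Rounding can push cos_theta slightly outside [-1, 1], where math.acos would
    # raise ValueError, so clip it into the valid range first
    cos_theta = float(np.clip(cos_theta, -1.0, 1.0))
    return math.degrees(math.acos(cos_theta))

print(angle_between([2, 2, -1], [5, -3, 2]))
print(angle_between([2, 2, 3], [6, 3, 1]))
print(angle_between([1, 2, 3], [2, 4, 6]))   # parallel vectors
```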

b. Vector Cross Product¶

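Unlike the dot product, the cross product of two vectors in $\mathbb{R}^3$ produces a new vector, perpendicular to both, whose direction is given by the right-hand rule.

Mathematically:

- The cross product of two vectors (when their components are known) is obtained from the determinant of the matrix given below:

$$ \vec{a}\times\vec{b} = \begin{vmatrix} \hat{i} & \hat{j} & \hat{k}\\ a_1 & a_2 & a_3 \\ b_1 & b_2 & b_3 \end{vmatrix} = (a_2 b_3 - a_3 b_2)\hat{i} - (a_1 b_3 - a_3 b_1)\hat{j} + (a_1 b_2 - a_2 b_1)\hat{k} $$

- Geometrically:

- The cross product of two vectors (when their magnitudes and the angle between them are known) is a vector whose magnitude is the product of their magnitudes and the sine of the angle between them:

$$ \vec{a}\times\vec{b} = |a| \, |b| \sin\theta \, \hat{n} $$

Where $\hat{n}$ is the unit vector perpendicular to the plane containing the given two vectors, in the direction given by the right-hand rule.

The formula to compute the angle between two vectors using the cross product is:

$$ \theta = \sin^{-1} \frac{|\vec{a}\times\vec{b}|}{|a| \, |b|} $$

Because $\sin^{-1}$ only returns angles between 0° and 90°, this formula gives the acute angle; if $\vec{a}\cdot\vec{b} < 0$, the actual angle is $180^\circ - \theta$.

- Properties:

- The cross product has zero length when vectors A and B point in the same, or opposite, direction.

- The cross product has maximum length when vectors A and B are at right angles.

- The cross product is NOT commutative; it is anti-commutative, i.e., $\vec{a}\times\vec{b} = -(\vec{b}\times\vec{a})$.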

Example 1: Find the cross product of the two vectors A(3,5,-7) and B(2,-6,4). Then show that the resultant vector is perpendicular to both A and B.

$$ \vec{a}\times\vec{b} = \begin{vmatrix} \hat{i} & \hat{j} & \hat{k}\\ 3 & 5 & -7 \\ 2 & -6 & 4 \end{vmatrix} $$

$$ = (20-42)\hat{i} - (12+14)\hat{j} +(-18-10)\hat{k} $$

$$ = -22\hat{i}-26\hat{j}-28\hat{k} $$

a = np.array([3, 5, -7])

b = np.array([2, -6, 4])

# printing original vectors

print("a = ", a)

print("b = ", b)

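# Calculate Cross Product

ab = np.cross(a,b)

print("a x b = ", ab)

# calculate the magnitudes of vectors a, b and a x b

mag1 = numpy.linalg.norm(a, ord=2)

mag2 = numpy.linalg.norm(b, ord=2)

mag3 = numpy.linalg.norm(ab, ord=2)

print("\n|a| = ", mag1)

print("|b| = ", mag2)

print("|axb| = ", mag3)

# Calculate the angle between the two vectors from |a x b| = |a| |b| sin(theta)

# Note: asin() only returns angles in [0, 90] degrees. Here a.b = -52 < 0, so the

# actual angle between a and b is 180 - 40.29 = 139.71 degrees

theta_rad = math.asin(mag3/(mag1*mag2))

theta_deg = math.degrees(theta_rad) # same as theta_rad*(180/math.pi)

print("Angle: ", theta_deg)

# The cross product is perpendicular to both a and b, so both dot products are zero

print("\n(a x b).a = ", np.dot(ab, a))

print("(a x b).b = ", np.dot(ab, b))

a = [ 3 5 -7] b = [ 2 -6 4] a x b = [-22 -26 -28] |a| = 9.1104335791443 |b| = 7.483314773547883 |axb| = 44.090815370097204 Angle: 40.29462137837708 (a x b).a = 0 (a x b).b = 0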
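Two points from the cross-product text can be checked with a short sketch: anti-commutativity, and the asin ambiguity for obtuse angles. The sketch uses Python's standard math.atan2. The norm of the cross product equals $|a||b|\sin\theta$ and the dot product equals $|a||b|\cos\theta$, so atan2 of the pair recovers θ over the full range [0°, 180°]. This is a common alternative to the sine formula, not the method used in the cell above.

```python
import math
import numpy as np

a = np.array([3, 5, -7])
b = np.array([2, -6, 4])
axb = np.cross(a, b)

# Anti-commutativity: swapping the operands flips the direction of the result
print("b x a =", np.cross(b, a), " -(a x b) =", -axb)

sin_part = np.linalg.norm(axb)     # |a| |b| sin(theta)
cos_part = np.dot(a, b)            # |a| |b| cos(theta)

# asin() only sees sin(theta) and cannot tell theta from 180 - theta
theta_asin = math.degrees(math.asin(sin_part / (np.linalg.norm(a) * np.linalg.norm(b))))
# atan2(y, x) also uses the sign of the dot product, giving an angle in [0, 180]
theta_atan2 = math.degrees(math.atan2(sin_part, cos_part))
print("asin-based angle :", theta_asin)
print("atan2-based angle:", theta_atan2)
```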

c. Orthogonal and Orthonormal Vectors¶

Orthogonal Vector:

- A normal vector is a vector that is perpendicular (makes an angle of 90°) to a given surface, vector, or axis.

- Two vectors are said to be orthogonal if their dot product is equal to zero:

$$ \vec{a}\cdot\vec{b} = |a| \, |b| \cos 90^\circ = 0 $$

- Equivalently (for nonzero vectors), two vectors are orthogonal if the magnitude of their cross product is equal to $|a| \, |b|$:

$$ |\vec{a}\times\vec{b}| = |a| \, |b| \sin 90^\circ = |a| \, |b| $$

Orthonormal Vector:

- Orthonormal vectors are orthogonal vectors that each have a magnitude of one.

- The standard basis vectors $\hat{i}$, $\hat{j}$, and $\hat{k}$ are an example of orthonormal vectors.

Example 1: Determine whether the two vectors A(6, -2, -1) and B(2, 5, 2) are perpendicular to each other. (If the vectors are perpendicular to each other, their dot product is zero.) $$ u \cdot v = \sum_{i=1}^{n} u_i v_i $$

a = np.array([6, -2, -1])

b = np.array([2, 5, 2])

# printing original vectors

print("a = ", a)

print("b = ", b)

# Calculating Dot Product of Two Vectors

ab = a.dot(b)

ab = a[0]*b[0] + a[1]*b[1]+a[2]*b[2]

print("a.b = ", ab)

mag1 = numpy.linalg.norm(a, ord=2)

mag2 = numpy.linalg.norm(b, ord=2)

theta_rad = math.acos(ab/(mag1*mag2))

theta_deg = math.degrees(theta_rad) #theta_rad*(180/m.pi)

print("Angle: ", theta_deg)

# For perpendicular vectors, |a x b| = |a| |b| sin(90) = |a| |b|

lhs = numpy.linalg.norm(np.cross(a, b), ord=2)

rhs = mag1*mag2

print ("|a| |b| = ", rhs)

print ("|a x b| equals |a| |b|: ", np.isclose(lhs, rhs))

a = [ 6 -2 -1] b = [2 5 2] a.b = 0 Angle: 90.0 |a| |b| = 36.78314831549904 |a x b| equals |a| |b|: True
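Example 1 shows that A and B are orthogonal, but they are not orthonormal because neither has length one. The sketch below shows the straightforward fix, which simply applies the unit-vector formula from earlier. Dividing each vector by its own magnitude keeps the angle between them at 90° and gives each a magnitude of one.

```python
import numpy as np

a = np.array([6, -2, -1])
b = np.array([2, 5, 2])

# Dividing each orthogonal vector by its magnitude keeps them perpendicular
# and gives them length one, i.e., it makes them orthonormal
a_hat = a / np.linalg.norm(a)
b_hat = b / np.linalg.norm(b)

print("a_hat . b_hat =", np.dot(a_hat, b_hat))
print("|a_hat| =", np.linalg.norm(a_hat), " |b_hat| =", np.linalg.norm(b_hat))
```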

Section II: (Overview of Linear Algebra: Matrices) ¶

"Unfortunately, no one can be told what the Matrix is. You have to see it for yourself."

-Morpheus-

import numpy as np

import numpy.linalg

import math

import scipy

from matplotlib import pyplot as plt

from plot_helper import * # Helper functions: plot_vector, plot_linear_transformation, plot_linear_transformations

1. Overview of Matrices¶

- A matrix is a two-dimensional array of scalar values with one or more rows and one or more columns. In NumPy, matrices are represented as two-dimensional arrays.

- The numbers, variables, or expressions inside the matrix are called the entries or elements of a matrix.

- The notation for a matrix is often an uppercase letter, such as A, and the dimensions of a matrix are denoted as m × n for the number of rows and the number of columns, respectively.

- The elements of a matrix are referred to by their row and column subscripts, such as $a_{i,j}$.

- Matrices are a foundational element of linear algebra. Matrices are used throughout the field of machine learning in the description of algorithms and processes such as the input data variable (X) when training an algorithm.
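The subscript notation $a_{i,j}$ and NumPy indexing differ by one, which is a common source of off-by-one mistakes. The short sketch below uses the matrix defined in the next example. Mathematical subscripts start at 1 and NumPy indices start at 0, so the element $a_{i,j}$ is A[i-1, j-1].

```python
import numpy as np

A = np.array([[-2, 1, 3],
              [1, -3, 2],
              [-3, 2, 1]])

m, n = A.shape              # m rows, n columns
# Mathematical subscripts start at 1, NumPy indices start at 0,
# so the element a_{i,j} is A[i-1, j-1]
a_1_3 = A[0, 2]             # row 1, column 3
a_3_1 = A[2, 0]             # row 3, column 1
print("m x n =", m, "x", n)
print("a_{1,3} =", a_1_3, " a_{3,1} =", a_3_1)
```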

2. Matrices and Their Types¶

Example: Defining a 3x3 matrix using NumPy, $A =$ $\begin{bmatrix} -2 & 1 & 3\\ 1 & -3 & 2\\ -3 & 2 & 1 \end{bmatrix} $

A = np.array([[-2, 1, 3], [1, -3, 2], [-3, 2, 1]])

print("Matrix A = \n", A)

print("A.ndim: ", A.ndim)

print("A.shape: ", A.shape)

print("A.size: ", A.size)

Matrix A = [[-2 1 3] [ 1 -3 2] [-3 2 1]] A.ndim: 2 A.shape: (3, 3) A.size: 9

a. Row Vector¶

- A row vector is a matrix with exactly one row and one or more columns.

- A row vector with one row and n columns is shown below:

$\begin{bmatrix} b_1 & b_2 & b_3 & \cdots & b_n\\ \end{bmatrix} $

- Let us create a row vector having one row and three columns

B = np.array([-2, 1, 3])

print("Matrix B = \n", B)

print("B.ndim: ", B.ndim)

print("B.shape: ", B.shape)

# Taking the transpose of a matrix means to interchange the rows with columns.

# The rows become columns and the columns become rows.

# Note: B is a 1-D array of shape (3,), so .T has no effect on it.

# A true 1x3 row vector is the 2-D array np.array([[-2, 1, 3]]).

Bt = B.T

print("\nTranspose of Matrix B = \n", Bt)

print("Bt.ndim: ", Bt.ndim)

print("Bt.shape: ", Bt.shape)

Matrix B = [-2 1 3] B.ndim: 1 B.shape: (3,) Transpose of Matrix B = [-2 1 3] Bt.ndim: 1 Bt.shape: (3,)

b. Column Vector¶

- A column vector is a matrix with exactly one column and one or more rows.

- A column vector with one column and n rows is shown below:

$\begin{bmatrix} b_1 \\ b_2 \\ b_3 \\ \vdots \\ b_n\\ \end{bmatrix} $

- Let us create a column vector having one column and three rows

B = np.array([[-2], [1], [3]])

print("Matrix B = \n", B)

print("B.ndim: ", B.ndim)

print("B.shape: ", B.shape)

# Taking the transpose of a matrix means to interchange the rows with columns.

# The rows become columns and the columns become rows.

Bt = B.T

print("\nTranspose of Matrix B = \n", Bt)

print("Bt.ndim: ", Bt.ndim)

print("Bt.shape: ", Bt.shape)

Matrix B = [[-2] [ 1] [ 3]] B.ndim: 2 B.shape: (3, 1) Transpose of Matrix B = [[-2 1 3]] Bt.ndim: 2 Bt.shape: (1, 3)
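Comparing the two examples, the row-vector example used a 1-D array, so .T had no effect, while the 2-D column vector transposed as expected. As a sketch of a common NumPy idiom, the code below turns a 1-D array into an explicit 1x3 row vector or 3x1 column vector with reshape, using -1 to let NumPy infer that dimension. Indexing with np.newaxis gives the same shapes.

```python
import numpy as np

b = np.array([-2, 1, 3])      # 1-D array, shape (3,): neither a row nor a column

row = b.reshape(1, -1)        # 1 x 3 row vector
col = b.reshape(-1, 1)        # 3 x 1 column vector
print(row.shape, col.shape)

# For 2-D arrays, .T really swaps rows and columns
print(row.T.shape, col.T.shape)

# np.newaxis (an alias of None) gives the same shapes
print(b[np.newaxis, :].shape, b[:, np.newaxis].shape)
```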

c. Zero Matrix¶

- A matrix whose elements are all zero is a zero matrix, e.g., $A =$

$\begin{bmatrix} 0 & 0 & 0\\ 0 & 0 & 0 \end{bmatrix} $

- We can create a zero matrix using the numpy.zeros() method as shown below:

A = np.zeros((2,3), dtype=np.int16)

print("Matrix A = \n", A)

Matrix A = [[0 0 0] [0 0 0]]

d. Ones Matrix¶

- A matrix whose elements are all ones is a ones matrix, e.g., $A =$

$\begin{bmatrix} 1 & 1\\ 1 & 1\\ 1 & 1 \end{bmatrix} $

- We can create a ones matrix using the numpy.ones() method as shown below:

A = np.ones((2, 3), dtype=int)

print("Matrix A = \n", A)

Matrix A = [[1 1 1] [1 1 1]]

e. Random Integer Matrix¶

- To create a matrix of a given size filled with random integers from low (inclusive) to high (exclusive), we can use the numpy.random.randint(low, high, size) method.

A = np.random.randint(0, 10, size =(5, 3))

print ("Matrix A = \n", A)

Matrix A = [[2 2 3] [0 2 4] [1 1 6] [7 0 3] [1 3 4]]
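The matrix above changes on every run. A sketch of a reproducible alternative follows, using NumPy's newer Generator API. numpy.random.default_rng(seed) creates a seeded generator, and its integers(low, high, size) method also excludes high by default. The seed value 42 is an arbitrary choice. Two generators created with the same seed produce the same matrix.

```python
import numpy as np

# Two generators created with the same seed produce the same numbers
rng1 = np.random.default_rng(42)
rng2 = np.random.default_rng(42)

A1 = rng1.integers(0, 10, size=(5, 3))   # integers in [0, 10): high is exclusive
A2 = rng2.integers(0, 10, size=(5, 3))
print(A1)
print("Same matrix:", np.array_equal(A1, A2))
```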

f. Square Matrix¶

- An n × n matrix is said to be a square matrix of order n.

- In simple words, when the number of rows and the number of columns in a matrix are equal, the matrix is called a square matrix.

$A =$ $\begin{bmatrix} 6 & 3 & 8\\ 2 & 1 & 9\\ 8 & 2 & 7 \end{bmatrix} $

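# The 3x3 square matrix A shown above

A = np.array([[6, 3, 8], [2, 1, 9], [8, 2, 7]])

# A random square matrix can be created with np.random.randint(1, 10, size=(3, 3));

# to make it reproducible, call np.random.seed() BEFORE generating the numbers

print ("Matrix A = \n", A)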

Matrix A = [[6 3 8] [2 1 9] [8 2 7]]

g. Symmetric Matrix¶

- A symmetric matrix is a square matrix whose top-right triangle is the mirror image of its bottom-left triangle across the main diagonal, i.e., $a_{i,j} = a_{j,i}$ for all $i$ and $j$.

- Matrix A is a 3 × 3 symmetric matrix:

$A =$ $\begin{bmatrix} 4 & 1 & 7\\ 1 & -3 & 5\\ 7 & 5 & 2 \end{bmatrix} $

- In the above 3 × 3 square matrix A, the entries 4, -3, and 2 lie on the principal diagonal and are called the diagonal elements.

- The transpose of a symmetric matrix is the matrix itself: $A = A^T$.

A = np.array([[4, 1, 7], [1, -3, 5], [7, 5, 2]])

print("Matrix A = \n", A)

# taking transpose of the matrix

print('\nTranspose of A = \n', A.T)

np.transpose(A)

Matrix A = [[ 4 1 7] [ 1 -3 5] [ 7 5 2]] Transpose of A = [[ 4 1 7] [ 1 -3 5] [ 7 5 2]]

array([[ 4, 1, 7],

[ 1, -3, 5],

[ 7, 5, 2]])

A.T

array([[ 4, 1, 7],

[ 1, -3, 5],

[ 7, 5, 2]])
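Comparing a matrix with its transpose, as done above, can be turned into a symmetry test. The sketch below does this, and the helper name `is_symmetric` is hypothetical. It also shows a standard fact: for any square matrix B, the sum $B + B^T$ is symmetric.

```python
import numpy as np

def is_symmetric(M):
    """A matrix is symmetric when it is square and equal to its transpose."""
    M = np.asarray(M)
    return M.ndim == 2 and M.shape[0] == M.shape[1] and np.array_equal(M, M.T)

A = np.array([[4, 1, 7], [1, -3, 5], [7, 5, 2]])
B = np.array([[1, 2], [3, 4]])

print(is_symmetric(A), is_symmetric(B))

# For any square matrix B, the sum B + B^T is symmetric
print(is_symmetric(B + B.T))
```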

h. Triangular Matrix¶

- Given a matrix A having m rows and n columns:

- The upper triangular matrix of matrix $A$ keeps the values on and above the main diagonal, while the remaining elements (below the diagonal) are filled with zeros.

$\begin{pmatrix} a_{1,1} & a_{1,2} & a_{1,3}& \cdots & a_{1,n} \\ 0 & a_{2,2} & a_{2,3}& \cdots & a_{2,n} \\ 0 & 0 & a_{3,3}&\cdots & a_{3,n} \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & 0& \cdots & a_{m,n} \end{pmatrix} $

- The lower triangular matrix of matrix $A$ keeps the values on and below the main diagonal, while the remaining elements (above the diagonal) are filled with zeros.

$ \begin{pmatrix} a_{1,1} & 0 & 0& \cdots & 0 \\ a_{2,1} & a_{2,2} & 0& \cdots & 0 \\ a_{3,1} & a_{3,2} & a_{3,3}&\cdots & 0 \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ a_{m,1} & a_{m,2} & a_{m,3}& \cdots & a_{m,n} \end{pmatrix} $

A = np.array([[6, 3, 8, 5], [2, 1, 9, 7], [8, 2, 4, 7], [3, 1, 5, 8]])

print("Matrix A: \n", A)

# Lower triangular matrix of matrix A

lower = np.tril(A)

print("Lower Triangular Matrix of A:\n", lower)

# Upper triangular matrix of matrix A

upper = np.triu(A)

print("Upper Triangular Matrix of A: \n", upper)

Matrix A: [[6 3 8 5] [2 1 9 7] [8 2 4 7] [3 1 5 8]] Lower Triangular Matrix of A: [[6 0 0 0] [2 1 0 0] [8 2 4 0] [3 1 5 8]] Upper Triangular Matrix of A: [[6 3 8 5] [0 1 9 7] [0 0 4 7] [0 0 0 8]]
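np.tril and np.triu also accept an offset argument k, which shifts the diagonal used as the cut-off. The sketch below uses np.tril(A, k=-1) to keep only the entries strictly below the main diagonal. Adding that to the upper triangular part, which includes the diagonal, rebuilds the original matrix exactly.

```python
import numpy as np

A = np.array([[6, 3, 8, 5], [2, 1, 9, 7], [8, 2, 4, 7], [3, 1, 5, 8]])

# k shifts the cut-off diagonal: np.tril(A, k=-1) keeps only the entries
# strictly below the main diagonal
strict_lower = np.tril(A, k=-1)
upper = np.triu(A)

# The strictly lower part plus the upper part (which includes the diagonal) rebuilds A
print(strict_lower + upper)
print(np.array_equal(strict_lower + upper, A))
```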

i. Diagonal Matrix¶

- A diagonal matrix is a square matrix in which all entries that are not on the main diagonal are zero.

- A diagonal matrix is a special square matrix that is BOTH upper and lower triangular since all elements, whether above or below the principal diagonal, are 0.

- A diagonal matrix is often denoted with the variable D.

There are two ways to represent a diagonal matrix:

- As a full matrix.

- As a vector of values on the main diagonal.

NumPy provides the function np.diag() that can extract the diagonal of an existing matrix as a vector, or transform a vector into a diagonal matrix.

A = np.random.randint(1, 10, size =(4, 4))

print("Matrix A : \n", A)

# Extract a diagonal vector from a matrix

d = np.diag(A)

print("\nDiagonal Vector = ", d)

# create a diagonal matrix from the diagonal vector

D = np.diag(d)

print("\nDiagonal Matrix : \n", D)

Matrix A : [[7 9 8 4] [3 2 3 7] [9 2 8 8] [3 8 8 9]] Diagonal Vector = [7 2 8 9] Diagonal Matrix : [[7 0 0 0] [0 2 0 0] [0 0 8 0] [0 0 0 9]]
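Diagonal matrices are cheap to work with because multiplying by one simply scales each component independently. The sketch below shows this with the diagonal values from the output above and an arbitrary vector x. $Dx$ gives the same result as the element-wise product of the diagonal vector with x.

```python
import numpy as np

d = np.array([7, 2, 8, 9])
D = np.diag(d)
x = np.array([1, -1, 2, 0.5])

# Multiplying by a diagonal matrix scales each component of x by the
# matching diagonal entry, which is the same as an element-wise product
print(D @ x)
print(d * x)
```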

j. Identity Matrix¶

- An identity matrix $I_n$, is a square matrix in which all the entries in the principal diagonal are 1 and all other elements are 0.

Properties of Identity Matrix:

- A vector of length n remains unchanged when multiplied with $I_n$.

- Multiplying a matrix or vector by its compatible identity matrix results in the matrix itself. $AI = A$

- Multiplying a matrix by its inverse results in an identity matrix of the same order. $AA^{-1} = I$

- The trace (sum of the elements on the principal diagonal) of an identity matrix is equal to the identity matrix's order.

- The determinant of an identity matrix is always equal to 1.

The numpy.eye(N, M, k, dtype) function is used to create an identity matrix, where N is the number of rows and M is the number of columns.

- The default value of k is zero, which means the main diagonal; a positive value refers to an upper diagonal, and a negative value refers to a lower diagonal. (With k ≠ 0 the result is no longer an identity matrix; it has ones on the chosen off-diagonal.)

- The default dtype is float.

I4 = np.eye(4, 4)

print("4x4 Identity Matrix:\n", I4)

4x4 Identity Matrix: [[1. 0. 0. 0.] [0. 1. 0. 0.] [0. 0. 1. 0.] [0. 0. 0. 1.]]

U = np.eye(4, 4, 1, dtype=np.uint8)

print("Ones on the first upper diagonal (k=1):\n", U)

Ones on the first upper diagonal (k=1): [[0 1 0 0] [0 0 1 0] [0 0 0 1] [0 0 0 0]]

L = np.eye(4, 4, -1, dtype=np.uint8)

print("Ones on the first lower diagonal (k=-1):\n", L)

Ones on the first lower diagonal (k=-1): [[0 0 0 0] [1 0 0 0] [0 1 0 0] [0 0 1 0]]
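The identity-matrix properties listed above can each be checked in a few lines. The sketch below uses np.trace and np.linalg.det. The 4x4 matrix A and the vector x are arbitrary examples chosen for illustration.

```python
import numpy as np

n = 4
I = np.eye(n)
A = np.arange(1, 17).reshape(n, n)
x = np.array([3, -1, 2, 5])

print(np.array_equal(I @ A, A), np.array_equal(A @ I, A))   # IA = AI = A
print(np.array_equal(I @ x, x))                               # I x = x
print(np.trace(I), np.linalg.det(I))                          # trace = n, det = 1
```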

k. Scalar Matrix¶

- A scalar matrix is a type of square matrix in which its principal diagonal elements are all equal and off-diagonal elements are all 0.

- It is a scalar multiple of an identity matrix, i.e., $cI_n$ for some scalar $c$.

- Some examples of 2x2 scalar matrices are given below:

$\begin{bmatrix} 2 & 0\\ 0 & 2 \end{bmatrix} \hspace{2 cm}\begin{bmatrix} -3 & 0\\ 0 & -3 \end{bmatrix} \hspace{2 cm}\begin{bmatrix} 4 & 0\\ 0 & 4 \end{bmatrix} $

- Some examples of 3x3 scalar matrices are given below:

$\begin{bmatrix} 4 & 0 & 0\\ 0 & 4 & 0\\ 0 & 0 & 4 \end{bmatrix} \hspace{2 cm}\begin{bmatrix} -3 & 0 & 0\\ 0 & -3 & 0\\ 0 & 0 & -3 \end{bmatrix} \hspace{2 cm}\begin{bmatrix} 2 & 0 & 0\\ 0 & 2 & 0\\ 0 & 0 & 2 \end{bmatrix} $

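# Use a signed integer dtype: np.uint8 cannot hold negative values, and depending on the

# NumPy version, multiplying a uint8 array by -3 either changes the result dtype or raises an error

A = np.eye(3, 3, dtype=int)

print("Scalar Matrix A:\n", 2*A)

B = np.eye(4, 4, dtype=int)

print("Scalar Matrix B:\n", -3*B)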

Scalar Matrix A: [[2 0 0] [0 2 0] [0 0 2]] Scalar Matrix B: [[-3 0 0 0] [ 0 -3 0 0] [ 0 0 -3 0] [ 0 0 0 -3]]
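Because a scalar matrix is $cI$, multiplying any compatible matrix by it (on either side) has the same effect as multiplying every element by the scalar c. The sketch below checks this with an arbitrary 3x3 matrix and c = -3.

```python
import numpy as np

c = -3
S = c * np.eye(3, dtype=int)          # scalar matrix c*I
A = np.array([[1, 2, 0], [4, -1, 3], [2, 2, 5]])

# Multiplying by the scalar matrix cI has the same effect as multiplying by c
print(S @ A)
print(np.array_equal(S @ A, c * A), np.array_equal(A @ S, c * A))
```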

l. Orthogonal Matrix¶

- An orthogonal/orthonormal matrix is a type of square matrix whose column vectors and row vectors are orthonormal vectors, i.e., are mutually perpendicular and have magnitude equal to 1.

- An Orthogonal matrix is often denoted as uppercase $Q_{n,n}$.

$\hspace{3 cm} Q_{2,2} = \begin{bmatrix} 1 & 0\\ 0 & -1 \end{bmatrix}$ $\hspace{3 cm}Q_{3,3} = \begin{bmatrix} 1 & 0 & 0\\ 0 & -1 & 0 \\ 0 & 0 & 1 \end{bmatrix}$

- Properties:

- An orthogonal matrix is not necessarily symmetric; for example, the 90° rotation matrix $\begin{bmatrix} 0 & -1\\ 1 & 0 \end{bmatrix}$ is orthogonal but not symmetric. Every identity matrix, however, is an orthogonal matrix.

- The product of two orthogonal matrices is also an orthogonal matrix.$\hspace{.5 cm} Q_1 Q_2 = Q_3$

- The transpose of an orthogonal matrix is also an orthogonal matrix.$\hspace{.5 cm} Q_1^T = Q_2$

- When an orthogonal matrix is multiplied by its transpose, the result is an identity matrix.$\hspace{.5 cm} Q Q^T = Q^T Q = I$

- A matrix is orthogonal if and only if its transpose is equal to its inverse.

- The determinant of an orthogonal matrix is always +1 or -1.

- The eigenvalues of an orthogonal matrix all have absolute value 1; the real ones are ±1.

- Orthogonal matrices are widely used for linear transformations such as rotations, reflections, and permutations.

Q1 = np.array([ [1, 0, 0],[0, -1, 0], [0,0,1]])

Q2 = np.array([ [-1, 0, 0],[0, 1, 0], [0,0,1]])

print("Q1: \n", Q1)

print("Q2: \n", Q2)

# The product of two orthogonal matrices is also an orthogonal matrix

print("\nQ1.Q2 = \n", np.dot(Q1,Q2))

# The transpose of an orthogonal matrix is also an orthogonal matrix

print("\nQ1.T = \n", Q1.T)

# When an orthogonal matrix is multiplied by its transpose, the result is an identity matrix

print("\nnp.dot(Q1, Q1.T) = \n", np.dot(Q1,(Q1.T)))

Q1: [[ 1 0 0] [ 0 -1 0] [ 0 0 1]] Q2: [[-1 0 0] [ 0 1 0] [ 0 0 1]] Q1.Q2 = [[-1 0 0] [ 0 -1 0] [ 0 0 1]] Q1.T = [[ 1 0 0] [ 0 -1 0] [ 0 0 1]] np.dot(Q1, Q1.T) = [[1 0 0] [0 1 0] [0 0 1]]
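Q1 and Q2 are both diagonal reflections, and therefore symmetric. The sketch below uses a 2D rotation by 90° to show that orthogonality does not require symmetry. It checks the listed properties numerically with np.allclose: $R^T R = I$, $R^T = R^{-1}$, and $|\det R| = 1$. Tolerance-based comparisons are used because cos(π/2) evaluates to a tiny nonzero number in floating point.

```python
import numpy as np

theta = np.pi / 2
# 2D rotation by 90 degrees: orthogonal, but not symmetric
R = np.array([[np.cos(theta), -np.sin(theta)],
              [np.sin(theta),  np.cos(theta)]])

print("R =\n", np.round(R, 10))
print("Symmetric:", np.allclose(R, R.T))
print("R^T R = I:", np.allclose(R.T @ R, np.eye(2)))
print("R^T = R^-1:", np.allclose(R.T, np.linalg.inv(R)))
print("det(R) =", np.linalg.det(R))
```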

3. Matrix Operations¶

a. Matrix Addition¶

- Two matrices of the same order (the same number of rows and columns) can be added together to create a third matrix of the same order.

- It is element by element addition and can be performed in Python using the plus operator on two NumPy arrays as shown:

A = np.random.randint(-5, 6, size =(3, 3))

B = np.random.randint(-5, 6, size =(3, 3))

print("Matrix A : \n", A)

print("Matrix B : \n", B)

# adding two matrices

C = A + B

print("\nA + B = \n", C)

Matrix A : [[-2 -2 0] [ 2 3 -2] [ 0 3 -4]] Matrix B : [[-2 -3 -3] [ 3 -3 -2] [ 4 4 4]] A + B = [[-4 -5 -3] [ 5 0 -4] [ 4 7 0]]

b. Matrix-Scalar Multiplication¶

- A matrix can be multiplied by a scalar. This can be represented using the dot notation between the matrix and the scalar.

- The result is a matrix with the same size as the parent matrix where each element of the matrix is multiplied by the scalar value.

A = np.random.randint(1, 6, size =(3, 3))

print("Matrix A : \n", A)

b = 2

C = b*A

print("\n b*A: \n", C)

Matrix A : [[2 4 5] [5 4 5] [5 5 1]] b*A: [[ 4 8 10] [10 8 10] [10 10 2]]

c. Matrix Multiplication (Matrix Hadamard Product)¶

- Two matrices of the same size can be multiplied together element by element; this is often called element-wise matrix multiplication or the Hadamard product.

- It is not the operation usually meant by "matrix multiplication", so a different operator is often used: a circle, $\odot$.

- As with element-wise addition and subtraction, element-wise multiplication multiplies the corresponding elements of the two parent matrices to calculate the values in the new matrix.

- We can implement this in Python using the asterisk (*) operator directly on the two NumPy arrays.

A = np.random.randint(1, 6, size =(2, 3))

B = np.random.randint(1, 6, size =(2, 3))

print("Matrix A : \n", A)

print("Matrix B : \n", B)

# Matrices Hadamard product

C = A * B

# printing product of two matrices

print("\n A * B = \n", C)

Matrix A : [[4 4 4] [1 5 3]] Matrix B : [[1 2 5] [5 4 3]] A * B = [[ 4 8 20] [ 5 20 9]]

d. Matrix Multiplication (Matrix Dot Product)¶

- Matrix multiplication, also called the matrix dot product, is more complicated than the previous operations and involves a rule, as not all matrices can be multiplied together.

- The rule for matrix multiplication is: "the number of columns in the first matrix must equal the number of rows in the second matrix". If A is an m × n matrix and B is an n × k matrix, the result is a new matrix with m rows and k columns.

- The intuition for matrix multiplication is that we are calculating the dot product between each row of matrix A and each column of matrix B.

- In Python, matrix multiplication can be performed using the numpy.dot() function or the newer @ operator, available since Python 3.5.

A = np.array([ [1, 2],[3, 4], [5, 6]])

B = np.array([ [1, 2],[3, 4]])

print("Matrix A = \n", A)

print("\nMatrix B = \n", B)

# multiply matrices using dot function

C = np.dot(A,B)

# print dot product matrix

print("\n A.dot(B) = \n", C)

# multiply matrices with @ operator

D = A @ B

# print dot product matrix

print("\nA @ B = \n", D)

Matrix A = [[1 2] [3 4] [5 6]] Matrix B = [[1 2] [3 4]] A.dot(B) = [[ 7 10] [15 22] [23 34]] A @ B = [[ 7 10] [15 22] [23 34]]
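The row-by-column intuition described above can be written out explicitly. The sketch below is a teaching version, and the function name `matmul_rows_by_cols` is hypothetical. It computes each entry C[i, j] as the dot product of row i of A and column j of B, enforces the columns-equal-rows rule, and matches A @ B. In practice the built-in @ operator is far faster than Python loops.

```python
import numpy as np

def matmul_rows_by_cols(A, B):
    """Matrix product written out as dot products of rows of A with columns of B."""
    m, n = A.shape
    n2, k = B.shape
    if n != n2:
        raise ValueError("columns of A must equal rows of B")
    C = np.zeros((m, k), dtype=np.result_type(A, B))
    for i in range(m):
        for j in range(k):
            C[i, j] = np.dot(A[i, :], B[:, j])   # row i of A . column j of B
    return C

A = np.array([[1, 2], [3, 4], [5, 6]])   # 3 x 2
B = np.array([[1, 2], [3, 4]])           # 2 x 2

print(matmul_rows_by_cols(A, B))         # 3 x 2 result
print(np.array_equal(matmul_rows_by_cols(A, B), A @ B))
```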

e. Matrix-Vector Multiplication¶

- A matrix and a vector can be multiplied together as long as the rule of matrix multiplication is observed. Specifically, that the number of columns in the matrix must equal the number of items in the vector.

- As with matrix multiplication, the operation can be written using the dot notation.

- Because the vector only has one column, the result is always a vector.

- In Python, a matrix can be multiplied with a vector using the numpy.dot() function or the newer @ operator, available since Python 3.5.

A = np.array([ [1, 2],[3, 4], [5, 6]])

v = np.array([2, 3])

print("Matrix A = \n", A)

print("\nMatrix v = \n", v)

C = A.dot(v)

print("\n A.dot(v) = \n", C)

D = A @ v

# print dot product matrix

print("\nA @ v = \n", D)

Matrix A = [[1 2] [3 4] [5 6]] Matrix v = [2 3] A.dot(v) = [ 8 18 28] A @ v = [ 8 18 28]
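Besides row-by-row dot products, the matrix-vector product has a second, equivalent reading. $Av$ is a linear combination of the columns of A, weighted by the entries of v. This connects it to the linear combinations in Section I, and the short sketch below confirms it for the example above.

```python
import numpy as np

A = np.array([[1, 2], [3, 4], [5, 6]])
v = np.array([2, 3])

# A @ v is a linear combination of the columns of A, weighted by the entries of v
combo = v[0] * A[:, 0] + v[1] * A[:, 1]
print(combo)
print(np.array_equal(combo, A @ v))
```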

f. Frobenius Norm¶

- We have already talked about norms with respect to vectors and saw how they are used to calculate a vector's magnitude (its distance from the origin).

- Similar to the L2 norm of a vector, the Frobenius norm of a matrix is calculated as the square root of the sum of the absolute squares of its elements.

- In simple words, the Frobenius norm of a matrix is the L2 norm of all its elements taken as one long vector; equivalently, it is the square root of the sum of the squared magnitudes of its column vectors.

$\left\lVert A \right\rVert_F$ $=$ $\sqrt{\sum_{i,j} a_{i,j}^2}$

A = np.array([ [0, 2, 1],[2, 0, 0]])

print("Matrix A = \n", A)

f = np.linalg.norm(A, ord=None)

print("\n Frobenius Norm =", f)

Matrix A = [[0 2 1] [2 0 0]] Frobenius Norm = 3.0
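The equivalent descriptions of the Frobenius norm can be checked side by side, as in the sketch below. The last line passes ord='fro' to np.linalg.norm, which NumPy accepts for 2-D arrays and which matches the default ord=None used above. All four values should equal 3.0 for this matrix.

```python
import numpy as np

A = np.array([[0, 2, 1], [2, 0, 0]])

manual = np.sqrt(np.sum(A**2))                       # sqrt of the sum of squared entries
flattened = np.linalg.norm(A.ravel())                # L2 norm of A flattened to a vector
columns = np.sqrt(sum(np.linalg.norm(A[:, j])**2 for j in range(A.shape[1])))
fro = np.linalg.norm(A, ord='fro')                   # explicit Frobenius norm

print(manual, flattened, columns, fro)
```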

g. Transpose of a Matrix¶

- A matrix can be transposed, which creates a new matrix with the number of rows and columns flipped. This is denoted by the superscript T next to the matrix: $A^T$.

- The columns of $A^T$ are the rows of $A$. The operation has no effect on a symmetric matrix, i.e., a square matrix that has the same values at mirrored positions on both sides of the main diagonal.

- We can transpose a matrix in NumPy by calling the T attribute.

A = np.array([ [1, 2],

[3, 4],

[5, 6]])

print("Original Matrix A: \n", A)

# computing the transpose of Matrix A using the T attribute

C = A.T

# equivalently, using the np.transpose() function

C = np.transpose(A)

print("\nTranspose Matrix: \n", C)

Original Matrix A: [[1 2] [3 4] [5 6]] Transpose Matrix: [[1 3 5] [2 4 6]]

h. Determinant of a Matrix¶

- Matrix determinant can be thought of as a function whose input is a square matrix and output is a scalar value.

- The determinant of a matrix is used to compute the inverse of a matrix.

- If the determinant of a matrix is zero, the matrix is singular: it is non-invertible and has linearly dependent columns.

- Moreover, the determinant is used in solving linear equations, and it also captures how a linear transformation changes area or volume: its absolute value is the factor by which areas (in 2D) or volumes (in 3D) are scaled.

- The determinant also describes how matrices combine when they are multiplied together: $det(AB) = det(A)\,det(B)$. For example, if the determinant of a matrix is 1, multiplying by it leaves the determinant (the area or volume scaling) of the other matrix unchanged.

- To calculate the determinant of a 2x2 matrix, we multiply the component a by the determinant of the "submatrix" formed by ignoring a's row and column. In this case, this submatrix is the 1×1 matrix consisting of d, and its determinant is just d. So the first term of the determinant is ad. Next, we proceed to the second component of the first row, which is the upper right component b. We multiply b by the determinant of the submatrix formed by ignoring b's row and column, which is c. So the next term of the determinant is bc. The total determinant is simply the first term ad minus the second term bc:

$\hspace{2 cm}A = \begin{bmatrix} a & b\\ c & d \end{bmatrix} $

$\hspace{2 cm}det(A) = |A| = ad - cb$

- Now that we know how to calculate determinant of a 2x2 matrix, we can generalize this technique to compute determinant of larger matrices using recursion.

- Let us calculate the determinant of a 3x3 matrix:

$\hspace{2 cm}B =$ $\begin{bmatrix} a & b & c\\ d & e & f\\ g & h & i \end{bmatrix} $

$\hspace{2 cm}det(B) = |B| = a\begin{vmatrix} e & f\\h & i \end{vmatrix} - b\begin{vmatrix} d & f\\g & i \end{vmatrix} +c\begin{vmatrix} d & e\\g & h \end{vmatrix} = a(ei-fh) - b(di-fg) + c(dh-eg)$

- In NumPy, the determinant of a matrix can be calculated using the numpy.linalg.det() function.

A = np.array([[-3, 1],[6, -4]])

print("Matrix A: \n", A)

det1 = numpy.linalg.det(A)

print("det(A): ", det1)

B = np.array([[1, 2, 4],[2, -1, 3],[0, 5, 1]])

print("\nMatrix B: \n", B)

det2 = numpy.linalg.det(B)

print("det(B): ", det2)

Matrix A: [[-3 1] [ 6 -4]] det(A): 6.0 Matrix B: [[ 1 2 4] [ 2 -1 3] [ 0 5 1]] det(B): 19.999999999999996
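To see the recursive technique described above in action, here is a minimal sketch of cofactor (Laplace) expansion along the first row. It is compared against `np.linalg.det()` on matrix B from the cell above. The function name `det_cofactor` is a hypothetical helper written for illustration, not a NumPy API. It takes factorial time, so it is only practical for small matrices.

```python
import numpy as np

def det_cofactor(M):
    """Determinant by cofactor expansion along the first row (hypothetical helper)."""
    M = np.asarray(M, dtype=float)
    if M.ndim != 2 or M.shape[0] != M.shape[1]:
        raise ValueError("the determinant is defined only for square matrices")
    n = M.shape[0]
    if n == 1:
        return M[0, 0]
    if n == 2:
        # base case: ad - bc
        return M[0, 0] * M[1, 1] - M[0, 1] * M[1, 0]
    total = 0.0
    for j in range(n):
        # submatrix formed by ignoring row 0 and column j
        sub = np.delete(M[1:], j, axis=1)
        # signs alternate +, -, +, ... along the first row
        total += (-1) ** j * M[0, j] * det_cofactor(sub)
    return total

B = np.array([[1, 2, 4], [2, -1, 3], [0, 5, 1]])
print(det_cofactor(B))      # 20.0
print(np.linalg.det(B))     # approximately 20 (floating-point LU result)
```

NumPy computes the determinant from an LU factorization instead, which is why its result can differ from the exact integer answer in the last few digits.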

A = np.array([[6, -3],[4, -2]])

print("Matrix A: \n", A)

det1 = numpy.linalg.det(A)

print("det(A): ", det1)

B = np.array([[1, 1, 1],[2, 3, 1],[0, -1, 1]])

print("\nMatrix B: \n", B)

det2 = numpy.linalg.det(B)

print("det(B): ", det2)

C = np.array([[2, 1, 2],[1, 0, 1],[4, 1, 4]])

print("\nMatrix C: \n", C)

det3 = numpy.linalg.det(C)

print("det(C): ", det3)

Matrix A: [[ 6 -3] [ 4 -2]] det(A): 0.0 Matrix B: [[ 1 1 1] [ 2 3 1] [ 0 -1 1]] det(B): 0.0 Matrix C: [[2 1 2] [1 0 1] [4 1 4]] det(C): 0.0

Note that the determinant of matrix A is zero, which means matrix A is not invertible. This is because the two columns of matrix A are not linearly independent: the first column is a multiple of the second column, i.e., multiplying the second column by -2 gives the first column. Geometrically, the rows of A describe two parallel lines, so a system of equations with A as its coefficient matrix has either no solution (distinct parallel lines) or infinitely many solutions (the same line), but never a unique solution.
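The column dependencies behind these zero determinants can be checked directly. This is a small sketch that reuses the three matrices from the cell above. It also uses `np.linalg.matrix_rank()` (covered in the Rank section below) to confirm that each matrix has fewer independent columns than columns. The relations for B and C are worked out here for illustration; the text above only discusses A.

```python
import numpy as np

A = np.array([[6, -3], [4, -2]])
B = np.array([[1, 1, 1], [2, 3, 1], [0, -1, 1]])
C = np.array([[2, 1, 2], [1, 0, 1], [4, 1, 4]])

# A: the first column is -2 times the second column
print(np.array_equal(A[:, 0], -2 * A[:, 1]))            # True
# B: third column = 2 * (first column) - (second column)
print(np.array_equal(B[:, 2], 2 * B[:, 0] - B[:, 1]))   # True
# C: the first and third columns are identical
print(np.array_equal(C[:, 0], C[:, 2]))                 # True

# Dependent columns mean the rank is smaller than the number of columns
for name, M in [("A", A), ("B", B), ("C", C)]:
    print(name, "rank:", np.linalg.matrix_rank(M), "of", M.shape[1])
```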

i. Inverse of a Matrix

- A non-zero number has a reciprocal, and multiplying a number by its reciprocal gives 1.

- Similarly, a matrix can have an inverse, and multiplying a matrix by its inverse gives the identity matrix.

- The inverse of a matrix is used to find the solution of a system of linear equations through the matrix inversion method.

$\hspace{2 cm}AA^{-1} = A^{-1}A = I_n$

- For a matrix to have an inverse, it has to satisfy two conditions:

- The matrix needs to be a square matrix.

- The determinant of the matrix must not be zero.

- There is a well-known shortcut for calculating the inverse of a 2x2 matrix:

- Interchange the main diagonal elements ($a$ and $d$).

- Negate the remaining two elements ($b$ and $c$).

- Divide the resulting matrix by the determinant of the original matrix.

$\hspace{2 cm}A = \begin{bmatrix} a & b\\ c & d \end{bmatrix} $

$\hspace{2 cm}A^{-1} = \frac{1}{det(A)}\begin{bmatrix} d & -b\\ -c & a \end{bmatrix} = \frac{1}{ad - bc}\begin{bmatrix} d & -b\\ -c & a \end{bmatrix} $
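The swap/negate/divide shortcut above translates almost line for line into code. This is a minimal sketch. `inverse_2x2` is a hypothetical helper name, and the exact `det == 0` test is a simplification that suits small integer matrices. Its result is checked against `np.linalg.inv()` and against the defining property $AA^{-1} = I$.

```python
import numpy as np

def inverse_2x2(M):
    """Inverse of a 2x2 matrix via the swap/negate/divide shortcut (hypothetical helper)."""
    (a, b), (c, d) = M
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is singular (determinant is zero)")
    # swap a and d, negate b and c, then divide by the determinant
    return np.array([[d, -b], [-c, a]]) / det

A = np.array([[-3, 1], [6, -4]])
A_inv = inverse_2x2(A)
print(A_inv)
print(np.allclose(A_inv, np.linalg.inv(A)))   # True
print(np.allclose(A @ A_inv, np.eye(2)))      # True
```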

- Formal steps to compute the inverse of a matrix are:

- Step 1: Find the matrix of minors for the given matrix.

- Step 2: Turn that matrix into the matrix of cofactors.

- Step 3: Find the adjugate (adjoint) of the matrix.

- Step 4: Divide the adjugate matrix by the determinant of the given matrix.

Example: Perform step-by-step calculations to compute the inverse of the $3 \times 3$ matrix $\hspace{1 cm}A = \begin{bmatrix} 3 & 0 & 2\\ 2 & 0 & -2\\0 & 1 & 1\end{bmatrix} $

Step 1: Find the matrix of minors for the given matrix.

- For each element of the matrix:

- Ignore the values on the current row and column

- Calculate the determinant of the remaining values

- Put those determinants into a matrix to get the matrix of minors:

$\hspace{2 cm}\begin{bmatrix} 2 & 2 & 2\\ -2 & 3 & 3\\ 0 & -10 & 0 \end{bmatrix}$

Step 2: Turn that matrix into the matrix of cofactors.

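- Multiply each element of the matrix of minors by +1 or -1 following a checkerboard pattern: element $(i, j)$ is multiplied by $(-1)^{i+j}$, so the signs start with + in the top-left corner and alternate along every row and every column.

- For our example, this gives the matrix of cofactors:

$\hspace{2 cm}\begin{bmatrix} 2 & -2 & 2\\ 2 & 3 & -3\\ 0 & 10 & 0 \end{bmatrix}$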

Step 3: Find the adjugate or adjoint of matrix.

- Take the transpose of the matrix of cofactors to get the adjugate (adjoint) matrix:

$\hspace{2 cm}\begin{bmatrix} 2 & 2 & 0\\ -2 & 3 & 10\\ 2 & -3 & 0 \end{bmatrix}$

Step 4: Divide adjugate matrix by determinant of given matrix.

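- Find the determinant of the given matrix, which is 10 (expanding along the first row: $3(2) - 0(2) + 2(2) = 10$).

- Divide each element of the adjugate matrix by 10 to get the inverse of the matrix:

$\hspace{2 cm}A^{-1} = \frac{1}{10}\begin{bmatrix} 2 & 2 & 0\\ -2 & 3 & 10\\ 2 & -3 & 0 \end{bmatrix} = \begin{bmatrix} 0.2 & 0.2 & 0\\ -0.2 & 0.3 & 1\\ 0.2 & -0.3 & 0 \end{bmatrix}$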
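The four formal steps can be sketched in NumPy as well. This version returns every intermediate matrix so that each step can be inspected. It assumes a square, non-singular input. `inverse_adjugate` is a hypothetical helper name, and each minor is computed with `np.linalg.det()` instead of by hand.

```python
import numpy as np

def inverse_adjugate(M):
    """Inverse via minors -> cofactors -> adjugate -> divide (hypothetical helper)."""
    M = np.asarray(M, dtype=float)
    n = M.shape[0]
    minors = np.zeros((n, n))
    for i in range(n):
        for j in range(n):
            # Step 1: ignore row i and column j, take the determinant of the rest
            sub = np.delete(np.delete(M, i, axis=0), j, axis=1)
            minors[i, j] = np.linalg.det(sub)
    # Step 2: apply the checkerboard of signs (-1)**(i + j)
    signs = (-1.0) ** np.add.outer(np.arange(n), np.arange(n))
    cofactors = signs * minors
    # Step 3: the adjugate is the transpose of the cofactor matrix
    adjugate = cofactors.T
    # Step 4: divide by the determinant (cofactor expansion along row 0)
    det = np.dot(M[0], cofactors[0])
    if np.isclose(det, 0):
        raise ValueError("matrix is singular (determinant is zero)")
    return minors, cofactors, adjugate, adjugate / det

A = np.array([[3, 0, 2], [2, 0, -2], [0, 1, 1]])
minors, cofactors, adjugate, A_inv = inverse_adjugate(A)
print("Minors:\n", np.round(minors, 6))
print("Cofactors:\n", np.round(cofactors, 6))
print("Adjugate:\n", np.round(adjugate, 6))
print("Inverse:\n", np.round(A_inv, 6))
print(np.allclose(A_inv, np.linalg.inv(A)))   # True
```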

- To spare us all this labour, NumPy provides the `numpy.linalg.inv()` function, which computes the inverse of a non-singular matrix (a square matrix with a non-zero determinant).

A = np.array([ [3,0,2],[2,0,-2],[0,1,1]])

print("Matrix A: \n", A)

print("det(A): ", numpy.linalg.det(A))

AI = numpy.linalg.inv(A)

print("\nInverse of Matrix A: \n", AI)

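# Verify: A multiplied by its inverse should give the identity matrix

I = np.dot(A,AI)

# Round before casting: astype(int) truncates, so a value like 0.9999999999999998 would become 0

print("\nnp.dot(A,AI): \n", np.round(I).astype(int))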

Matrix A: [[ 3 0 2] [ 2 0 -2] [ 0 1 1]] det(A): 10.000000000000002 Inverse of Matrix A: [[ 0.2 0.2 0. ] [-0.2 0.3 1. ] [ 0.2 -0.3 -0. ]] np.dot(A,AI): [[1 0 0] [0 1 0] [0 0 1]]
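Earlier, the inverse was described as a way to solve linear equations through the matrix inversion method, $x = A^{-1}b$. Here is a short sketch using the same matrix A. The right-hand side `b` is made up for illustration. The result is compared with `np.linalg.solve()`, which solves $Ax = b$ without explicitly forming $A^{-1}$ and is generally the preferred choice in NumPy.

```python
import numpy as np

A = np.array([[3, 0, 2], [2, 0, -2], [0, 1, 1]])
b = np.array([5, 2, 3])            # illustrative right-hand side

x_inv = np.linalg.inv(A) @ b       # matrix inversion method: x = A^{-1} b
x_solve = np.linalg.solve(A, b)    # solves Ax = b directly

print(x_inv)
print(x_solve)
print(np.allclose(A @ x_solve, b))   # True
```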

j. Trace of a Matrix

- The trace of a square matrix is the sum of the entries on its main diagonal.

- We can calculate the trace of a matrix in NumPy using the `np.trace()` function.

A = np.array([ [1, 2, 3], [4, 5, 6], [7, 8, 9]])

print("Original Matrix = \n", A)

print("\nTrace value = ", np.trace(A))

Original Matrix = [[1 2 3] [4 5 6] [7 8 9]] Trace value = 15

- Properties of Trace of a Matrix:

- Tr($A$) = Tr($A^T$): The trace of a matrix is equal to the trace of its transpose, because the main diagonal remains the same after transpose.

- Tr($ABC$) = Tr($CAB$) = Tr($BCA$): the trace of a product of matrices is unchanged by cyclic permutations of its factors. This does not hold for arbitrary reorderings; in general Tr($ABC$) $\neq$ Tr($ACB$).

- You can use the trace to calculate a matrix's Frobenius norm: $$||A||_F = \sqrt{\sum_{i,j} a_{i,j}^2} = \sqrt{\mathrm{Tr}(AA^\mathrm{T})}$$

A = np.array([ [0, 2, 1],[2, 0, 0]])

print("Matrix A = \n", A)

f1 = np.linalg.norm(A, ord=None)

print("\n Frobenius Norm =", f1)

AT = A.T

AAT = np.dot(A, AT)

tr_AAT = np.trace(AAT)

f2 = (tr_AAT)**.5

print("\nnp.trace(np.dot(A,A.T))**.5 = ", f2)

Matrix A = [[0 2 1] [2 0 0]] Frobenius Norm = 3.0 np.trace(np.dot(A,A.T))**.5 = 3.0
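The first two trace properties listed above can also be checked numerically. This sketch uses three small 2x2 matrices chosen only for illustration. It confirms that the trace survives transposition and cyclic reordering of a product, and it shows that swapping two factors (a non-cyclic reordering) changes the trace.

```python
import numpy as np

# Three small matrices chosen for illustration
A = np.array([[1, 2], [0, 1]])
B = np.array([[1, 0], [1, 1]])
C = np.array([[2, 0], [0, 1]])

print(np.trace(A), np.trace(A.T))   # 2 2

tr_abc = np.trace(A @ B @ C)
tr_bca = np.trace(B @ C @ A)
tr_cab = np.trace(C @ A @ B)
tr_acb = np.trace(A @ C @ B)        # not a cyclic permutation of ABC

print("Tr(ABC) =", tr_abc)   # 7
print("Tr(BCA) =", tr_bca)   # 7
print("Tr(CAB) =", tr_cab)   # 7
print("Tr(ACB) =", tr_acb)   # 5
```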

k. Rank of a Matrix

- The rank of a matrix is the maximum number of linearly independent columns (equivalently, rows) of the matrix. (A set of columns is linearly dependent if one of them can be obtained by scaling and adding the others.)

- The rank of an $m \times n$ matrix is less than or equal to the minimum of its number of rows and columns, i.e., $rank(A) \le min(m, n)$.

- The rank of a matrix is zero only if the matrix has no non-zero elements (for example, a zero matrix or an empty matrix). If a matrix has even one non-zero element, its rank is at least one.

- To calculate the rank of a matrix by hand, we can convert the matrix into its row-echelon form (which we will study in the next section).

- In Python, we can calculate the rank of a matrix using the `np.linalg.matrix_rank()` function.

mat = np.array([])

print("Rank of matrix having zero elements = ", np.linalg.matrix_rank(mat))

A = np.array([[1,2,3]])

print("\nA:\n", A)

print("Rank of A = ", np.linalg.matrix_rank(A))

B = np.array([[1,2,3], [3, 2, 0]])

print("\nB:\n",B)

print("Rank of B = ", np.linalg.matrix_rank(B))

C = np.array([[1,2,3], [5,6,9], [3, 2, 0]])

print("\nC:\n",C)

print("Rank of C = ", np.linalg.matrix_rank(C))

C = np.array([[1,2, 3, 4], [9, 6, 2, 4]])

print("\nC:\n", C)

print("Rank of C = ", np.linalg.matrix_rank(C))

Rank of matrix having zero elements = 0 A: [[1 2 3]] Rank of A = 1 B: [[1 2 3] [3 2 0]] Rank of B = 2 C: [[1 2 3] [5 6 9] [3 2 0]] Rank of C = 3 C: [[1 2 3 4] [9 6 2 4]] Rank of C = 2

# all columns are linearly dependent on each other

# 2nd = 3 times 1st col and 3rd col = 2 times 1st column

A = np.array([[1,3, 2], [2, 6, 4],[3, 9, 6]]) # all columns are dependent

print("A:\n", A)

print("Rank of A = ", np.linalg.matrix_rank(A))

# 1st and 2nd col are independent, but 3rd column = 1st col + 2nd col

B = np.array([[1,0, 1], [2, 2, 4],[3, 2, 5]])

print("B:\n", B)

print("Rank of B = ", np.linalg.matrix_rank(B))

A: [[1 3 2] [2 6 4] [3 9 6]] Rank of A = 1 B: [[1 0 1] [2 2 4] [3 2 5]] Rank of B = 2
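According to the NumPy documentation, `np.linalg.matrix_rank()` does not use row reduction. Instead, it computes the singular value decomposition (SVD) and counts the singular values that are larger than a small tolerance. The sketch below mirrors that default rule, with tolerance = largest singular value × max(m, n) × machine epsilon. `rank_via_svd` is a hypothetical helper for illustration. The zero-matrix case shows that a matrix with no non-zero entries has rank 0.

```python
import numpy as np

def rank_via_svd(M):
    """Count singular values above the default tolerance (hypothetical helper)."""
    M = np.atleast_2d(np.asarray(M, dtype=float))
    s = np.linalg.svd(M, compute_uv=False)   # singular values, largest first
    if s.size == 0 or s.max() == 0:
        return 0
    tol = s.max() * max(M.shape) * np.finfo(M.dtype).eps
    return int(np.sum(s > tol))

examples = {
    "zero 3x3": np.zeros((3, 3)),
    "A (all columns dependent)": np.array([[1, 3, 2], [2, 6, 4], [3, 9, 6]]),
    "B (col3 = col1 + col2)": np.array([[1, 0, 1], [2, 2, 4], [3, 2, 5]]),
    "C (independent columns)": np.array([[1, 2, 3], [5, 6, 9], [3, 2, 0]]),
}
for name, M in examples.items():
    print(name, rank_via_svd(M), np.linalg.matrix_rank(M))
```

The tolerance matters because floating-point round-off rarely produces singular values that are exactly zero, even for a matrix whose columns are exactly dependent.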

Section III: (Solving System of Linear Equations)

import numpy as np

import numpy.linalg

import math

import scipy

from matplotlib import pyplot as plt

from plot_helper import * # Helper functions: plot_vector, plot_linear_transformation, plot_linear_transformations

1. An Overview of Linear Equations

a. What is a Linear Equation?

- An equation in which every variable appears only to the first power (and no two variables are multiplied together) is called a linear equation. A linear equation can have one, two, three, or in general $n$ variables. A linear equation having two variables can be written as $a_1x + a_2y = b$.

- A linear equation of two variables when plotted on a graph gives a straight line. A straight line equation is shown below:

$$ y = c + mx$$

- Where:

- $y$ is the dependent variable.

- $x$ is the independent variable.

- $c$ is the y-intercept, i.e., the value of $y$ when $x$ is zero.

- $m$ is the slope (gradient) of the line, which tells us two things:

- Whether the line is rising or falling (a positive or negative relationship between the two variables).

- The steepness of the line (how much $y$ changes for a unit change in $x$).

Example 1: Let us first see how we can draw a line from a linear equation of two variables using Matplotlib. $$ 2x - y = -4 $$ $$ y = 4 + 2x $$

We need to calculate at least two $(x, y)$ pairs that satisfy this equation, and then we can draw a line connecting those points.

%matplotlib inline

x = np.array([0, 5])

y = 4 + 2 * x

fig, ax = plt.subplots()

plt.xlabel('x')

plt.ylabel('y')

plt.title("Line representing equation: y = 4 + 2x")

ax.set_xlim([0, 5])

ax.set_ylim([1, 14])

ax.plot(x, y)

plt.grid(True)
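As a quick check on the plot above, this sketch verifies that the two plotted points, (0, 4) and (5, 14), satisfy the original form $2x - y = -4$. It then recovers the slope $m$ and y-intercept $c$ from those two points using $m = (y_2 - y_1)/(x_2 - x_1)$.

```python
import numpy as np

x = np.array([0, 5])
y = 4 + 2 * x              # the two points (0, 4) and (5, 14)

# both points satisfy the original equation 2x - y = -4
print(2 * x - y)           # [-4 -4]

# slope = rise / run, intercept = y - m*x at either point
m = (y[1] - y[0]) / (x[1] - x[0])
c = y[0] - m * x[0]
print(m, c)                # 2.0 4.0
```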

Let us change the y-intercept, without changing the slope, and draw these three lines:

$$y=4+2x$$

$$y=9+2x$$

$$y=-2+2x$$

%matplotlib inline

x = np.linspace(-10, 20, 5) # start, finish, n points

y1 = -2 + 2*x

y2 = 4 + 2*x

y3 = 9 + 2*x

fig, ax = plt.subplots()

plt.xlabel('x')

plt.ylabel('y')

ax.set_xlim([0, 6])

ax.set_ylim([-2, 16])

plt.title("Lines representing three equations")

ax.plot(x, y1, c='green')

ax.plot(x, y2, c='red')

ax.plot(x, y3, c='purple')

plt.grid(True)

Let us change the slope, without changing the y-intercept, and draw these three lines:

$$y=4+2x$$

$$y=4+0x$$

$$y=4-2x$$

%matplotlib inline

x = np.linspace(-10, 20, 5) # start, finish, n points

y1 = 4 + 2*x

y2 = 4 + 0*x

y3 = 4 - 2*x

fig, ax = plt.subplots()

plt.xlabel('x')

plt.ylabel('y')

ax.set_xlim([0, 6])

ax.set_ylim([-4, 12])

plt.title("Lines representing three equations")

ax.plot(x, y1, c='green')

ax.plot(x, y2, c='purple')

ax.plot(x, y3, c='red')

plt.grid(True)

Example 2:

- You went to a Rent-a-Car company to rent a car for a few hours. The company representative told you that you have to pay a base payment of Rs 5000/-, plus an additional Rs 2000/- for every hour.

- Can you write a linear equation keeping in view of the dependent variable