Working with 3D city models in Python¶

Balázs Dukai @BalazsDukai, FOSS4G 2019

Tweet #CityJSON

3D geoinformation research group, TU Delft, Netherlands

Repo of this talk: https://github.com/balazsdukai/foss4g2019

3D + city + model ?¶

Probably the most well known 3d city model is what we see in Google Earth. And it is a very nice model to look at and it is improving continuously. However, certain applications require more information than what is stored in such a mesh model. They need to know what does an object in the model represent in the real world.

Semantic models¶

That is why we have semantic models, where for each object in the model we store a label of is meaning. Once we have labels on the object and on their parts, data preparation becomes more simple. An important property for analytical applications, such as wind flow simulations.

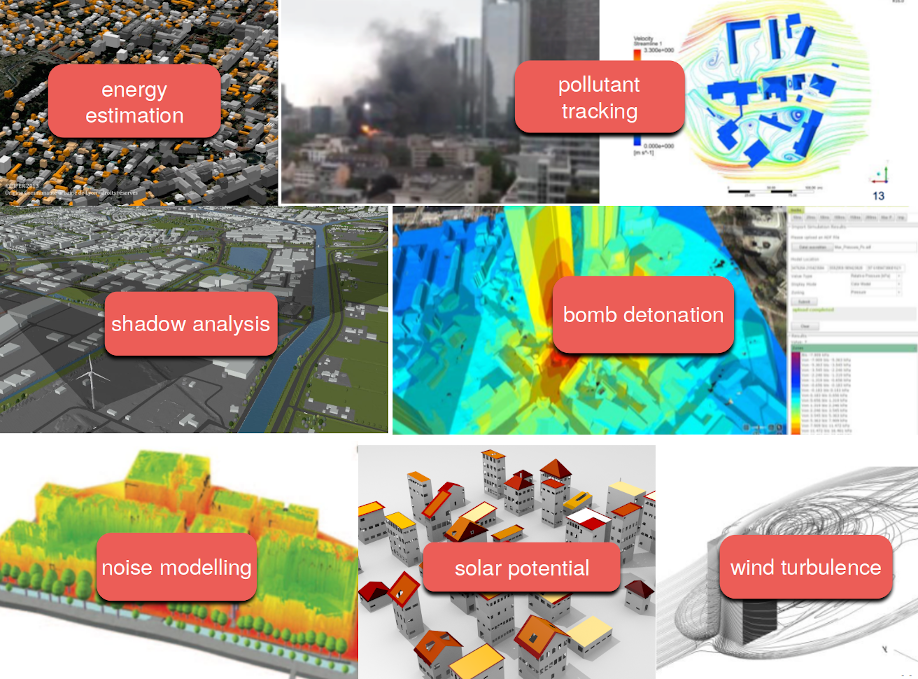

Useful for urban analysis¶

García-Sánchez, C., van Beeck, J., Gorlé, C., Predictive Large Eddy Simulations for Urban Flows: Challenges and Opportunities, Building and Environment, 139, 146-156, 2018.

But we can do much more with 3d city models. We can use them to better estimate the energy consumption in buildings, simulate noise in cities or analyse views and shadows. In the Netherlands sunshine is precious commodity, so we like to get as much as we can.

And many more...¶

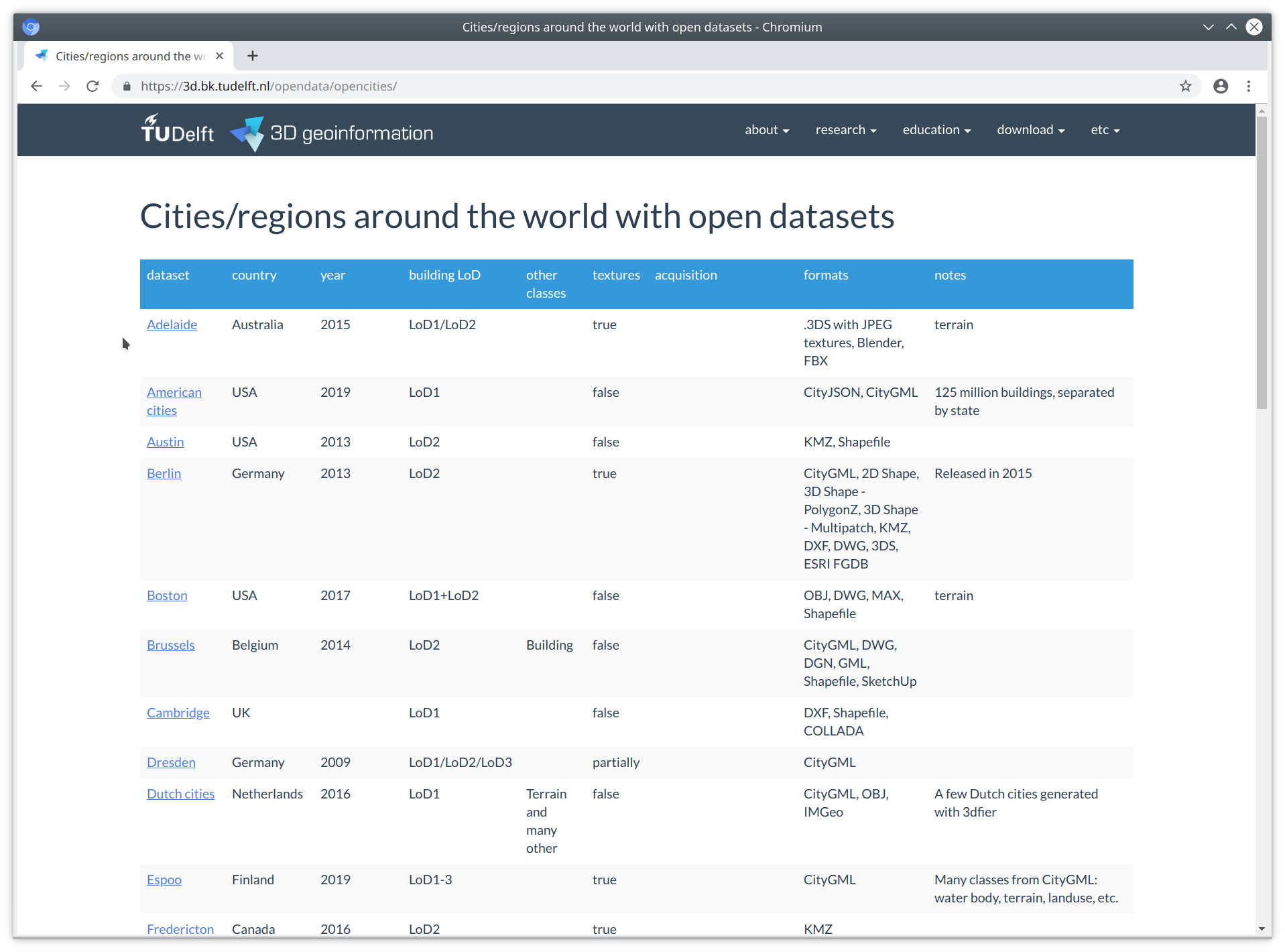

There are many open 3d city models available. They come in different formats and quality. However, at our group we are still waiting for the "year of the 3d city model" to come. We don't really see mainstream use, apart of visualisation. Which is nice, I belive they can provide much more value than having a nice thing to simply look at.

...mostly just production of the models¶

many available, but who uses them? For more than visualisation?

In truth, 3D CMs are a bit difficult to work with¶

Our built environment is complex, and the objects are complex too¶

Software are lagging behind¶

not many software supports 3D city models

if they do, mostly propietary data model and format

large, "eterprise"-type applications (think Esri, FME, Bentley ... )

few tools accessible for the individual developer / hobbyist

- GML doesn't help ( GML madness by Even Rouault )

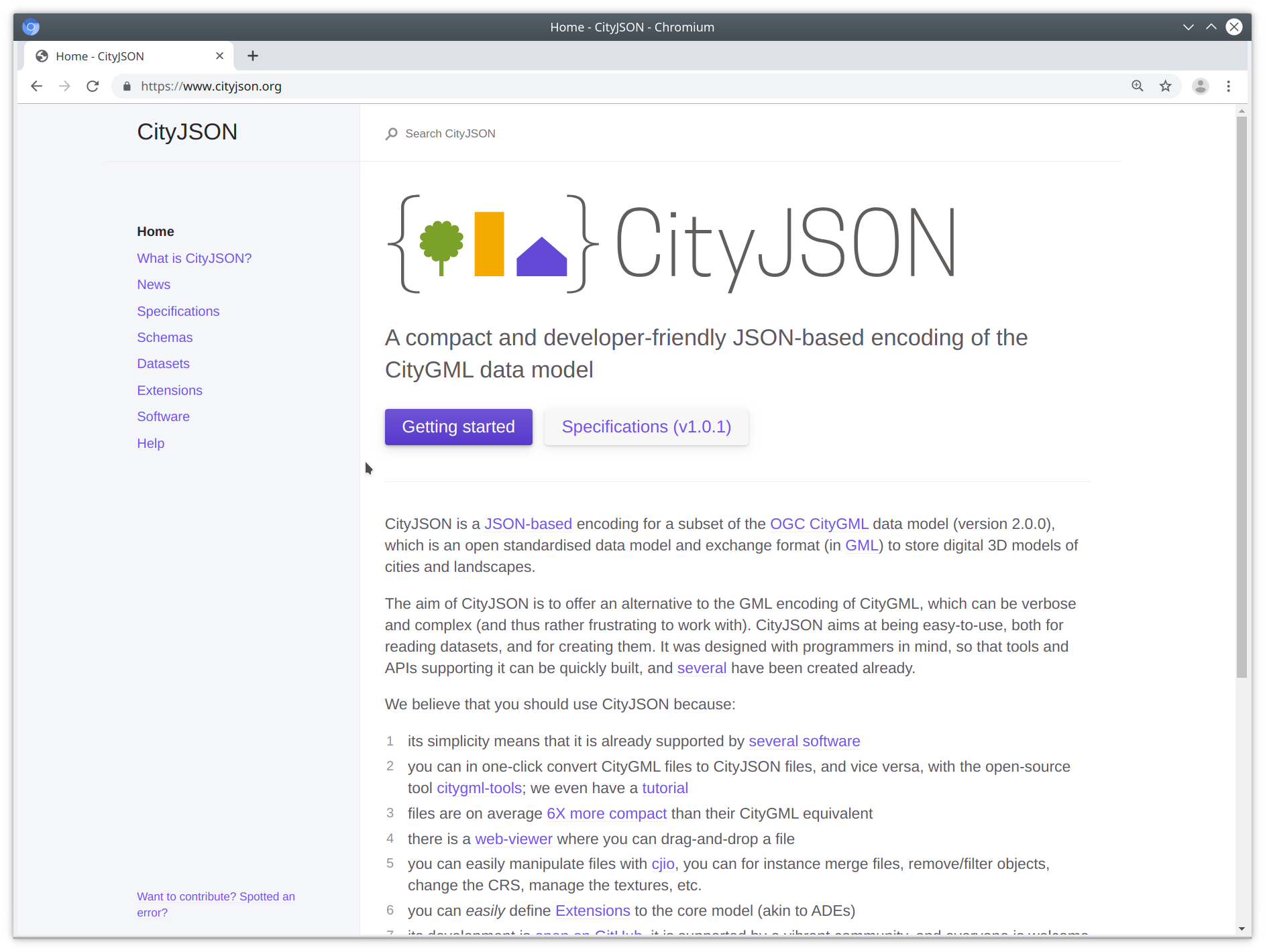

That is why we are developing CityJSON, which is a data format for 3d city models. Essentially, it aims to increase the value of 3d city models by making it more simple to work with them and lower the entry for a wider audience than cadastral organisations.

Key concepts of CityJSON¶

- simple, as in easy to implement

- designed with programmers in mind

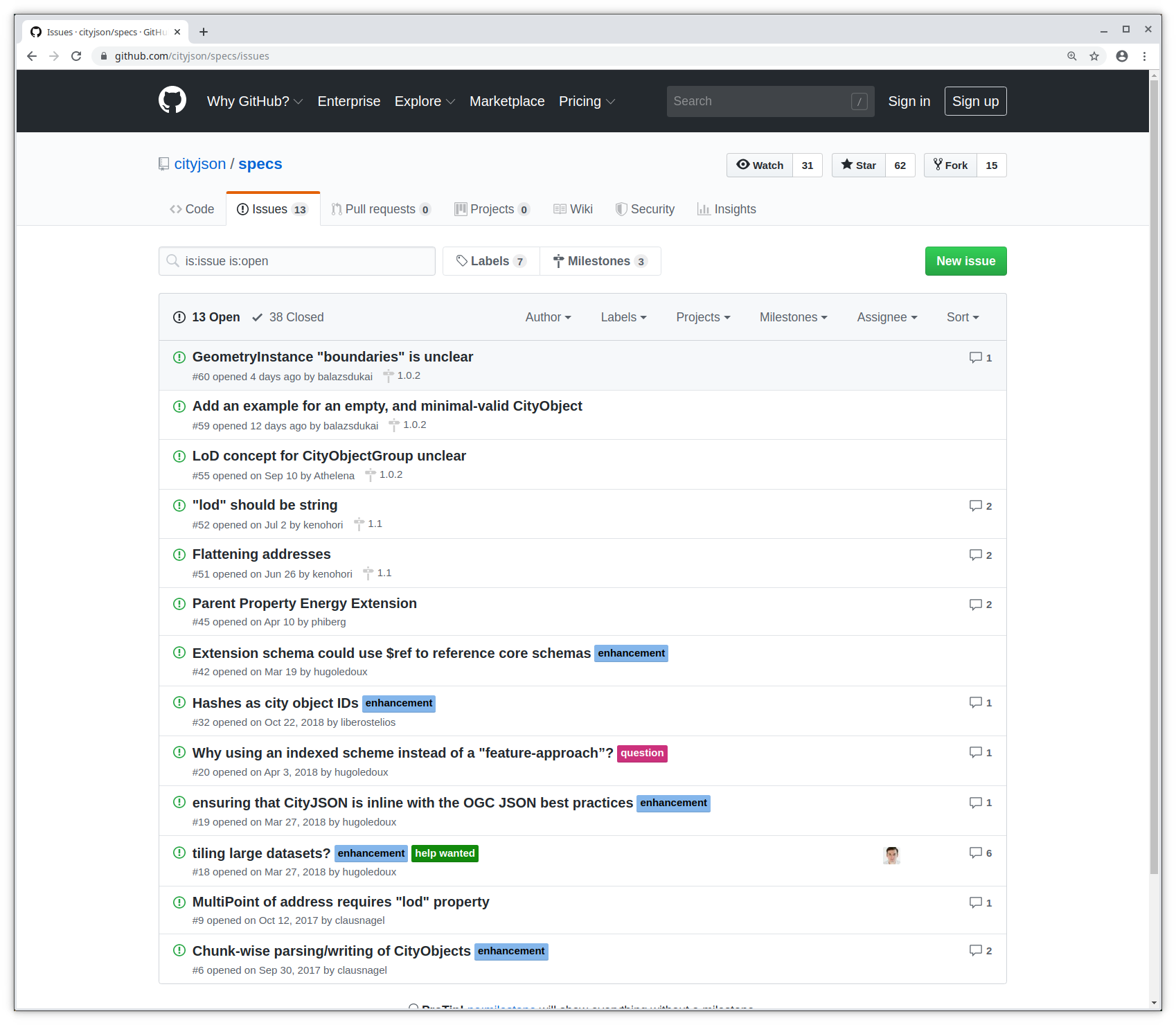

- fully developed in the open

- flattened hierarchy of objects

- implementation first

CityJSON implements the data model of CityGML. CityGML is an international standard for 3d city models and it is coupled with its GML-based encoding.

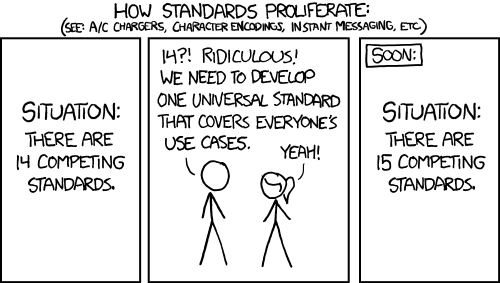

We don't really like GML, because it's verbose, files are deeply nested and large (often several GB). And there are many different ways to do one thing.

Also, I'm not a web-developer, but I would be surprised if anyone prefers GML over JSON for sending stuff around the web.

JSON-based encoding of the CityGML data model¶

I just got sent a CityGML file. pic.twitter.com/jnTVoRnVLS

— James Fee (@jamesmfee) June 29, 2016

- files are deeply nested, and large

- many "points of entry"

- many diff ways to do one thing (GML doesn't help, GML madness by Even Rouault )

The CityGML data model¶

Compression ~6x over CityGML¶

Compression¶

| file | CityGML size (original) | CityGML size (w/o spaces) | textures | CityJSON | compression |

|---|---|---|---|---|---|

| CityGML demo "GeoRes" | 4.3MB | 4.1MB | yes | 524KB | 8.0 |

| CityGML v2 demo "Railway" | 45MB | 34MB | yes | 4.3MB | 8.1 |

| Den Haag "tile 01" | 23MB | 18MB | no, material | 2.9MB | 6.2 |

| Montréal VM05 | 56MB | 42MB | yes | 5.4MB | 7.8 |

| New York LoD2 (DA13) | 590MB | 574MB | no | 105MB | 5.5 |

| Rotterdam Delfshaven | 16MB | 15MB | yes | 2.6MB | 5.8 |

| Vienna (the demo file) | 37MB | 36MB | no | 5.3MB | 6.8 |

| Zürich LoD2 | 3.03GB | 2.07GB | no | 292MB | 7.1 |

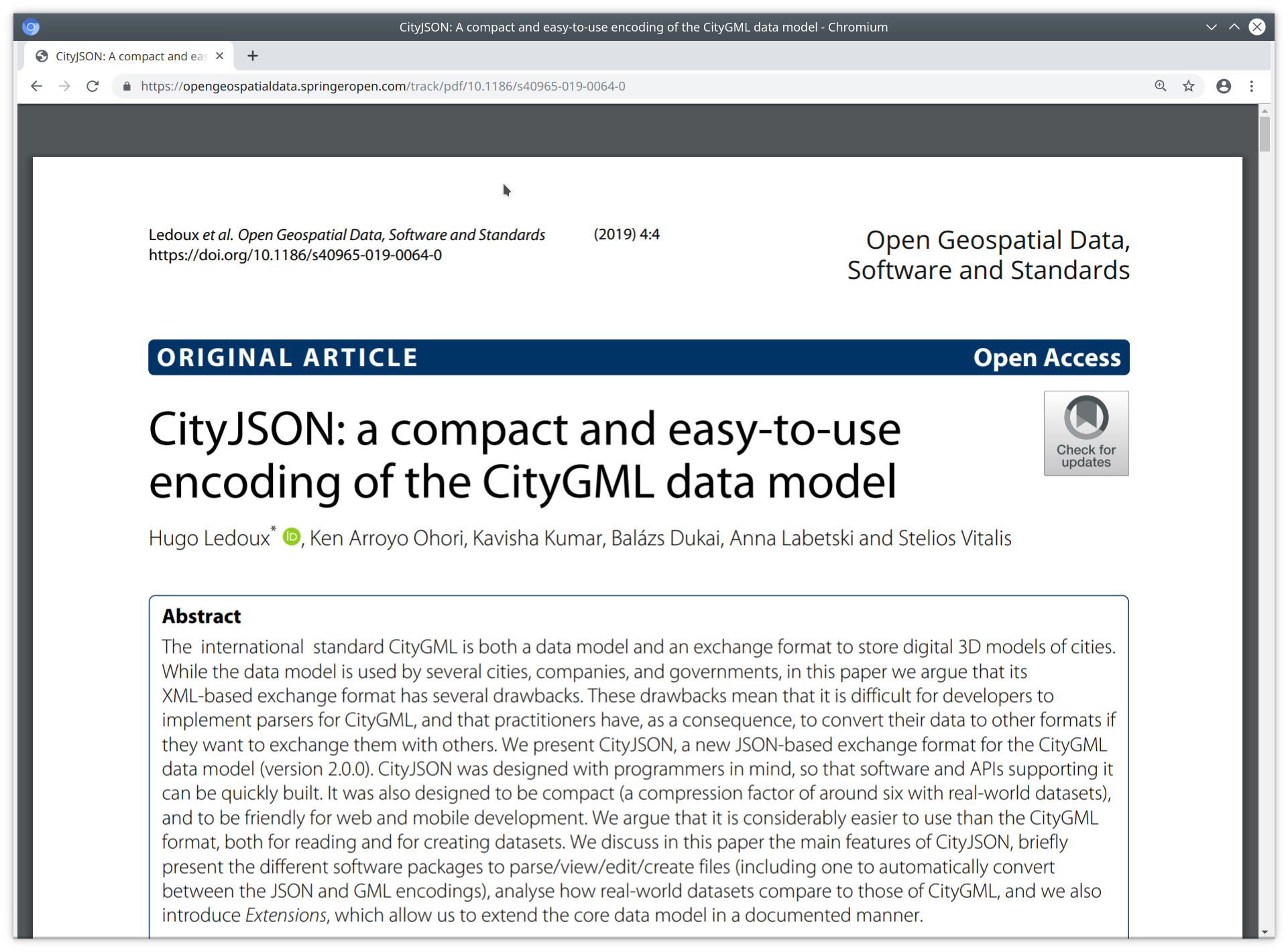

If you are interested in a more detailed comparison between CityGML and CityJSON you can read our article, its open access.

And yes, we are guilty of charge.

Let's have a look-see, shall we?¶

Now let's take a peek under the hood, what's going on in a CityJSON file.

An empty CityJSON file¶

In a city model we represent the real-world objects such as buildings, bridges, trees as different types of CityObjects. Each CityObject has its

- unique ID,

- attributes,

- geometry,

- and it can have children objects or it can be part of a parent object.

Note however, that CityObject are not nested. Each of them is stored at root and the hierachy represented by linking to object IDs.

A CityObject¶

Each CityObject has a geometry representation. This geometry is composed of boundaries and semantics.

Geometry¶

- boundaries definition uses vertex indices (inspired by Wavefront OBJ)

- We have a vertex list at the root of the document

- Vertices are not repeated (unlike Simple Features)

- semantics are linked to the boundary surfaces

This MulitSurface has

5 surfaces

[[0, 3, 2, 1]], [[4, 5, 6, 7]], [[0, 1, 5, 4]], [[0, 2, 3, 8]], [[10, 12, 23, 48]]

each surface has only an exterior ring (the first array)

[ [0, 3, 2, 1] ]

The semantic surfaces in the semantics json-object are linked to the boundary surfaces. The integers in the values property of surfaces are the 0-based indices of the surfaces of the boundary.

import json

import os

path = os.path.join('data', 'rotterdam_subset.json')

with open(path) as fin:

cm = json.loads(fin.read())

print(f"There are {len(cm['CityObjects'])} CityObjects")

# list all IDs

for id in cm['CityObjects']:

print(id, "\t")

There are 16 CityObjects

{C9D4A5CF-094A-47DA-97E4-4A3BFD75D3AE}

{71B60053-BC28-404D-BAB9-8A642AAC0CF4}

{6271F75F-E8D8-4EE4-AC46-9DB02771A031}

{DE77E78F-B110-43D2-A55C-8B61911192DE}

{19935DFC-F7B3-4D6E-92DD-C48EE1D1519A}

{953BC999-2F92-4B38-95CF-218F7E05AFA9}

{8D716FDE-18DD-4FB5-AB06-9D207377240E}

{C6AAF95B-8C09-4130-AB4D-6777A2A18A2E}

{72390BDE-903C-4C8C-8A3F-2DF5647CD9B4}

{8244B286-63E2-436E-9D4E-169B8ACFE9D0}

{87316D28-7574-4763-B9CE-BF6A2DF8092C}

{CD98680D-A8DD-4106-A18E-15EE2A908D75}

{64A9018E-4F56-47CD-941F-43F6F0C4285B}

{459F183A-D0C2-4F8A-8B5F-C498EFDE366D}

{237D41CC-991E-4308-8986-42ABFB4F7431}

{23D8CA22-0C82-4453-A11E-B3F2B3116DB4}

- Working with a CityJSON file is straightforward. One can open it with the standard library and get going.

- But you need to know the schema well.

- And you need to write everything from scratch.

That is why we are developing cjio.

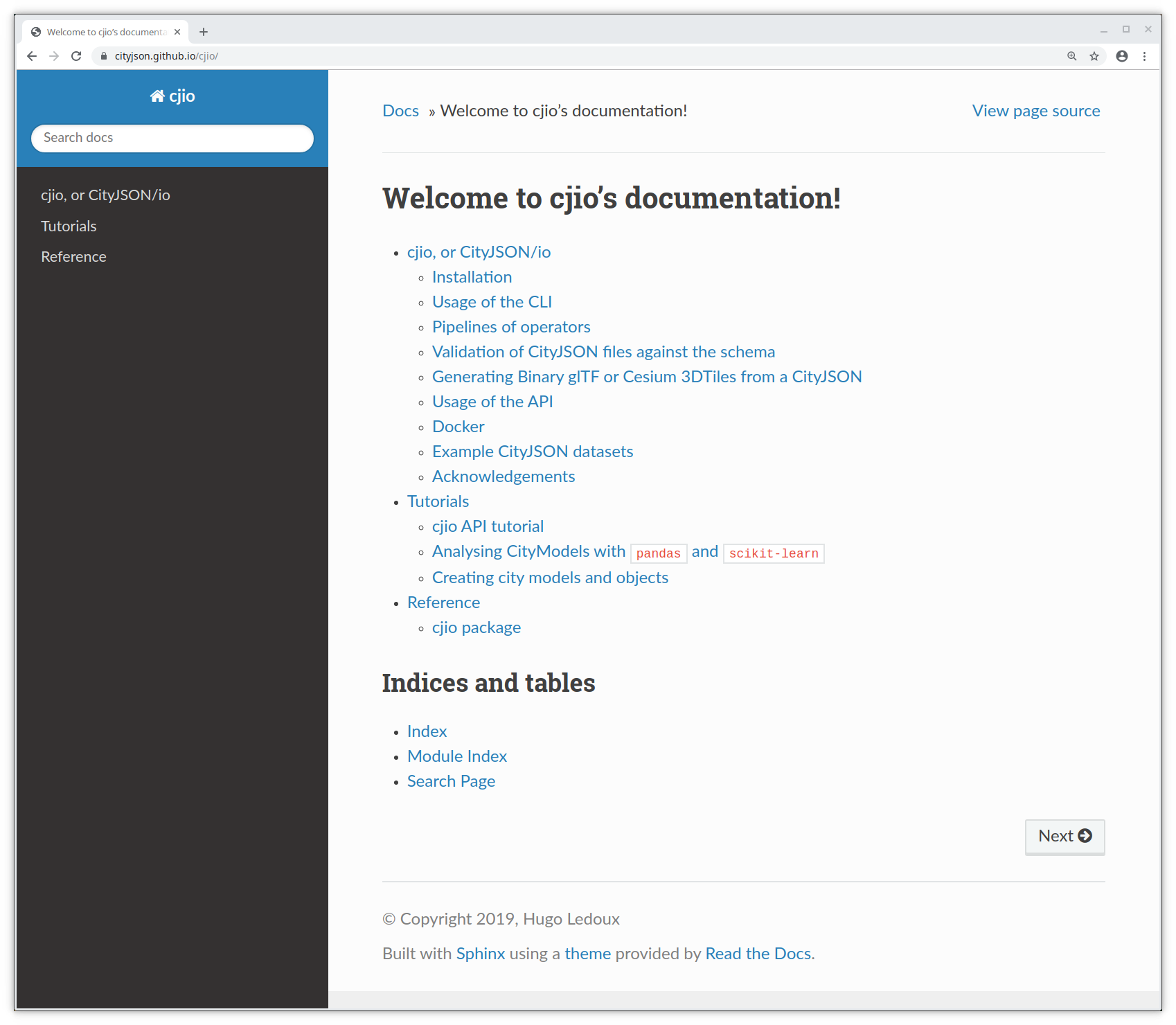

cjio is how we eat what we cook

Aims to help to actually work with and analyse 3D city models, and extract more value from them. Instead of letting them gather dust in some governmental repository.

cjio has a (quite) stable CLI¶

$ cjio city_model.json reproject 2056 export --format glb /out/model.glb

and an experimental API¶

from cjio import cityjson

cm = cityjson.load('city_model.json')

cm.get_cityobjects(type='building')

pip install cjio

This notebook is based on the develop branch.

pip install git+https://github.com/tudelft3d/cjio@develop

cjio's CLI¶

! cjio --help

Usage: cjio [OPTIONS] INPUT COMMAND1 [ARGS]... [COMMAND2 [ARGS]...]...

Process and manipulate a CityJSON file, and allow different outputs. The

different operators can be chained to perform several processing in one

step, the CityJSON model goes through the different operators.

To get help on specific command, eg for 'validate':

cjio validate --help

Usage examples:

cjio example.json info validate

cjio example.json assign_epsg 7145 remove_textures export output.obj

cjio example.json subset --id house12 save out.json

Options:

--version Show the version and exit.

--ignore_duplicate_keys Load a CityJSON file even if some City Objects have

the same IDs (technically invalid file)

--help Show this message and exit.

Commands:

assign_epsg Assign a (new) EPSG.

clean Clean = remove_duplicate_vertices +...

compress Compress a CityJSON file, ie stores its...

decompress Decompress a CityJSON file, ie remove the...

export Export the CityJSON to another format.

extract_lod Extract only one LoD for a dataset.

info Output info in simple JSON.

locate_textures Output the location of the texture files.

merge Merge the current CityJSON with others.

partition Partition the city model into tiles.

remove_duplicate_vertices Remove duplicate vertices a CityJSON file.

remove_materials Remove all materials from a CityJSON file.

remove_orphan_vertices Remove orphan vertices a CityJSON file.

remove_textures Remove all textures from a CityJSON file.

reproject Reproject the CityJSON to a new EPSG.

save Save the city model to a CityJSON file.

subset Create a subset of a CityJSON file.

translate Translate the file by its (-minx, -miny,...

update_bbox Update the bbox of a CityJSON file.

update_textures Update the location of the texture files.

upgrade_version Upgrade the CityJSON to the latest version.

validate Validate the CityJSON file: (1) against its...

! cjio data/rotterdam_subset.json info

Parsing data/rotterdam_subset.json

{

"cityjson_version": "1.0",

"epsg": 7415,

"bbox": [

90454.18900000001,

435614.88,

0.0,

91002.41900000001,

436048.217,

18.29

],

"transform/compressed": true,

"cityobjects_total": 16,

"cityobjects_present": [

"Building"

],

"materials": false,

"textures": true

}

! cjio data/rotterdam_subset.json validate

Parsing data/rotterdam_subset.json ===== Validation (with official CityJSON schemas) ===== -- Validating the syntax of the file (using the schemas 1.0.0) -- Validating the internal consistency of the file (see docs for list) --Vertex indices coherent --Specific for CityGroups --Semantic arrays coherent with geometry --Root properties --Empty geometries --Duplicate vertices --Orphan vertices --CityGML attributes ===== File is valid File has warnings --- WARNINGS --- WARNING: attributes 'TerrainHeight' not in CityGML schema (16 CityObjects have this warning) WARNING: attributes 'bron_tex' not in CityGML schema (16 CityObjects have this warning) WARNING: attributes 'voll_tex' not in CityGML schema (16 CityObjects have this warning) WARNING: attributes 'bron_geo' not in CityGML schema (16 CityObjects have this warning) WARNING: attributes 'status' not in CityGML schema (16 CityObjects have this warning) =====================================

! cjio data/rotterdam_subset.json \

subset --exclude --id "{CD98680D-A8DD-4106-A18E-15EE2A908D75}" \

merge data/rotterdam_one.json \

reproject 2056 \

save data/test_rotterdam.json

Parsing data/rotterdam_subset.json Subset of CityJSON Merging files Reproject to EPSG:2056 [####################################] 100% Saving CityJSON to a file /home/balazs/Reports/talk_cjio_foss4g_2019/data/test_rotterdam.json

The CLI was first, no plans for API

Works with whole city model only

Functions for the CLI work with the JSON directly, passing it along

Simple and effective architecture

cjio's API¶

Allow read --> explore --> modify --> write iteration

Work with CityObjects and their parts

Functions for common operations

Inspired by the tidyverse from the R ecosystem

import os

from copy import deepcopy

from cjio import cityjson

from shapely.geometry import Polygon

import matplotlib.pyplot as plt

plt.close('all')

from sklearn.preprocessing import FunctionTransformer

from sklearn import cluster

import numpy as np

In the following we work with a subset of the 3D city model of Rotterdam

Load a CityJSON¶

The load() method loads a CityJSON file into a CityJSON object.

path = os.path.join('data', 'rotterdam_subset.json')

cm = cityjson.load(path)

print(type(cm))

<class 'cjio.cityjson.CityJSON'>

Using the CLI commands in the API¶

You can use any of the CLI commands on a CityJSON object

However, not all CLI commands are mapped 1-to-1 to CityJSON methods

And we haven't harmonized the CLI and the API yet.

cm.validate()

-- Validating the syntax of the file (using the schemas 1.0.0) -- Validating the internal consistency of the file (see docs for list) --Vertex indices coherent --Specific for CityGroups --Semantic arrays coherent with geometry --Root properties --Empty geometries --Duplicate vertices --Orphan vertices --CityGML attributes

(True, False, [], ["WARNING: attributes 'TerrainHeight' not in CityGML schema", '\t(16 CityObjects have this warning)', "WARNING: attributes 'bron_tex' not in CityGML schema", '\t(16 CityObjects have this warning)', "WARNING: attributes 'voll_tex' not in CityGML schema", '\t(16 CityObjects have this warning)', "WARNING: attributes 'bron_geo' not in CityGML schema", '\t(16 CityObjects have this warning)', "WARNING: attributes 'status' not in CityGML schema", '\t(16 CityObjects have this warning)'])

Explore the city model¶

Print the basic information about the city model. Note that print() returns the same information as the info command in the CLI.

print(cm)

{

"cityjson_version": "1.0",

"epsg": 7415,

"bbox": [

90454.18900000001,

435614.88,

0.0,

91002.41900000001,

436048.217,

18.29

],

"transform/compressed": true,

"cityobjects_total": 16,

"cityobjects_present": [

"Building"

],

"materials": false,

"textures": true

}

Getting objects from the model¶

Get CityObjects by their type, or a list of types. Also by their IDs.

Note that get_cityobjects() == cm.cityobjects

buildings = cm.get_cityobjects(type='building')

# both Building and BuildingPart objects

buildings_parts = cm.get_cityobjects(type=['building', 'buildingpart'])

r_ids = ['{C9D4A5CF-094A-47DA-97E4-4A3BFD75D3AE}',

'{6271F75F-E8D8-4EE4-AC46-9DB02771A031}']

buildings_ids = cm.get_cityobjects(id=r_ids)

Properties and geometry of objects¶

b01 = buildings_ids['{C9D4A5CF-094A-47DA-97E4-4A3BFD75D3AE}']

print(b01)

{

"id": "{C9D4A5CF-094A-47DA-97E4-4A3BFD75D3AE}",

"type": "Building",

"attributes": {

"TerrainHeight": 3.03,

"bron_tex": "UltraCAM-X 10cm juni 2008",

"voll_tex": "complete",

"bron_geo": "Lidar 15-30 punten - nov. 2008",

"status": "1"

},

"children": null,

"parents": null,

"geometry_type": [

"MultiSurface"

],

"geometry_lod": [

2

],

"semantic_surfaces": [

"WallSurface",

"RoofSurface",

"GroundSurface"

]

}

b01.attributes

{'TerrainHeight': 3.03,

'bron_tex': 'UltraCAM-X 10cm juni 2008',

'voll_tex': 'complete',

'bron_geo': 'Lidar 15-30 punten - nov. 2008',

'status': '1'}

CityObjects can have children and parents

b01.children is None and b01.parents is None

True

CityObject geometry is a list of Geometry objects. That is because a CityObject can have multiple geometry representations in different levels of detail, eg. a geometry in LoD1 and a second geometry in LoD2.

b01.geometry

[<cjio.models.Geometry at 0x7f031ade39e8>]

geom = b01.geometry[0]

print("{}, lod {}".format(geom.type, geom.lod))

MultiSurface, lod 2

Geometry boundaries and Semantic Surfaces¶

On the contrary to a CityJSON file, the geometry boundaries are dereferenced when working with the API. This means that the vertex coordinates are included in the boundary definition, not only the vertex indices.

cjio doesn't provide specific geometry classes (yet), eg. MultiSurface or Solid class. If you are working with the geometry boundaries, you need to the geometric operations yourself, or cast the boundary to a geometry-class of some other library. For example shapely if 2D is enough.

Vertex coordinates are kept 'as is' on loading the geometry. CityJSON files are often compressed and coordinates are shifted and transformed into integers so probably you'll want to transform them back. Otherwise geometry operations won't make sense.

transformation_object = cm.transform

geom_transformed = geom.transform(transformation_object)

geom_transformed.boundaries[0][0]

[(90988.79100000001, 435638.657, 10.652000000000001), (90987.429, 435642.77, 10.652000000000001), (90986.46900000001, 435641.09, 10.652000000000001), (90985.781, 435640.846, 10.652000000000001), (90986.801, 435637.955, 10.652000000000001)]

But it might be easier to transform (decompress) the whole model on load.

cm_transformed = cityjson.load(path, transform=True)

print(cm_transformed)

{

"cityjson_version": "1.0",

"epsg": 7415,

"bbox": [

90454.18900000001,

435614.88,

0.0,

91002.41900000001,

436048.217,

18.29

],

"transform/compressed": false,

"cityobjects_total": 16,

"cityobjects_present": [

"Building"

],

"materials": false,

"textures": true

}

Semantic Surfaces are stored in a similar fashion as in a CityJSON file, in the surfaces attribute of a Geometry object.

geom.surfaces

{0: {'surface_idx': [[0], [1], [2]], 'type': 'RoofSurface'},

1: {'surface_idx': [[3]], 'type': 'GroundSurface'},

2: {'surface_idx': [[4],

[5],

[6],

[7],

[8],

[9],

[10],

[11],

[12],

[13],

[14],

[15],

[16],

[17],

[18],

[19]],

'type': 'WallSurface'}}

surfaces does not store geometry boundaries, just references (surface_idx). Use the get_surface_boundaries() method to obtain the boundary-parts connected to the semantic surface.

roofs = geom.get_surfaces(type='roofsurface')

roofs

{0: {'surface_idx': [[0], [1], [2]], 'type': 'RoofSurface'}}

roof_boundaries = []

for r in roofs.values():

roof_boundaries.append(geom.get_surface_boundaries(r))

roof_boundaries

[[[[[579471, 198217, 10652],

[578109, 202330, 10652],

[577149, 200650, 10652],

[576461, 200406, 10652],

[577481, 197515, 10652]]],

[[[580840, 194082, 15211],

[579471, 198217, 15211],

[577481, 197515, 15211],

[576461, 200406, 15211],

[572239, 198909, 15211],

[571839, 200119, 15211],

[571503, 201071, 15211],

[566651, 199359, 15211],

[569801, 190223, 15211],

[573253, 191430, 15211],

[574658, 191922, 15211]]],

[[[565589, 202439, 11036],

[566651, 199359, 11036],

[571503, 201071, 11036],

[571839, 200119, 11036],

[573299, 200640, 11036],

[572089, 204029, 11036],

[570629, 203440, 11036],

[570379, 204150, 11036]]]]]

Assigning attributes to Semantic Surfaces¶

- extract the surfaces,

- make the changes on the surface,

- overwrite the CityObjects with the changes.

cm_copy = deepcopy(cm)

new_cos = {}

for co_id, co in cm.cityobjects.items():

new_geoms = []

for geom in co.geometry:

# Only LoD >= 2 models have semantic surfaces

if geom.lod >= 2.0:

# Extract the surfaces

roofsurfaces = geom.get_surfaces('roofsurface')

for i, rsrf in roofsurfaces.items():

# Change the attributes

if 'attributes' in rsrf.keys():

rsrf['attributes']['cladding'] = 'tiles'

else:

rsrf['attributes'] = {}

rsrf['attributes']['cladding'] = 'tiles'

geom.surfaces[i] = rsrf

new_geoms.append(geom)

else:

# Use the unchanged geometry

new_geoms.append(geom)

co.geometry = new_geoms

new_cos[co_id] = co

cm_copy.cityobjects = new_cos

print(cm_copy.cityobjects['{C9D4A5CF-094A-47DA-97E4-4A3BFD75D3AE}'])

{

"id": "{C9D4A5CF-094A-47DA-97E4-4A3BFD75D3AE}",

"type": "Building",

"attributes": {

"TerrainHeight": 3.03,

"bron_tex": "UltraCAM-X 10cm juni 2008",

"voll_tex": "complete",

"bron_geo": "Lidar 15-30 punten - nov. 2008",

"status": "1"

},

"children": null,

"parents": null,

"geometry_type": [

"MultiSurface"

],

"geometry_lod": [

2

],

"semantic_surfaces": [

"WallSurface",

"RoofSurface",

"GroundSurface"

]

}

Create new Semantic Surfaces¶

The process is similar as previously. However, in this example we create new SemanticSurfaces that hold the values which we compute from the geometry. The input city model has a single semantic "WallSurface", without attributes, for all the walls of a building. The snippet below illustrates how to separate surfaces and assign the semantics to them.

new_cos = {}

for co_id, co in cm_copy.cityobjects.items():

new_geoms = []

for geom in co.geometry:

if geom.lod >= 2.0:

max_id = max(geom.surfaces.keys())

old_ids = []

for w_i, wsrf in geom.get_surfaces('wallsurface').items():

old_ids.append(w_i)

del geom.surfaces[w_i]

boundaries = geom.get_surface_boundaries(wsrf)

for j, boundary_geometry in enumerate(boundaries):

# The original geometry has the same Semantic for all wall,

# but we want to divide the wall surfaces by their orientation,

# thus we need to have the correct surface index

surface_index = wsrf['surface_idx'][j]

new_srf = {

'type': wsrf['type'],

'surface_idx': surface_index

}

for multisurface in boundary_geometry:

# Do any operation here

x, y, z = multisurface[0]

if j % 2 > 0:

orientation = 'north'

else:

orientation = 'south'

# Add the new attribute to the surface

if 'attributes' in wsrf.keys():

wsrf['attributes']['orientation'] = orientation

else:

wsrf['attributes'] = {}

wsrf['attributes']['orientation'] = orientation

new_srf['attributes'] = wsrf['attributes']

# if w_i in geom.surfaces.keys():

# del geom.surfaces[w_i]

max_id = max_id + 1

geom.surfaces[max_id] = new_srf

new_geoms.append(geom)

else:

# If LoD1, just add the geometry unchanged

new_geoms.append(geom)

co.geometry = new_geoms

new_cos[co_id] = co

cm_copy.cityobjects = new_cos

Analysing CityModels¶

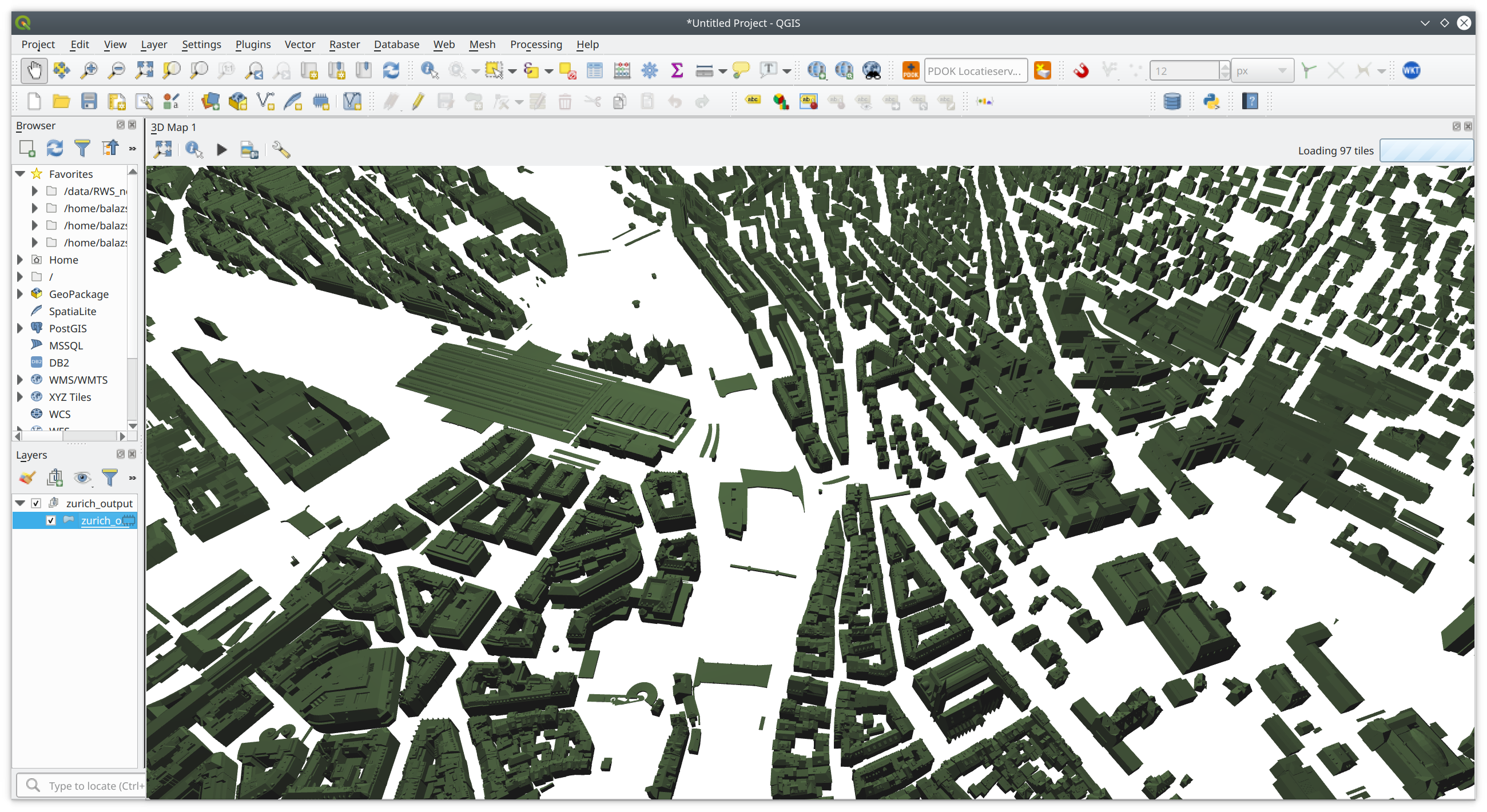

In the following I show how to compute some attributes from CityObject geometry and use these attributes as input for machine learning. For this we use the LoD2 model of Zürich.

Download the Zürich data set from https://3d.bk.tudelft.nl/opendata/cityjson/1.0/Zurich_Building_LoD2_V10.json

path = os.path.join('data', 'zurich.json')

zurich = cityjson.load(path, transform=True)

A simple geometry function¶

Here is a simple geometry function that computes the area of the groundsurface (footprint) of buildings in the model. It also show how to cast surfaces, in this case the ground surface, to Shapely Polygons.

def compute_footprint_area(co):

"""Compute the area of the footprint"""

footprint_area = 0

for geom in co.geometry:

# only LoD2 (or higher) objects have semantic surfaces

if geom.lod >= 2.0:

footprints = geom.get_surfaces(type='groundsurface')

# there can be many surfaces with label 'groundsurface'

for i,f in footprints.items():

for multisurface in geom.get_surface_boundaries(f):

for surface in multisurface:

# cast to Shapely polygon

shapely_poly = Polygon(surface)

footprint_area += shapely_poly.area

return footprint_area

Compute new attributes¶

Then we need to loop through the CityObjects and update add the new attributes. Note that the attributes CityObject attribute is just a dictionary.

Thus we compute the number of vertices of the CityObject and the area of is footprint. Then we going to cluster these two variables. This is completely arbitrary excercise which is simply meant to illustrate how to transform a city model into machine-learnable features.

for co_id, co in zurich.cityobjects.items():

co.attributes['nr_vertices'] = len(co.get_vertices())

co.attributes['fp_area'] = compute_footprint_area(co)

zurich.cityobjects[co_id] = co

It is possible to export the city model into a pandas DataFrame. Note that only the CityObject attributes are exported into the dataframe, with CityObject IDs as the index of the dataframe. Thus if you want to export the attributes of SemanticSurfaces for example, then you need to add them as CityObject attributes.

The function below illustrates this operation.

def assign_cityobject_attribute(cm):

"""Copy the semantic surface attributes to CityObject attributes.

Returns a copy of the citymodel.

"""

new_cos = {}

cm_copy = deepcopy(cm)

for co_id, co in cm.cityobjects.items():

for geom in co.geometry:

for srf in geom.surfaces.values():

if 'attributes' in srf:

for attr,a_v in srf['attributes'].items():

if (attr not in co.attributes) or (co.attributes[attr] is None):

co.attributes[attr] = [a_v]

else:

co.attributes[attr].append(a_v)

new_cos[co_id] = co

cm_copy.cityobjects = new_cos

return cm_copy

df = zurich.to_dataframe()

df.head()

| creationDate | Geomtype | nr_vertices | fp_area | class | Herkunft | QualitaetStatus | FileCreationDate | Region | GebaeudeStatus | |

|---|---|---|---|---|---|---|---|---|---|---|

| UUID_93fc5bae-4446-4336-9ff8-6679ebfdfde3 | 2017-01-23 | 1.0 | 24 | 65.209763 | NaN | NaN | NaN | NaN | NaN | NaN |

| UUID_c9884c4e-1cac-47f5-b88b-6fb074c0ae50 | 2017-01-23 | NaN | 0 | 0.000000 | BB01 | EE_LB_2007 | 1.0 | 2012-02-23 | 2.0 | 1.0 |

| UUID_a4a09780-153f-4385-ad19-3a92a6c4eec4 | 2017-01-23 | 1.0 | 38 | 20.784309 | NaN | NaN | NaN | NaN | NaN | NaN |

| UUID_ba0bb815-5276-4e35-b4c1-878cbf6ba934 | 2017-01-23 | NaN | 0 | 0.000000 | BB07 | EE_LB_2007 | 1.0 | 2012-02-23 | 2.0 | 1.0 |

| UUID_bb1835bc-7437-453f-ac08-885de0503aaa | 2017-01-23 | 1.0 | 87 | 69.363823 | NaN | NaN | NaN | NaN | NaN | NaN |

In order to have a nicer distribution of the data, we remove the missing values and apply a log-transform on the two variables. Note that the FuntionTransformer.transform transforms a DataFrame to a numpy array that is ready to be used in scikit-learn. The details of a machine learning workflow is beyond the scope of this tutorial however.

df_subset = df[df['Geomtype'].notnull() & df['fp_area'] > 0.0].loc[:, ['nr_vertices', 'fp_area']]

transformer = FunctionTransformer(np.log, validate=True)

df_logtransform = transformer.transform(df_subset)

fig = plt.figure()

ax = fig.add_subplot(1, 1, 1)

ax.scatter(df_logtransform[:,0], df_logtransform[:,1], alpha=0.3, s=1.0)

plt.show()

def plot_model_results(model, data):

fig = plt.figure()

ax = fig.add_subplot(1, 1, 1)

colormap = np.array(['lightblue', 'red', 'lime', 'blue','black'])

ax.scatter(data[:,0], data[:,1], c=colormap[model.labels_], s=10, alpha=0.5)

ax.set_xlabel('Number of vertices [log]')

ax.set_ylabel('Footprint area [log]')

plt.title(f"DBSCAN clustering with estimated {len(set(model.labels_))} clusters")

plt.show()

Since we transformed our DataFrame, we can fit any model in scikit-learn. I use DBSCAN because I wanted to find the data points on the fringes of the central cluster.

%matplotlib notebook

model = cluster.DBSCAN(eps=0.2).fit(df_logtransform)

plot_model_results(model, df_logtransform)

# merge the cluster labels back to the data frame

df_subset['dbscan'] = model.labels_

Save the results back to CityJSON¶

And merge the DataFrame with cluster labels back to the city model.

for co_id, co in zurich.cityobjects.items():

if co_id in df_subset.index:

ml_results = dict(df_subset.loc[co_id])

else:

ml_results = {'nr_vertices': 'nan', 'fp_area': 'nan', 'dbscan': 'nan'}

new_attrs = {**co.attributes, **ml_results}

co.attributes = new_attrs

zurich.cityobjects[co_id] = co

At the end, the save() method saves the edited city model into a CityJSON file.

path_out = os.path.join('data', 'zurich_output.json')

cityjson.save(zurich, path_out)

And view the results in QGIS again¶

However, you'll need to set up the styling based on the cluster labels by hand.

Other software¶

Online CityJSON viewer¶

QGIS plugin¶

Azul¶

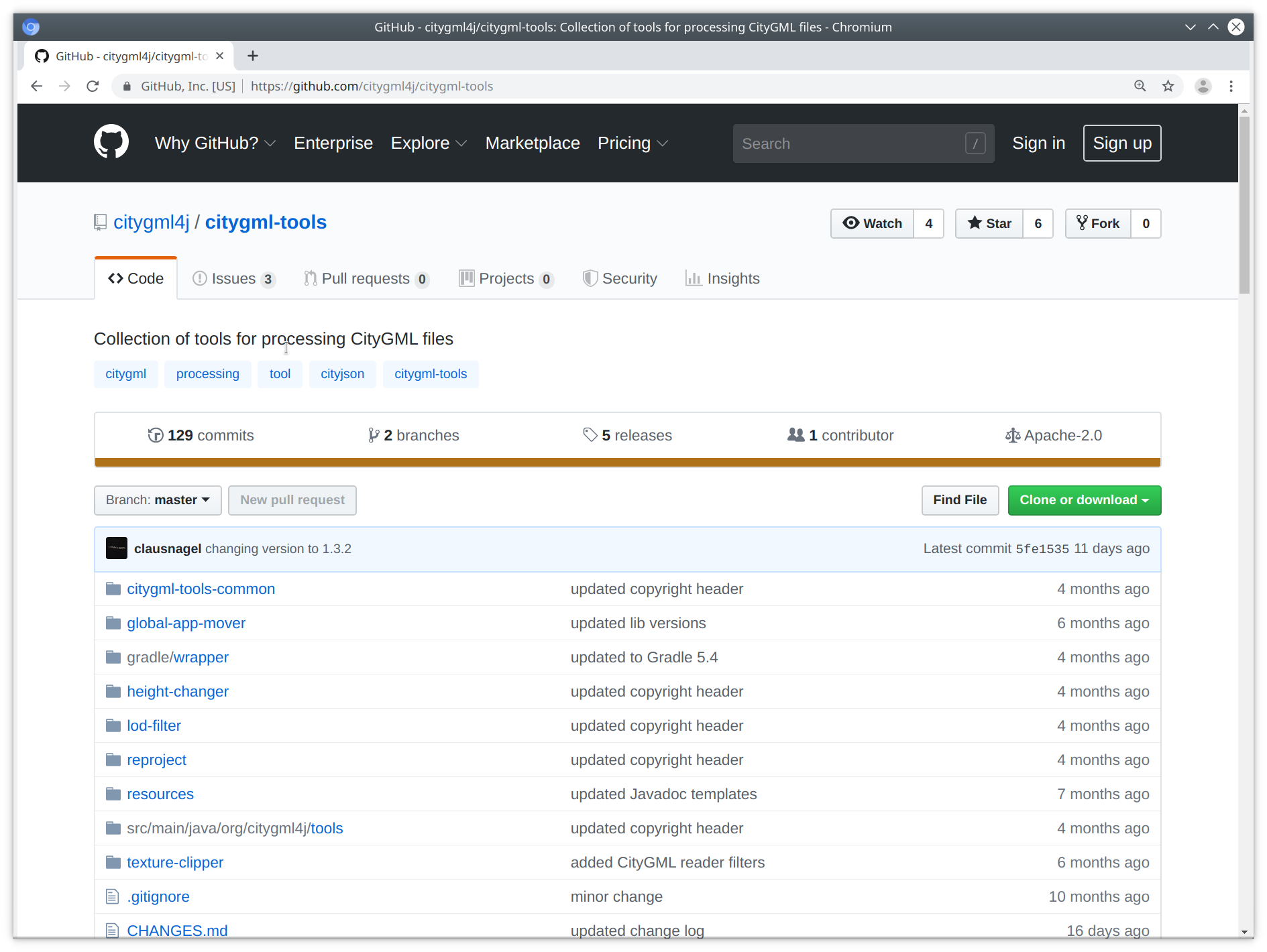

Full conversion CityGML <--> CityJSON¶

Thank you!¶

Balázs Dukai

b.dukai@tudelft.nl

@BalazsDukai

A few links¶

Repo of this talk: https://github.com/balazsdukai/foss4g2019

QGIS plugin: github.com/tudelft3d/cityjson-qgis-plugin

Azul – CityJSON viewer on Mac – check the AppStore