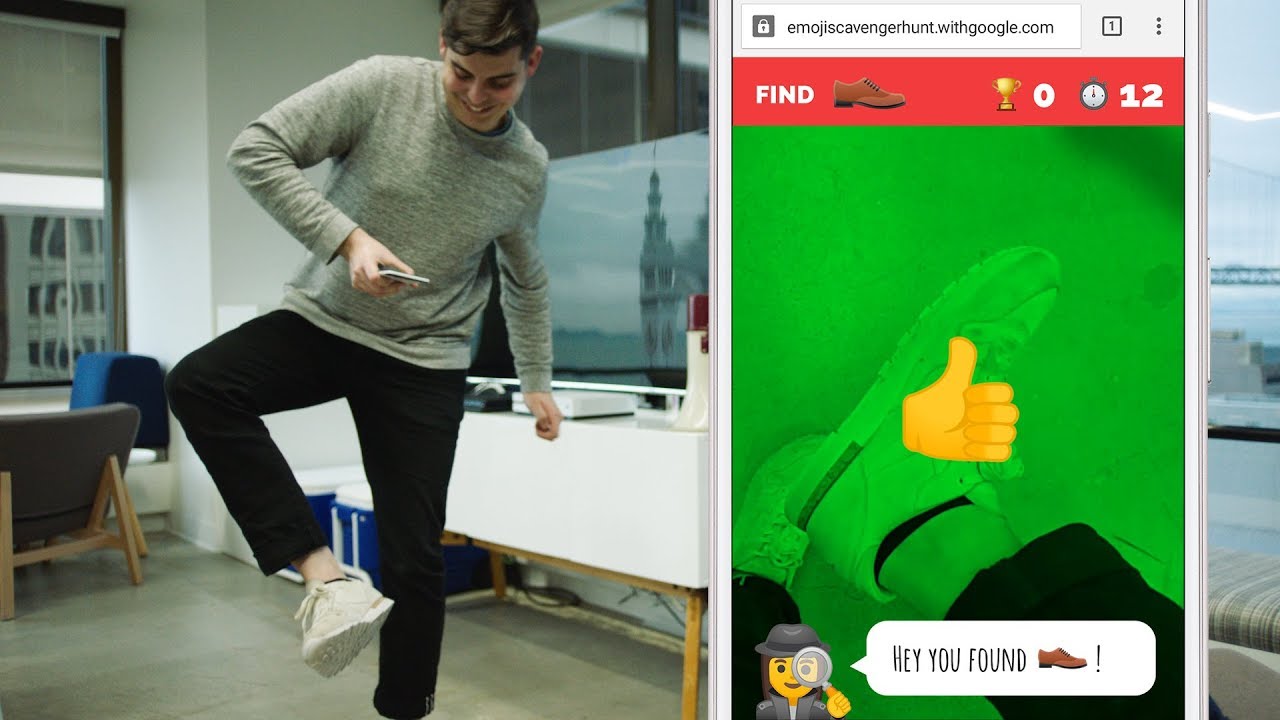

Scavenger hunt¶

"Identify emojis in the real world with your phone’s camera" - Experiment / YouTube Presentation

Load libraries¶

In [ ]:

import torch

print("Torch version:", torch.__version__)

import torchvision

print("Torchvision version:", torchvision.__version__)

import numpy as np

print("Numpy version:", np.__version__)

import matplotlib

print("Matplotlib version:", matplotlib.__version__)

import PIL

print("PIL version:", PIL.__version__)

import IPython

print("IPython version:", IPython.__version__)

import cv2

print('OpenCV version:', cv2.__version__)

In [ ]:

# Setup Matplotlib

%matplotlib inline

#%config InlineBackend.figure_format = 'retina' # If you have a retina screen

import matplotlib.pyplot as plt

Pretrained models¶

In [ ]:

# Load a pretrained model

model = torchvision.models.resnet18(pretrained=True)

# Set model in "evaluation" mode

_ = model.eval()

In [ ]:

from torchvision import transforms

# Define the input pipeline
pipeline = transforms.Compose([
    transforms.ToPILImage(),  # Convert webcam images to PIL format
    transforms.ToTensor(),  # Convert to PyTorch Tensor with values in [0, 1]
    transforms.Normalize(  # Normalize with the ImageNet channel means and stds
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225]
    )
])
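To make the last two steps of the pipeline concrete, here is a minimal sketch in plain Python of what `ToTensor` followed by `Normalize` does to a single RGB pixel: the 8-bit values are scaled to [0, 1], then each channel has its mean subtracted and is divided by its standard deviation. The helper name `normalize_pixel` is hypothetical and only for illustration; the real transforms operate on whole image tensors.

```python
# Per-channel statistics, same values as in the pipeline above
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]


def normalize_pixel(rgb):
    """Hypothetical helper: mimic ToTensor + Normalize for one 8-bit RGB pixel."""
    scaled = [value / 255.0 for value in rgb]  # ToTensor: [0, 255] -> [0, 1]
    # Normalize: subtract the channel mean, divide by the channel std
    return [(x - m) / s for x, m, s in zip(scaled, MEAN, STD)]


# A pixel close to the channel means ends up close to zero in every channel
print([round(v, 3) for v in normalize_pixel((124, 116, 104))])
```

After this step, a channel value of 0 means "equal to the dataset mean", which is the input range the pretrained weights were trained on.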

In [ ]:

import json

# Load classes

with open('imagenet-classes.json') as f:  # Close the file once it is read
    imagenet_classes = json.load(f)

# Function to transform label(s) to id(s)

to_id = {label: int(i) for i, label in imagenet_classes.items()} # label -> id

to_ids = lambda labels: [to_id[label] for label in labels] # labels -> ids

# Define items

items = {
    'cup': ['cup', 'goblet', 'coffee_mug', 'espresso', 'eggnog', 'red_wine', 'beer_glass'],
    'clock': ['digital_clock', 'digital_watch', 'analog_clock', 'wall_clock', 'stopwatch'],
    'bottle': ['water_bottle', 'pop_bottle', 'wine_bottle', 'beer_bottle']
}

items_names = list(items.keys())

# The goal is to find all items

was_found = {c: False for c in items.keys()}
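The mapping above can be tried out without the JSON file. The sketch below assumes, as the `int(i)` conversion suggests, that `imagenet-classes.json` maps string ids to labels. The class table, its ids, and the `toy_` names are made up for illustration and are not the real ImageNet indices.

```python
# Hypothetical stand-in for imagenet-classes.json: string id -> label
# (ids are invented for this example)
toy_classes = {"0": "cup", "1": "coffee_mug", "2": "wall_clock", "3": "water_bottle"}

# Invert the table: label -> integer id
toy_to_id = {label: int(i) for i, label in toy_classes.items()}


def toy_to_ids(labels):
    """Translate a list of labels into a list of integer ids."""
    return [toy_to_id[label] for label in labels]


# Each item groups several related labels
toy_items = {
    "cup": ["cup", "coffee_mug"],
    "clock": ["wall_clock"],
}

for name, labels in toy_items.items():
    print(name, "->", toy_to_ids(labels))
```

Grouping several labels under one item lets a coffee mug or a goblet both count as a "cup".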

Test with webcam feed¶

In [ ]:

from IPython import display
import time

# Connect to webcam
if 'webcam' not in locals() or webcam is None:
    webcam = cv2.VideoCapture(0)

try:
    # Try to read from the webcam
    webcam_found, _ = webcam.read()

    if webcam_found:
        # Create figure
        fig, (ax1, ax2) = plt.subplots(nrows=1, ncols=2, figsize=(6, 2))

        for i in range(1000):
            # Take a picture with the webcam
            frame_ok, image = webcam.read()
            if not frame_ok:
                break  # Stop if the webcam no longer delivers frames

            # Process it
            image = cv2.resize(image, (224, 224))  # Resize to fit the model
            image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)  # To RGB

            # Classify image (no gradients needed for inference)
            image_pytorch = pipeline(image)
            with torch.no_grad():
                output = model(image_pytorch.unsqueeze(0))  # Add batch dimension
            all_probs = torch.nn.functional.softmax(output, dim=1).view(-1).numpy()

            # Get the best probability for each of our items
            probs = [all_probs[to_ids(labels)].max() for labels in items.values()]

            # Did we find an object?
            if max(probs) > 0.2:
                found_class = items_names[np.argmax(probs)]
                was_found[found_class] = True

            # Plot the probabilities and the image
            ax1.cla()
            ax1.barh(np.arange(len(items)), probs, height=0.5, tick_label=['{} [{}]'.format(c, '✓' if done else '✗') for c, done in was_found.items()])
            ax1.set_xlim(0, 1)
            ax2.cla()
            ax2.imshow(image, aspect='auto')
            ax2.set_title('webcam')

            # Set title
            if np.all(list(was_found.values())):
                ax1.set_title(r'Bravo \°$\smile$°/ !')
            else:
                ax1.set_title('Find a ..')

            # Jupyter trick
            display.clear_output(wait=True)
            display.display(fig)

            # Rest a bit for CPU
            time.sleep(0.2)

        # Clear output
        display.clear_output()
    else:
        print('Cannot read from webcam, do you have one connected?')

except KeyboardInterrupt:
    # Clear output
    display.clear_output()

finally:
    # Disconnect webcam
    webcam.release()
    del webcam
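The decision step inside the loop can be isolated from the webcam and the model. The following is a minimal sketch in plain Python, assuming a made-up four-class output: it applies a softmax, takes the best class probability per item, and reports an item only if that probability exceeds the 0.2 threshold used above. The names `softmax`, `best_item`, and the toy ids are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of raw scores."""
    shift = max(logits)
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def best_item(all_probs, item_ids, threshold=0.2):
    """Return the item whose best class probability exceeds the threshold, else None."""
    # For each item, keep the highest probability among its class ids
    scores = {name: max(all_probs[i] for i in ids) for name, ids in item_ids.items()}
    name = max(scores, key=scores.get)
    return name if scores[name] > threshold else None


# Hypothetical 4-class output and item groups (ids are made up)
logits = [2.0, 0.1, 0.3, -1.0]
item_ids = {"cup": [0, 1], "clock": [2]}
print(best_item(softmax(logits), item_ids))
```

Taking the maximum over an item's labels, rather than the sum, mirrors the `.max()` call in the loop: one confident label is enough to mark the item as found.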