Identifying Location Trends in Foursquare

from IPython.display import HTML

HTML('<iframe src="http://en.wikipedia.org/wiki/Foursquare" width=100% height=350></iframe>')

Task:

- Find trending locations on Foursquare by comparing metrics at a later time (t1) with the same metrics at an earlier time (t0)

- Find metrics that could be useful

- Map the data

Initialization

First, you need credentials to use the Foursquare API with reasonable rate limits.

If you have an access token, you can use that; otherwise, register an app and use its client id and secret for the following steps: https://de.foursquare.com/developers/register

import foursquare

import pandas as pd

#ACCESS_TOKEN = ""

#client = foursquare.Foursquare(access_token=ACCESS_TOKEN)

CLIENT_ID = ""

CLIENT_SECRET = ""

client = foursquare.Foursquare(client_id=CLIENT_ID, client_secret=CLIENT_SECRET)

Fetching the Foursquare location links

- Starting at Munich Marienplatz as seed venue

- Fetching the 5 next venues (from API nextvenues, see: https://developer.foursquare.com/docs/venues/nextvenues)

- For each of the 5 venues fetch the 5 next venues

- Repeat until saturation (no new locations)
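The steps above amount to a breadth-first crawl over the "next venue" relation. Here is a minimal sketch of that idea, assuming a hypothetical in-memory table `TOY_VENUES` with invented ids and coordinates in place of the real API calls; the helper names `in_bbox` and `crawl` are illustrative as well.

```python
# Toy stand-in for the API: venue id -> (lat, lng, ids of the next venues).
# All ids and coordinates here are invented for illustration.
TOY_VENUES = {
    "A": (48.137, 11.575, ["B", "C"]),
    "B": (48.140, 11.556, ["A", "D"]),
    "C": (48.353, 11.781, ["A"]),
    "D": (52.520, 13.405, ["A"]),  # lies outside the Munich bounding box
}

BBOX = [11.109872, 47.815652, 12.068588, 48.397136]  # [min_lng, min_lat, max_lng, max_lat]


def in_bbox(lat, lng, bbox):
    """Return True if the point lies strictly inside the bounding box."""
    return bbox[1] < lat < bbox[3] and bbox[0] < lng < bbox[2]


def crawl(seeds, max_depth):
    """Breadth-first crawl: follow next-venue links until no new venue turns up."""
    to_crawl = list(seeds)
    done = set()
    links = []
    for _ in range(max_depth):
        if not to_crawl:  # saturation: the previous step found nothing new
            break
        new_crawl = []
        for v in to_crawl:
            for nv in TOY_VENUES[v][2]:
                lat, lng, _unused = TOY_VENUES[nv]
                if not in_bbox(lat, lng, BBOX):
                    continue  # ignore venues outside the area of interest
                links.append((v, nv))
                if nv not in done and nv not in to_crawl and nv not in new_crawl:
                    new_crawl.append(nv)
            done.add(v)
        to_crawl = new_crawl
    return done, links


crawled, links = crawl(["A"], max_depth=25)
```

In this toy run the crawl stops after two steps, because venue "D" is filtered out by the bounding box and every other venue has already been visited.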

bbox = [11.109872,47.815652,12.068588,48.397136] # bounding box for Munich: [min_lng, min_lat, max_lng, max_lat]

# bbox = [13.088400,52.338120,13.761340,52.675499] # bounding box for Berlin

#bbox = [5.866240,47.270210,15.042050,55.058140] # bounding box for Germany

new_crawl = [] # list of locations to be crawled

done = [] # list of crawled locations

links = [] # list of tuples that represent links between locations

venues = pd.DataFrame() # table of locations: venue id (index) => meta-data on location

Set seed values for Marienplatz, Airport and Central Station.

Depth is the maximum number of crawl iterations; each iteration crawls the venues discovered in the previous one.

to_crawl = ["4ade0ccef964a520246921e3", "4cbd1bfaf50e224b160503fc", "4b0674e2f964a520f4eb22e3"]

depth = 25

for i in range(depth):
    new_crawl = []  # venues discovered in this step; they are crawled in the next one
    print("Step " + str(i) + ": " + str(len(venues)) + " locations and " + str(len(links)) + " links. " + str(len(to_crawl)) + " venues to go.")
    for v in to_crawl:
        # Note: `in venues` tests the column labels, not the index, so this test always
        # passes and a venue can be appended more than once; duplicates are dropped after the crawl.
        if v not in venues:
            res = client.venues(v)
            venues = venues.append(pd.DataFrame({"name":res["venue"]["name"],"users":res["venue"]["stats"]["usersCount"],
                "checkins":res["venue"]["stats"]["checkinsCount"], "lat":res["venue"]["location"]["lat"],
                "lng":res["venue"]["location"]["lng"]}, index=[v]))
        next_venues = client.venues.nextvenues(v)
        for nv in next_venues['nextVenues']['items']:
            # only keep venues that lie inside the bounding box
            if ((nv["location"]["lat"] > bbox[1]) and (nv["location"]["lat"] < bbox[3]) and
                (nv["location"]["lng"] > bbox[0]) and (nv["location"]["lng"] < bbox[2])):
                if nv["id"] not in venues:
                    venues = venues.append(pd.DataFrame({"name":nv["name"],"users":nv["stats"]["usersCount"],
                        "checkins":nv["stats"]["checkinsCount"], "lat":nv["location"]["lat"],
                        "lng":nv["location"]["lng"]}, index=[nv["id"]]))
                # queue the venue only if it is neither crawled nor already queued
                if (nv["id"] not in done) and (nv["id"] not in to_crawl) and (nv["id"] not in new_crawl):
                    new_crawl.append(nv["id"])
                links.append((v, nv["id"]))
        done.append(v)
    to_crawl = new_crawl

Step 0: 0 locations and 0 links. 3 venues to go.
Step 1: 13 locations and 10 links. 8 venues to go.
Step 2: 59 locations and 48 links. 19 venues to go.
Step 3: 167 locations and 137 links. 14 venues to go.
Step 4: 240 locations and 196 links. 18 venues to go.
Step 5: 327 locations and 265 links. 22 venues to go.
Step 6: 425 locations and 341 links. 25 venues to go.
Step 7: 556 locations and 447 links. 31 venues to go.
Step 8: 720 locations and 580 links. 31 venues to go.
Step 9: 876 locations and 705 links. 39 venues to go.
Step 10: 1046 locations and 836 links. 27 venues to go.
Step 11: 1134 locations and 897 links. 19 venues to go.
Step 12: 1201 locations and 945 links. 12 venues to go.
Step 13: 1232 locations and 964 links. 5 venues to go.
Step 14: 1250 locations and 977 links. 8 venues to go.
Step 15: 1286 locations and 1005 links. 11 venues to go.
Step 16: 1329 locations and 1037 links. 4 venues to go.
Step 17: 1351 locations and 1055 links. 7 venues to go.
Step 18: 1377 locations and 1074 links. 3 venues to go.
Step 19: 1388 locations and 1082 links. 1 venues to go.
Step 20: 1391 locations and 1084 links. 0 venues to go.
Step 21: 1391 locations and 1084 links. 0 venues to go.
Step 22: 1391 locations and 1084 links. 0 venues to go.
Step 23: 1391 locations and 1084 links. 0 venues to go.
Step 24: 1391 locations and 1084 links. 0 venues to go.

Generating the network

We use networkx to build a directed network from the crawled venues (= nodes) and the links between them (= edges). Duplicate venue rows collected during the crawl are dropped first.

venues = venues.reset_index().drop_duplicates(cols='index',take_last=True).set_index('index')

venues.head()

| index | checkins | lat | lng | name | users |

|---|---|---|---|---|---|

| 4cbd1bfaf50e224b160503fc | 244992 | 48.352599 | 11.780992 | München Flughafen "Franz Josef Strauß" (MUC) | 91098 |

| 4b0674e2f964a520f4eb22e3 | 91099 | 48.140547 | 11.555772 | München Hauptbahnhof | 19551 |

| 4b56f6eef964a520ec2028e3 | 1913 | 48.137558 | 11.579466 | Augustiner am Platzl | 1533 |

| 4bbc6329afe1b7136d4d304b | 2979 | 48.135282 | 11.576350 | Biergarten am Viktualienmarkt | 1691 |

| 4b335a36f964a520d51825e3 | 3406 | 48.134848 | 11.575170 | Der Pschorr | 2409 |

labels = venues["name"].to_dict()

import networkx as nx

G = nx.DiGraph()

G.add_nodes_from(venues.index)

for f,t in links:

G.add_edge(f, t)

nx.info(G)

'Name: \nType: DiGraph\nNumber of nodes: 307\nNumber of edges: 1084\nAverage in degree: 3.5309\nAverage out degree: 3.5309'

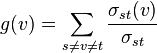

Calculate some useful metrics:

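- Betweenness Centrality: the (normalized) share of shortest paths between all pairs of nodes that pass through this node

- PageRank: the long-run probability that a random walk through the city, following the links from venue to venue, is at this node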
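To make the random-walk reading of PageRank concrete, here is a minimal power-iteration sketch in plain Python. It illustrates the idea on a made-up three-venue graph and is not the networkx implementation; the function name `pagerank_sketch` and the toy graph are assumptions.

```python
def pagerank_sketch(out_links, alpha=0.9, iterations=100):
    """Approximate PageRank by power iteration.

    out_links maps each node to the list of nodes it links to.
    With probability alpha the walker follows a random outgoing link,
    otherwise it jumps to a node chosen uniformly at random.
    """
    nodes = list(out_links)
    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        # Rank held by nodes without outgoing links is spread evenly over all nodes.
        dangling = sum(rank[node] for node in nodes if not out_links[node])
        new_rank = {node: (1.0 - alpha) / n + alpha * dangling / n for node in nodes}
        for node in nodes:
            targets = out_links[node]
            for target in targets:
                new_rank[target] += alpha * rank[node] / len(targets)
        rank = new_rank
    return rank


# Toy graph: both side venues lead to the hub, and the hub leads back to the cafe.
toy = {"hub": ["cafe"], "cafe": ["hub"], "bar": ["hub"]}
ranks = pagerank_sketch(toy)
```

The hub ends up with the highest score because both other venues link to it, while the bar, which nobody links to, only receives the share from random jumps.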

pagerank = nx.pagerank(G,alpha=0.9)

betweenness = nx.betweenness_centrality(G)

venues['pagerank'] = [pagerank[n] for n in venues.index]

venues['betweenness'] = [betweenness[n] for n in venues.index]

Plot some stats

%pylab inline

import matplotlib.pyplot as plt

fig = plt.figure(figsize=(8, 6), dpi=150)

ax = fig.add_subplot(111)

venues.sort('users', inplace=True)

venues.set_index('name')[-20:].users.plot(kind='barh')

ax.set_ylabel('Location')

ax.set_xlabel('Users')

ax.set_title('Top 20 Locations by Users')

plt.show()

Populating the interactive namespace from numpy and matplotlib

WARNING: pylab import has clobbered these variables: ['f']
`%matplotlib` prevents importing * from pylab and numpy

fig = plt.figure(figsize=(8, 6), dpi=150)

ax = fig.add_subplot(111)

venues.sort('checkins', inplace=True)

venues.set_index('name')[-20:].checkins.plot(kind='barh')

ax.set_ylabel('Location')

ax.set_xlabel('Checkins')

ax.set_title('Top 20 Locations by Checkins')

plt.show()

fig = plt.figure(figsize=(8, 6), dpi=150)

ax = fig.add_subplot(111)

venues.sort('pagerank', inplace=True)

venues.set_index('name')[-20:].pagerank.plot(kind='barh')

ax.set_ylabel('Location')

ax.set_xlabel('Pagerank')

ax.set_title('Top 20 Locations by Pagerank')

plt.show()

fig = plt.figure(figsize=(8, 6), dpi=150)

ax = fig.add_subplot(111)

venues.sort('betweenness', inplace=True)

venues.set_index('name')[-20:].betweenness.plot(kind='barh')

ax.set_ylabel('Location')

ax.set_xlabel('Betweenness Centrality')

ax.set_title('Top 20 Locations by Betweenness Centrality')

plt.show()

Visualize the network

fig = plt.figure(figsize=(16, 9), dpi=150)

graph_pos=nx.spring_layout(G)

nodesize = [10000*n for n in pagerank.values()]

nx.draw_networkx_nodes(G,graph_pos,node_size=nodesize, alpha=0.5, node_color='blue')

nx.draw_networkx_edges(G,graph_pos,width=0.8, alpha=0.4,edge_color='blue')

nx.draw_networkx_labels(G, graph_pos, labels=labels, font_size=10, font_family='Arial')

plt.axis('off')

plt.show()

Finally, save the network for further analysis e.g. in Gephi

nx.write_graphml(G, "./fs_loc_muc_l_jul14.graphml")

Trend Research with location data

Loading historical location data gathered with the same method in May 2014

F = nx.read_graphml("fs_loc_muc_l_may14.graphml")

pagerank_old = nx.pagerank(F,alpha=0.9,max_iter=200)

betweenness_old = nx.betweenness_centrality(F)

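# Note: venues_old is only created in the "Recreating the data set" cell further down; run that cell first.
venues_old['pagerank'] = [pagerank_old[n] for n in venues_old.index]

venues_old['betweenness'] = [betweenness_old[n] for n in venues_old.index]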

fig = plt.figure(figsize=(16, 9), dpi=150)

graph_pos=nx.spring_layout(F)

nodesize = [10000*n for n in pagerank_old.values()]

nx.draw_networkx_nodes(F,graph_pos,node_size=nodesize, alpha=0.5, node_color='red')

nx.draw_networkx_edges(F,graph_pos,width=0.8, alpha=0.4,edge_color='red')

nx.draw_networkx_labels(F, graph_pos, labels=nx.get_node_attributes(F,'name'), font_size=10, font_family='Arial') # labels come straight from the graph, so venues_old is not needed here

plt.axis('off')

plt.show()

Recreating the data set and populating the columns from the network data

venues_old = pd.DataFrame()

venues_old['name'] = nx.get_node_attributes(F,'name').values()

venues_old['checkins_may'] = nx.get_node_attributes(F,'visits').values()

venues_old['users_may'] = nx.get_node_attributes(F,'users').values()

venues_old['betweenness_may'] = nx.betweenness_centrality(F).values()

venues_old['pagerank_may'] = nx.pagerank(F,alpha=0.9,max_iter=200).values()

venues_old.index = list(nx.get_node_attributes(F,'name').keys()) # index by venue id (the node ids are the keys of the attribute dict)

venues_old = venues_old.reset_index().drop_duplicates(cols='index',take_last=True).set_index('index')
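The cell above relies on every `.values()` call returning the nodes in the same order. A slightly more defensive sketch, assuming plain dictionaries keyed by venue id (the ids and numbers below are invented), lets pandas align the columns by key instead:

```python
import pandas as pd

# Hypothetical per-node attribute dictionaries, deliberately in different key orders.
names = {"v1": "Cafe", "v2": "Bar"}
checkins = {"v2": 20, "v1": 10}
pagerank = {"v1": 0.7, "v2": 0.3}

# A dict of dicts becomes a DataFrame whose rows are aligned on the inner keys.
venues_sketch = pd.DataFrame({
    "name": names,
    "checkins_may": checkins,
    "pagerank_may": pagerank,
})
```

With this construction the venue id becomes the index directly, so no separate index assignment is needed.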

Merging both datasets on the index, i.e. the venue id (not on the name, because names are not unique: there are multiple Starbucks, for example)

venues_both = venues.merge(venues_old, left_index=True, right_index=True)
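As a small illustration of why the merge uses the index, the sketch below joins two made-up tables in which two venues share a name but not an id. The ids and values are invented; `name_x` and `name_y` are the default suffixes pandas adds to overlapping column names.

```python
import pandas as pd

july = pd.DataFrame(
    {"name": ["Starbucks", "Starbucks", "Eisbach"], "checkins": [120, 80, 1628]},
    index=["id1", "id2", "id3"],
)
may = pd.DataFrame(
    {"name": ["Starbucks", "Eisbach", "Closed Bar"], "checkins_may": [100, 1350, 5]},
    index=["id1", "id3", "id4"],
)

# Inner join on the index: only venues present in both snapshots survive.
both = july.merge(may, left_index=True, right_index=True)
```

Venues that appear in only one snapshot ("id2" and "id4" here) drop out, so the later deltas are computed only for venues observed at both points in time.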

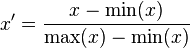

Calculating the relative differences of our variables between May and July 2014

venues_both['checkins_delta'] = (venues_both['checkins']-venues_both['checkins_may'])/venues_both['checkins_may']

venues_both['betweenness_delta'] = (venues_both['betweenness']-venues_both['betweenness_may'])/venues_both['betweenness_may']

venues_both['pagerank_delta'] = (venues_both['pagerank']-venues_both['pagerank_may'])/venues_both['pagerank_may']
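The relative change is (new - old) / old, so a venue whose May value is zero yields `inf`, or `NaN` when both values are zero. That is why such entries show up in the betweenness tables. A minimal sketch with illustrative numbers (the row labels are hypothetical):

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({
    "old": [40.0, 0.0, 0.0],
    "new": [64.0, 0.5, 0.0],
}, index=["grew", "from_zero", "always_zero"])

# Relative change; pandas follows floating-point rules for division by zero.
df["delta"] = (df["new"] - df["old"]) / df["old"]

# Optionally treat undefined changes as missing before ranking.
ranked = df.replace([np.inf, -np.inf], np.nan).dropna(subset=["delta"])
```

Whether to drop such rows or to rank them first is a judgment call; a venue that moves from zero to a positive centrality may well be a genuine newcomer to the network.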

Top 10 Locations with the largest increase in footfall (# checkins)

venues_both.sort('checkins_delta', ascending=False)[['name_x', 'checkins_may', 'checkins', 'checkins_delta']][:10]

| index | name_x | checkins_may | checkins | checkins_delta |

|---|---|---|---|---|

| 507d6e96498ec32f6e7bfa06 | Spielplatz Nähe Eisbachwelle | 40 | 64 | 0.600000 |

| 4bd44bfa046076b0fa967771 | Hirschau | 693 | 850 | 0.226551 |

| 5246e0cc11d24223cc125f78 | Hans im Glück | 732 | 889 | 0.214481 |

| 4c0b8af36071a5936b61e132 | Eisbach | 1350 | 1628 | 0.205926 |

| 51b37d63498eb9785e670720 | Bazi's Schlemmerkucherl | 163 | 193 | 0.184049 |

| 4bd1e0e1462cb7134215db07 | Muffathalle Biergarten | 1266 | 1484 | 0.172196 |

| 4c6ec458e6b7b1f7c78fad8e | Olympia Alm | 488 | 571 | 0.170082 |

| 504260c7e4b04fbe5321813b | Korkenzieher | 32 | 37 | 0.156250 |

| 4d9865c361a3a1cd246ccc42 | Amorino Gelato Italiano | 166 | 191 | 0.150602 |

| 4ddc0e2d52b177ff2e6480b0 | Sausalitos Sommerbar | 102 | 117 | 0.147059 |

Top 10 Locations with the largest increase in betweenness centrality

venues_both.sort('betweenness_delta', ascending=False)[['name_x', 'betweenness_may', 'betweenness', 'betweenness_delta']][:10]

| index | name_x | betweenness_may | betweenness | betweenness_delta |

|---|---|---|---|---|

| 5246e0cc11d24223cc125f78 | Hans im Glück | 0.000000 | 0.000735 | inf |

| 507ea5998acaf6098efe5fb5 | BMW i | 0.000000 | 0.002818 | inf |

| 4f68ba09e4b0551e6ca95cd0 | Milka Welt | 0.000000 | 0.017679 | inf |

| 4ade0d08f964a520636a21e3 | Filmcasino | 0.000000 | 0.000469 | inf |

| 4dcc250bd4c0dfa030e1120d | Königin 43 | 0.000003 | 0.002818 | 1028.997386 |

| 4bf9444f8d30d13a47e50118 | Jessas Eisdiele | 0.000196 | 0.008775 | 43.817695 |

| 4ade0cc8f964a520066921e3 | Dom zu Unserer Lieben Frau \| Frauenkirche | 0.002508 | 0.034780 | 12.865210 |

| 4c23c5c6f1272d7ffaf681c5 | Filmwirtschaft | 0.000007 | 0.000095 | 11.972876 |

| 4dce54fcd164679b8cfc787c | i love leo | 0.000141 | 0.001427 | 9.113625 |

| 4bcfee37462cb71376a1d707 | Coffee Fellows | 0.003693 | 0.036468 | 8.874570 |

Top 10 Locations with the largest decrease in betweenness centrality

venues_both[venues_both.betweenness > 0].sort('betweenness_delta', ascending=True)[['name_x', 'betweenness_may', 'betweenness', 'betweenness_delta']][:10]

| index | name_x | betweenness_may | betweenness | betweenness_delta |

|---|---|---|---|---|

| 4b8153bdf964a5209c9f30e3 | Kiosk an der Reichenbachbrücke | 0.243131 | 0.007391 | -0.969599 |

| 4f3594dae4b08533d05103c8 | Rubybar | 0.230612 | 0.009512 | -0.958754 |

| 4b052a4df964a5204b5722e3 | Starbucks | 0.000553 | 0.000025 | -0.954765 |

| 4ade0cd2f964a520396921e3 | Alter Peter (Katholische Stadtpfarrei St. Peter) | 0.000678 | 0.000038 | -0.943406 |

| 4dbc66aa1e72b351cab229fd | Café Kong | 0.013326 | 0.001420 | -0.893461 |

| 4b2b8d2bf964a5207ab724e3 | Netzer & Overath | 0.244587 | 0.030052 | -0.877132 |

| 4ade0cb0f964a520896821e3 | Ampere | 0.012437 | 0.001738 | -0.860283 |

| 4ade0c9bf964a5200e6821e3 | Wirtshaus in der Au | 0.019885 | 0.005790 | -0.708823 |

| 4b113a3ff964a520127923e3 | Kaimug | 0.000984 | 0.000315 | -0.679800 |

| 4ade0d28f964a520196b21e3 | Olympia-Schwimmhalle | 0.002836 | 0.000926 | -0.673452 |

Top 10 Locations with the largest increase in PageRank

venues_both.sort('pagerank_delta', ascending=False)[['name_x', 'pagerank_may', 'pagerank', 'pagerank_delta']][:10]

| index | name_x | pagerank_may | pagerank | pagerank_delta |

|---|---|---|---|---|

| 4ade0ccef964a520246921e3 | Marienplatz | 0.003094 | 0.085931 | 26.770680 |

| 4b2135f8f964a520503824e3 | Hard Rock Cafe Munich | 0.000591 | 0.014885 | 24.174650 |

| 4ade0d29f964a520216b21e3 | Allianz Arena | 0.000982 | 0.023729 | 23.162940 |

| 4ade0cecf964a520cf6921e3 | Olympiaturm | 0.000536 | 0.007821 | 13.594360 |

| 4b9536bff964a520bc9534e3 | Rotkreuzplatz | 0.000412 | 0.005999 | 13.556653 |

| 4b09134ef964a520281423e3 | McDonald’s | 0.000591 | 0.007429 | 11.564500 |

| 4ade0cacf964a520736821e3 | Sarcletti | 0.000406 | 0.004885 | 11.036822 |

| 4ae74e23f964a52038aa21e3 | Niederlassung | 0.000379 | 0.004547 | 10.992942 |

| 4fc5fc17e4b0c6d2d64b5357 | FC Bayern München Megastore | 0.000660 | 0.007901 | 10.969469 |

| 4ade0cdaf964a520666921e3 | BMW Museum | 0.000642 | 0.007027 | 9.950578 |

Top 10 Locations with the largest decrease in PageRank

venues_both.sort('pagerank_delta', ascending=True)[['name_x', 'pagerank_may', 'pagerank', 'pagerank_delta']][:10]

| index | name_x | pagerank_may | pagerank | pagerank_delta |

|---|---|---|---|---|

| 4bc08b8d461576b0c4417a32 | LeBuffet | 0.015450 | 0.000804 | -0.947980 |

| 4b519094f964a520aa4f27e3 | Wimmer | 0.015555 | 0.000916 | -0.941118 |

| 4adf7208f964a520a07a21e3 | Burg Pappenheim | 0.004978 | 0.000890 | -0.821275 |

| 4bfa555f508c0f47c9f33f31 | Zum Augustiner | 0.025902 | 0.005384 | -0.792146 |

| 4ade0d08f964a520636a21e3 | Filmcasino | 0.002434 | 0.000536 | -0.779625 |

| 4baf75a8f964a52082013ce3 | Riva Schwabing | 0.005536 | 0.001249 | -0.774352 |

| 4b7aa67cf964a52061352fe3 | Deutsche Eiche | 0.005747 | 0.001318 | -0.770728 |

| 4b067d01f964a52043ec22e3 | Cafe Munich | 0.002158 | 0.000506 | -0.765360 |

| 4bd25fc7b221c9b63dacd7d0 | Silans Kebap | 0.003947 | 0.000971 | -0.754060 |

| 4dbc66aa1e72b351cab229fd | Café Kong | 0.002485 | 0.000660 | -0.734585 |

Hotspots

venues_both.head()

| index | checkins | lat | lng | name_x | users | pagerank | betweenness | name_y | checkins_may | users_may | betweenness_may | pagerank_may | checkins_delta | betweenness_delta | pagerank_delta |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 4db9efd55da389d2c2303339 | 743 | 48.128940 | 11.576543 | Zephyr Bar | 368 | 0.000564 | 0.079590 | Zephyr Bar | 683 | 342 | 0.190142 | 0.000431 | 0.087848 | -0.581415 | 0.309915 |

| 4e49454d1f6e29f10dca054a | 2937 | 48.134527 | 11.574656 | Schrannenhalle | 1576 | 0.015118 | 0.020432 | Schrannenhalle | 2813 | 1508 | 0.002219 | 0.013223 | 0.044081 | 8.207843 | 0.143315 |

| 4dac08d60cb6a89c628e690a | 235 | 48.130329 | 11.583377 | Deutsches Museum Shop | 178 | 0.000853 | 0.000000 | Deutsches Museum Shop | 228 | 171 | 0.000000 | 0.000624 | 0.030702 | NaN | 0.366716 |

| 4b5b9d19f964a520040b29e3 | 868 | 48.131803 | 11.576211 | del fiore officine alimentari | 559 | 0.001755 | 0.000000 | del fiore officine alimentari | 807 | 522 | 0.000000 | 0.001337 | 0.075589 | NaN | 0.312735 |

| 4b0ad1b6f964a520662823e3 | 18938 | 48.136874 | 11.574547 | Apple Store | 10227 | 0.052095 | 0.046068 | Apple Store | 18621 | 10058 | 0.052891 | 0.048365 | 0.017024 | -0.128992 | 0.077120 |

figure(figsize=(12,8))

plt.hexbin(venues.lng, venues.lat, gridsize=50, C=venues.checkins, reduce_C_function = np.sum)

cb = plt.colorbar()

cb.set_label('# Checkins')

plt.show()

venues[venues.lng > 11.7]

| index | checkins | lat | lng | name | users | pagerank | betweenness |

|---|---|---|---|---|---|---|---|

| 4cbd1bfaf50e224b160503fc | 244992 | 48.352599 | 11.780992 | München Flughafen "Franz Josef Strauß" (MUC) | 91098 | 0.000404 | 0.000000 |

| 4b90b43ff964a520df9433e3 | 746 | 48.354053 | 11.788584 | Erdinger Weißbier Sportsbar | 624 | 0.002281 | 0.000000 |

| 4e0569e06284d9ee92c8b000 | 513 | 48.353983 | 11.788284 | FC Bayern Fanshop | 355 | 0.001052 | 0.000000 |

| 4f1ba985e4b035290e8d2ad5 | 48 | 48.353982 | 11.788082 | Backstube Wünsche | 36 | 0.000974 | 0.000000 |

| 4fabab2de4b034d5346ae815 | 293 | 48.353987 | 11.787693 | Surf and Turf | 185 | 0.000974 | 0.000000 |

| 4c4940ec20ab1b8d1aab8716 | 3832 | 48.354013 | 11.788097 | München Airport Center | 2630 | 0.002917 | 0.000012 |

| 4b7912c0f964a520c8ea2ee3 | 3338 | 48.353854 | 11.788523 | Starbucks | 2061 | 0.003600 | 0.000029 |

| 4bacf0b6f964a5200c1c3be3 | 2344 | 48.353931 | 11.788532 | McDonald's | 1375 | 0.003600 | 0.000079 |

| 4b588c99f964a520675d28e3 | 6336 | 48.353982 | 11.788330 | Airbräu Brauhaus | 4150 | 0.003909 | 0.000115 |

| 4b696e03f964a520fca12be3 | 1153 | 48.353340 | 11.787751 | EDEKA | 636 | 0.003166 | 0.000150 |

figure(figsize=(12,8))

plt.hexbin(venues_both.lng, venues_both.lat, gridsize=50, C=venues_both.checkins_delta, reduce_C_function = np.sum)

cb = plt.colorbar()

cb.set_label('Checkins Change')

plt.show()

figure(figsize=(12,8))

plt.hexbin(venues_both.lng, venues_both.lat, gridsize=50, C=venues_both.pagerank_delta, reduce_C_function = np.mean)

cb = plt.colorbar()

cb.set_label('Pagerank Change')

plt.show()

Rescaling the geodata to the pixel coordinates of our 1477 x 1040 basemap image (the y axis points downwards):

venues_both['x'] = 1477*(venues_both.lng-11.455135345458984) / (11.626796722412108-11.455135345458984)

venues_both['y'] = 1040 - 1040*(venues_both.lat-48.113391315998626) / (48.194052718454039-48.113391315998626)

venues_both.sort('pagerank_delta', ascending=False)[['name_x', 'x', 'y', 'pagerank_delta', 'lat', 'lng']][:10]

venues_both = venues_both[(venues_both.x >= 0) & (venues_both.y >= 0)] # parentheses are required: & binds tighter than >=

venues_both.head()[['name_x', 'x', 'y']]

| index | name_x | x | y |

|---|---|---|---|

| 4db9efd55da389d2c2303339 | Zephyr Bar | 1044.608250 | 839.521081 |

| 4e49454d1f6e29f10dca054a | Schrannenhalle | 1028.370113 | 767.484438 |

| 4dac08d60cb6a89c628e690a | Deutsches Museum Shop | 1103.411313 | 821.609290 |

| 4b5b9d19f964a520040b29e3 | del fiore officine alimentari | 1041.750467 | 802.616195 |

| 4b0ad1b6f964a520662823e3 | Apple Store | 1027.438125 | 737.227268 |
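The rescaling above is a linear interpolation between the corners of the basemap export. It can be wrapped in a small helper, sketched here under the assumption that the image measures 1477 x 1040 pixels; the name `to_pixels` is hypothetical. The sketch ignores the map projection, which is presumably acceptable for an area this small.

```python
# Corner coordinates of the basemap export and its size in pixels.
WEST, EAST = 11.455135345458984, 11.626796722412108
SOUTH, NORTH = 48.113391315998626, 48.194052718454039
WIDTH, HEIGHT = 1477, 1040


def to_pixels(lat, lng):
    """Map latitude/longitude linearly onto image pixels (origin at the top left)."""
    x = WIDTH * (lng - WEST) / (EAST - WEST)
    y = HEIGHT - HEIGHT * (lat - SOUTH) / (NORTH - SOUTH)
    return x, y


# Apple Store coordinates as printed in the table (rounded to six decimals).
x, y = to_pixels(48.136874, 11.574547)
```

The result agrees with the Apple Store row of the table up to the rounding of the printed coordinates.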

Plotting the data on an OpenStreetMap basemap, which can be retrieved from:

render.openstreetmap.org/cgi-bin/export?bbox=11.455135345458984,48.113391315998626,11.626796722412108,48.194052718454039&scale=46176&format=png

figure(figsize=(29.54,20.8))

img = imread("munich_osm.png")

plt.imshow(img, zorder=0, alpha=0.8)

plt.hexbin(venues_both.x, venues_both.y, gridsize=25, C=venues_both.pagerank_delta, reduce_C_function = np.mean, zorder=1, alpha=0.6)

plt.axis([0,1477,1040,0])

cb = plt.colorbar()

cb.set_label('Pagerank Delta')

plt.show()