Artificial Intelligence Engineer Nanodegree - Probabilistic Models¶

Project: Sign Language Recognition System¶

Introduction¶

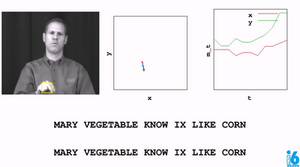

The overall goal of this project is to build a word recognizer for American Sign Language video sequences, demonstrating the power of probabalistic models. In particular, this project employs hidden Markov models (HMM's) to analyze a series of measurements taken from videos of American Sign Language (ASL) collected for research (see the RWTH-BOSTON-104 Database). In this video, the right-hand x and y locations are plotted as the speaker signs the sentence.

The raw data, train, and test sets are pre-defined. You will derive a variety of feature sets (explored in Part 1), as well as implement three different model selection criterion to determine the optimal number of hidden states for each word model (explored in Part 2). Finally, in Part 3 you will implement the recognizer and compare the effects the different combinations of feature sets and model selection criteria.

At the end of each Part, complete the submission cells with implementations, answer all questions, and pass the unit tests. Then submit the completed notebook for review!

PART 1: Data¶

Features Tutorial¶

Load the initial database¶

A data handler designed for this database is provided in the student codebase as the AslDb class in the asl_data module. This handler creates the initial pandas dataframe from the corpus of data included in the data directory as well as dictionaries suitable for extracting data in a format friendly to the hmmlearn library. We'll use those to create models in Part 2.

To start, let's set up the initial database and select an example set of features for the training set. At the end of Part 1, you will create additional feature sets for experimentation.

%load_ext autoreload

%autoreload 2

The autoreload extension is already loaded. To reload it, use: %reload_ext autoreload

import numpy as np

import pandas as pd

from asl_data import AslDb

asl = AslDb() # initializes the database

asl.df.head() # displays the first five rows of the asl database, indexed by video and frame

| left-x | left-y | right-x | right-y | nose-x | nose-y | speaker | ||

|---|---|---|---|---|---|---|---|---|

| video | frame | |||||||

| 98 | 0 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 |

| 1 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | |

| 2 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | |

| 3 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | |

| 4 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 |

asl.df.ix[98, 1] # look at the data available for an individual frame

left-x 149 left-y 181 right-x 170 right-y 175 nose-x 161 nose-y 62 speaker woman-1 Name: (98, 1), dtype: object

The frame represented by video 98, frame 1 is shown here:

Feature selection for training the model¶

The objective of feature selection when training a model is to choose the most relevant variables while keeping the model as simple as possible, thus reducing training time. We can use the raw features already provided or derive our own and add columns to the pandas dataframe asl.df for selection. As an example, in the next cell a feature named 'grnd-ry' is added. This feature is the difference between the right-hand y value and the nose y value, which serves as the "ground" right y value.

asl.df['grnd-ry'] = asl.df['right-y'] - asl.df['nose-y']

asl.df.head() # the new feature 'grnd-ry' is now in the frames dictionary

| left-x | left-y | right-x | right-y | nose-x | nose-y | speaker | grnd-ry | ||

|---|---|---|---|---|---|---|---|---|---|

| video | frame | ||||||||

| 98 | 0 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 |

| 1 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | |

| 2 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | |

| 3 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | |

| 4 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 |

Try it!¶

from asl_utils import test_features_tryit

# TODO add df columns for 'grnd-rx', 'grnd-ly', 'grnd-lx' representing

# differences between hand and nose locations

asl.df['grnd-ly'] = asl.df['left-y'] - asl.df['nose-y']

asl.df['grnd-lx'] = asl.df['left-x'] - asl.df['nose-x']

asl.df['grnd-rx'] = asl.df['right-x'] - asl.df['nose-x']

# test the code

test_features_tryit(asl)

asl.df sample

| left-x | left-y | right-x | right-y | nose-x | nose-y | speaker | grnd-ry | grnd-ly | grnd-lx | grnd-rx | ||

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| video | frame | |||||||||||

| 98 | 0 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | 119 | -12 | 9 |

| 1 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | 119 | -12 | 9 | |

| 2 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | 119 | -12 | 9 | |

| 3 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | 119 | -12 | 9 | |

| 4 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | 119 | -12 | 9 |

# collect the features into a list

features_ground = ['grnd-rx', 'grnd-ry', 'grnd-lx', 'grnd-ly']

# show a single set of features for a given (video, frame) tuple

[asl.df.ix[98, 1][v] for v in features_ground]

[9, 113, -12, 119]

Build the training set¶

Now that we have a feature list defined, we can pass that list to the build_training method to collect the features for all the words in the training set. Each word in the training set has multiple examples from various videos. Below we can see the unique words that have been loaded into the training set:

training = asl.build_training(features_ground)

print("Training words: {}".format(training.words))

Training words: ['JOHN', 'WRITE', 'HOMEWORK', 'IX-1P', 'SEE', 'YESTERDAY', 'IX', 'LOVE', 'MARY', 'CAN', 'GO', 'GO1', 'FUTURE', 'GO2', 'PARTY', 'FUTURE1', 'HIT', 'BLAME', 'FRED', 'FISH', 'WONT', 'EAT', 'BUT', 'CHICKEN', 'VEGETABLE', 'CHINA', 'PEOPLE', 'PREFER', 'BROCCOLI', 'LIKE', 'LEAVE', 'SAY', 'BUY', 'HOUSE', 'KNOW', 'CORN', 'CORN1', 'THINK', 'NOT', 'PAST', 'LIVE', 'CHICAGO', 'CAR', 'SHOULD', 'DECIDE', 'VISIT', 'MOVIE', 'WANT', 'SELL', 'TOMORROW', 'NEXT-WEEK', 'NEW-YORK', 'LAST-WEEK', 'WILL', 'FINISH', 'ANN', 'READ', 'BOOK', 'CHOCOLATE', 'FIND', 'SOMETHING-ONE', 'POSS', 'BROTHER', 'ARRIVE', 'HERE', 'GIVE', 'MAN', 'NEW', 'COAT', 'WOMAN', 'GIVE1', 'HAVE', 'FRANK', 'BREAK-DOWN', 'SEARCH-FOR', 'WHO', 'WHAT', 'LEG', 'FRIEND', 'CANDY', 'BLUE', 'SUE', 'BUY1', 'STOLEN', 'OLD', 'STUDENT', 'VIDEOTAPE', 'BORROW', 'MOTHER', 'POTATO', 'TELL', 'BILL', 'THROW', 'APPLE', 'NAME', 'SHOOT', 'SAY-1P', 'SELF', 'GROUP', 'JANA', 'TOY1', 'MANY', 'TOY', 'ALL', 'BOY', 'TEACHER', 'GIRL', 'BOX', 'GIVE2', 'GIVE3', 'GET', 'PUTASIDE']

The training data in training is an object of class WordsData defined in the asl_data module. in addition to the words list, data can be accessed with the get_all_sequences, get_all_Xlengths, get_word_sequences, and get_word_Xlengths methods. We need the get_word_Xlengths method to train multiple sequences with the hmmlearn library. In the following example, notice that there are two lists; the first is a concatenation of all the sequences(the X portion) and the second is a list of the sequence lengths(the Lengths portion).

training.get_word_Xlengths('CHOCOLATE')

(array([[-11, 48, 7, 120],

[-11, 48, 8, 109],

[ -8, 49, 11, 98],

[ -7, 50, 7, 87],

[ -4, 54, 7, 77],

[ -4, 54, 6, 69],

[ -4, 54, 6, 69],

[-13, 52, 6, 69],

[-13, 52, 6, 69],

[ -8, 51, 6, 69],

[ -8, 51, 6, 69],

[ -8, 51, 6, 69],

[ -8, 51, 6, 69],

[ -8, 51, 6, 69],

[-10, 59, 7, 71],

[-15, 64, 9, 77],

[-17, 75, 13, 81],

[ -4, 48, -4, 113],

[ -2, 53, -4, 113],

[ -4, 55, 2, 98],

[ -4, 58, 2, 98],

[ -1, 59, 2, 89],

[ -1, 59, -1, 84],

[ -1, 59, -1, 84],

[ -7, 63, -1, 84],

[ -7, 63, -1, 84],

[ -7, 63, 3, 83],

[ -7, 63, 3, 83],

[ -7, 63, 3, 83],

[ -7, 63, 3, 83],

[ -7, 63, 3, 83],

[ -7, 63, 3, 83],

[ -7, 63, 3, 83],

[ -4, 70, 3, 83],

[ -4, 70, 3, 83],

[ -2, 73, 5, 90],

[ -3, 79, -4, 96],

[-15, 98, 13, 135],

[ -6, 93, 12, 128],

[ -2, 89, 14, 118],

[ 5, 90, 10, 108],

[ 4, 86, 7, 105],

[ 4, 86, 7, 105],

[ 4, 86, 13, 100],

[ -3, 82, 14, 96],

[ -3, 82, 14, 96],

[ 6, 89, 16, 100],

[ 6, 89, 16, 100],

[ 7, 85, 17, 111]], dtype=int64), [17, 20, 12])

More feature sets¶

So far we have a simple feature set that is enough to get started modeling. However, we might get better results if we manipulate the raw values a bit more, so we will go ahead and set up some other options now for experimentation later. For example, we could normalize each speaker's range of motion with grouped statistics using Pandas stats functions and pandas groupby. Below is an example for finding the means of all speaker subgroups.

df_means = asl.df.groupby('speaker').mean()

df_means

| left-x | left-y | right-x | right-y | nose-x | nose-y | grnd-ry | grnd-ly | grnd-lx | grnd-rx | |

|---|---|---|---|---|---|---|---|---|---|---|

| speaker | ||||||||||

| man-1 | 206.248203 | 218.679449 | 155.464350 | 150.371031 | 175.031756 | 61.642600 | 88.728430 | 157.036848 | 31.216447 | -19.567406 |

| woman-1 | 164.661438 | 161.271242 | 151.017865 | 117.332462 | 162.655120 | 57.245098 | 60.087364 | 104.026144 | 2.006318 | -11.637255 |

| woman-2 | 183.214509 | 176.527232 | 156.866295 | 119.835714 | 170.318973 | 58.022098 | 61.813616 | 118.505134 | 12.895536 | -13.452679 |

To select a mean that matches by speaker, use the pandas map method:

asl.df['left-x-mean'] = asl.df['speaker'].map(df_means['left-x'])

asl.df.head()

| left-x | left-y | right-x | right-y | nose-x | nose-y | speaker | grnd-ry | grnd-ly | grnd-lx | grnd-rx | left-x-mean | ||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| video | frame | ||||||||||||

| 98 | 0 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | 119 | -12 | 9 | 164.661438 |

| 1 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | 119 | -12 | 9 | 164.661438 | |

| 2 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | 119 | -12 | 9 | 164.661438 | |

| 3 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | 119 | -12 | 9 | 164.661438 | |

| 4 | 149 | 181 | 170 | 175 | 161 | 62 | woman-1 | 113 | 119 | -12 | 9 | 164.661438 |

Try it!¶

from asl_utils import test_std_tryit

# TODO Create a dataframe named `df_std` with standard deviations grouped

# by speaker

df_std = asl.df.groupby("speaker").std()

# test the code

test_std_tryit(df_std)

df_std

| left-x | left-y | right-x | right-y | nose-x | nose-y | grnd-ry | grnd-ly | grnd-lx | grnd-rx | left-x-mean | |

|---|---|---|---|---|---|---|---|---|---|---|---|

| speaker | |||||||||||

| man-1 | 15.154425 | 36.328485 | 18.901917 | 54.902340 | 6.654573 | 5.520045 | 53.487999 | 36.572749 | 15.080360 | 20.269032 | 0.0 |

| woman-1 | 17.573442 | 26.594521 | 16.459943 | 34.667787 | 3.549392 | 3.538330 | 33.972660 | 27.117393 | 17.328941 | 16.764706 | 0.0 |

| woman-2 | 15.388711 | 28.825025 | 14.890288 | 39.649111 | 4.099760 | 3.416167 | 39.128572 | 29.320655 | 15.050938 | 16.191324 | 0.0 |

Features Implementation Submission¶

Implement four feature sets and answer the question that follows.

normalized Cartesian coordinates

- use mean and standard deviation statistics and the standard score equation to account for speakers with different heights and arm length

polar coordinates

- calculate polar coordinates with Cartesian to polar equations

- use the np.arctan2 function and swap the x and y axes to move the $0$ to $2\pi$ discontinuity to 12 o'clock instead of 3 o'clock; in other words, the normal break in radians value from $0$ to $2\pi$ occurs directly to the left of the speaker's nose, which may be in the signing area and interfere with results. By swapping the x and y axes, that discontinuity move to directly above the speaker's head, an area not generally used in signing.

delta difference

- as described in Thad's lecture, use the difference in values between one frame and the next frames as features

- pandas diff method and fillna method will be helpful for this one

custom features

- These are your own design; combine techniques used above or come up with something else entirely. We look forward to seeing what you come up with!

Some ideas to get you started: - normalize using a feature scaling equation - normalize the polar coordinates - adding additional deltas

# TODO add features for normalized by speaker values of left, right, x, y

# Name these 'norm-rx', 'norm-ry', 'norm-lx', and 'norm-ly'

# using Z-score scaling (X-Xmean)/Xstd

def normalize(df, label):

numerator = df[label] - df['speaker'].map(df.groupby("speaker").mean()[label])

denominator = df['speaker'].map(df.groupby("speaker").std()[label])

return numerator / denominator

asl.df['norm-rx'] = normalize(asl.df, 'right-x')

asl.df['norm-ry'] = normalize(asl.df, 'right-y')

asl.df['norm-lx'] = normalize(asl.df, 'left-x')

asl.df['norm-ly'] = normalize(asl.df, 'left-y')

features_norm = ['norm-rx', 'norm-ry', 'norm-lx', 'norm-ly']

# TODO add features for polar coordinate values where the nose is the origin

# Name these 'polar-rr', 'polar-rtheta', 'polar-lr', and 'polar-ltheta'

# Note that 'polar-rr' and 'polar-rtheta' refer to the radius and angle

def cart2polar(x_series, y_series):

r = np.sqrt(x_series**2 + y_series**2)

theta = np.arctan2(x_series, y_series)

return r, theta

asl.df['polar-rr'], asl.df['polar-rtheta'] = cart2polar(

asl.df['grnd-rx'], asl.df['grnd-ry'])

asl.df['polar-lr'], asl.df['polar-ltheta'] = cart2polar(

asl.df['grnd-lx'], asl.df['grnd-ly'])

features_polar = ['polar-rr', 'polar-rtheta', 'polar-lr', 'polar-ltheta']

# TODO add features for left, right, x, y differences by one time step, i.e. the "delta" values discussed in the lecture

# Name these 'delta-rx', 'delta-ry', 'delta-lx', and 'delta-ly'

asl.df['delta-rx'] = asl.df['right-x'].diff()

asl.df['delta-lx'] = asl.df['left-x'].diff()

asl.df['delta-ry'] = asl.df['right-y'].diff()

asl.df['delta-ly'] = asl.df['left-y'].diff()

beg_idx = [(vid, frame) for vid, frame in asl.df.index.tolist() if frame == 0]

asl.df.loc[beg_idx, ['delta-rx', 'delta-lx', 'delta-ry', 'delta-ly']] = 0

features_delta = ['delta-rx', 'delta-ry', 'delta-lx', 'delta-ly']

# TODO add features of your own design, which may be a combination of the above or something else

# Name these whatever you would like

asl.df['delta-norm-rx'] = normalize(asl.df, 'delta-rx')

asl.df['delta-norm-ry'] = normalize(asl.df, 'delta-ry')

asl.df['delta-norm-lx'] = normalize(asl.df, 'delta-lx')

asl.df['delta-norm-ly'] = normalize(asl.df, 'delta-ly')

# TODO define a list named 'features_custom' for building the training set

features_custom = ['delta-norm-rx', 'delta-norm-ry', 'delta-norm-lx', 'delta-norm-ly']

features_all = [features_delta, features_ground, features_norm, features_polar, features_custom]

features_all = sum(features_all, [])

features_all

['delta-rx', 'delta-ry', 'delta-lx', 'delta-ly', 'grnd-rx', 'grnd-ry', 'grnd-lx', 'grnd-ly', 'norm-rx', 'norm-ry', 'norm-lx', 'norm-ly', 'polar-rr', 'polar-rtheta', 'polar-lr', 'polar-ltheta', 'delta-norm-rx', 'delta-norm-ry', 'delta-norm-lx', 'delta-norm-ly']

Question 1: What custom features did you choose for the features_custom set and why?

Answer 1:

I chose delta-norm features which is obtained by standardizing delta features.

By standardizing, I mean

Why did I choose these features?

- Each person has a different body shape(arm width, height, etc.) Hence, it can result in significantly different signals

- It also keeps numbers small such that it makes the prediction easier because predicting the range of -1 ~ 1 is easier than predicting -100 ~ 100.

Features Unit Testing¶

Run the following unit tests as a sanity check on the defined "ground", "norm", "polar", and 'delta" feature sets. The test simply looks for some valid values but is not exhaustive. However, the project should not be submitted if these tests don't pass.

import unittest

# import numpy as np

class TestFeatures(unittest.TestCase):

def test_features_ground(self):

sample = (asl.df.ix[98, 1][features_ground]).tolist()

self.assertEqual(sample, [9, 113, -12, 119])

def test_features_norm(self):

sample = (asl.df.ix[98, 1][features_norm]).tolist()

np.testing.assert_almost_equal(sample, [1.153, 1.663, -0.891, 0.742],

3)

def test_features_polar(self):

sample = (asl.df.ix[98, 1][features_polar]).tolist()

np.testing.assert_almost_equal(sample,

[113.3578, 0.0794, 119.603, -0.1005], 3)

def test_features_delta(self):

sample = (asl.df.ix[98, 0][features_delta]).tolist()

self.assertEqual(sample, [0, 0, 0, 0])

sample = (asl.df.ix[98, 18][features_delta]).tolist()

self.assertTrue(sample in [[-16, -5, -2, 4], [-14, -9, 0, 0]],

"Sample value found was {}".format(sample))

suite = unittest.TestLoader().loadTestsFromModule(TestFeatures())

unittest.TextTestRunner().run(suite)

.... ---------------------------------------------------------------------- Ran 4 tests in 0.005s OK

<unittest.runner.TextTestResult run=4 errors=0 failures=0>

PART 2: Model Selection¶

Model Selection Tutorial¶

The objective of Model Selection is to tune the number of states for each word HMM prior to testing on unseen data. In this section you will explore three methods:

- Log likelihood using cross-validation folds (CV)

- Bayesian Information Criterion (BIC)

- Discriminative Information Criterion (DIC)

Train a single word¶

Now that we have built a training set with sequence data, we can "train" models for each word. As a simple starting example, we train a single word using Gaussian hidden Markov models (HMM). By using the fit method during training, the Baum-Welch Expectation-Maximization (EM) algorithm is invoked iteratively to find the best estimate for the model for the number of hidden states specified from a group of sample seequences. For this example, we assume the correct number of hidden states is 3, but that is just a guess. How do we know what the "best" number of states for training is? We will need to find some model selection technique to choose the best parameter.

import warnings

from hmmlearn.hmm import GaussianHMM

def train_a_word(word, num_hidden_states, features):

warnings.filterwarnings("ignore", category=DeprecationWarning)

training = asl.build_training(features)

X, lengths = training.get_word_Xlengths(word)

model = GaussianHMM(n_components=num_hidden_states,

n_iter=1000).fit(X, lengths)

logL = model.score(X, lengths)

return model, logL

demoword = 'BOOK'

model, logL = train_a_word(demoword, 3, features_ground)

print("Number of states trained in model for {} is {}".format(

demoword, model.n_components))

print("logL = {}".format(logL))

Number of states trained in model for BOOK is 3 logL = -2331.1138127433196

The HMM model has been trained and information can be pulled from the model, including means and variances for each feature and hidden state. The log likelihood for any individual sample or group of samples can also be calculated with the score method.

def show_model_stats(word, model):

print("Number of states trained in model for {} is {}".format(

word, model.n_components))

variance = np.array([np.diag(model.covars_[i])

for i in range(model.n_components)])

for i in range(model.n_components): # for each hidden state

print("hidden state #{}".format(i))

print("mean = ", model.means_[i])

print("variance = ", variance[i])

print()

show_model_stats(demoword, model)

Number of states trained in model for BOOK is 3 hidden state #0 mean = [ -3.46504869 50.66686933 14.02391587 52.04731066] variance = [ 49.12346305 43.04799144 39.35109609 47.24195772] hidden state #1 mean = [ -11.45300909 94.109178 19.03512475 102.2030162 ] variance = [ 77.403668 203.35441965 26.68898447 156.12444034] hidden state #2 mean = [ -1.12415027 69.44164191 17.02866283 77.7231196 ] variance = [ 19.70434594 16.83041492 30.51552305 11.03678246]

Try it!¶

Experiment by changing the feature set, word, and/or num_hidden_states values in the next cell to see changes in values.

my_testword = 'CHOCOLATE'

# Experiment here with different parameters

model, logL = train_a_word(my_testword, 3, features_ground)

show_model_stats(my_testword, model)

print("logL = {}".format(logL))

Number of states trained in model for CHOCOLATE is 3 hidden state #0 mean = [ -9.30211403 55.32333876 6.92259936 71.24057775] variance = [ 16.16920957 46.50917372 3.81388185 15.79446427] hidden state #1 mean = [ 0.58333333 87.91666667 12.75 108.5 ] variance = [ 39.41055556 18.74388889 9.855 144.4175 ] hidden state #2 mean = [ -5.40587658 60.1652424 2.32479599 91.3095432 ] variance = [ 7.95073876 64.13103127 13.68077479 129.5912395 ] logL = -601.3291470028638

Visualize the hidden states¶

We can plot the means and variances for each state and feature. Try varying the number of states trained for the HMM model and examine the variances. Are there some models that are "better" than others? How can you tell? We would like to hear what you think in the classroom online.

%matplotlib inline

import math

from matplotlib import (cm, pyplot as plt, mlab)

def visualize(word, model):

""" visualize the input model for a particular word """

variance = np.array([np.diag(model.covars_[i])

for i in range(model.n_components)])

figures = []

for parm_idx in range(len(model.means_[0])):

xmin = int(min(model.means_[:, parm_idx]) - max(variance[:, parm_idx]))

xmax = int(max(model.means_[:, parm_idx]) + max(variance[:, parm_idx]))

fig, axs = plt.subplots(model.n_components, sharex=True, sharey=False)

colours = cm.rainbow(np.linspace(0, 1, model.n_components))

for i, (ax, colour) in enumerate(zip(axs, colours)):

x = np.linspace(xmin, xmax, 100)

mu = model.means_[i, parm_idx]

sigma = math.sqrt(np.diag(model.covars_[i])[parm_idx])

ax.plot(x, mlab.normpdf(x, mu, sigma), c=colour)

ax.set_title(

"{} feature {} hidden state #{}".format(word, parm_idx, i))

ax.grid(True)

figures.append(plt)

for p in figures:

p.show()

visualize(my_testword, model)

ModelSelector class¶

Review the SelectorModel class from the codebase found in the my_model_selectors.py module. It is designed to be a strategy pattern for choosing different model selectors. For the project submission in this section, subclass SelectorModel to implement the following model selectors. In other words, you will write your own classes/functions in the my_model_selectors.py module and run them from this notebook:

SelectorCV: Log likelihood with CVSelectorBIC: BICSelectorDIC: DIC

You will train each word in the training set with a range of values for the number of hidden states, and then score these alternatives with the model selector, choosing the "best" according to each strategy. The simple case of training with a constant value for n_components can be called using the provided SelectorConstant subclass as follow:

from my_model_selectors import SelectorConstant

# Experiment here with different feature sets defined in part 1

training = asl.build_training(features_ground)

word = 'VEGETABLE' # Experiment here with different words

model = SelectorConstant(training.get_all_sequences(),

training.get_all_Xlengths(),

word, n_constant=3).select()

print("Number of states trained in model for {} is {}".format(

word, model.n_components))

Number of states trained in model for VEGETABLE is 3

Cross-validation folds¶

If we simply score the model with the Log Likelihood calculated from the feature sequences it has been trained on, we should expect that more complex models will have higher likelihoods. However, that doesn't tell us which would have a better likelihood score on unseen data. The model will likely be overfit as complexity is added. To estimate which topology model is better using only the training data, we can compare scores using cross-validation. One technique for cross-validation is to break the training set into "folds" and rotate which fold is left out of training. The "left out" fold scored. This gives us a proxy method of finding the best model to use on "unseen data". In the following example, a set of word sequences is broken into three folds using the scikit-learn Kfold class object. When you implement SelectorCV, you will use this technique.

from sklearn.model_selection import KFold

from asl_utils import combine_sequences

# Experiment here with different feature sets

training = asl.build_training(features_ground)

word = 'VEGETABLE' # Experiment here with different words

word_sequences = training.get_word_sequences(word)

split_method = KFold()

for cv_train_idx, cv_test_idx in split_method.split(word_sequences):

print("Train fold indices:{} Test fold indices:{}".format(

cv_train_idx, cv_test_idx)) # view indices of the folds

X, y = combine_sequences(cv_train_idx, word_sequences)

Train fold indices:[2 3 4 5] Test fold indices:[0 1] Train fold indices:[0 1 4 5] Test fold indices:[2 3] Train fold indices:[0 1 2 3] Test fold indices:[4 5]

Tip: In order to run hmmlearn training using the X,lengths tuples on the new folds, subsets must be combined based on the indices given for the folds. A helper utility has been provided in the asl_utils module named combine_sequences for this purpose.

Scoring models with other criterion¶

Scoring model topologies with BIC balances fit and complexity within the training set for each word. In the BIC equation, a penalty term penalizes complexity to avoid overfitting, so that it is not necessary to also use cross-validation in the selection process. There are a number of references on the internet for this criterion. These slides include a formula you may find helpful for your implementation.

The advantages of scoring model topologies with DIC over BIC are presented by Alain Biem in this reference (also found here). DIC scores the discriminant ability of a training set for one word against competing words. Instead of a penalty term for complexity, it provides a penalty if model liklihoods for non-matching words are too similar to model likelihoods for the correct word in the word set.

Model Selection Implementation Submission¶

Implement SelectorCV, SelectorBIC, and SelectorDIC classes in the my_model_selectors.py module. Run the selectors on the following five words. Then answer the questions about your results.

Tip: The hmmlearn library may not be able to train or score all models. Implement try/except contructs as necessary to eliminate non-viable models from consideration.

words_to_train = ['FISH', 'BOOK', 'VEGETABLE', 'FUTURE', 'JOHN']

import timeit

# TODO: Implement SelectorCV in my_model_selector.py

from my_model_selectors import SelectorCV

# Experiment here with different feature sets defined in part 1

training = asl.build_training(features_ground)

sequences = training.get_all_sequences()

Xlengths = training.get_all_Xlengths()

cv_model_list = []

for word in words_to_train:

start = timeit.default_timer()

model = SelectorCV(sequences,

Xlengths,

word,

min_n_components=2,

max_n_components=15,

random_state=14).select()

end = timeit.default_timer() - start

if model is not None:

print("Training complete for {} with {} states with time {} seconds".

format(word, model.n_components, end))

cv_model_list.append(model)

else:

print("Training failed for {}".format(word))

Training complete for FISH with 11 states with time 0.23446067998884246 seconds Training complete for BOOK with 6 states with time 2.337669961998472 seconds Training complete for VEGETABLE with 2 states with time 0.9285565570171457 seconds Training complete for FUTURE with 2 states with time 2.1497781430080067 seconds Training complete for JOHN with 12 states with time 24.004929588001687 seconds

# TODO: Implement SelectorBIC in module my_model_selectors.py

from my_model_selectors import SelectorBIC

# Experiment here with different feature sets defined in part 1

training = asl.build_training(features_ground)

sequences = training.get_all_sequences()

Xlengths = training.get_all_Xlengths()

bic_model_list = []

for word in words_to_train:

start = timeit.default_timer()

model = SelectorBIC(sequences,

Xlengths,

word,

min_n_components=2,

max_n_components=15,

random_state=14, verbose=False).select()

end = timeit.default_timer() - start

if model is not None:

print("Training complete for {} with {} states with time {} seconds".format(

word, model.n_components, end))

bic_model_list.append(model)

else:

print("Training failed for {}".format(word))

Training complete for FISH with 5 states with time 0.1925643530266825 seconds Training complete for BOOK with 8 states with time 1.1851199230004568 seconds Training complete for VEGETABLE with 9 states with time 0.39815885000280105 seconds Training complete for FUTURE with 9 states with time 1.2749047129764222 seconds Training complete for JOHN with 13 states with time 12.217969883000478 seconds

# TODO: Implement SelectorDIC in module my_model_selectors.py

from my_model_selectors import SelectorDIC

# Experiment here with different feature sets defined in part 1

training = asl.build_training(features_ground)

sequences = training.get_all_sequences()

Xlengths = training.get_all_Xlengths()

dic_model_list = []

for word in words_to_train:

start = timeit.default_timer()

model = SelectorDIC(sequences,

Xlengths,

word,

min_n_components=2,

max_n_components=15,

random_state=14).select()

end = timeit.default_timer() - start

if model is not None:

print("Training complete for {} with {} states with time {} seconds".format(

word, model.n_components, end))

dic_model_list.append(model)

else:

print("Training failed for {}".format(word))

Training complete for FISH with 3 states with time 0.4397394340194296 seconds Training complete for BOOK with 15 states with time 2.3636560129816644 seconds Training complete for VEGETABLE with 15 states with time 1.5425242250203155 seconds Training complete for FUTURE with 15 states with time 2.397094385989476 seconds Training complete for JOHN with 15 states with time 13.0246704040037 seconds

from io import StringIO

table = StringIO("""Type,Word,States,Seconds

CV,FISH,11,0.23446068

CV,BOOK,6,2.337669962

CV,VEGETABLE,2,0.928556557

CV,FUTURE,2,2.149778143

CV,JOHN,12,24.004929588

BIC,FISH,5,0.192564353

BIC,BOOK,8,1.185119923

BIC,VEGETABLE,9,0.39815885

BIC,FUTURE,9,1.274904713

BIC,JOHN,13,12.217969883

DIC,FISH,3,0.439739434

DIC,BOOK,15,2.363656013

DIC,VEGETABLE,15,1.542524225

DIC,FUTURE,15,2.397094386

DIC,JOHN,15,13.024670404

""")

df = pd.read_csv(table, sep=",")

df = df.groupby("Type").mean()[['Seconds', "States"]]

df.plot(kind='bar', grid=True);

Question 2: Compare and contrast the possible advantages and disadvantages of the various model selectors implemented.

Answer 2:

| Type | Pros | Cons |

|---|---|---|

| BIC | Fast and good perforamce by utilizing a penalty term | As the number of features increases, it's difficult to find a right answer |

| CV | The least number of states implies it's probably generalized well or at least not overfitting | Slow because of cross validations |

| DIC | Its performance is likely to be better than BIC because of high number of parameters | Too many hidden states implies possible overfitting and more complex model |

Model Selector Unit Testing¶

Run the following unit tests as a sanity check on the implemented model selectors. The test simply looks for valid interfaces but is not exhaustive. However, the project should not be submitted if these tests don't pass.

from asl_test_model_selectors import TestSelectors

suite = unittest.TestLoader().loadTestsFromModule(TestSelectors())

unittest.TextTestRunner().run(suite)

.... ---------------------------------------------------------------------- Ran 4 tests in 28.669s OK

<unittest.runner.TextTestResult run=4 errors=0 failures=0>

PART 3: Recognizer¶

The objective of this section is to "put it all together". Using the four feature sets created and the three model selectors, you will experiment with the models and present your results. Instead of training only five specific words as in the previous section, train the entire set with a feature set and model selector strategy.

Recognizer Tutorial¶

Train the full training set¶

The following example trains the entire set with the example features_ground and SelectorConstant features and model selector. Use this pattern for you experimentation and final submission cells.

# autoreload for automatically reloading changes made in my_model_selectors and my_recognizer

%load_ext autoreload

%autoreload 2

from my_model_selectors import SelectorConstant

def train_all_words(features, model_selector):

training = asl.build_training(features) # Experiment here with different feature sets defined in part 1

sequences = training.get_all_sequences()

Xlengths = training.get_all_Xlengths()

model_dict = {}

for word in training.words:

model = model_selector(sequences, Xlengths, word,

n_constant=3).select()

model_dict[word]=model

return model_dict

models = train_all_words(features_ground, SelectorConstant)

print("Number of word models returned = {}".format(len(models)))

The autoreload extension is already loaded. To reload it, use: %reload_ext autoreload Number of word models returned = 112

Load the test set¶

The build_test method in ASLdb is similar to the build_training method already presented, but there are a few differences:

- the object is type

SinglesData - the internal dictionary keys are the index of the test word rather than the word itself

- the getter methods are

get_all_sequences,get_all_Xlengths,get_item_sequencesandget_item_Xlengths

test_set = asl.build_test(features_ground)

print("Number of test set items: {}".format(test_set.num_items))

print("Number of test set sentences: {}".format(len(test_set.sentences_index)))

Number of test set items: 178 Number of test set sentences: 40

Recognizer Implementation Submission¶

For the final project submission, students must implement a recognizer following guidance in the my_recognizer.py module. Experiment with the four feature sets and the three model selection methods (that's 12 possible combinations). You can add and remove cells for experimentation or run the recognizers locally in some other way during your experiments, but retain the results for your discussion. For submission, you will provide code cells of only three interesting combinations for your discussion (see questions below). At least one of these should produce a word error rate of less than 60%, i.e. WER < 0.60 .

Tip: The hmmlearn library may not be able to train or score all models. Implement try/except contructs as necessary to eliminate non-viable models from consideration.

# TODO implement the recognize method in my_recognizer

from my_recognizer import recognize

from asl_utils import show_errors

from itertools import combinations

from tqdm import tqdm

def get_WER(guesses, test_set):

""" Get WER """

S = 0

N = len(test_set.wordlist)

num_test_words = len(test_set.wordlist)

for word_id in range(num_test_words):

if guesses[word_id] != test_set.wordlist[word_id]:

S += 1

return S / N

def run_train(feat, selector):

models = train_all_words(feat, selector)

test_set = asl.build_test(feat)

probabilities, guesses = recognize(models, test_set)

return get_WER(guesses, test_set)

features_list = [

"features_ground",

"features_delta",

"features_all",

"features_custom",

"features_ground",

"features_norm",

"features_polar"

]

model_selector_list = ["SelectorCV", "SelectorDIC", "SelectorBIC"]

result_list = []

for features in tqdm(features_list):

for model_selector in model_selector_list:

score = run_train(eval(features), eval(model_selector))

result = (features, model_selector, score)

result_list.append(result)

0%| | 0/7 [00:00<?, ?it/s] 100%|██████████| 7/7 [25:51<00:00, 217.23s/it]

result_frame = pd.DataFrame(sorted(result_list, key=lambda x: x[-1]), columns=["feature", "selector", "WER"])

result_frame.head()

| feature | selector | WER | |

|---|---|---|---|

| 0 | features_all | SelectorBIC | 0.438202 |

| 1 | features_all | SelectorDIC | 0.494382 |

| 2 | features_all | SelectorCV | 0.516854 |

| 3 | features_ground | SelectorCV | 0.528090 |

| 4 | features_ground | SelectorCV | 0.528090 |

# TODO Choose a feature set and model selector

features = features_all # change as needed

model_selector = SelectorBIC # change as needed

# TODO Recognize the test set and display the result with the show_errors method

models = train_all_words(features, model_selector)

test_set = asl.build_test(features)

probabilities, guesses = recognize(models, test_set)

show_errors(guesses, test_set)

**** WER = 0.43820224719101125

Total correct: 100 out of 178

Video Recognized Correct

=====================================================================================================

2: JOHN WRITE *ARRIVE JOHN WRITE HOMEWORK

7: JOHN *PEOPLE GO CAN JOHN CAN GO CAN

12: JOHN CAN *WHAT CAN JOHN CAN GO CAN

21: JOHN *ARRIVE *JOHN *JOHN *CAR *CAR *FUTURE *MARY JOHN FISH WONT EAT BUT CAN EAT CHICKEN

25: JOHN LIKE *LOVE IX IX JOHN LIKE IX IX IX

28: JOHN LIKE IX *LIKE IX JOHN LIKE IX IX IX

30: JOHN LIKE *MARY IX IX JOHN LIKE IX IX IX

36: MARY *JOHN *IX IX *MARY *MARY MARY VEGETABLE KNOW IX LIKE CORN1

40: JOHN IX *CORN MARY *MARY JOHN IX THINK MARY LOVE

43: JOHN *JOHN BUY HOUSE JOHN MUST BUY HOUSE

50: *SOMETHING-ONE JOHN BUY CAR *JOHN FUTURE JOHN BUY CAR SHOULD

54: JOHN *FUTURE *FUTURE BUY HOUSE JOHN SHOULD NOT BUY HOUSE

57: JOHN *JOHN *IX MARY JOHN DECIDE VISIT MARY

67: JOHN FUTURE *MARY BUY HOUSE JOHN FUTURE NOT BUY HOUSE

71: JOHN *FUTURE VISIT MARY JOHN WILL VISIT MARY

74: *IX *MARY *MARY MARY JOHN NOT VISIT MARY

77: *JOHN BLAME MARY ANN BLAME MARY

84: *JOHN *JOHN *JOHN BOOK IX-1P FIND SOMETHING-ONE BOOK

89: *FUTURE IX *IX *IX IX NEW COAT JOHN IX GIVE MAN IX NEW COAT

90: JOHN *IX IX *IX *MARY BOOK JOHN GIVE IX SOMETHING-ONE WOMAN BOOK

92: JOHN *IX IX *WOMAN WOMAN BOOK JOHN GIVE IX SOMETHING-ONE WOMAN BOOK

100: POSS NEW CAR BREAK-DOWN POSS NEW CAR BREAK-DOWN

105: JOHN *SEE JOHN LEG

107: JOHN *IX *JOHN *MARY *MARY JOHN POSS FRIEND HAVE CANDY

108: *JOHN ARRIVE WOMAN ARRIVE

113: IX CAR *IX *JOHN *BOX IX CAR BLUE SUE BUY

119: *MARY *BUY1 IX CAR *IX SUE BUY IX CAR BLUE

122: JOHN *GIVE1 BOOK JOHN READ BOOK

139: JOHN *BUY1 WHAT *GIVE1 BOOK JOHN BUY WHAT YESTERDAY BOOK

142: JOHN BUY YESTERDAY WHAT BOOK JOHN BUY YESTERDAY WHAT BOOK

158: LOVE JOHN WHO LOVE JOHN WHO

167: JOHN IX *IX LOVE MARY JOHN IX SAY LOVE MARY

171: JOHN *JOHN BLAME JOHN MARY BLAME

174: *JOHN *GIVE1 GIVE1 *IX *BLAME PEOPLE GROUP GIVE1 JANA TOY

181: JOHN *BOX JOHN ARRIVE

184: *IX *IX *GIVE1 TEACHER APPLE ALL BOY GIVE TEACHER APPLE

189: JOHN *IX *JOHN BOX JOHN GIVE GIRL BOX

193: JOHN *IX *IX BOX JOHN GIVE GIRL BOX

199: *JOHN *ARRIVE *JOHN LIKE CHOCOLATE WHO

201: JOHN *MAN *IX *WOMAN BUY HOUSE JOHN TELL MARY IX-1P BUY HOUSE

# TODO Choose a feature set and model selector

features = features_all # change as needed

model_selector = SelectorDIC # change as needed

# TODO Recognize the test set and display the result with the show_errors method

models = train_all_words(features, model_selector)

test_set = asl.build_test(features)

probabilities, guesses = recognize(models, test_set)

show_errors(guesses, test_set)

**** WER = 0.4943820224719101

Total correct: 90 out of 178

Video Recognized Correct

=====================================================================================================

2: JOHN *ARRIVE *ARRIVE JOHN WRITE HOMEWORK

7: JOHN CAN *IX CAN JOHN CAN GO CAN

12: JOHN CAN *WHAT CAN JOHN CAN GO CAN

21: JOHN *JOHN *JOHN *JOHN *CAR *CAR *FUTURE *MARY JOHN FISH WONT EAT BUT CAN EAT CHICKEN

25: JOHN *JOHN *LOVE IX *JOHN JOHN LIKE IX IX IX

28: JOHN *MARY IX *JOHN IX JOHN LIKE IX IX IX

30: JOHN *MARY *MARY IX IX JOHN LIKE IX IX IX

36: MARY *JOHN *IX IX *MARY *MARY MARY VEGETABLE KNOW IX LIKE CORN1

40: JOHN IX *CORN MARY *MARY JOHN IX THINK MARY LOVE

43: JOHN *JOHN BUY HOUSE JOHN MUST BUY HOUSE

50: *JOHN JOHN BUY CAR *JOHN FUTURE JOHN BUY CAR SHOULD

54: JOHN *FUTURE *MARY BUY HOUSE JOHN SHOULD NOT BUY HOUSE

57: JOHN *JOHN *IX MARY JOHN DECIDE VISIT MARY

67: JOHN FUTURE *MARY BUY HOUSE JOHN FUTURE NOT BUY HOUSE

71: JOHN *FUTURE VISIT MARY JOHN WILL VISIT MARY

74: *IX *MARY *MARY MARY JOHN NOT VISIT MARY

77: *JOHN BLAME MARY ANN BLAME MARY

84: *JOHN *JOHN *JOHN BOOK IX-1P FIND SOMETHING-ONE BOOK

89: *MARY IX *IX *IX IX *ARRIVE *ARRIVE JOHN IX GIVE MAN IX NEW COAT

90: JOHN *IX IX *IX *MARY BOOK JOHN GIVE IX SOMETHING-ONE WOMAN BOOK

92: JOHN *IX IX *IX *JOHN BOOK JOHN GIVE IX SOMETHING-ONE WOMAN BOOK

100: *IX NEW CAR BREAK-DOWN POSS NEW CAR BREAK-DOWN

105: JOHN *POSS JOHN LEG

107: JOHN *IX *JOHN *MARY *MARY JOHN POSS FRIEND HAVE CANDY

108: *JOHN ARRIVE WOMAN ARRIVE

113: IX CAR *IX *JOHN *JOHN IX CAR BLUE SUE BUY

119: *MARY *BUY1 IX *JOHN *IX SUE BUY IX CAR BLUE

122: JOHN *GIVE1 BOOK JOHN READ BOOK

139: JOHN *ARRIVE WHAT *JOHN BOOK JOHN BUY WHAT YESTERDAY BOOK

142: JOHN BUY YESTERDAY WHAT BOOK JOHN BUY YESTERDAY WHAT BOOK

158: LOVE JOHN WHO LOVE JOHN WHO

167: JOHN IX *IX LOVE MARY JOHN IX SAY LOVE MARY

171: JOHN *JOHN BLAME JOHN MARY BLAME

174: *JOHN *GIVE1 GIVE1 *IX *JOHN PEOPLE GROUP GIVE1 JANA TOY

181: JOHN *JOHN JOHN ARRIVE

184: *IX BOY *GIVE1 TEACHER *IX ALL BOY GIVE TEACHER APPLE

189: JOHN *MARY *JOHN BOX JOHN GIVE GIRL BOX

193: JOHN *IX *IX BOX JOHN GIVE GIRL BOX

199: *JOHN *ARRIVE *JOHN LIKE CHOCOLATE WHO

201: JOHN *MAN *IX *JOHN BUY HOUSE JOHN TELL MARY IX-1P BUY HOUSE

# TODO Choose a feature set and model selector

features = features_all # change as needed

model_selector = SelectorCV # change as needed

# TODO Recognize the test set and display the result with the show_errors method

models = train_all_words(features, model_selector)

test_set = asl.build_test(features)

probabilities, guesses = recognize(models, test_set)

show_errors(guesses, test_set)

**** WER = 0.5168539325842697

Total correct: 86 out of 178

Video Recognized Correct

=====================================================================================================

2: JOHN *BOOK *ARRIVE JOHN WRITE HOMEWORK

7: JOHN *PEOPLE *HAVE *HAVE JOHN CAN GO CAN

12: JOHN CAN *GO1 CAN JOHN CAN GO CAN

21: JOHN *VIDEOTAPE *VISIT *MARY *CAR *CAR *FUTURE *MARY JOHN FISH WONT EAT BUT CAN EAT CHICKEN

25: JOHN *MARY *MARY *JOHN IX JOHN LIKE IX IX IX

28: JOHN *MARY IX *JOHN IX JOHN LIKE IX IX IX

30: JOHN LIKE *MARY *MARY *MARY JOHN LIKE IX IX IX

36: MARY *JOHN *IX *GIVE *MARY *MARY MARY VEGETABLE KNOW IX LIKE CORN1

40: JOHN *MARY *CORN MARY *MARY JOHN IX THINK MARY LOVE

43: JOHN *FUTURE BUY HOUSE JOHN MUST BUY HOUSE

50: *JOHN *FRANK BUY CAR *JOHN FUTURE JOHN BUY CAR SHOULD

54: JOHN SHOULD *MARY BUY HOUSE JOHN SHOULD NOT BUY HOUSE

57: *MARY *JOHN *IX MARY JOHN DECIDE VISIT MARY

67: JOHN FUTURE *MARY BUY HOUSE JOHN FUTURE NOT BUY HOUSE

71: JOHN *FUTURE *BLAME MARY JOHN WILL VISIT MARY

74: *MARY *MARY *MARY MARY JOHN NOT VISIT MARY

77: *JOHN BLAME MARY ANN BLAME MARY

84: *JOHN *ARRIVE *FUTURE BOOK IX-1P FIND SOMETHING-ONE BOOK

89: *MARY IX *IX *IX IX NEW COAT JOHN IX GIVE MAN IX NEW COAT

90: JOHN *IX IX *ALL *MARY BOOK JOHN GIVE IX SOMETHING-ONE WOMAN BOOK

92: JOHN *IX IX *IX *IX BOOK JOHN GIVE IX SOMETHING-ONE WOMAN BOOK

100: *IX NEW CAR BREAK-DOWN POSS NEW CAR BREAK-DOWN

105: JOHN *WHO JOHN LEG

107: JOHN *JOHN *HAVE *MARY *JOHN JOHN POSS FRIEND HAVE CANDY

108: *MARY ARRIVE WOMAN ARRIVE

113: *JOHN CAR BLUE *JOHN *ARRIVE IX CAR BLUE SUE BUY

119: *PREFER *BUY1 IX CAR *JOHN SUE BUY IX CAR BLUE

122: JOHN *HOUSE BOOK JOHN READ BOOK

139: JOHN *BUY1 WHAT YESTERDAY BOOK JOHN BUY WHAT YESTERDAY BOOK

142: JOHN BUY YESTERDAY WHAT BOOK JOHN BUY YESTERDAY WHAT BOOK

158: LOVE *MARY WHO LOVE JOHN WHO

167: JOHN *SOMETHING-ONE *MARY LOVE MARY JOHN IX SAY LOVE MARY

171: *MARY *JOHN BLAME JOHN MARY BLAME

174: *GIVE1 *GIVE1 GIVE1 *JOHN *CAN PEOPLE GROUP GIVE1 JANA TOY

181: JOHN ARRIVE JOHN ARRIVE

184: *IX BOY *GIVE1 TEACHER APPLE ALL BOY GIVE TEACHER APPLE

189: JOHN *JOHN *JOHN *ARRIVE JOHN GIVE GIRL BOX

193: JOHN *SOMETHING-ONE *IX BOX JOHN GIVE GIRL BOX

199: *JOHN CHOCOLATE *MARY LIKE CHOCOLATE WHO

201: JOHN *SHOULD *IX *IX BUY HOUSE JOHN TELL MARY IX-1P BUY HOUSE

Question 3: Summarize the error results from three combinations of features and model selectors. What was the "best" combination and why? What additional information might we use to improve our WER? For more insight on improving WER, take a look at the introduction to Part 4.

Answer 3:

For the best performance,

I have to use every features including my custom features and SelectorBIC. The reason for such high performance is the standardization of features because each person has different body shapes hence different signals.

However, the best score is still low and I believe it can improve further by standardizing every other features which I didn't because of the time limit.

Recognizer Unit Tests¶

Run the following unit tests as a sanity check on the defined recognizer. The test simply looks for some valid values but is not exhaustive. However, the project should not be submitted if these tests don't pass.

from asl_test_recognizer import TestRecognize

suite = unittest.TestLoader().loadTestsFromModule(TestRecognize())

unittest.TextTestRunner().run(suite)

.. ---------------------------------------------------------------------- Ran 2 tests in 16.490s OK

<unittest.runner.TextTestResult run=2 errors=0 failures=0>

PART 4: (OPTIONAL) Improve the WER with Language Models¶

We've squeezed just about as much as we can out of the model and still only get about 50% of the words right! Surely we can do better than that. Probability to the rescue again in the form of statistical language models (SLM). The basic idea is that each word has some probability of occurrence within the set, and some probability that it is adjacent to specific other words. We can use that additional information to make better choices.

Additional reading and resources¶

- Introduction to N-grams (Stanford Jurafsky slides)

- Speech Recognition Techniques for a Sign Language Recognition System, Philippe Dreuw et al see the improved results of applying LM on this data!

- SLM data for this ASL dataset

Optional challenge¶

The recognizer you implemented in Part 3 is equivalent to a "0-gram" SLM. Improve the WER with the SLM data provided with the data set in the link above using "1-gram", "2-gram", and/or "3-gram" statistics. The probabilities data you've already calculated will be useful and can be turned into a pandas DataFrame if desired (see next cell).

Good luck! Share your results with the class!

# create a DataFrame of log likelihoods for the test word items

df_probs = pd.DataFrame(data=probabilities)

df_probs.head()