Least Squares¶

... or, $A\mathbf{x} = \mathbf{b}$ has no solutions! What do I do?!

# for conversion to PDF use these settings

# %matplotlib inline

# qr_setting = 'url'

# qrviz_setting = 'show'

#

# for lecture use notebook

%matplotlib notebook

qr_setting = 'url'

qrviz_setting = 'save'

#

%config InlineBackend.figure_format='retina'

# import libraries

import numpy as np

import matplotlib as mp

import pandas as pd

import matplotlib.pyplot as plt

import laUtilities as ut

import slideUtilities as sl

import demoUtilities as dm

import pandas as pd

from importlib import reload

from datetime import datetime

from IPython.display import Image

from IPython.display import display_html

from IPython.display import display

from IPython.display import Math

from IPython.display import Latex

from IPython.display import HTML

reload(sl)

reload(ut);

# image credit: http://en.wikipedia.org/wiki/Carl_Friedrich_Gauss#mediaviewer/File:Carl_Friedrich_Gauss.jpg

display(Image("images/Carl_Friedrich_Gauss.jpg", width=450))

Let's go back to week 1. A long time ago!

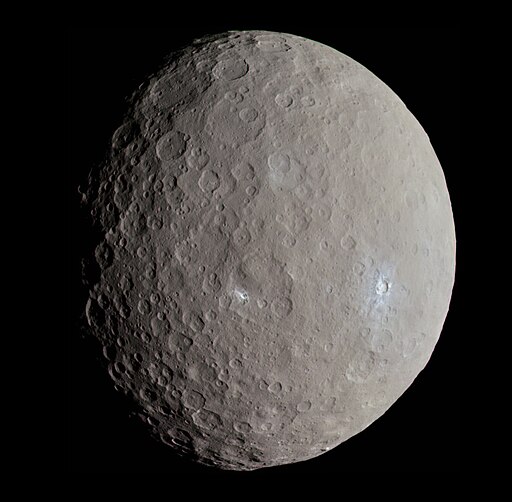

Recall Gauss's remarkable accomplishment in his early 20s. He took the set of measurements made by Piazzi of the dwarf planet Ceres and predicted where Ceres subsequently would appear in the sky (after it was lost behind the sun). This told Olbers exactly where to look, and lo and behold . . .

We can understand now a little better what Gauss had to do.

Kepler had discovered, and Newton had explained, that each planet orbits the sun following the path of an ellipse.

To describe the orbit of Ceres, Gauss had to construct the equation for its ellipse:

$$a_1 x_1^2 + a_2 x_2^2 + a_3 x_1x_2 + a_4 x_1 + a_5 x_2 + a_6 = 0.$$He had many measurements of $(x_1, x_2)$ pairs and had to find the $a_1, \dots, a_6.$

This is actually a linear system:

$$\begin{bmatrix}x_{11}^2 &x_{21}^2&x_{11}x_{21}&x_{11}&x_{21}&1\\x_{12}^2 &x_{22}^2&x_{12}x_{22}&x_{12}&x_{22}&1\\ \vdots&\vdots&\vdots&\vdots&\vdots&\vdots\\x_{1n}^2 &x_{2n}^2&x_{1n}x_{2n}&x_{1n}&x_{2n}&1\end{bmatrix} \begin{bmatrix}a_1\\a_2\\a_3\\a_4\\a_5\\a_6\end{bmatrix} = \mathbf{0}$$Now, according to Newton, this is a consistent linear system. The equation for the ellipse is exactly correct and all we need is six $(x_1, x_2)$ sets of measurements to know the orbit of Ceres exactly.

What could go wrong? :)

Obviously, there are going to be measurement errors in Piazzi's observations. If we just solve the system using six measurements, we will probably get incorrect values for the coefficients $a_1, \dots, a_6.$

A better idea is to use all of the $n$ measurement data available, and try to find a way to cancel out errors.

So, using all the $n$ data measurements available, we construct a linear system:

$$ X\mathbf{a} = \mathbf{b}$$where $X$ is $n\times 6$ and $\mathbf{b} \in \mathbb{R}^n$.

But now, due to measurement errors, we can't expect $\mathbf{b}$ will lie in the column space of $X$. We have an incosistent system.

This system has no solutions!

What can we do if $A\mathbf{x} = \mathbf{b}$ has no solutions?

We now understand if $A$ is $m\times n$ and $A\mathbf{x} = \mathbf{b}$ has no solutions, that is because the columns of $A$ do not span $\mathbb{R}^m$, and $\mathbf{b}$ is not in the column space of $A$.

In many cases we will be quite satisfied to find an $\mathbf{x}$ that makes $A\mathbf{x}$ as close as possible to $\mathbf{b}.$

In other words, we are looking for an $\mathbf{x}$ such that $A\mathbf{x}$ makes a good approximation to $\mathbf{b}.$

We can think of the quality of the approximation of $A\mathbf{x}$ to $\mathbf{b}$ as the distance from $A\mathbf{x}$ to $\mathbf{b},$ which is

$$\Vert A\mathbf{x} - \mathbf{b}\Vert.$$The general least-squares problem is to find an $\mathbf{x}$ that makes $\Vert A\mathbf{x}-\mathbf{b}\Vert$ as small as possible.

This is called "least squares" because it is equivalent to minimizing $\Vert A\mathbf{x}-\mathbf{b}\Vert^2,$ which is the sum of squared differences.

Just to make this explicit: say that we denote $A\mathbf{x}$ by $\mathbf{y}$. Then

$$\Vert A\mathbf{x}-\mathbf{b}\Vert^2 = \sum_i (y_i-b_i)^2$$Where we interpret $y_i$ as the estimated value and $b_i$ as the measured value.

So this expression is the sum of squared error. This is the most common measure of error used in statistics.

This is a key principle!

Minimizing the length of $A\mathbf{x} - \mathbf{b}$ is the same as minimizing the sum of squared error.

Definition. If A is $m\times n$ and $\mathbf{b}$ is in $\mathbb{R}^m,$ a least squares solution of $A\mathbf{x} =\mathbf{b}$ is an $\mathbf{\hat{x}}$ in $\mathbb{R}^n$ such that

$$\Vert A\mathbf{\hat{x}} - \mathbf{b}\Vert \leq \Vert A\mathbf{x} - \mathbf{b}\Vert$$for all $\mathbf{x}$ in $\mathbb{R}^n$.

An equivalent (and more common) way to express this is:

$$\hat{\mathbf{x}} = \arg\min_\mathbf{x} \Vert A\mathbf{x} - \mathbf{b}\Vert.$$which emphasizes that this is a minimization problem, also called an optimization problem.

Interpretation of the Least Squares Problem. The point to remember is that no matter what $\mathbf{x}$ is, $A\mathbf{x}$ will be in the column space of $A$, $\operatorname{Col}\ A$.

So $\mathbf{b}$ is outside $\operatorname{Col}\ A$, and we are looking for $\mathbf{x}$ that specifies the closest point in $\operatorname{Col}\ A$ to $\mathbf{b}$.

fig = ut.three_d_figure('Figure 22.1', 'Least Squares looks for the Closest Point in Col A',

-15, 15, -15, 15, -15, 15, (8, 8), qr = None)

a2 = np.array([5.0,-13.0,-3.0])

a1 = np.array([1,-2.0,3])

v = -3*a1 + a2

y = np.array([12.0, 8.0, -5.0])

A = np.array([a1, a2]).T

#

# plotting the span of v

fig.plotSpan(a1, a2, 'Green')

#

yhat = A @ np.linalg.inv(A.T @ A) @ A.T @ y

fig.plotPoint(yhat[0], yhat[1], yhat[2], 'b')

fig.text(yhat[0]+0.5, yhat[1]+0.5, yhat[2]+0.5, r'$\mathbf{A\hat{x}}$', 'Ax-hat', size=18)

#

fig.plotPoint(y[0], y[1], y[2],'b')

fig.text(y[0]+0.5, y[1]+0.5, y[2]+0.5, r'$\bf b$', 'b', size=18)

#

# origin seems unhelpful

#fig.plotPoint(0, 0, 0, 'b')

#fig.text(0-1.5, 0-1.5, 0-1.5, r'$\bf 0$', '0', size=18)

#

x1 = y + np.array([8, 8, 8])

x1hat = A @ np.linalg.inv(A.T @ A) @ A.T @ x1

fig.plotPoint(x1hat[0], x1hat[1], x1hat[2], 'r')

#

x2 = y + np.array([-4, -6, 6])

x2hat = A @ np.linalg.inv(A.T @ A) @ A.T @ x2

fig.plotPoint(x2hat[0], x2hat[1], x2hat[2], 'r')

#

wpos = -4.5*a1

fig.text(wpos[0], wpos[1], wpos[2], r'Col $A$', 'Col A', size=22)

#

# lines

fig.plotLine([y, yhat], 'b', '-')

fig.plotLine([y, x1hat], 'r', '--')

fig.plotLine([y, x2hat], 'r', '--')

#ut.plotPoint3d(ax,0,0,0,'b')

fig.ax.view_init(azim=100,elev=-20.0)

fig.hideAxes()

qrcode = fig.save('Fig22.1', qrviz = qrviz_setting)

The vector $\mathbf{b}$ is closer to $A\mathbf{\hat{x}}$ than it is to $A\mathbf{x}$ for any other $\mathbf{x}$.

Solving the General Least Squares Problem.

The last lecture developed methods for finding the point in a 1D subspace that is closest to a given point.

We just need to generalize what we learned to the case of an arbitrary subspace. This leads to two theorems: the Orthogonal Decomposition Theorem and the Best Approximation Theorem.

The Orthogonal Decomposition Theorem. Let $W$ be a subspace of $\mathbb{R}^n$. Then each $\mathbf{y}$ in $\mathbb{R}^n$ can be written uniquely in the form

$$ \mathbf{y} = \hat{\mathbf{y}} + \mathbf{z}$$where $\hat{\mathbf{y}}$ is in $W$ and $\mathbf{z}$ is orthogonal to every vector in $W$.

Proof. In the book (straightforward).

Just as in the case of a 1D subspace (in the last lecture), we say that $\hat{\mathbf{y}}$ is the orthogonal projection of $\mathbf{y}$ onto $W$ and write $\hat{\mathbf{y}} = \mbox{proj}_W \mathbf{y}.$

The Best Approximation Theorem. Let $W$ be a subspace of $\mathbb{R}^n$, let $\mathbf{y}$ be any vector in $\mathbb{R}^n$, and let $\hat{\mathbf{y}}$ be the orthogonal projection of $\mathbf{y}$ onto $W$. Then $\hat{\mathbf{y}}$ is the closest point in $W$ to $\mathbf{y}$, in the sense that

$$\Vert \mathbf{y}-\hat{\mathbf{y}} \Vert < \Vert \mathbf{y} - \mathbf{v} \Vert$$for all $\mathbf{v}$ in W distinct from $\hat{\mathbf{y}}$.

Proof.

Take $\mathbf{v}$ in $W$ distinct from $\hat{\mathbf{y}}$. Here is what the setup looks like:

fig = ut.three_d_figure('Figure 22.2', 'Comparison of v to the projection of y',

-15, 15, -15, 15, -15, 15, (9,9), qr = None)

a2 = np.array([5.0,-13.0,-3.0])

a1 = np.array([1,-2.0,3])

v = -3*a1 + a2

y = np.array([12.0, 8.0, -5.0])

A = np.array([a1, a2]).T

#

# plotting the span of v

fig.plotSpan(a1, a2, 'Green')

#

yhat = A.dot(np.linalg.inv(A.T.dot(A))).dot(A.T).dot(y)

fig.plotPoint(yhat[0], yhat[1], yhat[2], 'b')

fig.text(yhat[0]+0.5, yhat[1]+0.5, yhat[2]+0.5, r'$\mathbf{\hat{y}}$', 'y-hat', size=18)

#

fig.plotPoint(y[0], y[1], y[2],'b')

fig.text(y[0]+0.5, y[1]+0.5, y[2]+0.5, r'$\bf y$', 'y', size=18)

#

fig.plotPoint(v[0], v[1], v[2], 'b')

fig.text(v[0]-1.5, v[1]-1.5, v[2]-1.5, r'$\bf v$', 'v', size=18)

#

m1 = (y + v) / 2

m1 = m1 + [2,-6,0]

fig.text(m1[0], m1[1], m1[2], r'$\Vert \bf y - \bf v\Vert$', '||y - v||', size=18)

m2 = (y + yhat) / 2

m2 = m2 + [0,1,0]

fig.text(m2[0], m2[1], m2[2], r'$\Vert \bf y - \hat{\bf y}\Vert$', '||y - y-hat||', size=18)

m3 = (yhat + v) / 2

m3 = m3 + [-1,0,-4]

fig.text(m3[0], m3[1], m3[2], r'$\Vert \hat{\bf y} - \bf v\Vert$', '||y - v||', size=18)

#

wpos = -4.5*a1

fig.text(wpos[0], wpos[1], wpos[2], r'$W$', 'W', size=22)

#

# lines

fig.plotLine([y, yhat], 'b')

fig.plotLine([v, yhat], 'b')

fig.plotLine([y, v], 'b')

#ut.plotPoint3d(ax,0,0,0,'b')

fig.ax.view_init(azim=57.0,elev=-69.0)

fig.hideAxes()

qrcode = fig.save('Fig22.2', qrviz = qrviz_setting)

Both $\hat{\mathbf{y}}$ and $\mathbf{v}$ are in $W$, so $\hat{\mathbf{y}} - \mathbf{v}$ is in $W$.

By the orthogonal decomposition theorem, $\mathbf{y} - \hat{\mathbf{y}}$ is orthogonal to every vector in $W$, so it is orthogonal to $\hat{\mathbf{y}} - \mathbf{v}.$

Now, these three points form a (right) triangle because

$$ \mathbf{y} - \mathbf{v} = (\mathbf{y} - \hat{\mathbf{y}}) + (\hat{\mathbf{y}} - \mathbf{v}). $$So the Pythagorean Theorem tells us that

$$ \Vert\mathbf{y} - \mathbf{v}\Vert^2 = \Vert\mathbf{y} - \hat{\mathbf{y}}\Vert^2 + \Vert\hat{\mathbf{y}} - \mathbf{v}\Vert^2. $$Now $\hat{\mathbf{y}} - \mathbf{v} \neq {\bf 0}$ because $\mathbf{y}$ is distinct from $\mathbf{v}$.

So

$$\Vert \hat{\mathbf{y}} - \mathbf{v} \Vert > 0.$$So

$$ \Vert\mathbf{y} - \mathbf{v}\Vert^2 > \Vert\mathbf{y} - \hat{\mathbf{y}}\Vert^2. $$Let's apply these ideas to solving the least squares problem:

$$\hat{\mathbf{x}} = \arg\min_\mathbf{x} \Vert A\mathbf{x} - \mathbf{b}\Vert.$$We know that the closest point to $\mathbf{b}$ in a subspace $W$ is the projection of $\mathbf{b}$ onto $W.$

So the point we are looking for, which we'll call $\hat{\mathbf{b}},$ is:

$$\hat{\mathbf{b}} = \mbox{proj}_{\operatorname{Col}\ A} \mathbf{b}$$The key is that $\hat{\mathbf{b}}$ is in the column space of $A$. So this equation is consistent, and we can solve it:

$$A\mathbf{\hat{x}} = \hat{\mathbf{b}}.$$Since $\hat{\mathbf{b}}$ is the closest point in $\operatorname{Col}\ A$ to $\mathbf{b},$ a vector $\hat{\mathbf{x}}$ is a least-squares solution of $A\mathbf{x}=\mathbf{b}$ if and only if $\mathbf{\hat{x}}$ satisfies $A\mathbf{\hat{x}} = \hat{\mathbf{b}}.$

(Note: we know that $A\mathbf{\hat{x}} =\hat{\mathbf{b}}$ is consistent (by definition), so there exists at least one solution. However note that if $A$ has free variables -- the columns of $A$ are not independent -- then there would be many solutions of $A\mathbf{\hat{x}} =\hat{\mathbf{b}}$.)

Here is another picture of what we are doing:

sl.hide_code_in_slideshow()

display(Image("images/Lay-fig-6-5-2.png", width=450))

Now let's look at a specific case.

Say that

$$A = \begin{bmatrix}\mathbf{a}_1&\mathbf{a}_2\end{bmatrix} = \begin{bmatrix}1&5\\-2&-13\\3&-3\end{bmatrix}$$and

$$\mathbf{b} = \begin{bmatrix}6\\8\\-5\end{bmatrix}.$$We have only two columns $\mathbf{a}_1$ and $\mathbf{a}_2$ so they can not span $\mathbb{R}^3$.

So $\mathbf{b}$ may not lie in $\operatorname{Col}\ A$, and indeed it does not:

fig = ut.three_d_figure('Figure 22.3', 'b is not in Col A',

-15, 15, -15, 15, -15, 15, (8,8), qr = None)

a2 = np.array([5.0,-13.0,-3.0])

a1 = np.array([1,-2.0,3])

b = np.array([8.0, 8.0, -5.0])

A = np.array([a1, a2]).T

bhat = A @ np.linalg.inv(A.T @ A) @ A.T @ b

fig.text(a1[0], a1[1], a1[2], r'$\bf a_1$', 'a1', size=18)

fig.text(a2[0], a2[1], a2[2], r'$\bf a_2$', 'a2', size=18)

fig.text(b[0], b[1], b[2], r'$\bf b$', 'b', size=18)

#ax.text(1,-4,-10,r'Span{$\bf a,b$}',size=16)

#ax.text(0.2,0.2,-4,r'$\bf 0$',size=20)

# plotting the span of v

fig.plotSpan(a1, a2, 'Green')

fig.plotPoint(a1[0], a1[1], a1[2], 'r')

fig.plotPoint(a2[0], a2[1], a2[2], 'r')

fig.plotPoint(b[0], b[1], b[2], 'b')

#ut.plotPoint3d(ax,0,0,0,'b')

fig.ax.view_init(azim=-10, elev=-70.0)

fig.save('Fig22.3', qrviz = qrviz_setting)

fig = ut.three_d_figure('Figure 22.4', 'b-hat is closest to b',

-15, 15, -15, 15, -15, 15, (7,7), qr = None)

a2 = np.array([5.0,-13.0,-3.0])

a1 = np.array([1,-2.0,3])

b = np.array([8.0, 8.0, -5.0])

A = np.array([a1, a2]).T

bhat = A @ np.linalg.inv(A.T @ A) @ A.T @ b

fig.text(a1[0], a1[1], a1[2], r'$\bf a_1$', 'a1', size=18)

fig.text(a2[0], a2[1], a2[2], r'$\bf a_2$', 'a2', size=18)

fig.text(b[0], b[1], b[2], r'$\bf b$', 'b', size=18)

fig.text(bhat[0], bhat[1], bhat[2], r'$A\mathbf{\hat{x}} = \mathbf{\hat{b}}$', 'Ax-hat = b-hat', size=20)

#ax.text(1,-4,-10,r'Span{$\bf a,b$}',size=16)

#ax.text(0.2,0.2,-4,r'$\bf 0$',size=20)

# plotting the span of v

fig.plotSpan(a1, a2, 'Green')

fig.plotPoint(a1[0], a1[1], a1[2], 'r')

fig.plotPoint(a2[0], a2[1], a2[2], 'r')

fig.plotPoint(b[0], b[1], b[2], 'b')

fig.plotPoint(bhat[0], bhat[1], bhat[2], 'b')

fig.plotLine([b, bhat], 'b', '--')

#ut.plotPoint3d(ax,0,0,0,'b')

fig.ax.view_init(azim=30, elev=-80.0)

fig.save('Fig22.4', qrviz = qrviz_setting)

OK, how are we going to find this projection $\hat{\mathbf{b}}$?

Here is the key idea:

We know that the projection $\hat{\mathbf{b}}$ has the property that $\hat{\mathbf{b}}-\mathbf{b}$ is orthogonal to $\operatorname{Col}\ A.$

Suppose $\hat{\mathbf{b}}$ is $\mbox{proj}_{\operatorname{Col}\ A}\mathbf{b},$ and that $\mathbf{\hat{x}}$ satisfies $A\mathbf{\hat{x}} = \hat{\mathbf{b}}$.

So $A\mathbf{\hat{x}} - \mathbf{b}$ is orthogonal to each column of $A$.

If $\mathbf{a}_j$ is any column of $A$, then

$$\mathbf{a}_j^T(A\mathbf{\hat{x}} - \mathbf{b}) = 0.$$Now, each $\mathbf{a}_j^T$ is a row of $A^T$. We can collect all of the equations for all the $\mathbf{a}_j$ as:

$$A^T(A\mathbf{\hat{x}} - \mathbf{b}) = {\bf 0}.$$So

$$A^TA\mathbf{\hat{x}} - A^T\mathbf{b} = {\bf 0}$$So

$$A^TA\mathbf{\hat{x}} = A^T\mathbf{b}$$Looking at this, we see that $A^T\mathbf{b}$ is a vector, and $A^TA$ is a matrix, so this is a standard linear system.

This linear system is called the normal equations for $A\mathbf{x} = \mathbf{b}.$

It's solution is usually denoted $\mathbf{\hat{x}}$.

Theorem The set of least-squares solutions of $A\mathbf{x} = \mathbf{b}$ is equal to the (nonempty) set of solutions of the normal equations $A^TA\mathbf{x} = A^T\mathbf{b}.$

Proof.

(1) The set of solutions is nonempty. The matrix on the left has the same column space as $A^T$ and the vector on the right is a vector in the column space of $A^T.$

And, by the arguments above, any least-squares solution of $A\mathbf{x} = \mathbf{b}$ must satisfy the normal equations $A^TA\mathbf{x} = A^T\mathbf{b}.$

(2) Now let's show that any solution of $A^TA\mathbf{x} = A^T\mathbf{b}$ is a least squares solution of $A\mathbf{x} = \mathbf{b}$.

If $\mathbf{\hat{x}}$ satisfies $A^TA\mathbf{x} = A^T\mathbf{b},$ then $A^T(A\mathbf{\hat{x}} -\mathbf{b}) = {\bf 0},$

which shows that $A\mathbf{\hat{x}} - \mathbf{b}$ is orthogonal to the rows of $A^T,$ and so is orthogonal to the columns of $A$.

So the vector $A\mathbf{\hat{x}} - \mathbf{b}$ is orthogonal to $\operatorname{Col}\ A$.

So the equation

$$\mathbf{b} = A\mathbf{\hat{x}} - (\mathbf{b} - A\mathbf{\hat{x}})$$is a decomposition of $\mathbf{b}$ into the sum of a vector in $\operatorname{Col}\ A$ and a vector orthogonal to $\operatorname{Col}\ A$.

Since the orthogonal decomposition is unique, $A\mathbf{\hat{x}}$ must tbe the orthogonal projection of $\mathbf{b}$ onto the column space of $A$.

So $A\mathbf{\hat{x}} = \hat{\mathbf{b}}$ and $\mathbf{\hat{x}}$ is a least-squares solution.

Example. Find the least squares solution of the inconsistent system $A\mathbf{x} = \mathbf{b}$ for

$$A = \begin{bmatrix}4&0\\0&2\\1&1\end{bmatrix}, \;\;\; \mathbf{b} = \begin{bmatrix}2\\0\\11\end{bmatrix}.$$Solution.

We will use the normal equations $A^TA\mathbf{x} = A^T\mathbf{b}.$

$$A^TA = \begin{bmatrix}4&0&1\\0&2&1\end{bmatrix} \begin{bmatrix}4&0\\0&2\\1&1\end{bmatrix} = \begin{bmatrix}17&1\\1&5\end{bmatrix}$$$$A^T\mathbf{b} = \begin{bmatrix}4&0&1\\0&2&1\end{bmatrix} \begin{bmatrix}2\\0\\11\end{bmatrix} = \begin{bmatrix}19\\11\end{bmatrix}$$So the normal equations are:

$$ \begin{bmatrix}17&1\\1&5\end{bmatrix}\begin{bmatrix}x_1\\x_2\end{bmatrix} = \begin{bmatrix}19\\11\end{bmatrix}$$We can solve this using row operations, or by inverting $A^TA$.

$$(A^TA)^{-1} = \frac{1}{84}\begin{bmatrix}5&-1\\-1&17\end{bmatrix}$$And we can then solve $A^TA\mathbf{x} = A^T\mathbf{b}$ as

$$\mathbf{\hat{x}} = (A^TA)^{-1}A^T\mathbf{b}$$So we conclude that $\mathbf{\hat{x}} = \begin{bmatrix}1\\2\end{bmatrix}$ is the vector that minimizes $\Vert A\mathbf{x} -\mathbf{b}\Vert.$

When there are multiple solutions.

We have seen that the normal equations always have a solution. Is there always a unique solution?

No, there can be multiple solutions that all minimize $\Vert A\mathbf{x} - \mathbf{b}\Vert.$

Let's remind ourselves of what is going on when a linear system has multiple solutions.

We know that a linear system has multiple solutions when there are columns that are not pivot columns.

Equivalently, whern there are multiple solutions the columns of $A$ are linearly dependent.

Here is a picture of what is going on:

sl.hide_code_in_slideshow()

ax = ut.plotSetup3d(-15,15,-15,15,-15,15,(9,6))

a2 = np.array([5.0,-13.0,-3.0])

a1 = np.array([1,-2.0,3])

a3 = -2*a1 + a2

b = np.array([6.0, 8.0, -5.0])

A = np.array([a1, a2]).T

bhat = A.dot(np.linalg.inv(A.T.dot(A))).dot(A.T).dot(b)

ax.text(a1[0],a1[1],a1[2],r'$\bf a_1$',size=20)

ax.text(a2[0],a2[1],a2[2],r'$\bf a_2$',size=20)

ax.text(a3[0],a3[1],a3[2],r'$\bf a_3$',size=20)

ax.text(b[0],b[1],b[2],r'$\bf b$',size=20)

ax.text(bhat[0],bhat[1],bhat[2],r'$A\mathbf{\hat{x}} = \mathbf{\hat{b}}$',size=20)

#ax.text(1,-4,-10,r'Span{$\bf a,b$}',size=16)

#ax.text(0.2,0.2,-4,r'$\bf 0$',size=20)

# plotting the span of v

ut.plotSpan3d(ax,a1,a2,'Green')

ut.plotPoint3d(ax,a1[0],a1[1],a1[2],'r')

ut.plotPoint3d(ax,a2[0],a2[1],a2[2],'r')

ut.plotPoint3d(ax,a3[0],a3[1],a3[2],'r')

ut.plotPoint3d(ax,b[0],b[1],b[2],'b')

ut.plotPoint3d(ax,bhat[0],bhat[1],bhat[2],'b')

ax.plot([b[0],bhat[0]],[b[1],bhat[1]],'b--',zs=[b[2],bhat[2]],lw=2)

#ut.plotPoint3d(ax,0,0,0,'b')

plt.title(r'$A = [\mathbf{a_1}\;\mathbf{a_2}\;\mathbf{a_3}]$',size=20)

ax.view_init(azim=26.0,elev=-77.0)

Example.

Find a least-squares solution for $A\mathbf{x} = \mathbf{b}$ for

$$A = \begin{bmatrix}1&1&0&0\\1&1&0&0\\1&0&1&0\\1&0&1&0\\1&0&0&1\\1&0&0&1\end{bmatrix},\;\;\; \mathbf{b} = \begin{bmatrix}-3\\-1\\0\\2\\5\\1\end{bmatrix}.$$Solution. Compute

$$A^TA = \begin{bmatrix}1&1&1&1&1&1\\1&1&0&0&0&0\\0&0&1&1&0&0\\0&0&0&0&1&1\end{bmatrix}\begin{bmatrix}1&1&0&0\\1&1&0&0\\1&0&1&0\\1&0&1&0\\1&0&0&1\\1&0&0&1\end{bmatrix} = \begin{bmatrix}6&2&2&2\\2&2&0&0\\2&0&2&0\\2&0&0&2\end{bmatrix}$$$$A^T\mathbf{b} = \begin{bmatrix}1&1&1&1&1&1\\1&1&0&0&0&0\\0&0&1&1&0&0\\0&0&0&0&1&1\end{bmatrix}\begin{bmatrix}-3\\-1\\0\\2\\5\\1\end{bmatrix} = \begin{bmatrix}4\\-4\\2\\6\end{bmatrix}$$To solve $A^TA\mathbf{x} = A^T\mathbf{b},$ we'll use row reduction. The augmented matrix $[A^TA\; A^T\mathbf{b}]$ is:

$$\begin{bmatrix}6&2&2&2&4\\2&2&0&0&-4\\2&0&2&0&2\\2&0&0&2&6\end{bmatrix} \sim \begin{bmatrix}1&0&0&1&3\\0&1&0&-1&-5\\0&0&1&-1&-2\\0&0&0&0&0\end{bmatrix}$$Since there is a row of zeros, we know the columns of $A^TA$ are linearly dependent. This occurs because the columns of $A$ are linearly dependent.

The general solution is $x_1 = 3-x_4$, $x_2 = -5+x_4$, $x_3 = -2 + x_4$, and $x_4$ is free.

So the general least-squares solution of $A\mathbf{x} = \mathbf{b}$ has the form

$$\mathbf{\hat{x}} = \begin{bmatrix}3\\-5\\-2\\0\end{bmatrix} + x_4\begin{bmatrix}-1\\1\\1\\1\end{bmatrix}$$Keep in mind that the orthogonal projection $\hat{\mathbf{b}}$ is always unique.

The reason that there are multiple solutions to this least squares problem is that there are multiple ways to construct $\hat{\mathbf{b}}$.

The reason that there are multiple ways to construct $\hat{\mathbf{b}}$ is that the columns of $A$ are linearly dependent, so any vector in the column space of $A$ can be constructed in multiple ways.

Here is a theorem that allows use to identify when there are multiple least-squares solutions.

Theorem. Let $A$ be an $m\times n$ matrix. The following statements are equivalent:

- The equation $A\mathbf{x} = \mathbf{b}$ has a unique least-squares solution for each $\mathbf{b}$ in $\mathbb{R}^m.$

- The columns of $A$ are linearly independent.

- The matrix $A^TA$ is invertible.

When these statements are true, the least-squares solution $\mathbf{\hat{x}}$ is given by:

$$\mathbf{\hat{x}} = (A^TA)^{-1}A^T\mathbf{b}$$Finding $\hat{\mathbf{b}}$ directly.

When $A^TA$ is invertible, and $\hat{\mathbf{b}}$ is unique, we can put together the two equations

$$\mathbf{\hat{x}} = (A^TA)^{-1}A^T\mathbf{b}$$and

$$A\mathbf{\hat{x}} = \hat{\mathbf{b}}$$to get:

$$\hat{\mathbf{b}} = A(A^TA)^{-1}A^T\mathbf{b}$$Let's stop and look at this another way. Up until now we have seen how to project a point onto a line, or on to a subspace with an orthogonal basis.

Now here is a formula for projection onto a subspace given an arbitrary basis. This is a general formula that can be very useful.