SciPy optimize provides functions for minimizing (or maximizing) objective functions, possibly subject to constraints. It includes solvers for nonlinear problems (with support for both local and global optimization algorithms), linear programming, constrained and nonlinear least-squares, root finding, and curve fitting.

- Scalar Functions Optimization

- Local (Multivariate) Optimization: `method` options of `scipy.optimize.minimize`

  - 'Nelder-Mead', 'Powell', 'CG', 'BFGS', 'Newton-CG'
  - 'L-BFGS-B', 'TNC', 'COBYLA', 'SLSQP', 'trust-constr'
  - 'dogleg', 'trust-ncg', 'trust-exact', 'trust-krylov'

- Global Optimization

- Least-squares and Curve Fitting

- Root finding
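All of the local methods listed are reached through the same `minimize` call; only the `method` string, plus whatever derivatives that method can use, changes. The sketch below is a minimal illustration, assuming SciPy's built-in `rosen`, `rosen_der` and `rosen_hess` helpers as the objective, gradient and Hessian. The particular methods picked, and which derivatives are passed to each, are illustrative choices rather than anything prescribed by this notebook.

```python
import numpy as np
from scipy.optimize import minimize, rosen, rosen_der, rosen_hess

x0 = np.array([1.3, 0.7, 0.8, 1.9, 1.2])

# Illustrative selection: BFGS uses only the gradient, while the
# Newton-type and trust-region methods below also use the Hessian.
derivatives = {
    "BFGS": {"jac": rosen_der},
    "Newton-CG": {"jac": rosen_der, "hess": rosen_hess},
    "trust-ncg": {"jac": rosen_der, "hess": rosen_hess},
    "trust-exact": {"jac": rosen_der, "hess": rosen_hess},
}

results = {}
for method, derivs in derivatives.items():
    # Same objective and starting point; only the method string changes.
    res = minimize(rosen, x0, method=method, **derivs)
    results[method] = res
    print(f"{method:12s} success={res.success} f={res.fun:.2e} nfev={res.nfev}")
```

Derivatives are attached per method because SciPy emits a warning when it is given information a method does not use (for example, a Hessian passed to BFGS).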

Conjugate Gradient Method (Local Optimization)

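Method CG uses a nonlinear conjugate gradient algorithm by Polak and Ribière, a variant of the Fletcher-Reeves method described in Nocedal and Wright, Numerical Optimization (Springer, 2006), pp. 120-122. Only first derivatives (the gradient) are used; no Hessian is required. If no gradient function is supplied, the gradient is approximated by finite differences.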
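The core of the Polak-Ribière update fits in a few lines. Each iteration steps along a search direction d_k and then forms the next direction as d_{k+1} = -g_{k+1} + beta_k d_k, where g is the gradient and beta_k = g_{k+1}^T (g_{k+1} - g_k) / (g_k^T g_k); Fletcher-Reeves uses beta_k = g_{k+1}^T g_{k+1} / (g_k^T g_k) instead. To keep the sketch short and checkable, it assumes a convex quadratic objective f(x) = 0.5 x^T A x - b^T x, for which the exact step length along d has a closed form. SciPy's CG works on general functions with an inexact line search, so this is not its implementation, and the helper name `nonlinear_cg_quadratic` is hypothetical.

```python
import numpy as np

def nonlinear_cg_quadratic(A, b, x0, rule="PR", tol=1e-10, max_iter=100):
    """Hypothetical helper: nonlinear CG on f(x) = 0.5 x^T A x - b^T x.

    A must be symmetric positive definite. The exact line search below is
    only possible because f is quadratic.
    """
    x = np.asarray(x0, dtype=float).copy()
    g = A @ x - b          # gradient of f at x
    d = -g                 # first direction: steepest descent
    n_iter = 0
    while np.linalg.norm(g) > tol and n_iter < max_iter:
        alpha = -(g @ d) / (d @ A @ d)   # exact minimizer of f(x + alpha*d)
        x = x + alpha * d
        g_new = A @ x - b
        if rule == "PR":
            beta = g_new @ (g_new - g) / (g @ g)   # Polak-Ribiere
        else:
            beta = (g_new @ g_new) / (g @ g)       # Fletcher-Reeves
        d = -g_new + beta * d                      # next search direction
        g = g_new
        n_iter += 1
    return x, n_iter

A = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])
b = np.array([1.0, 2.0, 3.0])

x_pr, it_pr = nonlinear_cg_quadratic(A, b, np.zeros(3), rule="PR")
x_fr, it_fr = nonlinear_cg_quadratic(A, b, np.zeros(3), rule="FR")
print(x_pr, it_pr)
print(x_fr, it_fr)
```

On a quadratic with exact line searches both rules reduce to linear conjugate gradients and reach the minimizer A^{-1} b in at most n iterations (here n = 3). On a nonquadratic function such as Rosenbrock the two rules differ, and Polak-Ribière is often the more robust choice in practice.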

```python
import numpy as np
import numpy.linalg as la
import scipy.optimize as sopt
from scipy.optimize import minimize
import matplotlib.pyplot as pt
from mpl_toolkits.mplot3d import axes3d
%matplotlib inline
import seaborn as sns
sns.set()
```

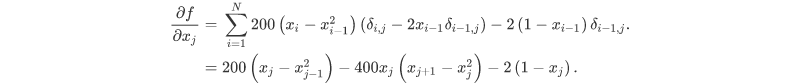

The minimum value of this function is 0, which is attained when every component of x equals 1, i.e. at x = (1, 1, ..., 1).

```python
def rosen(x):
    """The Rosenbrock function"""
    return np.sum(100.0*(x[1:] - x[:-1]**2.0)**2.0 + (1 - x[:-1])**2.0)
```
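A quick sanity check on this definition, using SciPy's reference implementation `scipy.optimize.rosen` as the point of comparison: the hand-written version should agree with it on arbitrary inputs and return exactly 0 at the all-ones vector. Both implement f(x) = sum over i = 1..N-1 of [100 (x_{i+1} - x_i^2)^2 + (1 - x_i)^2]. The definition is repeated so the snippet runs on its own; the random sample points are arbitrary.

```python
import numpy as np
from scipy.optimize import rosen as scipy_rosen

def rosen(x):
    """The Rosenbrock function (same formula as the cell above)."""
    return np.sum(100.0*(x[1:] - x[:-1]**2.0)**2.0 + (1 - x[:-1])**2.0)

rng = np.random.default_rng(0)
samples = rng.uniform(-2.0, 2.0, size=(10, 5))   # ten random 5-D points

# Largest disagreement with SciPy's implementation over the samples
max_diff = max(abs(rosen(x) - scipy_rosen(x)) for x in samples)
print(max_diff)

# Value at the known global minimizer (1, 1, 1, 1, 1)
print(rosen(np.ones(5)))
```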

```python
x0 = np.array([1.3, 0.7, 0.8, 1.9, 1.2])
```

```python
res = minimize(rosen, x0, method='CG', options={'disp': True})
```

```
Optimization terminated successfully.
Current function value: 0.000000
Iterations: 67
Function evaluations: 973
Gradient evaluations: 139
```

```python
res.x
```

```
array([0.99999927, 0.99999853, 0.99999706, 0.99999411, 0.99998819])
```

Every component is within about 1.2e-5 of the true minimizer x = (1, 1, 1, 1, 1).
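The CG call above passes no gradient, so `minimize` approximates it by finite differences; those extra objective evaluations are counted among the reported function evaluations, which is why that count is far larger than the gradient count. A minimal sketch of supplying the exact gradient through the `jac` argument, assuming SciPy's `rosen` and `rosen_der` helpers stand in for the notebook's own function. The `gtol` value is SciPy's documented default for CG, written out only to show where the stopping tolerance is set.

```python
import numpy as np
from scipy.optimize import minimize, rosen, rosen_der

x0 = np.array([1.3, 0.7, 0.8, 1.9, 1.2])

# jac=rosen_der supplies the exact gradient, so no finite-difference
# evaluations of the objective are needed.
res = minimize(rosen, x0, method='CG', jac=rosen_der, options={'gtol': 1e-5})

# Iterations, objective evaluations and gradient evaluations
print(res.success, res.nit, res.nfev, res.njev)
print(res.x)
```

The exact counts depend on the SciPy version, so they are not quoted here.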

References

- https://andreask.cs.illinois.edu/cs357-s15/public/demos/12-optimization/Steepest%20Descent.html

- https://scipy-lectures.org/advanced/mathematical_optimization/auto_examples/plot_gradient_descent.html

- https://docs.scipy.org/doc/scipy/reference/tutorial/optimize.html

- https://scipy-cookbook.readthedocs.io/index.html

- http://folk.ntnu.no/leifh/teaching/tkt4140/._main000.html