Ungraded Lab: Hyperparameter tuning and model training with TFX

In this lab, you will again tune hyperparameters, but this time within a TensorFlow Extended (TFX) pipeline.

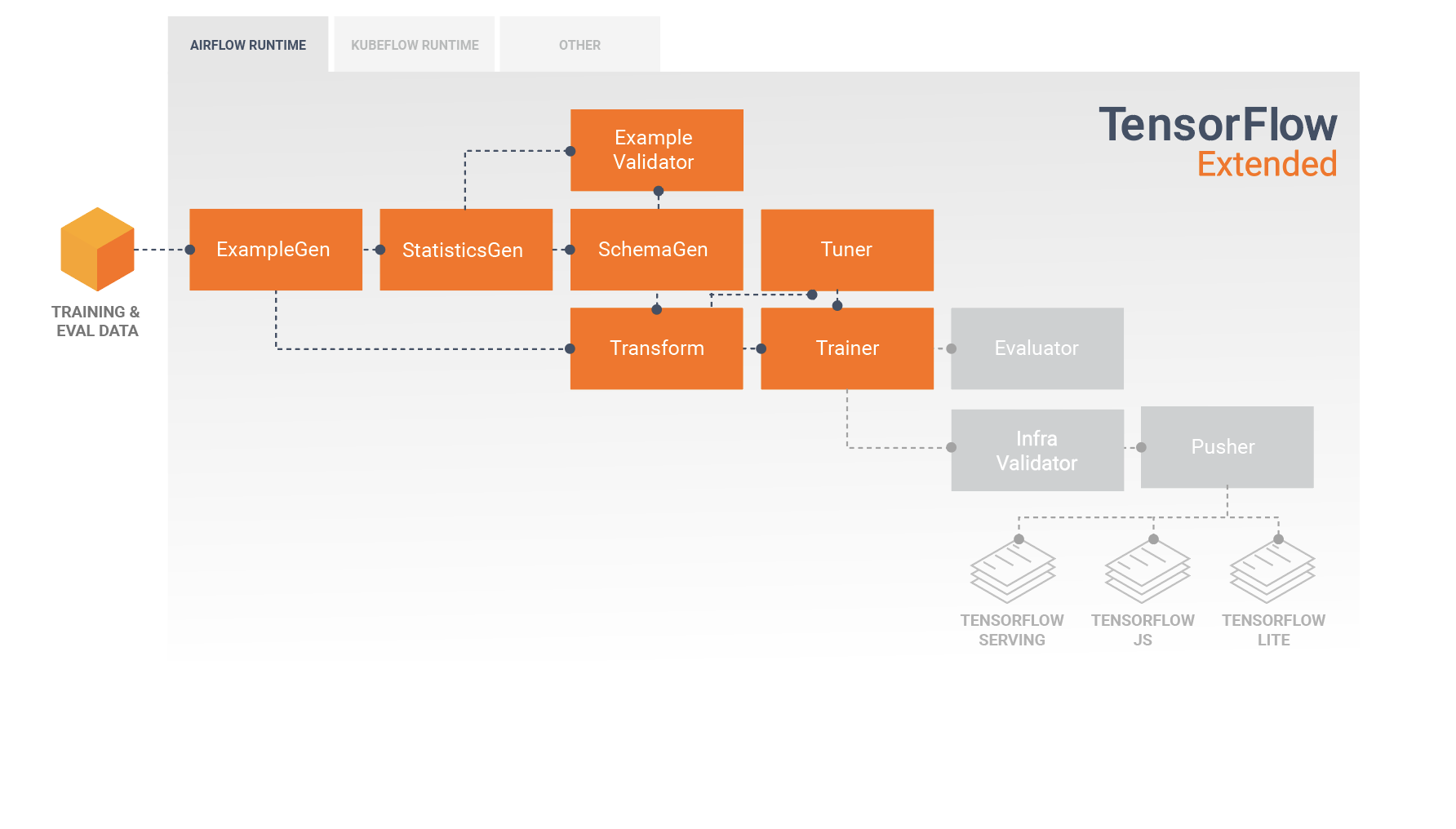

Course 2 of this specialization introduced the TFX components for data ingestion, validation, and transformation. In this notebook, you will work with two more, both related to model development and training: Tuner and Trainer.

image source: https://www.tensorflow.org/tfx/guide

- The Tuner utilizes the Keras Tuner API under the hood to tune your model's hyperparameters.

- You can take the best set of hyperparameters found by the Tuner component and feed it into the Trainer component, which then trains your model with those values.
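The handoff described in the two points above can be sketched in plain Python. This is only a conceptual illustration, not the TFX or Keras Tuner API: the search space, the `toy_validation_loss` scoring function, and the `tune` and `train` helpers are all hypothetical names made up for this example, and a simple random search stands in for the search algorithms that Keras Tuner provides.

```python
import random

# Hypothetical search space: names and candidate values are illustrative only.
SEARCH_SPACE = {
    "learning_rate": [1e-2, 1e-3, 1e-4],
    "units": [32, 64, 128, 256],
}


def toy_validation_loss(hparams):
    """Stand-in for training a model and measuring its validation loss.

    A real tuner would fit a model here. This toy function only scores the
    hyperparameters so that the example runs without any ML dependencies.
    """
    lr_penalty = abs(hparams["learning_rate"] - 1e-3) * 100
    units_penalty = abs(hparams["units"] - 128) / 128
    return lr_penalty + units_penalty


def tune(search_space, objective, max_trials, seed=0):
    """Random search: sample configurations and keep the lowest-loss one."""
    rng = random.Random(seed)
    best_hparams, best_loss = None, float("inf")
    for _ in range(max_trials):
        candidate = {
            name: rng.choice(values) for name, values in search_space.items()
        }
        loss = objective(candidate)
        if loss < best_loss:
            best_hparams, best_loss = candidate, loss
    return best_hparams


def train(hparams):
    """Stand-in for the training step, which consumes the tuned values."""
    return {"hparams": hparams, "val_loss": toy_validation_loss(hparams)}


# Tuning step: search for the best hyperparameters.
best = tune(SEARCH_SPACE, toy_validation_loss, max_trials=20)

# Training step: reuse the tuned values instead of hard-coding them.
result = train(best)
print(result)
```

The point of the sketch is the separation of concerns: the tuning step only produces a set of hyperparameters, and the training step only consumes one, so either step can be rerun or replaced independently.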

You will again be working with the FashionMNIST dataset and will feed it through the TFX pipeline up to the Trainer component. You will quickly review the earlier components from Course 2, then focus on the two new components introduced.

Let's begin!

Setup

!pip install -U pip

!pip install -U tfx==1.3

# These are downgraded to work with the packages used by TFX 1.3

# Please do not delete because it will cause import errors in the next cell

!pip install --upgrade tensorflow-estimator==2.6.0

!pip install --upgrade keras==2.6.0

Looking in indexes: https://pypi.org/simple, https://us-python.pkg.dev/colab-wheels/public/simple/

Requirement already satisfied: pip in /usr/local/lib/python3.7/dist-packages (21.1.3)

Collecting pip

Downloading pip-22.1.2-py3-none-any.whl (2.1 MB)

|████████████████████████████████| 2.1 MB 36.2 MB/s

Installing collected packages: pip

Attempting uninstall: pip

Found existing installation: pip 21.1.3

Uninstalling pip-21.1.3:

Successfully uninstalled pip-21.1.3

Successfully installed pip-22.1.2

Looking in indexes: https://pypi.org/simple, https://us-python.pkg.dev/colab-wheels/public/simple/

Collecting tfx==1.3

Downloading tfx-1.3.0-py3-none-any.whl (2.4 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 2.4/2.4 MB 31.4 MB/s eta 0:00:00

Requirement already satisfied: click<8,>=7 in /usr/local/lib/python3.7/dist-packages (from tfx==1.3) (7.1.2)

Collecting google-cloud-bigquery<3,>=2.26.0

Downloading google_cloud_bigquery-2.34.4-py2.py3-none-any.whl (206 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 206.6/206.6 kB 21.1 MB/s eta 0:00:00

Requirement already satisfied: protobuf<4,>=3.13 in /usr/local/lib/python3.7/dist-packages (from tfx==1.3) (3.17.3)

Requirement already satisfied: tensorflow-hub<0.13,>=0.9.0 in /usr/local/lib/python3.7/dist-packages (from tfx==1.3) (0.12.0)

Collecting tensorflow-data-validation<1.4.0,>=1.3.0

Downloading tensorflow_data_validation-1.3.0-cp37-cp37m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl (1.4 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.4/1.4 MB 36.7 MB/s eta 0:00:00

Collecting tfx-bsl<1.4.0,>=1.3.0

Downloading tfx_bsl-1.3.0-cp37-cp37m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl (19.0 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 19.0/19.0 MB 43.7 MB/s eta 0:00:00

Requirement already satisfied: jinja2<4,>=2.7.3 in /usr/local/lib/python3.7/dist-packages (from tfx==1.3) (2.11.3)

Collecting pyarrow<3,>=1

Downloading pyarrow-2.0.0-cp37-cp37m-manylinux2014_x86_64.whl (17.7 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 17.7/17.7 MB 46.1 MB/s eta 0:00:00

Collecting google-apitools<1,>=0.5

Downloading google_apitools-0.5.32-py3-none-any.whl (135 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 135.7/135.7 kB 7.4 MB/s eta 0:00:00

Collecting tensorflow-serving-api!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15

Downloading tensorflow_serving_api-2.9.0-py2.py3-none-any.whl (37 kB)

Collecting ml-pipelines-sdk==1.3.0

Downloading ml_pipelines_sdk-1.3.0-py3-none-any.whl (1.2 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.2/1.2 MB 32.8 MB/s eta 0:00:00

Collecting absl-py<0.13,>=0.9

Downloading absl_py-0.12.0-py3-none-any.whl (129 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 129.4/129.4 kB 13.7 MB/s eta 0:00:00

Requirement already satisfied: grpcio<2,>=1.28.1 in /usr/local/lib/python3.7/dist-packages (from tfx==1.3) (1.46.3)

Requirement already satisfied: pyyaml<6,>=3.12 in /usr/local/lib/python3.7/dist-packages (from tfx==1.3) (3.13)

Requirement already satisfied: google-api-python-client<2,>=1.8 in /usr/local/lib/python3.7/dist-packages (from tfx==1.3) (1.12.11)

Requirement already satisfied: portpicker<2,>=1.3.1 in /usr/local/lib/python3.7/dist-packages (from tfx==1.3) (1.3.9)

Collecting tensorflow-model-analysis<0.35,>=0.34.1

Downloading tensorflow_model_analysis-0.34.1-py3-none-any.whl (1.8 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.8/1.8 MB 50.7 MB/s eta 0:00:00

Collecting ml-metadata<1.4.0,>=1.3.0

Downloading ml_metadata-1.3.0-cp37-cp37m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl (6.5 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 6.5/6.5 MB 54.7 MB/s eta 0:00:00

Collecting apache-beam[gcp]<3,>=2.32

Downloading apache_beam-2.40.0-cp37-cp37m-manylinux2010_x86_64.whl (10.9 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 10.9/10.9 MB 54.3 MB/s eta 0:00:00

Collecting kubernetes<13,>=10.0.1

Downloading kubernetes-12.0.1-py2.py3-none-any.whl (1.7 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.7/1.7 MB 53.4 MB/s eta 0:00:00

Collecting attrs<21,>=19.3.0

Downloading attrs-20.3.0-py2.py3-none-any.whl (49 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 49.3/49.3 kB 5.7 MB/s eta 0:00:00

Collecting keras-tuner<2,>=1.0.4

Downloading keras_tuner-1.1.2-py3-none-any.whl (133 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 133.7/133.7 kB 14.7 MB/s eta 0:00:00

Collecting google-cloud-aiplatform<2,>=0.5.0

Downloading google_cloud_aiplatform-1.15.0-py2.py3-none-any.whl (2.1 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 2.1/2.1 MB 76.6 MB/s eta 0:00:00

Collecting docker<5,>=4.1

Downloading docker-4.4.4-py2.py3-none-any.whl (147 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 147.0/147.0 kB 17.5 MB/s eta 0:00:00

Collecting numpy<1.20,>=1.16

Downloading numpy-1.19.5-cp37-cp37m-manylinux2010_x86_64.whl (14.8 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 14.8/14.8 MB 28.8 MB/s eta 0:00:00

Collecting packaging<21,>=20

Downloading packaging-20.9-py2.py3-none-any.whl (40 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 40.9/40.9 kB 5.0 MB/s eta 0:00:00

Requirement already satisfied: tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2 in /usr/local/lib/python3.7/dist-packages (from tfx==1.3) (2.8.2+zzzcolab20220527125636)

Collecting tensorflow-transform<1.4.0,>=1.3.0

Downloading tensorflow_transform-1.3.0-py3-none-any.whl (407 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 407.7/407.7 kB 39.3 MB/s eta 0:00:00

Requirement already satisfied: six in /usr/local/lib/python3.7/dist-packages (from absl-py<0.13,>=0.9->tfx==1.3) (1.15.0)

Collecting proto-plus<2,>=1.7.1

Downloading proto_plus-1.20.6-py3-none-any.whl (46 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 46.4/46.4 kB 6.2 MB/s eta 0:00:00

Requirement already satisfied: httplib2<0.21.0,>=0.8 in /usr/local/lib/python3.7/dist-packages (from apache-beam[gcp]<3,>=2.32->tfx==1.3) (0.17.4)

Requirement already satisfied: python-dateutil<3,>=2.8.0 in /usr/local/lib/python3.7/dist-packages (from apache-beam[gcp]<3,>=2.32->tfx==1.3) (2.8.2)

Requirement already satisfied: pydot<2,>=1.2.0 in /usr/local/lib/python3.7/dist-packages (from apache-beam[gcp]<3,>=2.32->tfx==1.3) (1.3.0)

Requirement already satisfied: pytz>=2018.3 in /usr/local/lib/python3.7/dist-packages (from apache-beam[gcp]<3,>=2.32->tfx==1.3) (2022.1)

Collecting cloudpickle<3,>=2.1.0

Downloading cloudpickle-2.1.0-py3-none-any.whl (25 kB)

Collecting pymongo<4.0.0,>=3.8.0

Downloading pymongo-3.12.3-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (508 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 508.1/508.1 kB 45.5 MB/s eta 0:00:00

Collecting requests<3.0.0,>=2.24.0

Downloading requests-2.28.1-py3-none-any.whl (62 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 62.8/62.8 kB 7.7 MB/s eta 0:00:00

Requirement already satisfied: crcmod<2.0,>=1.7 in /usr/local/lib/python3.7/dist-packages (from apache-beam[gcp]<3,>=2.32->tfx==1.3) (1.7)

Collecting dill<0.3.2,>=0.3.1.1

Downloading dill-0.3.1.1.tar.gz (151 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 152.0/152.0 kB 2.1 MB/s eta 0:00:00

Preparing metadata (setup.py) ... done

Requirement already satisfied: typing-extensions>=3.7.0 in /usr/local/lib/python3.7/dist-packages (from apache-beam[gcp]<3,>=2.32->tfx==1.3) (4.1.1)

Collecting orjson<4.0

Downloading orjson-3.7.7-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (272 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 272.8/272.8 kB 26.9 MB/s eta 0:00:00

Collecting fastavro<2,>=0.23.6

Downloading fastavro-1.5.2-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (2.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 2.3/2.3 MB 78.0 MB/s eta 0:00:00

Collecting hdfs<3.0.0,>=2.1.0

Downloading hdfs-2.7.0-py3-none-any.whl (34 kB)

Requirement already satisfied: cachetools<5,>=3.1.0 in /usr/local/lib/python3.7/dist-packages (from apache-beam[gcp]<3,>=2.32->tfx==1.3) (4.2.4)

Collecting google-cloud-dlp<4,>=3.0.0

Downloading google_cloud_dlp-3.7.1-py2.py3-none-any.whl (118 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 118.2/118.2 kB 15.1 MB/s eta 0:00:00

Collecting google-cloud-pubsublite<2,>=1.2.0

Downloading google_cloud_pubsublite-1.4.2-py2.py3-none-any.whl (265 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 265.8/265.8 kB 28.7 MB/s eta 0:00:00

Collecting google-cloud-bigquery-storage>=2.6.3

Downloading google_cloud_bigquery_storage-2.13.2-py2.py3-none-any.whl (180 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 180.2/180.2 kB 20.4 MB/s eta 0:00:00

Collecting google-cloud-spanner<2,>=1.13.0

Downloading google_cloud_spanner-1.19.3-py2.py3-none-any.whl (255 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 255.6/255.6 kB 28.9 MB/s eta 0:00:00

Collecting grpcio-gcp<1,>=0.2.2

Downloading grpcio_gcp-0.2.2-py2.py3-none-any.whl (9.4 kB)

Requirement already satisfied: google-cloud-datastore<2,>=1.8.0 in /usr/local/lib/python3.7/dist-packages (from apache-beam[gcp]<3,>=2.32->tfx==1.3) (1.8.0)

Requirement already satisfied: google-auth<3,>=1.18.0 in /usr/local/lib/python3.7/dist-packages (from apache-beam[gcp]<3,>=2.32->tfx==1.3) (1.35.0)

Requirement already satisfied: google-cloud-core<2,>=0.28.1 in /usr/local/lib/python3.7/dist-packages (from apache-beam[gcp]<3,>=2.32->tfx==1.3) (1.0.3)

Collecting google-cloud-videointelligence<2,>=1.8.0

Downloading google_cloud_videointelligence-1.16.3-py2.py3-none-any.whl (183 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 183.9/183.9 kB 24.3 MB/s eta 0:00:00

Collecting google-auth-httplib2<0.2.0,>=0.1.0

Downloading google_auth_httplib2-0.1.0-py2.py3-none-any.whl (9.3 kB)

Collecting google-apitools<1,>=0.5

Downloading google-apitools-0.5.31.tar.gz (173 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 173.5/173.5 kB 22.3 MB/s eta 0:00:00

Preparing metadata (setup.py) ... done

Collecting google-cloud-language<2,>=1.3.0

Downloading google_cloud_language-1.3.2-py2.py3-none-any.whl (83 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 83.6/83.6 kB 11.5 MB/s eta 0:00:00

Collecting google-cloud-vision<2,>=0.38.0

Downloading google_cloud_vision-1.0.2-py2.py3-none-any.whl (435 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 435.1/435.1 kB 42.1 MB/s eta 0:00:00

Collecting google-cloud-pubsub<3,>=2.1.0

Downloading google_cloud_pubsub-2.13.0-py2.py3-none-any.whl (234 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 234.5/234.5 kB 28.1 MB/s eta 0:00:00

Collecting google-cloud-bigtable<2,>=0.31.1

Downloading google_cloud_bigtable-1.7.2-py2.py3-none-any.whl (267 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 267.7/267.7 kB 29.9 MB/s eta 0:00:00

Collecting google-cloud-recommendations-ai<=0.2.0,>=0.1.0

Downloading google_cloud_recommendations_ai-0.2.0-py2.py3-none-any.whl (180 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 180.2/180.2 kB 14.7 MB/s eta 0:00:00

Collecting websocket-client>=0.32.0

Downloading websocket_client-1.3.3-py3-none-any.whl (54 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 54.3/54.3 kB 7.0 MB/s eta 0:00:00

Requirement already satisfied: google-api-core<3dev,>=1.21.0 in /usr/local/lib/python3.7/dist-packages (from google-api-python-client<2,>=1.8->tfx==1.3) (1.31.6)

Requirement already satisfied: uritemplate<4dev,>=3.0.0 in /usr/local/lib/python3.7/dist-packages (from google-api-python-client<2,>=1.8->tfx==1.3) (3.0.1)

Collecting fasteners>=0.14

Downloading fasteners-0.17.3-py3-none-any.whl (18 kB)

Requirement already satisfied: oauth2client>=1.4.12 in /usr/local/lib/python3.7/dist-packages (from google-apitools<1,>=0.5->tfx==1.3) (4.1.3)

Collecting google-cloud-storage<3.0.0dev,>=1.32.0

Downloading google_cloud_storage-2.4.0-py2.py3-none-any.whl (106 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 107.0/107.0 kB 14.1 MB/s eta 0:00:00

Collecting google-cloud-resource-manager<3.0.0dev,>=1.3.3

Downloading google_cloud_resource_manager-1.5.1-py2.py3-none-any.whl (230 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 230.2/230.2 kB 28.2 MB/s eta 0:00:00

Collecting protobuf<4,>=3.13

Downloading protobuf-3.20.1-cp37-cp37m-manylinux_2_5_x86_64.manylinux1_x86_64.whl (1.0 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.0/1.0 MB 59.9 MB/s eta 0:00:00

Collecting google-cloud-core<2,>=0.28.1

Downloading google_cloud_core-1.7.2-py2.py3-none-any.whl (28 kB)

Collecting google-resumable-media<3.0dev,>=0.6.0

Downloading google_resumable_media-2.3.3-py2.py3-none-any.whl (76 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 76.9/76.9 kB 10.9 MB/s eta 0:00:00

Requirement already satisfied: MarkupSafe>=0.23 in /usr/local/lib/python3.7/dist-packages (from jinja2<4,>=2.7.3->tfx==1.3) (2.0.1)

Requirement already satisfied: tensorboard in /usr/local/lib/python3.7/dist-packages (from keras-tuner<2,>=1.0.4->tfx==1.3) (2.8.0)

Collecting kt-legacy

Downloading kt_legacy-1.0.4-py3-none-any.whl (9.6 kB)

Requirement already satisfied: ipython in /usr/local/lib/python3.7/dist-packages (from keras-tuner<2,>=1.0.4->tfx==1.3) (5.5.0)

Requirement already satisfied: setuptools>=21.0.0 in /usr/local/lib/python3.7/dist-packages (from kubernetes<13,>=10.0.1->tfx==1.3) (57.4.0)

Requirement already satisfied: certifi>=14.05.14 in /usr/local/lib/python3.7/dist-packages (from kubernetes<13,>=10.0.1->tfx==1.3) (2022.6.15)

Requirement already satisfied: requests-oauthlib in /usr/local/lib/python3.7/dist-packages (from kubernetes<13,>=10.0.1->tfx==1.3) (1.3.1)

Requirement already satisfied: urllib3>=1.24.2 in /usr/local/lib/python3.7/dist-packages (from kubernetes<13,>=10.0.1->tfx==1.3) (1.24.3)

Requirement already satisfied: pyparsing>=2.0.2 in /usr/local/lib/python3.7/dist-packages (from packaging<21,>=20->tfx==1.3) (3.0.9)

Requirement already satisfied: google-pasta>=0.1.1 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (0.2.0)

Requirement already satisfied: keras<2.9,>=2.8.0rc0 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (2.8.0)

Requirement already satisfied: keras-preprocessing>=1.1.1 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (1.1.2)

Requirement already satisfied: gast>=0.2.1 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (0.5.3)

Requirement already satisfied: h5py>=2.9.0 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (3.1.0)

Collecting tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2

Downloading tensorflow-2.9.1-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (511.7 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 511.7/511.7 MB 3.3 MB/s eta 0:00:00

Collecting keras<2.10.0,>=2.9.0rc0

Downloading keras-2.9.0-py2.py3-none-any.whl (1.6 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.6/1.6 MB 19.7 MB/s eta 0:00:00

Collecting tensorboard

Downloading tensorboard-2.9.1-py3-none-any.whl (5.8 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 5.8/5.8 MB 90.7 MB/s eta 0:00:00

Collecting tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2

Downloading tensorflow-2.9.0-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (511.7 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 511.7/511.7 MB 3.6 MB/s eta 0:00:00

Downloading https://us-python.pkg.dev/colab-wheels/public/tensorflow/tensorflow-2.8.2%2Bzzzcolab20220629235552-cp37-cp37m-linux_x86_64.whl (668.6 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 668.6/668.6 MB 2.8 MB/s eta 0:00:00

Downloading https://us-python.pkg.dev/colab-wheels/public/tensorflow/tensorflow-2.8.2%2Bzzzcolab20220523105045-cp37-cp37m-linux_x86_64.whl (668.6 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 668.6/668.6 MB 2.6 MB/s eta 0:00:00

Downloading tensorflow-2.8.2-cp37-cp37m-manylinux2010_x86_64.whl (497.9 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 497.9/497.9 MB 3.7 MB/s eta 0:00:00

Downloading https://us-python.pkg.dev/colab-wheels/public/tensorflow/tensorflow-2.8.1%2Bzzzcolab20220518083849-cp37-cp37m-linux_x86_64.whl (668.6 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 668.6/668.6 MB 2.6 MB/s eta 0:00:00

Downloading https://us-python.pkg.dev/colab-wheels/public/tensorflow/tensorflow-2.8.1%2Bzzzcolab20220516111314-cp37-cp37m-linux_x86_64.whl (668.6 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 668.6/668.6 MB 2.5 MB/s eta 0:00:00

Downloading tensorflow-2.8.1-cp37-cp37m-manylinux2010_x86_64.whl (497.9 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 497.9/497.9 MB 3.5 MB/s eta 0:00:00

Downloading https://us-python.pkg.dev/colab-wheels/public/tensorflow/tensorflow-2.8.0%2Bzzzcolab20220506162203-cp37-cp37m-linux_x86_64.whl (668.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 668.3/668.3 MB 2.6 MB/s eta 0:00:00

Collecting tf-estimator-nightly==2.8.0.dev2021122109

Downloading tf_estimator_nightly-2.8.0.dev2021122109-py2.py3-none-any.whl (462 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 462.5/462.5 kB 37.6 MB/s eta 0:00:00

Collecting tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2

Downloading tensorflow-2.8.0-cp37-cp37m-manylinux2010_x86_64.whl (497.5 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 497.5/497.5 MB 3.5 MB/s eta 0:00:00

Downloading https://us-python.pkg.dev/colab-wheels/public/tensorflow/tensorflow-2.7.3%2Bzzzcolab20220523111007-cp37-cp37m-linux_x86_64.whl (671.4 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 671.4/671.4 MB 2.5 MB/s eta 0:00:00

Collecting tensorflow-estimator<2.8,~=2.7.0rc0

Downloading tensorflow_estimator-2.7.0-py2.py3-none-any.whl (463 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 463.1/463.1 kB 40.5 MB/s eta 0:00:00

Collecting keras<2.8,>=2.7.0rc0

Downloading keras-2.7.0-py2.py3-none-any.whl (1.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.3/1.3 MB 35.8 MB/s eta 0:00:00

Requirement already satisfied: opt-einsum>=2.3.2 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (3.3.0)

Requirement already satisfied: termcolor>=1.1.0 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (1.1.0)

Requirement already satisfied: wrapt>=1.11.0 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (1.14.1)

Requirement already satisfied: astunparse>=1.6.0 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (1.6.3)

Requirement already satisfied: wheel<1.0,>=0.32.0 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (0.37.1)

Requirement already satisfied: flatbuffers<3.0,>=1.12 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (2.0)

Requirement already satisfied: libclang>=9.0.1 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (14.0.1)

Collecting protobuf<4,>=3.13

Downloading protobuf-3.19.4-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (1.1 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.1/1.1 MB 32.4 MB/s eta 0:00:00

Requirement already satisfied: tensorflow-io-gcs-filesystem>=0.21.0 in /usr/local/lib/python3.7/dist-packages (from tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (0.26.0)

Collecting gast<0.5.0,>=0.2.1

Downloading gast-0.4.0-py3-none-any.whl (9.8 kB)

Requirement already satisfied: pandas<2,>=1.0 in /usr/local/lib/python3.7/dist-packages (from tensorflow-data-validation<1.4.0,>=1.3.0->tfx==1.3) (1.3.5)

Collecting joblib<0.15,>=0.12

Downloading joblib-0.14.1-py2.py3-none-any.whl (294 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 294.9/294.9 kB 30.2 MB/s eta 0:00:00

Collecting tensorflow-metadata<1.3,>=1.2

Downloading tensorflow_metadata-1.2.0-py3-none-any.whl (48 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 48.5/48.5 kB 6.5 MB/s eta 0:00:00

Collecting ipython

Downloading ipython-7.34.0-py3-none-any.whl (793 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 793.8/793.8 kB 34.7 MB/s eta 0:00:00

Requirement already satisfied: ipywidgets<8,>=7 in /usr/local/lib/python3.7/dist-packages (from tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (7.7.0)

Requirement already satisfied: scipy<2,>=1.4.1 in /usr/local/lib/python3.7/dist-packages (from tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (1.4.1)

INFO: pip is looking at multiple versions of tensorflow-serving-api to determine which version is compatible with other requirements. This could take a while.

Collecting tensorflow-serving-api!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15

Downloading tensorflow_serving_api-2.8.2-py2.py3-none-any.whl (37 kB)

Downloading tensorflow_serving_api-2.8.0-py2.py3-none-any.whl (37 kB)

Downloading tensorflow_serving_api-2.7.0-py2.py3-none-any.whl (37 kB)

Downloading tensorflow_serving_api-2.6.5-py2.py3-none-any.whl (37 kB)

Collecting tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2

Downloading https://us-python.pkg.dev/colab-wheels/public/tensorflow/tensorflow-2.6.5%2Bzzzcolab20220523104206-cp37-cp37m-linux_x86_64.whl (570.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 570.3/570.3 MB 2.5 MB/s eta 0:00:00

Downloading tensorflow-2.6.5-cp37-cp37m-manylinux2010_x86_64.whl (464.2 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 464.2/464.2 MB 3.8 MB/s eta 0:00:00

INFO: pip is looking at multiple versions of tensorflow-transform to determine which version is compatible with other requirements. This could take a while.

Collecting tensorflow-serving-api!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15

Downloading tensorflow_serving_api-2.6.3-py2.py3-none-any.whl (37 kB)

Collecting tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2

Downloading https://us-python.pkg.dev/colab-wheels/public/tensorflow/tensorflow-2.6.4%2Bzzzcolab20220516125453-cp37-cp37m-linux_x86_64.whl (570.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 570.3/570.3 MB 2.7 MB/s eta 0:00:00

Downloading tensorflow-2.6.4-cp37-cp37m-manylinux2010_x86_64.whl (464.2 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 464.2/464.2 MB 3.7 MB/s eta 0:00:00

Downloading tensorflow-2.6.3-cp37-cp37m-manylinux2010_x86_64.whl (463.8 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 463.8/463.8 MB 3.8 MB/s eta 0:00:00

Collecting tensorflow-serving-api!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15

Downloading tensorflow_serving_api-2.6.2-py2.py3-none-any.whl (37 kB)

Collecting tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2

Downloading tensorflow-2.6.2-cp37-cp37m-manylinux2010_x86_64.whl (458.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 458.3/458.3 MB 3.8 MB/s eta 0:00:00

Collecting tensorflow-serving-api!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15

Downloading tensorflow_serving_api-2.6.1-py2.py3-none-any.whl (37 kB)

Collecting tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2

Downloading tensorflow-2.6.1-cp37-cp37m-manylinux2010_x86_64.whl (458.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 458.3/458.3 MB 3.7 MB/s eta 0:00:00

INFO: pip is looking at multiple versions of tensorflow-serving-api to determine which version is compatible with other requirements. This could take a while.

Collecting tensorflow-serving-api!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15

Downloading tensorflow_serving_api-2.6.0-py2.py3-none-any.whl (37 kB)

Collecting tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2

Downloading https://us-python.pkg.dev/colab-wheels/public/tensorflow/tensorflow-2.6.0%2Bzzzcolab20220506153740-cp37-cp37m-linux_x86_64.whl (564.4 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 564.4/564.4 MB 2.6 MB/s eta 0:00:00

Collecting clang~=5.0

Downloading clang-5.0.tar.gz (30 kB)

Preparing metadata (setup.py) ... done

Collecting wrapt>=1.11.0

Downloading wrapt-1.12.1.tar.gz (27 kB)

Preparing metadata (setup.py) ... done

Collecting typing-extensions>=3.7.0

Downloading typing_extensions-3.7.4.3-py3-none-any.whl (22 kB)

Collecting flatbuffers<3.0,>=1.12

Downloading flatbuffers-1.12-py2.py3-none-any.whl (15 kB)

Requirement already satisfied: googleapis-common-protos<2.0dev,>=1.6.0 in /usr/local/lib/python3.7/dist-packages (from google-api-core<3dev,>=1.21.0->google-api-python-client<2,>=1.8->tfx==1.3) (1.56.2)

Requirement already satisfied: rsa<5,>=3.1.4 in /usr/local/lib/python3.7/dist-packages (from google-auth<3,>=1.18.0->apache-beam[gcp]<3,>=2.32->tfx==1.3) (4.8)

Requirement already satisfied: pyasn1-modules>=0.2.1 in /usr/local/lib/python3.7/dist-packages (from google-auth<3,>=1.18.0->apache-beam[gcp]<3,>=2.32->tfx==1.3) (0.2.8)

Collecting grpc-google-iam-v1<0.13dev,>=0.12.3

Downloading grpc_google_iam_v1-0.12.4-py2.py3-none-any.whl (26 kB)

Collecting grpcio-status>=1.16.0

Downloading grpcio_status-1.47.0-py3-none-any.whl (10.0 kB)

Collecting overrides<7.0.0,>=6.0.1

Downloading overrides-6.1.0-py3-none-any.whl (14 kB)

Collecting google-cloud-storage<3.0.0dev,>=1.32.0

Downloading google_cloud_storage-2.3.0-py2.py3-none-any.whl (107 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 107.1/107.1 kB 14.3 MB/s eta 0:00:00

Downloading google_cloud_storage-2.2.1-py2.py3-none-any.whl (107 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 107.1/107.1 kB 14.0 MB/s eta 0:00:00

Collecting google-crc32c<2.0dev,>=1.0

Downloading google_crc32c-1.3.0-cp37-cp37m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl (38 kB)

Requirement already satisfied: cached-property in /usr/local/lib/python3.7/dist-packages (from h5py>=2.9.0->tensorflow!=2.0.*,!=2.1.*,!=2.2.*,!=2.3.*,!=2.4.*,!=2.5.*,<3,>=1.15.2->tfx==1.3) (1.5.2)

Requirement already satisfied: docopt in /usr/local/lib/python3.7/dist-packages (from hdfs<3.0.0,>=2.1.0->apache-beam[gcp]<3,>=2.32->tfx==1.3) (0.6.2)

Requirement already satisfied: decorator in /usr/local/lib/python3.7/dist-packages (from ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (4.4.2)

Requirement already satisfied: traitlets>=4.2 in /usr/local/lib/python3.7/dist-packages (from ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (5.1.1)

Collecting prompt-toolkit!=3.0.0,!=3.0.1,<3.1.0,>=2.0.0

Downloading prompt_toolkit-3.0.30-py3-none-any.whl (381 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 381.7/381.7 kB 32.1 MB/s eta 0:00:00

Requirement already satisfied: backcall in /usr/local/lib/python3.7/dist-packages (from ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (0.2.0)

Requirement already satisfied: jedi>=0.16 in /usr/local/lib/python3.7/dist-packages (from ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (0.18.1)

Requirement already satisfied: matplotlib-inline in /usr/local/lib/python3.7/dist-packages (from ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (0.1.3)

Requirement already satisfied: pickleshare in /usr/local/lib/python3.7/dist-packages (from ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (0.7.5)

Requirement already satisfied: pygments in /usr/local/lib/python3.7/dist-packages (from ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (2.6.1)

Requirement already satisfied: pexpect>4.3 in /usr/local/lib/python3.7/dist-packages (from ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (4.8.0)

Requirement already satisfied: ipykernel>=4.5.1 in /usr/local/lib/python3.7/dist-packages (from ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (4.10.1)

Requirement already satisfied: widgetsnbextension~=3.6.0 in /usr/local/lib/python3.7/dist-packages (from ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (3.6.0)

Requirement already satisfied: nbformat>=4.2.0 in /usr/local/lib/python3.7/dist-packages (from ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (5.4.0)

Requirement already satisfied: ipython-genutils~=0.2.0 in /usr/local/lib/python3.7/dist-packages (from ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (0.2.0)

Requirement already satisfied: jupyterlab-widgets>=1.0.0 in /usr/local/lib/python3.7/dist-packages (from ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (1.1.0)

Requirement already satisfied: pyasn1>=0.1.7 in /usr/local/lib/python3.7/dist-packages (from oauth2client>=1.4.12->google-apitools<1,>=0.5->tfx==1.3) (0.4.8)

Requirement already satisfied: idna<4,>=2.5 in /usr/local/lib/python3.7/dist-packages (from requests<3.0.0,>=2.24.0->apache-beam[gcp]<3,>=2.32->tfx==1.3) (2.10)

Requirement already satisfied: charset-normalizer<3,>=2 in /usr/local/lib/python3.7/dist-packages (from requests<3.0.0,>=2.24.0->apache-beam[gcp]<3,>=2.32->tfx==1.3) (2.0.12)

Requirement already satisfied: werkzeug>=0.11.15 in /usr/local/lib/python3.7/dist-packages (from tensorboard->keras-tuner<2,>=1.0.4->tfx==1.3) (1.0.1)

Requirement already satisfied: tensorboard-plugin-wit>=1.6.0 in /usr/local/lib/python3.7/dist-packages (from tensorboard->keras-tuner<2,>=1.0.4->tfx==1.3) (1.8.1)

Requirement already satisfied: markdown>=2.6.8 in /usr/local/lib/python3.7/dist-packages (from tensorboard->keras-tuner<2,>=1.0.4->tfx==1.3) (3.3.7)

Requirement already satisfied: tensorboard-data-server<0.7.0,>=0.6.0 in /usr/local/lib/python3.7/dist-packages (from tensorboard->keras-tuner<2,>=1.0.4->tfx==1.3) (0.6.1)

Requirement already satisfied: google-auth-oauthlib<0.5,>=0.4.1 in /usr/local/lib/python3.7/dist-packages (from tensorboard->keras-tuner<2,>=1.0.4->tfx==1.3) (0.4.6)

Requirement already satisfied: oauthlib>=3.0.0 in /usr/local/lib/python3.7/dist-packages (from requests-oauthlib->kubernetes<13,>=10.0.1->tfx==1.3) (3.2.0)

Collecting grpcio<2,>=1.28.1

Downloading grpcio-1.47.0-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (4.5 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 4.5/4.5 MB 61.3 MB/s eta 0:00:00

Requirement already satisfied: tornado>=4.0 in /usr/local/lib/python3.7/dist-packages (from ipykernel>=4.5.1->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (5.1.1)

Requirement already satisfied: jupyter-client in /usr/local/lib/python3.7/dist-packages (from ipykernel>=4.5.1->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (5.3.5)

Requirement already satisfied: parso<0.9.0,>=0.8.0 in /usr/local/lib/python3.7/dist-packages (from jedi>=0.16->ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (0.8.3)

Requirement already satisfied: importlib-metadata>=4.4 in /usr/local/lib/python3.7/dist-packages (from markdown>=2.6.8->tensorboard->keras-tuner<2,>=1.0.4->tfx==1.3) (4.11.4)

Requirement already satisfied: jsonschema>=2.6 in /usr/local/lib/python3.7/dist-packages (from nbformat>=4.2.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (4.3.3)

Requirement already satisfied: jupyter-core in /usr/local/lib/python3.7/dist-packages (from nbformat>=4.2.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (4.10.0)

Requirement already satisfied: fastjsonschema in /usr/local/lib/python3.7/dist-packages (from nbformat>=4.2.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (2.15.3)

Collecting typing-utils>=0.0.3

Downloading typing_utils-0.1.0-py3-none-any.whl (10 kB)

Requirement already satisfied: ptyprocess>=0.5 in /usr/local/lib/python3.7/dist-packages (from pexpect>4.3->ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (0.7.0)

Requirement already satisfied: wcwidth in /usr/local/lib/python3.7/dist-packages (from prompt-toolkit!=3.0.0,!=3.0.1,<3.1.0,>=2.0.0->ipython->keras-tuner<2,>=1.0.4->tfx==1.3) (0.2.5)

Requirement already satisfied: notebook>=4.4.1 in /usr/local/lib/python3.7/dist-packages (from widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (5.3.1)

Requirement already satisfied: zipp>=0.5 in /usr/local/lib/python3.7/dist-packages (from importlib-metadata>=4.4->markdown>=2.6.8->tensorboard->keras-tuner<2,>=1.0.4->tfx==1.3) (3.8.0)

Requirement already satisfied: pyrsistent!=0.17.0,!=0.17.1,!=0.17.2,>=0.14.0 in /usr/local/lib/python3.7/dist-packages (from jsonschema>=2.6->nbformat>=4.2.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (0.18.1)

Requirement already satisfied: importlib-resources>=1.4.0 in /usr/local/lib/python3.7/dist-packages (from jsonschema>=2.6->nbformat>=4.2.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (5.7.1)

Requirement already satisfied: terminado>=0.8.1 in /usr/local/lib/python3.7/dist-packages (from notebook>=4.4.1->widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (0.13.3)

Requirement already satisfied: nbconvert in /usr/local/lib/python3.7/dist-packages (from notebook>=4.4.1->widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (5.6.1)

Requirement already satisfied: Send2Trash in /usr/local/lib/python3.7/dist-packages (from notebook>=4.4.1->widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (1.8.0)

Requirement already satisfied: pyzmq>=13 in /usr/local/lib/python3.7/dist-packages (from jupyter-client->ipykernel>=4.5.1->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (23.1.0)

Requirement already satisfied: pandocfilters>=1.4.1 in /usr/local/lib/python3.7/dist-packages (from nbconvert->notebook>=4.4.1->widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (1.5.0)

Requirement already satisfied: defusedxml in /usr/local/lib/python3.7/dist-packages (from nbconvert->notebook>=4.4.1->widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (0.7.1)

Requirement already satisfied: entrypoints>=0.2.2 in /usr/local/lib/python3.7/dist-packages (from nbconvert->notebook>=4.4.1->widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (0.4)

Requirement already satisfied: mistune<2,>=0.8.1 in /usr/local/lib/python3.7/dist-packages (from nbconvert->notebook>=4.4.1->widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (0.8.4)

Requirement already satisfied: testpath in /usr/local/lib/python3.7/dist-packages (from nbconvert->notebook>=4.4.1->widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (0.6.0)

Requirement already satisfied: bleach in /usr/local/lib/python3.7/dist-packages (from nbconvert->notebook>=4.4.1->widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (5.0.0)

Requirement already satisfied: webencodings in /usr/local/lib/python3.7/dist-packages (from bleach->nbconvert->notebook>=4.4.1->widgetsnbextension~=3.6.0->ipywidgets<8,>=7->tensorflow-model-analysis<0.35,>=0.34.1->tfx==1.3) (0.5.1)

Building wheels for collected packages: google-apitools, clang, dill, wrapt

Building wheel for google-apitools (setup.py) ... done

Created wheel for google-apitools: filename=google_apitools-0.5.31-py3-none-any.whl size=131039 sha256=12792bbb192212a7b2a8e4fca526a7a40124b9a99df855107da1d4693aaa0bcf

Stored in directory: /root/.cache/pip/wheels/19/b5/2f/1cc3cf2b31e7a9cd1508731212526d9550271274d351c96f16

Building wheel for clang (setup.py) ... done

Created wheel for clang: filename=clang-5.0-py3-none-any.whl size=30694 sha256=8b0601c614fa84bf7dde017c9e25c4d0359e691557b1202a6ac0875d8bfbdcf0

Stored in directory: /root/.cache/pip/wheels/98/91/04/971b4c587cf47ae952b108949b46926f426c02832d120a082a

Building wheel for dill (setup.py) ... done

Created wheel for dill: filename=dill-0.3.1.1-py3-none-any.whl size=78544 sha256=850855ee2ae8f7ab6429d70ec8411bbe7f62cc026f2bb211ae4e2314b8e44719

Stored in directory: /root/.cache/pip/wheels/a4/61/fd/c57e374e580aa78a45ed78d5859b3a44436af17e22ca53284f

Building wheel for wrapt (setup.py) ... done

Created wheel for wrapt: filename=wrapt-1.12.1-cp37-cp37m-linux_x86_64.whl size=68719 sha256=e63cd05a6e18767e996732096a0a68b59b1c34c73b1f3a2e382534a0e89f05a6

Stored in directory: /root/.cache/pip/wheels/62/76/4c/aa25851149f3f6d9785f6c869387ad82b3fd37582fa8147ac6

Successfully built google-apitools clang dill wrapt

Installing collected packages: wrapt, typing-extensions, tensorflow-estimator, kt-legacy, keras, joblib, flatbuffers, clang, websocket-client, typing-utils, requests, pymongo, protobuf, prompt-toolkit, packaging, orjson, numpy, grpcio, google-crc32c, gast, fasteners, fastavro, dill, cloudpickle, attrs, absl-py, pyarrow, proto-plus, overrides, ml-metadata, ipython, hdfs, grpcio-gcp, google-resumable-media, docker, tensorflow-metadata, kubernetes, grpcio-status, google-auth-httplib2, google-apitools, apache-beam, grpc-google-iam-v1, google-cloud-core, tensorflow, ml-pipelines-sdk, keras-tuner, google-cloud-vision, google-cloud-videointelligence, google-cloud-storage, google-cloud-spanner, google-cloud-resource-manager, google-cloud-recommendations-ai, google-cloud-pubsub, google-cloud-language, google-cloud-dlp, google-cloud-bigtable, google-cloud-bigquery-storage, google-cloud-bigquery, tensorflow-serving-api, google-cloud-pubsublite, google-cloud-aiplatform, tfx-bsl, tensorflow-transform, tensorflow-model-analysis, tensorflow-data-validation, tfx

Attempting uninstall: wrapt

Found existing installation: wrapt 1.14.1

Uninstalling wrapt-1.14.1:

Successfully uninstalled wrapt-1.14.1

Attempting uninstall: typing-extensions

Found existing installation: typing_extensions 4.1.1

Uninstalling typing_extensions-4.1.1:

Successfully uninstalled typing_extensions-4.1.1

Attempting uninstall: tensorflow-estimator

Found existing installation: tensorflow-estimator 2.8.0

Uninstalling tensorflow-estimator-2.8.0:

Successfully uninstalled tensorflow-estimator-2.8.0

Attempting uninstall: keras

Found existing installation: keras 2.8.0

Uninstalling keras-2.8.0:

Successfully uninstalled keras-2.8.0

Attempting uninstall: joblib

Found existing installation: joblib 1.1.0

Uninstalling joblib-1.1.0:

Successfully uninstalled joblib-1.1.0

Attempting uninstall: flatbuffers

Found existing installation: flatbuffers 2.0

Uninstalling flatbuffers-2.0:

Successfully uninstalled flatbuffers-2.0

Attempting uninstall: requests

Found existing installation: requests 2.23.0

Uninstalling requests-2.23.0:

Successfully uninstalled requests-2.23.0

Attempting uninstall: pymongo

Found existing installation: pymongo 4.1.1

Uninstalling pymongo-4.1.1:

Successfully uninstalled pymongo-4.1.1

Attempting uninstall: protobuf

Found existing installation: protobuf 3.17.3

Uninstalling protobuf-3.17.3:

Successfully uninstalled protobuf-3.17.3

Attempting uninstall: prompt-toolkit

Found existing installation: prompt-toolkit 1.0.18

Uninstalling prompt-toolkit-1.0.18:

Successfully uninstalled prompt-toolkit-1.0.18

Attempting uninstall: packaging

Found existing installation: packaging 21.3

Uninstalling packaging-21.3:

Successfully uninstalled packaging-21.3

Attempting uninstall: numpy

Found existing installation: numpy 1.21.6

Uninstalling numpy-1.21.6:

Successfully uninstalled numpy-1.21.6

Attempting uninstall: grpcio

Found existing installation: grpcio 1.46.3

Uninstalling grpcio-1.46.3:

Successfully uninstalled grpcio-1.46.3

Attempting uninstall: gast

Found existing installation: gast 0.5.3

Uninstalling gast-0.5.3:

Successfully uninstalled gast-0.5.3

Attempting uninstall: dill

Found existing installation: dill 0.3.5.1

Uninstalling dill-0.3.5.1:

Successfully uninstalled dill-0.3.5.1

Attempting uninstall: cloudpickle

Found existing installation: cloudpickle 1.3.0

Uninstalling cloudpickle-1.3.0:

Successfully uninstalled cloudpickle-1.3.0

Attempting uninstall: attrs

Found existing installation: attrs 21.4.0

Uninstalling attrs-21.4.0:

Successfully uninstalled attrs-21.4.0

Attempting uninstall: absl-py

Found existing installation: absl-py 1.1.0

Uninstalling absl-py-1.1.0:

Successfully uninstalled absl-py-1.1.0

Attempting uninstall: pyarrow

Found existing installation: pyarrow 6.0.1

Uninstalling pyarrow-6.0.1:

Successfully uninstalled pyarrow-6.0.1

Attempting uninstall: ipython

Found existing installation: ipython 5.5.0

Uninstalling ipython-5.5.0:

Successfully uninstalled ipython-5.5.0

Attempting uninstall: google-resumable-media

Found existing installation: google-resumable-media 0.4.1

Uninstalling google-resumable-media-0.4.1:

Successfully uninstalled google-resumable-media-0.4.1

Attempting uninstall: tensorflow-metadata

Found existing installation: tensorflow-metadata 1.8.0

Uninstalling tensorflow-metadata-1.8.0:

Successfully uninstalled tensorflow-metadata-1.8.0

Attempting uninstall: google-auth-httplib2

Found existing installation: google-auth-httplib2 0.0.4

Uninstalling google-auth-httplib2-0.0.4:

Successfully uninstalled google-auth-httplib2-0.0.4

Attempting uninstall: google-cloud-core

Found existing installation: google-cloud-core 1.0.3

Uninstalling google-cloud-core-1.0.3:

Successfully uninstalled google-cloud-core-1.0.3

Attempting uninstall: tensorflow

Found existing installation: tensorflow 2.8.2+zzzcolab20220527125636

Uninstalling tensorflow-2.8.2+zzzcolab20220527125636:

Successfully uninstalled tensorflow-2.8.2+zzzcolab20220527125636

Attempting uninstall: google-cloud-storage

Found existing installation: google-cloud-storage 1.18.1

Uninstalling google-cloud-storage-1.18.1:

Successfully uninstalled google-cloud-storage-1.18.1

Attempting uninstall: google-cloud-language

Found existing installation: google-cloud-language 1.2.0

Uninstalling google-cloud-language-1.2.0:

Successfully uninstalled google-cloud-language-1.2.0

Attempting uninstall: google-cloud-bigquery-storage

Found existing installation: google-cloud-bigquery-storage 1.1.2

Uninstalling google-cloud-bigquery-storage-1.1.2:

Successfully uninstalled google-cloud-bigquery-storage-1.1.2

Attempting uninstall: google-cloud-bigquery

Found existing installation: google-cloud-bigquery 1.21.0

Uninstalling google-cloud-bigquery-1.21.0:

Successfully uninstalled google-cloud-bigquery-1.21.0

ERROR: pip's dependency resolver does not currently take into account all the packages that are installed. This behaviour is the source of the following dependency conflicts.

xarray-einstats 0.2.2 requires numpy>=1.21, but you have numpy 1.19.5 which is incompatible.

pandas-gbq 0.13.3 requires google-cloud-bigquery[bqstorage,pandas]<2.0.0dev,>=1.11.1, but you have google-cloud-bigquery 2.34.4 which is incompatible.

multiprocess 0.70.13 requires dill>=0.3.5.1, but you have dill 0.3.1.1 which is incompatible.

jupyter-console 5.2.0 requires prompt-toolkit<2.0.0,>=1.0.0, but you have prompt-toolkit 3.0.30 which is incompatible.

gym 0.17.3 requires cloudpickle<1.7.0,>=1.2.0, but you have cloudpickle 2.1.0 which is incompatible.

google-colab 1.0.0 requires ipython~=5.5.0, but you have ipython 7.34.0 which is incompatible.

google-colab 1.0.0 requires requests~=2.23.0, but you have requests 2.28.1 which is incompatible.

datascience 0.10.6 requires folium==0.2.1, but you have folium 0.8.3 which is incompatible.

albumentations 0.1.12 requires imgaug<0.2.7,>=0.2.5, but you have imgaug 0.2.9 which is incompatible.

Successfully installed absl-py-0.12.0 apache-beam-2.40.0 attrs-20.3.0 clang-5.0 cloudpickle-2.1.0 dill-0.3.1.1 docker-4.4.4 fastavro-1.5.2 fasteners-0.17.3 flatbuffers-1.12 gast-0.4.0 google-apitools-0.5.31 google-auth-httplib2-0.1.0 google-cloud-aiplatform-1.15.0 google-cloud-bigquery-2.34.4 google-cloud-bigquery-storage-2.13.2 google-cloud-bigtable-1.7.2 google-cloud-core-1.7.2 google-cloud-dlp-3.7.1 google-cloud-language-1.3.2 google-cloud-pubsub-2.13.0 google-cloud-pubsublite-1.4.2 google-cloud-recommendations-ai-0.2.0 google-cloud-resource-manager-1.5.1 google-cloud-spanner-1.19.3 google-cloud-storage-2.2.1 google-cloud-videointelligence-1.16.3 google-cloud-vision-1.0.2 google-crc32c-1.3.0 google-resumable-media-2.3.3 grpc-google-iam-v1-0.12.4 grpcio-1.47.0 grpcio-gcp-0.2.2 grpcio-status-1.47.0 hdfs-2.7.0 ipython-7.34.0 joblib-0.14.1 keras-2.7.0 keras-tuner-1.1.2 kt-legacy-1.0.4 kubernetes-12.0.1 ml-metadata-1.3.0 ml-pipelines-sdk-1.3.0 numpy-1.19.5 orjson-3.7.7 overrides-6.1.0 packaging-20.9 prompt-toolkit-3.0.30 proto-plus-1.20.6 protobuf-3.19.4 pyarrow-2.0.0 pymongo-3.12.3 requests-2.28.1 tensorflow-2.6.0+zzzcolab20220506153740 tensorflow-data-validation-1.3.0 tensorflow-estimator-2.7.0 tensorflow-metadata-1.2.0 tensorflow-model-analysis-0.34.1 tensorflow-serving-api-2.6.0 tensorflow-transform-1.3.0 tfx-1.3.0 tfx-bsl-1.3.0 typing-extensions-3.7.4.3 typing-utils-0.1.0 websocket-client-1.3.3 wrapt-1.12.1

WARNING: Running pip as the 'root' user can result in broken permissions and conflicting behaviour with the system package manager. It is recommended to use a virtual environment instead: https://pip.pypa.io/warnings/venv

Looking in indexes: https://pypi.org/simple, https://us-python.pkg.dev/colab-wheels/public/simple/

Collecting tensorflow-estimator==2.6.0

Downloading tensorflow_estimator-2.6.0-py2.py3-none-any.whl (462 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 462.9/462.9 kB 2.0 MB/s eta 0:00:00

Installing collected packages: tensorflow-estimator

Attempting uninstall: tensorflow-estimator

Found existing installation: tensorflow-estimator 2.7.0

Uninstalling tensorflow-estimator-2.7.0:

Successfully uninstalled tensorflow-estimator-2.7.0

Successfully installed tensorflow-estimator-2.6.0

WARNING: Running pip as the 'root' user can result in broken permissions and conflicting behaviour with the system package manager. It is recommended to use a virtual environment instead: https://pip.pypa.io/warnings/venv

Looking in indexes: https://pypi.org/simple, https://us-python.pkg.dev/colab-wheels/public/simple/

Collecting keras==2.6.0

Downloading keras-2.6.0-py2.py3-none-any.whl (1.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.3/1.3 MB 53.0 MB/s eta 0:00:00

Installing collected packages: keras

Attempting uninstall: keras

Found existing installation: keras 2.7.0

Uninstalling keras-2.7.0:

Successfully uninstalled keras-2.7.0

Successfully installed keras-2.6.0

WARNING: Running pip as the 'root' user can result in broken permissions and conflicting behaviour with the system package manager. It is recommended to use a virtual environment instead: https://pip.pypa.io/warnings/venv

Note: In Google Colab, you need to restart the runtime at this point to finalize updating the packages you just installed. You can do so by clicking the Restart Runtime button at the end of the output cell above (after installation), or by selecting Runtime > Restart Runtime in the menu bar. Please do not proceed to the next section without restarting. You can also ignore the errors about version incompatibility of some of the bundled packages because we won't be using those in this notebook.
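After the restart, it can help to confirm that the pinned versions are the ones actually installed. The sketch below is one possible check and is not part of the original lab: `installed_versions` and `find_mismatches` are hypothetical helpers, and the `EXPECTED` pins are taken from the install commands and the pip log above.

```python
# Hypothetical helpers (not part of the lab): compare expected pins with installed versions.
try:
    from importlib.metadata import version, PackageNotFoundError  # Python 3.8+
except ImportError:
    # Python 3.7 needs the importlib-metadata backport (listed in the pip log above)
    from importlib_metadata import version, PackageNotFoundError

# Pins taken from the install cell and its log
EXPECTED = {
    "tfx": "1.3.0",
    "tensorflow-estimator": "2.6.0",
    "keras": "2.6.0",
}


def installed_versions(names):
    """Return {name: installed version, or None if the package is missing}."""
    found = {}
    for name in names:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


def find_mismatches(expected, installed):
    """Return {name: (expected, installed)} for every package that differs."""
    return {
        name: (want, installed.get(name))
        for name, want in expected.items()
        if installed.get(name) != want
    }


if __name__ == "__main__":
    mismatches = find_mismatches(EXPECTED, installed_versions(EXPECTED))
    print(mismatches or "All pinned packages match.")
```

An empty result suggests the downgrade took effect; any entry in the returned dictionary shows the expected version next to the one that was found.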

Imports¶

You will then import the packages you will need for this exercise.

import tensorflow as tf

from tensorflow import keras

import tensorflow_datasets as tfds

import os

import pprint

from tfx.components import ImportExampleGen

from tfx.components import ExampleValidator

from tfx.components import SchemaGen

from tfx.components import StatisticsGen

from tfx.components import Transform

from tfx.components import Tuner

from tfx.components import Trainer

from tfx.proto import example_gen_pb2

from tfx.orchestration.experimental.interactive.interactive_context import InteractiveContext

Download and prepare the dataset¶

As mentioned earlier, you will be using the Fashion MNIST dataset just like in the previous lab. This will allow you to compare the similarities and differences when using Keras Tuner as a standalone library and within an ML pipeline.

You will first need to set up the directories that you will use to store the dataset, as well as the pipeline artifacts and metadata store.

# Location of the pipeline metadata store

_pipeline_root = './pipeline/'

# Directory of the raw data files

_data_root = './data/fmnist'

# Temporary directory

tempdir = './tempdir'

# Create the dataset directory

!mkdir -p {_data_root}

# Create the TFX pipeline files directory

!mkdir {_pipeline_root}
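The shell commands above depend on the notebook's `!` syntax. As a rough plain-Python equivalent, the sketch below creates the same directories with `os.makedirs`; `ensure_dirs` is a hypothetical helper name, not something the lab defines. Because it passes `exist_ok=True`, it is safe to re-run the cell when the directories already exist.

```python
import os


def ensure_dirs(*paths):
    """Create each directory (and any missing parents); safe to call repeatedly."""
    for path in paths:
        os.makedirs(path, exist_ok=True)
    # Report whether each path is now a directory
    return [os.path.isdir(path) for path in paths]
```

In this notebook it could be called as `ensure_dirs(_data_root, _pipeline_root)`.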

You will now download FashionMNIST from TensorFlow Datasets. The with_info flag is set to True so you can display information about the dataset in the next cell (using ds_info).

# Download the dataset

ds, ds_info = tfds.load('fashion_mnist', data_dir=tempdir, with_info=True)

Downloading and preparing dataset fashion_mnist/3.0.1 (download: 29.45 MiB, generated: 36.42 MiB, total: 65.87 MiB) to ./tempdir/fashion_mnist/3.0.1...

Dl Completed...: 0 url [00:00, ? url/s]

Dl Size...: 0 MiB [00:00, ? MiB/s]

Extraction completed...: 0 file [00:00, ? file/s]

0 examples [00:00, ? examples/s]

Shuffling and writing examples to ./tempdir/fashion_mnist/3.0.1.incomplete573I3J/fashion_mnist-train.tfrecord

0%| | 0/60000 [00:00<?, ? examples/s]

0 examples [00:00, ? examples/s]

Shuffling and writing examples to ./tempdir/fashion_mnist/3.0.1.incomplete573I3J/fashion_mnist-test.tfrecord

0%| | 0/10000 [00:00<?, ? examples/s]

Dataset fashion_mnist downloaded and prepared to ./tempdir/fashion_mnist/3.0.1. Subsequent calls will reuse this data.

# Display info about the dataset

print(ds_info)

tfds.core.DatasetInfo(

name='fashion_mnist',

version=3.0.1,

description='Fashion-MNIST is a dataset of Zalando's article images consisting of a training set of 60,000 examples and a test set of 10,000 examples. Each example is a 28x28 grayscale image, associated with a label from 10 classes.',

homepage='https://github.com/zalandoresearch/fashion-mnist',

features=FeaturesDict({

'image': Image(shape=(28, 28, 1), dtype=tf.uint8),

'label': ClassLabel(shape=(), dtype=tf.int64, num_classes=10),

}),

total_num_examples=70000,

splits={

'test': 10000,

'train': 60000,

},

supervised_keys=('image', 'label'),

citation="""@article{DBLP:journals/corr/abs-1708-07747,

author = {Han Xiao and

Kashif Rasul and

Roland Vollgraf},

title = {Fashion-MNIST: a Novel Image Dataset for Benchmarking Machine Learning

Algorithms},

journal = {CoRR},

volume = {abs/1708.07747},

year = {2017},

url = {http://arxiv.org/abs/1708.07747},

archivePrefix = {arXiv},

eprint = {1708.07747},

timestamp = {Mon, 13 Aug 2018 16:47:27 +0200},

biburl = {https://dblp.org/rec/bib/journals/corr/abs-1708-07747},

bibsource = {dblp computer science bibliography, https://dblp.org}

}""",

redistribution_info=,

)

You can review the downloaded files with the code below. For this lab, you will be using the train TFRecord so you will need to take note of its filename. You will not use the test TFRecord in this lab.

# Define the location of the train tfrecord downloaded via TFDS

tfds_data_path = f'{tempdir}/{ds_info.name}/{ds_info.version}'

# Display contents of the TFDS data directory

os.listdir(tfds_data_path)

['label.labels.txt', 'dataset_info.json', 'fashion_mnist-test.tfrecord-00000-of-00001', 'fashion_mnist-train.tfrecord-00000-of-00001', 'features.json']

You will then copy the train split from the downloaded data so it can be consumed by the ExampleGen component in the next step. This component requires that your files are in a directory without extra files (e.g. JSONs and TXT files).

# Define the train tfrecord filename

train_filename = 'fashion_mnist-train.tfrecord-00000-of-00001'

# Copy the train tfrecord into the data root folder

!cp {tfds_data_path}/{train_filename} {_data_root}
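Since ExampleGen expects a directory that holds only the data files, a quick check after copying can catch stray files early. The following is a minimal sketch using `shutil` in place of `!cp`; `stage_tfrecord` is a hypothetical helper, and treating any filename without `.tfrecord` in it as "unexpected" is an assumption made for illustration.

```python
import os
import shutil


def stage_tfrecord(src_dir, filename, dest_dir):
    """Copy one file into dest_dir and return any unexpected files found there."""
    os.makedirs(dest_dir, exist_ok=True)
    shutil.copy(os.path.join(src_dir, filename), dest_dir)
    # Anything that does not look like a TFRecord shard is reported to the caller
    return sorted(f for f in os.listdir(dest_dir) if ".tfrecord" not in f)
```

In this notebook, `stage_tfrecord(tfds_data_path, train_filename, _data_root)` should return an empty list if the data root contains nothing but the train TFRecord.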

TFX Pipeline¶

With the setup complete, you can now proceed to creating the pipeline.

Initialize the Interactive Context¶

You will start by initializing the InteractiveContext so you can run the components within this Colab environment. You can safely ignore the warning because you will just be using a local SQLite file for the metadata store.

# Initialize the InteractiveContext

context = InteractiveContext(pipeline_root=_pipeline_root)

WARNING:absl:InteractiveContext metadata_connection_config not provided: using SQLite ML Metadata database at ./pipeline/metadata.sqlite.
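The warning above says the metadata store is an ordinary SQLite file at `./pipeline/metadata.sqlite`, so it can be opened with Python's built-in `sqlite3` module. The sketch below only lists the table names; `list_tables` is a hypothetical helper, and no assumption is made here about which tables ML Metadata creates.

```python
import os
import sqlite3


def list_tables(db_path):
    """Return the names of all tables in a SQLite file, sorted alphabetically."""
    # sqlite3.connect would silently create an empty file, so check first
    if not os.path.exists(db_path):
        raise FileNotFoundError(db_path)
    conn = sqlite3.connect(db_path)
    try:
        rows = conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
    finally:
        conn.close()
    return [name for (name,) in rows]
```

Calling `list_tables('./pipeline/metadata.sqlite')` after a component has run shows what the store contains without going through the TFX APIs.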

ExampleGen¶

You will start the pipeline by ingesting the TFRecord you set aside. The ImportExampleGen component consumes TFRecords, and you can specify splits as shown below. For this exercise, you will split the train TFRecord so that 80% goes to the train set and the remaining 20% to the eval/validation set.

# Specify 80/20 split for the train and eval set

output = example_gen_pb2.Output(
    split_config=example_gen_pb2.SplitConfig(splits=[
        example_gen_pb2.SplitConfig.Split(name='train', hash_buckets=8),
        example_gen_pb2.SplitConfig.Split(name='eval', hash_buckets=2),
    ]))

# Ingest the data through ExampleGen

example_gen = ImportExampleGen(input_base=_data_root, output_config=output)

# Run the component

context.run(example_gen)

WARNING:apache_beam.runners.interactive.interactive_environment:Dependencies required for Interactive Beam PCollection visualization are not available, please use: `pip install apache-beam[interactive]` to install necessary dependencies to enable all data visualization features.

WARNING:apache_beam.io.tfrecordio:Couldn't find python-snappy so the implementation of _TFRecordUtil._masked_crc32c is not as fast as it could be.
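The `hash_buckets=8` and `hash_buckets=2` settings mean that each example is hashed into one of ten buckets, with eight buckets going to `train` and two to `eval`. That gives an approximately 80/20 split which is reproducible across runs. The sketch below illustrates the idea only: `assign_split` is a hypothetical function, and `hashlib.md5` is a stand-in for the hash TFX actually uses, so the assignment of individual examples will differ from the real component.

```python
import hashlib


def assign_split(record: bytes, buckets):
    """Map a serialized record to a split name using cumulative hash buckets.

    `buckets` is an ordered list of (split_name, num_buckets) pairs.
    """
    total = sum(n for _, n in buckets)
    # md5 is used for illustration only; it is not the hash TFX uses
    bucket = int(hashlib.md5(record).hexdigest(), 16) % total
    upper = 0
    for name, n in buckets:
        upper += n
        if bucket < upper:
            return name
    raise AssertionError("unreachable: bucket is always smaller than total")


buckets = [("train", 8), ("eval", 2)]
counts = {"train": 0, "eval": 0}
for i in range(10000):
    counts[assign_split(f"example-{i}".encode(), buckets)] += 1

print(counts)  # close to an 80/20 split, but not exactly
```

Because the split depends only on the record's hash, the same record always lands in the same split, which is what keeps train and eval sets stable between pipeline runs.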

# Print split names and URI

artifact = example_gen.outputs['examples'].get()[0]

print(artifact.split_names, artifact.uri)

["train", "eval"] ./pipeline/ImportExampleGen/examples/1

StatisticsGen¶

Next, you will compute the statistics of the dataset with the StatisticsGen component.

# Run StatisticsGen

statistics_gen = StatisticsGen(
    examples=example_gen.outputs['examples'])

context.run(statistics_gen)

---------------------------------------------------------------------------
TypeCheckError                            Traceback (most recent call last)
<ipython-input-13-412d0fda3ef2> in <module>
      3 examples=example_gen.outputs['examples'])
      4
----> 5 context.run(statistics_gen)

/usr/local/lib/python3.7/dist-packages/tfx/orchestration/experimental/interactive/interactive_context.py in run_if_ipython(*args, **kwargs)
     61 # __IPYTHON__ variable is set by IPython, see
     62 # https://ipython.org/ipython-doc/rel-0.10.2/html/interactive/reference.html#embedding-ipython.
---> 63 return fn(*args, **kwargs)
     64 else:
     65 absl.logging.warning(

/usr/local/lib/python3.7/dist-packages/tfx/orchestration/experimental/interactive/interactive_context.py in run(self, component, enable_cache, beam_pipeline_args)
    181 telemetry_utils.LABEL_TFX_RUNNER: runner_label,
    182 }):
--> 183 execution_id = launcher.launch().execution_id
    184
    185 return execution_result.ExecutionResult(

/usr/local/lib/python3.7/dist-packages/tfx/orchestration/launcher/base_component_launcher.py in launch(self)
    201 copy.deepcopy(execution_decision.input_dict),
    202 execution_decision.output_dict,
--> 203 copy.deepcopy(execution_decision.exec_properties))
    204
    205 absl.logging.info('Running publisher for %s',

/usr/local/lib/python3.7/dist-packages/tfx/orchestration/launcher/in_process_component_launcher.py in _run_executor(self, execution_id, input_dict, output_dict, exec_properties)
     72 # output_dict can still be changed, specifically properties.
     73 executor.Do(
---> 74 copy.deepcopy(input_dict), output_dict, copy.deepcopy(exec_properties))

/usr/local/lib/python3.7/dist-packages/tfx/components/statistics_gen/executor.py in Do(self, input_dict, output_dict, exec_properties)
    138 stats_api.GenerateStatistics(stats_options)
    139 | 'WriteStatsOutput[%s]' % split >>
--> 140 stats_api.WriteStatisticsToBinaryFile(output_path))
    141 logging.info('Statistics for split %s written to %s.', split,
    142 output_uri)

/usr/local/lib/python3.7/dist-packages/apache_beam/pvalue.py in __or__(self, ptransform)
    135
    136 def __or__(self, ptransform):
--> 137 return self.pipeline.apply(ptransform, self)
    138
    139

/usr/local/lib/python3.7/dist-packages/apache_beam/pipeline.py in apply(self, transform, pvalueish, label)
    651 if isinstance(transform, ptransform._NamedPTransform):
    652 return self.apply(
--> 653 transform.transform, pvalueish, label or transform.label)
    654
    655 if not isinstance(transform, ptransform.PTransform):

/usr/local/lib/python3.7/dist-packages/apache_beam/pipeline.py in apply(self, transform, pvalueish, label)
    661 old_label, transform.label = transform.label, label
    662 try:
--> 663 return self.apply(transform, pvalueish)
    664 finally:
    665 transform.label = old_label

/usr/local/lib/python3.7/dist-packages/apache_beam/pipeline.py in apply(self, transform, pvalueish, label)
    710
    711 if type_options is not None and type_options.pipeline_type_check:
--> 712 transform.type_check_outputs(pvalueish_result)
    713
    714 for tag, result in ptransform.get_named_nested_pvalues(pvalueish_result):

/usr/local/lib/python3.7/dist-packages/apache_beam/transforms/ptransform.py in type_check_outputs(self, pvalueish)
    464
    465 def type_check_outputs(self, pvalueish):
--> 466 self.type_check_inputs_or_outputs(pvalueish, 'output')
    467
    468 def type_check_inputs_or_outputs(self, pvalueish, input_or_output):

/usr/local/lib/python3.7/dist-packages/apache_beam/transforms/ptransform.py in type_check_inputs_or_outputs(self, pvalueish, input_or_output)
    495 hint=hint,
    496 actual_type=pvalue_.element_type,
--> 497 debug_str=type_hints.debug_str()))
    498
    499 def _infer_output_coder(self, input_type=None, input_coder=None):

TypeCheckError: Output type hint violation at WriteStatsOutput[train]: expected <class 'apache_beam.pvalue.PDone'>, got <class 'str'>
Full type hint:
IOTypeHints[inputs=((<class 'tensorflow_metadata.proto.v0.statistics_pb2.DatasetFeatureStatisticsList'>,), {}), outputs=((<class 'apache_beam.pvalue.PDone'>,), {})]
File "<frozen importlib._bootstrap>", line 677, in _load_unlocked
File "<frozen importlib._bootstrap_external>", line 728, in exec_module
File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed
File "/usr/local/lib/python3.7/dist-packages/tensorflow_data_validation/api/stats_api.py", line 113, in <module>
class WriteStatisticsToBinaryFile(beam.PTransform):
File "/usr/local/lib/python3.7/dist-packages/apache_beam/typehints/decorators.py", line 776, in annotate_input_types
*converted_positional_hints, **converted_keyword_hints)

based on:
IOTypeHints[inputs=None, outputs=((<class 'apache_beam.pvalue.PDone'>,), {})]
File "<frozen importlib._bootstrap>", line 677, in _load_unlocked
File "<frozen importlib._bootstrap_external>", line 728, in exec_module
File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed
File "/usr/local/lib/python3.7/dist-packages/tensorflow_data_validation/api/stats_api.py", line 113, in <module>
class WriteStatisticsToBinaryFile(beam.PTransform):
File "/usr/local/lib/python3.7/dist-packages/apache_beam/typehints/decorators.py", line 863, in annotate_output_types
f._type_hints = th.with_output_types(return_type_hint) # pylint: disable=protected-access

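SchemaGen¶

You can then infer the dataset schema from the computed statistics with the SchemaGen component.

# Run SchemaGen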

schema_gen = SchemaGen(
    statistics=statistics_gen.outputs['statistics'], infer_feature_shape=True)

context.run(schema_gen)

---------------------------------------------------------------------------
NotFoundError                             Traceback (most recent call last)
/usr/local/lib/python3.7/dist-packages/tfx/dsl/io/plugins/tensorflow_gfile.py in listdir(path)
     64 try:
---> 65 return tf.io.gfile.listdir(path)
     66 except tf.errors.NotFoundError as e:

/usr/local/lib/python3.7/dist-packages/tensorflow/python/lib/io/file_io.py in list_directory_v2(path)
    771 op=None,
--> 772 message="Could not find directory {}".format(path))
    773

NotFoundError: Could not find directory ./pipeline/StatisticsGen/statistics/4/Split-train

The above exception was the direct cause of the following exception:

NotFoundError                             Traceback (most recent call last)
<ipython-input-14-2c5dd0b2167a> in <module>
      2 schema_gen = SchemaGen(
      3 statistics=statistics_gen.outputs['statistics'], infer_feature_shape=True)
----> 4 context.run(schema_gen)

/usr/local/lib/python3.7/dist-packages/tfx/orchestration/experimental/interactive/interactive_context.py in run_if_ipython(*args, **kwargs)
     61 # __IPYTHON__ variable is set by IPython, see
     62 # https://ipython.org/ipython-doc/rel-0.10.2/html/interactive/reference.html#embedding-ipython.
---> 63 return fn(*args, **kwargs)
     64 else:
     65 absl.logging.warning(

/usr/local/lib/python3.7/dist-packages/tfx/orchestration/experimental/interactive/interactive_context.py in run(self, component, enable_cache, beam_pipeline_args)
    181 telemetry_utils.LABEL_TFX_RUNNER: runner_label,
    182 }):
--> 183 execution_id = launcher.launch().execution_id
    184
    185 return execution_result.ExecutionResult(

/usr/local/lib/python3.7/dist-packages/tfx/orchestration/launcher/base_component_launcher.py in launch(self)
    201 copy.deepcopy(execution_decision.input_dict),
    202 execution_decision.output_dict,
--> 203 copy.deepcopy(execution_decision.exec_properties))
    204
    205 absl.logging.info('Running publisher for %s',

/usr/local/lib/python3.7/dist-packages/tfx/orchestration/launcher/in_process_component_launcher.py in _run_executor(self, execution_id, input_dict, output_dict, exec_properties)
     72 # output_dict can still be changed, specifically properties.
     73 executor.Do(
---> 74 copy.deepcopy(input_dict), output_dict, copy.deepcopy(exec_properties))

/usr/local/lib/python3.7/dist-packages/tfx/components/schema_gen/executor.py in Do(self, input_dict, output_dict, exec_properties)
     78 logging.info('Processing schema from statistics for split %s.', split)
     79 stats_uri = io_utils.get_only_uri_in_dir(
---> 80 artifact_utils.get_split_uri([stats_artifact], split))
     81 if artifact_utils.is_artifact_version_older_than(
     82 stats_artifact, artifact_utils._ARTIFACT_VERSION_FOR_STATS_UPDATE): # pylint: disable=protected-access

/usr/local/lib/python3.7/dist-packages/tfx/utils/io_utils.py in get_only_uri_in_dir(dir_path)
     78 """Gets the only uri from given directory."""
     79
---> 80 files = fileio.listdir(dir_path)
     81 if len(files) != 1:
     82 raise RuntimeError(

/usr/local/lib/python3.7/dist-packages/tfx/dsl/io/fileio.py in listdir(path)
     71 def listdir(path: PathType) -> List[PathType]:
     72 """Return the list of files in a directory."""
---> 73 return _get_filesystem(path).listdir(path)
     74
     75

/usr/local/lib/python3.7/dist-packages/tfx/dsl/io/plugins/tensorflow_gfile.py in listdir(path)
     65 return tf.io.gfile.listdir(path)
     66 except tf.errors.NotFoundError as e:
---> 67 raise filesystem.NotFoundError() from e
     68
     69 @staticmethod

NotFoundError:
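The `NotFoundError` above names `./pipeline/StatisticsGen/statistics/4/Split-train`, a directory that the failed StatisticsGen run never wrote. The SchemaGen failure is therefore a downstream symptom of the StatisticsGen error, not a separate problem. A small pre-flight check such as the sketch below can make that dependency visible before a downstream component is run. `missing_split_dirs` is a hypothetical helper, and the `Split-<name>` directory layout is inferred from the error message.

```python
import os


def missing_split_dirs(artifact_uri, split_names):
    """Return the expected Split-<name> directories that are absent or empty."""
    missing = []
    for split in split_names:
        path = os.path.join(artifact_uri, f"Split-{split}")
        if not os.path.isdir(path) or not os.listdir(path):
            missing.append(path)
    return missing
```

For the run shown here, `missing_split_dirs('./pipeline/StatisticsGen/statistics/4', ['train', 'eval'])` would report the `Split-train` directory named in the error. A non-empty result means the upstream component has to succeed before anything that consumes its output can run.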

# Visualize the results

context.show(schema_gen.outputs['schema'])

ExampleValidator¶

You can assume that the dataset is clean since we downloaded it from TFDS. But just to review, let's run it through ExampleValidator to detect if there are anomalies within the dataset.

# Run ExampleValidator

example_validator = ExampleValidator(
    statistics=statistics_gen.outputs['statistics'],
    schema=schema_gen.outputs['schema'])

context.run(example_validator)

# Visualize the results. There should be no anomalies.

context.show(example_validator.outputs['anomalies'])

_transform_module_file = 'fmnist_transform.py'

%%writefile {_transform_module_file}

import tensorflow as tf

import tensorflow_transform as tft

# Keys

_LABEL_KEY = 'label'

_IMAGE_KEY = 'image'

def _transformed_name(key):
    return key + '_xf'

def _image_parser(image_str):
    '''converts the images to a float tensor'''
    image = tf.image.decode_image(image_str, channels=1)
    image = tf.reshape(image, (28, 28, 1))
    image = tf.cast(image, tf.float32)
    return image

def _label_parser(label_id):
    '''converts the labels to a float tensor'''
    label = tf.cast(label_id, tf.float32)
    return label

def preprocessing_fn(inputs):
    """tf.transform's callback function for preprocessing inputs.

    Args:
        inputs: map from feature keys to raw not-yet-transformed features.

    Returns:
        Map from string feature key to transformed feature operations.
    """
    # Convert the raw image and labels to a float array
    with tf.device("/cpu:0"):
        outputs = {
            _transformed_name(_IMAGE_KEY):
                tf.map_fn(
                    _image_parser,
                    tf.squeeze(inputs[_IMAGE_KEY], axis=1),
                    dtype=tf.float32),
            _transformed_name(_LABEL_KEY):
                tf.map_fn(
                    _label_parser,
                    inputs[_LABEL_KEY],
                    dtype=tf.float32)
        }

    # scale the pixels from 0 to 1
    outputs[_transformed_name(_IMAGE_KEY)] = tft.scale_to_0_1(outputs[_transformed_name(_IMAGE_KEY)])

    return outputs
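The last step of the module above scales the pixel values to the range 0 to 1. As a rough illustration of what min-max scaling does, here is a minimal plain-Python sketch. The helper name `min_max_scale` is hypothetical, and its handling of constant input is an assumption of this sketch, not a description of how `tft.scale_to_0_1` treats that case. In the pipeline, the minimum and maximum are computed by TensorFlow Transform over the whole dataset rather than over a single list.

```python
def min_max_scale(values):
    """Scale a flat list of numbers into the range [0, 1] (illustrative only)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # Constant input: avoid dividing by zero. Returning zeros is an
        # assumption made for this sketch.
        return [0.0 for _ in values]
    # Shift by the minimum, then divide by the value range
    return [(v - lo) / (hi - lo) for v in values]


# Example with a few 8-bit pixel intensities
pixels = [0, 51, 102, 255]
scaled = min_max_scale(pixels)
print(scaled)
```

The smallest value maps to 0 and the largest maps to 1, with everything else placed proportionally in between.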

You will run the component by passing in the examples, schema, and transform module file.

Note: You can safely ignore the warnings and any udf_utils-related errors.

# Ignore TF warning messages

tf.get_logger().setLevel('ERROR')

# Setup the Transform component

transform = Transform(
    examples=example_gen.outputs['examples'],
    schema=schema_gen.outputs['schema'],
    module_file=os.path.abspath(_transform_module_file))

# Run the component

context.run(transform)

Tuner¶

As the name suggests, the Tuner component tunes the hyperparameters of your model. To use this, you will need to provide a tuner module file which contains a tuner_fn() function. In this function, you will mostly do the same steps as you did in the previous ungraded lab but with some key differences in handling the dataset.

The Transform component earlier saved the transformed examples as TFRecords compressed in .gz format and you will need to load that into memory. Once loaded, you will need to create batches of features and labels so you can finally use it for hypertuning. This process is modularized in the _input_fn() below.

Going back, the tuner_fn() function will return a TunerFnResult namedtuple containing your tuner object and a set of arguments to pass to tuner.search() method. You will see these in action in the following cells. When reviewing the module file, we recommend viewing the tuner_fn() first before looking at the other auxiliary functions.
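To make the role of the returned namedtuple concrete, here is a minimal sketch of how the two fields fit together. It does not use TFX or KerasTuner: `RecordingTuner` is a hypothetical stand-in that only records what it receives, and the string placeholders stand in for real datasets. The point is only that the `fit_kwargs` dictionary is unpacked into the tuner's `search()` call.

```python
from typing import Any, Dict, NamedTuple


class RecordingTuner:
    """Hypothetical stand-in for a KerasTuner tuner (records search arguments)."""

    def __init__(self):
        self.search_kwargs = None

    def search(self, **kwargs):
        # A real tuner would run trials here; this sketch only stores the arguments
        self.search_kwargs = kwargs


class TunerFnResult(NamedTuple):
    tuner: Any
    fit_kwargs: Dict[str, Any]


def tuner_fn():
    # Placeholder strings are used instead of real tf.data datasets
    return TunerFnResult(
        tuner=RecordingTuner(),
        fit_kwargs={
            'x': 'train_set',
            'validation_data': 'val_set',
            'steps_per_epoch': 500,
            'validation_steps': 100,
        },
    )


result = tuner_fn()
# The keyword arguments are forwarded to the search call
result.tuner.search(**result.fit_kwargs)
print(sorted(result.tuner.search_kwargs))
```

Keeping the tuner and its search arguments in one return value lets the component own the search call while your module decides what goes into it.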

# Declare name of module file

_tuner_module_file = 'tuner.py'

%%writefile {_tuner_module_file}

# Define imports

from kerastuner.engine import base_tuner

import kerastuner as kt

from tensorflow import keras

from typing import NamedTuple, Dict, Text, Any, List

from tfx.components.trainer.fn_args_utils import FnArgs, DataAccessor

import tensorflow as tf

import tensorflow_transform as tft

# Declare namedtuple field names

TunerFnResult = NamedTuple('TunerFnResult', [('tuner', base_tuner.BaseTuner),
                                             ('fit_kwargs', Dict[Text, Any])])

# Label key

LABEL_KEY = 'label_xf'

# Callback for the search strategy

stop_early = tf.keras.callbacks.EarlyStopping(monitor='val_loss', patience=5)

def _gzip_reader_fn(filenames):
    '''Load compressed dataset

    Args:
        filenames - filenames of TFRecords to load

    Returns:
        TFRecordDataset loaded from the filenames
    '''
    # Load the dataset. Specify the compression type since it is saved as `.gz`
    return tf.data.TFRecordDataset(filenames, compression_type='GZIP')

def _input_fn(file_pattern,
              tf_transform_output,
              num_epochs=None,
              batch_size=32) -> tf.data.Dataset:
    '''Create batches of features and labels from TF Records

    Args:
        file_pattern - List of files or patterns of file paths containing Example records.
        tf_transform_output - transform output graph
        num_epochs - Integer specifying the number of times to read through the dataset.
            If None, cycles through the dataset forever.
        batch_size - An int representing the number of records to combine in a single batch.

    Returns:
        A dataset of dict elements, (or a tuple of dict elements and label).
        Each dict maps feature keys to Tensor or SparseTensor objects.
    '''
    # Get feature specification based on transform output
    transformed_feature_spec = (
        tf_transform_output.transformed_feature_spec().copy())

    # Create batches of features and labels
    dataset = tf.data.experimental.make_batched_features_dataset(
        file_pattern=file_pattern,
        batch_size=batch_size,
        features=transformed_feature_spec,
        reader=_gzip_reader_fn,
        num_epochs=num_epochs,
        label_key=LABEL_KEY)

    return dataset

def model_builder(hp):
    '''
    Builds the model and sets up the hyperparameters to tune.

    Args:
        hp - Keras tuner object

    Returns:
        model with hyperparameters to tune
    '''
    # Initialize the Sequential API and start stacking the layers
    model = keras.Sequential()
    model.add(keras.layers.Flatten(input_shape=(28, 28, 1)))

    # Tune the number of units in the first Dense layer
    # Choose an optimal value between 32-512
    hp_units = hp.Int('units', min_value=32, max_value=512, step=32)
    model.add(keras.layers.Dense(units=hp_units, activation='relu', name='dense_1'))

    # Add next layers
    model.add(keras.layers.Dropout(0.2))
    model.add(keras.layers.Dense(10, activation='softmax'))

    # Tune the learning rate for the optimizer
    # Choose an optimal value from 0.01, 0.001, or 0.0001
    hp_learning_rate = hp.Choice('learning_rate', values=[1e-2, 1e-3, 1e-4])

    model.compile(optimizer=keras.optimizers.Adam(learning_rate=hp_learning_rate),
                  loss=keras.losses.SparseCategoricalCrossentropy(),
                  metrics=['accuracy'])

    return model

def tuner_fn(fn_args: FnArgs) -> TunerFnResult:
    """Build the tuner using the KerasTuner API.

    Args:
        fn_args: Holds args as name/value pairs.
            - working_dir: working dir for tuning.
            - train_files: List of file paths containing training tf.Example data.
            - eval_files: List of file paths containing eval tf.Example data.
            - train_steps: number of train steps.
            - eval_steps: number of eval steps.
            - schema_path: optional schema of the input data.
            - transform_graph_path: optional transform graph produced by TFT.

    Returns:
        A namedtuple contains the following:
            - tuner: A BaseTuner that will be used for tuning.
            - fit_kwargs: Args to pass to tuner's run_trial function for fitting the
                model, e.g., the training and validation dataset. Required
                args depend on the above tuner's implementation.
    """
    # Define tuner search strategy
    tuner = kt.Hyperband(model_builder,
                         objective='val_accuracy',
                         max_epochs=10,
                         factor=3,
                         directory=fn_args.working_dir,
                         project_name='kt_hyperband')

    # Load transform output
    tf_transform_output = tft.TFTransformOutput(fn_args.transform_graph_path)

    # Use _input_fn() to extract input features and labels from the train and val set
    train_set = _input_fn(fn_args.train_files[0], tf_transform_output)
    val_set = _input_fn(fn_args.eval_files[0], tf_transform_output)

    return TunerFnResult(
        tuner=tuner,
        fit_kwargs={
            'callbacks': [stop_early],
            'x': train_set,
            'validation_data': val_set,
            'steps_per_epoch': fn_args.train_steps,
            'validation_steps': fn_args.eval_steps
        }
    )

With the module defined, you can now set up the Tuner component. You can see the description of each argument in the TFX documentation for the Tuner component.

Notice that we passed a num_steps argument to the train and eval args and this was used in the steps_per_epoch and validation_steps arguments in the tuner module above. This can be useful if you don't want to go through the entire dataset when tuning. For example, if you have 10GB of training data, it would be incredibly time consuming to iterate through all of it just for one epoch and one set of hyperparameters. You can set the number of steps so your program will only go through a fraction of the dataset.

You can compute the total number of steps in one epoch as: number of examples / batch size. For this particular example, we have 48000 examples / 32 (the default batch size), which equals 1500 steps per epoch for the train set (the validation steps are computed the same way from 12000 examples). Since you passed 500 in the num_steps of the train args, some examples will be skipped in each epoch. This will likely result in lower accuracy readings but will save time during hypertuning. Try modifying this value later and see if you arrive at the same set of hyperparameters.
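The arithmetic above can be checked with a few lines of Python. This is a small sketch using the numbers quoted in this lab (48000 train examples, 12000 validation examples, batch size 32). The helper name `steps_per_epoch` is only for illustration, and the use of integer division (ignoring any final partial batch) is an assumption of the sketch.

```python
def steps_per_epoch(num_examples, batch_size=32):
    """Number of full batches needed to see every example once."""
    # Integer division: a final partial batch is not counted in this sketch
    return num_examples // batch_size


train_steps = steps_per_epoch(48000)
val_steps = steps_per_epoch(12000)

# Fraction of one full pass covered by the num_steps values used in this lab
train_fraction = 500 / train_steps
val_fraction = 100 / val_steps

print(train_steps, val_steps)
print(round(train_fraction, 3), round(val_fraction, 3))
```

With num_steps=500 and num_steps=100, each epoch covers roughly a third of the training set and a bit over a quarter of the validation set.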

from tfx.proto import trainer_pb2

# Setup the Tuner component

tuner = Tuner(
    module_file=_tuner_module_file,
    examples=transform.outputs['transformed_examples'],
    transform_graph=transform.outputs['transform_graph'],
    schema=schema_gen.outputs['schema'],
    train_args=trainer_pb2.TrainArgs(splits=['train'], num_steps=500),
    eval_args=trainer_pb2.EvalArgs(splits=['eval'], num_steps=100)
)

# Run the component. This will take around 10 minutes to run.

# When done, it will summarize the results and show the 10 best trials.

context.run(tuner, enable_cache=False)

Trainer¶

Like the Tuner component, the Trainer component also requires a module file to set up the training process. It will look for a run_fn() function that defines and trains the model. The steps will look similar to the tuner module file:

- Define the model: You can get the results of the Tuner component through the fn_args.hyperparameters argument. You will see it passed into the model_builder() function below. If you didn't run Tuner, then you can just explicitly define the number of hidden units and learning rate.

- Load the train and validation sets: You have done this in the Tuner component. For this module, you will pass in a num_epochs value (10) to indicate how many times the dataset will be read when preparing batches. You can opt not to do this and pass a num_steps value as before.

- Set up and train the model: This will look very familiar if you're already used to the Keras Models Training API. You can pass in callbacks like the TensorBoard callback so you can visualize the results later.

- Save the model: This is needed so you can analyze and serve your model. You will get to do this in later parts of the course and specialization.
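The first step mentions falling back to explicit values when Tuner has not been run. A minimal sketch of that idea follows. It assumes the tuned hyperparameters arrive as a dictionary with a 'values' entry mapping names to chosen values; that layout, the helper name `resolve_hparams`, and the default numbers are assumptions for illustration only. The keys 'units' and 'learning_rate' match the names used in the tuner module.

```python
# Hypothetical defaults used when no tuning results are available
DEFAULT_HPARAMS = {'units': 128, 'learning_rate': 1e-3}


def resolve_hparams(hyperparameters):
    """Return tuned values when present, otherwise the explicit defaults."""
    if not hyperparameters:
        # Tuner was not run: use explicitly defined values
        return dict(DEFAULT_HPARAMS)
    # Assumed layout: {'values': {'units': ..., 'learning_rate': ...}}
    tuned = hyperparameters.get('values', {})
    # Tuned values override defaults; missing keys keep their defaults
    return {**DEFAULT_HPARAMS, **tuned}


print(resolve_hparams(None))
print(resolve_hparams({'values': {'units': 256, 'learning_rate': 0.01}}))
```

Resolving the values in one place keeps the model-building code the same whether or not the Tuner component ran upstream.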

# Declare trainer module file

_trainer_module_file = 'trainer.py'

%%writefile {_trainer_module_file}

from tensorflow import keras

from typing import NamedTuple, Dict, Text, Any, List

from tfx.components.trainer.fn_args_utils import FnArgs, DataAccessor

import tensorflow as tf

import tensorflow_transform as tft

# Define the label key

LABEL_KEY = 'label_xf'

def _gzip_reader_fn(filenames):
    '''Load compressed dataset

    Args:
        filenames - filenames of TFRecords to load

    Returns:
        TFRecordDataset loaded from the filenames
    '''
    # Load the dataset. Specify the compression type since it is saved as `.gz`
    return tf.data.TFRecordDataset(filenames, compression_type='GZIP')

def _input_fn(file_pattern,
              tf_transform_output,
              num_epochs=None,
              batch_size=32) -> tf.data.Dataset:
    '''Create batches of features and labels from TF Records

    Args:
        file_pattern - List of files or patterns of file paths containing Example records.