Introduction¶

The transformers library is an open-source, community-based repository to train, use and share models based on the Transformer architecture (Vaswani & al., 2017) such as Bert (Devlin & al., 2018), Roberta (Liu & al., 2019), GPT2 (Radford & al., 2019), XLNet (Yang & al., 2019), etc.

Along with the models, the library contains multiple variations of each of them for a large variety of downstream-tasks like Named Entity Recognition (NER), Sentiment Analysis, Language Modeling, Question Answering and so on.

Before Transformer¶

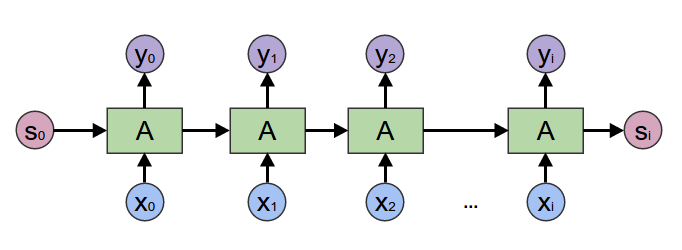

Back to 2017, most of the people using Neural Networks when working on Natural Language Processing were relying on sequential processing of the input through Recurrent Neural Network (RNN).

RNNs were performing well on large variety of tasks involving sequential dependency over the input sequence. However, this sequentially-dependent process had issues modeling very long range dependencies and was not well suited for the kind of hardware we're currently leveraging due to bad parallelization capabilities.

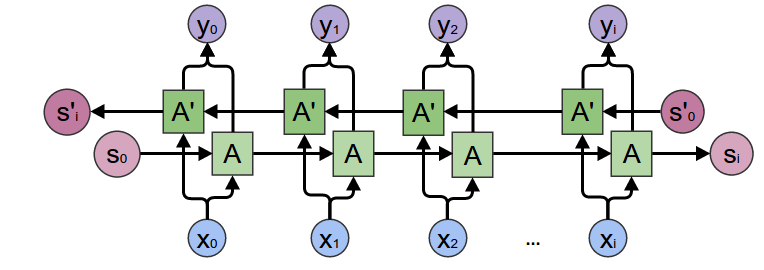

Some extensions were provided by the academic community, such as Bidirectional RNN (Schuster & Paliwal., 1997, Graves & al., 2005), which can be seen as a concatenation of two sequential process, one going forward, the other one going backward over the sequence input.

And also, the Attention mechanism, which introduced a good improvement over "raw" RNNs by giving a learned, weighted-importance to each element in the sequence, allowing the model to focus on important elements.

Then comes the Transformer¶

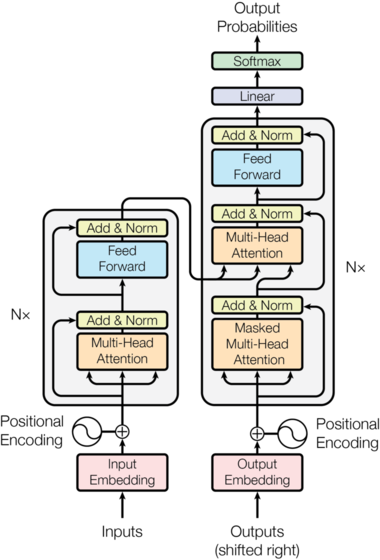

The Transformers era originally started from the work of (Vaswani & al., 2017) who demonstrated its superiority over Recurrent Neural Network (RNN) on translation tasks but it quickly extended to almost all the tasks RNNs were State-of-the-Art at that time.

One advantage of Transformer over its RNN counterpart was its non sequential attention model. Remember, the RNNs had to iterate over each element of the input sequence one-by-one and carry an "updatable-state" between each hop. With Transformer, the model is able to look at every position in the sequence, at the same time, in one operation.

For a deep-dive into the Transformer architecture, The Annotated Transformer will drive you along all the details of the paper.

Getting started with transformers¶

For the rest of this notebook, we will use the BERT (Devlin & al., 2018) architecture, as it's the most simple and there are plenty of content about it over the internet, it will be easy to dig more over this architecture if you want to.

The transformers library allows you to benefits from large, pretrained language models without requiring a huge and costly computational infrastructure. Most of the State-of-the-Art models are provided directly by their author and made available in the library in PyTorch and TensorFlow in a transparent and interchangeable way.

If you're executing this notebook in Colab, you will need to install the transformers library. You can do so with this command:

# !pip install transformers

import torch

from transformers import AutoModel, AutoTokenizer, BertTokenizer

torch.set_grad_enabled(False)

<torch.autograd.grad_mode.set_grad_enabled at 0x7ff0cc2a2c50>

# Store the model we want to use

MODEL_NAME = "bert-base-cased"

# We need to create the model and tokenizer

model = AutoModel.from_pretrained(MODEL_NAME)

tokenizer = AutoTokenizer.from_pretrained(MODEL_NAME)

With only the above two lines of code, you're ready to use a BERT pre-trained model. The tokenizers will allow us to map a raw textual input to a sequence of integers representing our textual input in a way the model can manipulate. Since we will be using a PyTorch model, we ask the tokenizer to return to us PyTorch tensors.

tokens_pt = tokenizer("This is an input example", return_tensors="pt")

for key, value in tokens_pt.items():

print("{}:\n\t{}".format(key, value))

input_ids: tensor([[ 101, 1188, 1110, 1126, 7758, 1859, 102]]) token_type_ids: tensor([[0, 0, 0, 0, 0, 0, 0]]) attention_mask: tensor([[1, 1, 1, 1, 1, 1, 1]])

The tokenizer automatically converted our input to all the inputs expected by the model. It generated some additional tensors on top of the IDs:

- token_type_ids: This tensor will map every tokens to their corresponding segment (see below).

- attention_mask: This tensor is used to "mask" padded values in a batch of sequence with different lengths (see below).

You can check our glossary for more information about each of those keys.

We can just feed this directly into our model:

outputs = model(**tokens_pt)

last_hidden_state = outputs.last_hidden_state

pooler_output = outputs.pooler_output

print("Token wise output: {}, Pooled output: {}".format(last_hidden_state.shape, pooler_output.shape))

Token wise output: torch.Size([1, 7, 768]), Pooled output: torch.Size([1, 768])

As you can see, BERT outputs two tensors:

- One with the generated representation for every token in the input

(1, NB_TOKENS, REPRESENTATION_SIZE) - One with an aggregated representation for the whole input

(1, REPRESENTATION_SIZE)

The first, token-based, representation can be leveraged if your task requires to keep the sequence representation and you want to operate at a token-level. This is particularly useful for Named Entity Recognition and Question-Answering.

The second, aggregated, representation is especially useful if you need to extract the overall context of the sequence and don't require a fine-grained token-level. This is the case for Sentiment-Analysis of the sequence or Information Retrieval.

# Single segment input

single_seg_input = tokenizer("This is a sample input")

# Multiple segment input

multi_seg_input = tokenizer("This is segment A", "This is segment B")

print("Single segment token (str): {}".format(tokenizer.convert_ids_to_tokens(single_seg_input['input_ids'])))

print("Single segment token (int): {}".format(single_seg_input['input_ids']))

print("Single segment type : {}".format(single_seg_input['token_type_ids']))

# Segments are concatened in the input to the model, with

print()

print("Multi segment token (str): {}".format(tokenizer.convert_ids_to_tokens(multi_seg_input['input_ids'])))

print("Multi segment token (int): {}".format(multi_seg_input['input_ids']))

print("Multi segment type : {}".format(multi_seg_input['token_type_ids']))

Single segment token (str): ['[CLS]', 'This', 'is', 'a', 'sample', 'input', '[SEP]'] Single segment token (int): [101, 1188, 1110, 170, 6876, 7758, 102] Single segment type : [0, 0, 0, 0, 0, 0, 0] Multi segment token (str): ['[CLS]', 'This', 'is', 'segment', 'A', '[SEP]', 'This', 'is', 'segment', 'B', '[SEP]'] Multi segment token (int): [101, 1188, 1110, 6441, 138, 102, 1188, 1110, 6441, 139, 102] Multi segment type : [0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1]

# Padding highlight

tokens = tokenizer(

["This is a sample", "This is another longer sample text"],

padding=True # First sentence will have some PADDED tokens to match second sequence length

)

for i in range(2):

print("Tokens (int) : {}".format(tokens['input_ids'][i]))

print("Tokens (str) : {}".format([tokenizer.convert_ids_to_tokens(s) for s in tokens['input_ids'][i]]))

print("Tokens (attn_mask): {}".format(tokens['attention_mask'][i]))

print()

Tokens (int) : [101, 1188, 1110, 170, 6876, 102, 0, 0] Tokens (str) : ['[CLS]', 'This', 'is', 'a', 'sample', '[SEP]', '[PAD]', '[PAD]'] Tokens (attn_mask): [1, 1, 1, 1, 1, 1, 0, 0] Tokens (int) : [101, 1188, 1110, 1330, 2039, 6876, 3087, 102] Tokens (str) : ['[CLS]', 'This', 'is', 'another', 'longer', 'sample', 'text', '[SEP]'] Tokens (attn_mask): [1, 1, 1, 1, 1, 1, 1, 1]

Frameworks interoperability¶

One of the most powerfull feature of transformers is its ability to seamlessly move from PyTorch to Tensorflow without pain for the user.

We provide some convenient methods to load TensorFlow pretrained weight insinde a PyTorch model and opposite.

from transformers import TFBertModel, BertModel

# Let's load a BERT model for TensorFlow and PyTorch

model_tf = TFBertModel.from_pretrained('bert-base-cased')

model_pt = BertModel.from_pretrained('bert-base-cased')

HBox(children=(FloatProgress(value=0.0, description='Downloading', max=526681800.0, style=ProgressStyle(descri…

Some layers from the model checkpoint at bert-base-cased were not used when initializing TFBertModel: ['nsp___cls', 'mlm___cls'] - This IS expected if you are initializing TFBertModel from the checkpoint of a model trained on another task or with another architecture (e.g. initializing a BertForSequenceClassification model from a BertForPreTraining model). - This IS NOT expected if you are initializing TFBertModel from the checkpoint of a model that you expect to be exactly identical (initializing a BertForSequenceClassification model from a BertForSequenceClassification model). All the layers of TFBertModel were initialized from the model checkpoint at bert-base-cased. If your task is similar to the task the model of the checkpoint was trained on, you can already use TFBertModel for predictions without further training.

# transformers generates a ready to use dictionary with all the required parameters for the specific framework.

input_tf = tokenizer("This is a sample input", return_tensors="tf")

input_pt = tokenizer("This is a sample input", return_tensors="pt")

# Let's compare the outputs

output_tf, output_pt = model_tf(input_tf), model_pt(**input_pt)

# Models outputs 2 values (The value for each tokens, the pooled representation of the input sentence)

# Here we compare the output differences between PyTorch and TensorFlow.

for name in ["last_hidden_state", "pooler_output"]:

print("{} differences: {:.5}".format(name, (output_tf[name].numpy() - output_pt[name].numpy()).sum()))

last_hidden_state differences: 1.2933e-05 pooler_output differences: 2.9691e-06

Want it lighter? Faster? Let's talk distillation!¶

One of the main concerns when using these Transformer based models is the computational power they require. All over this notebook we are using BERT model as it can be run on common machines but that's not the case for all of the models.

For example, Google released a few months ago T5 an Encoder/Decoder architecture based on Transformer and available in transformers with no more than 11 billions parameters. Microsoft also recently entered the game with Turing-NLG using 17 billions parameters. This kind of model requires tens of gigabytes to store the weights and a tremendous compute infrastructure to run such models which makes it impracticable for the common man !

With the goal of making Transformer-based NLP accessible to everyone we @huggingface developed models that take advantage of a training process called Distillation which allows us to drastically reduce the resources needed to run such models with almost zero drop in performances.

Going over the whole Distillation process is out of the scope of this notebook, but if you want more information on the subject you may refer to this Medium article written by my colleague Victor SANH, author of DistilBERT paper, you might also want to directly have a look at the paper (Sanh & al., 2019)

Of course, in transformers we have distilled some models and made them available directly in the library !

from transformers import DistilBertModel

bert_distil = DistilBertModel.from_pretrained('distilbert-base-cased')

input_pt = tokenizer(

'This is a sample input to demonstrate performance of distiled models especially inference time',

return_tensors="pt"

)

%time _ = bert_distil(input_pt['input_ids'])

%time _ = model_pt(input_pt['input_ids'])

HBox(children=(FloatProgress(value=0.0, description='Downloading', max=411.0, style=ProgressStyle(description_…

HBox(children=(FloatProgress(value=0.0, description='Downloading', max=263273408.0, style=ProgressStyle(descri…

CPU times: user 64.4 ms, sys: 0 ns, total: 64.4 ms Wall time: 72.9 ms CPU times: user 130 ms, sys: 124 µs, total: 130 ms Wall time: 131 ms

Community provided models¶

Last but not least, earlier in this notebook we introduced Hugging Face transformers as a repository for the NLP community to exchange pretrained models. We wanted to highlight this features and all the possibilities it offers for the end-user.

To leverage community pretrained models, just provide the organisation name and name of the model to from_pretrained and it will do all the magic for you !

We currently have more 50 models provided by the community and more are added every day, don't hesitate to give it a try !

# Let's load German BERT from the Bavarian State Library

de_bert = BertModel.from_pretrained("dbmdz/bert-base-german-cased")

de_tokenizer = BertTokenizer.from_pretrained("dbmdz/bert-base-german-cased")

de_input = de_tokenizer(

"Hugging Face ist eine französische Firma mit Sitz in New-York.",

return_tensors="pt"

)

print("Tokens (int) : {}".format(de_input['input_ids'].tolist()[0]))

print("Tokens (str) : {}".format([de_tokenizer.convert_ids_to_tokens(s) for s in de_input['input_ids'].tolist()[0]]))

print("Tokens (attn_mask): {}".format(de_input['attention_mask'].tolist()[0]))

print()

outputs_de = de_bert(**de_input)

last_hidden_state_de = outputs_de.last_hidden_state

pooler_output_de = outputs_de.pooler_output

print("Token wise output: {}, Pooled output: {}".format(last_hidden_state_de.shape, pooler_output_de.shape))

Tokens (int) : [102, 12272, 9355, 5746, 30881, 215, 261, 5945, 4118, 212, 2414, 153, 1942, 232, 3532, 566, 103] Tokens (str) : ['[CLS]', 'Hug', '##ging', 'Fac', '##e', 'ist', 'eine', 'französische', 'Firma', 'mit', 'Sitz', 'in', 'New', '-', 'York', '.', '[SEP]'] Tokens (attn_mask): [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1] Token wise output: torch.Size([1, 17, 768]), Pooled output: torch.Size([1, 768])